Abstract

The stein variational gradient descent (SVGD) algorithm is a deterministic particle method for sampling. However, a mean-field analysis reveals that the gradient flow corresponding to the SVGD algorithm (i.e., the Stein Variational Gradient Flow) only provides a constant-order approximation to the Wasserstein gradient flow corresponding to the KL-divergence minimization. In this work, we propose the Regularized Stein Variational Gradient Flow, which interpolates between the Stein Variational Gradient Flow and the Wasserstein gradient flow. We establish various theoretical properties of the Regularized Stein Variational Gradient Flow (and its time-discretization) including convergence to equilibrium, existence and uniqueness of weak solutions, and stability of the solutions. We provide preliminary numerical evidence of the improved performance offered by the regularization.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Given a potential function \(V:{\mathbb {R}}^d \rightarrow {\mathbb {R}}\), the sampling problem involves generating samples from the density

being the normalization constant, which is typically assumed to be unknown or hard to compute. The task of sampling arises in several fields of applied mathematics, including Bayesian statistics and machine learning in the context of numerical integration. There are two widely-used approaches for sampling: (i) diffusion-based randomized algorithms, which are based on discretizations of certain diffusion processes, and (ii) particle-based deterministic algorithms, which are discretizations of certain approximate gradient flows. A central idea connecting the two approaches is the seminal work by [23] which provided a variational interpretation of the Langevin diffusion as the Wasserstein gradient flow (WGF),

where the term \(\nabla _{W_2}F(\mu _t)= \nabla \log \frac{\mu _t}{\pi } \) could be interpreted as the Wasserstein gradientFootnote 1 of the relative entropy functional (also called as the Kullback–Leibler divergence), defined by

evaluated at \(\mu _t\). This leads to the idea that sampling could be viewed as optimization on the space of densities/measures, a viewpoint that has provided a deeper understanding of the sampling problem [44, 50].

There are several merits and disadvantages to both the randomized and deterministic discretizations of the (approximate) WGF. First, note that obtaining exact space-time discretization of the WGF in (2) is not possible. Indeed, due to the presence of the diffusion term, when initialized with a N-particle based empirical measure, the particles do not remain as particles for any time \(t >0\). Hence, on the one hand, randomized discretizations like the Langevin Monte Carlo algorithm, are used as implementable space-time discretizations of the WGF. On the other hand, motivated by applications where the randomness in the discretization is undesirable, in the applied mathematics literature, other discretizations of approximate WGF were developed. Such methods are predominantly based on using mollifiers and we refer the reader to [7, 14, 16, 34, 36] for a partial list and to [10], for a comprehensive overview.

Recently, in the machine learning community, the stein variational gradient descent [25, 27] was proposed as another deterministic discretization of approximate WGF, and has gathered significant attention due to applications in reinforcement learning [29], graphical modeling [48], measure quantization [51], and other fields of machine learning and applied mathematics [12, 12, 24, 47]. Due to the use of the reproducing kernels, the stein variational gradient descent (SVGD) algorithm provides a space-time discretization of the following approximate Wasserstein gradient flow (which we refer to as the Stein Variational Gradient Flow (SVGF) for simplicity)

where \({\mathcal {T}}_{k,\mu }: L^d_2(\mu ) \rightarrow L^d_2(\mu )\)Footnote 2 is the integral operator defined as \({\mathcal {T}}_{k,\mu }f(x) = \int k(x,y) f(y) \mu (y) dy \) for any function \(f\in L^d_2(\mu )\), and for a kernel \(k:{\mathbb {R}}^d\times {\mathbb {R}}^d \rightarrow {\mathbb {R}}\); see, for example [30]. Hence, SVGD (which is based on the SVGF), in this context, while being deterministic only provides a discretization of a constant-order approximation to the Wasserstein gradient flow due to the presence of the kernel integral operator. Indeed, if \(\text {supp}(\mu _t)={\mathbb {R}}^d\) and k is a bounded continuous translation invariant characteristic kernel [41] on \({\mathbb {R}}^d\) (e.g., Gaussian, Laplacian kernels), then

where \({\textbf{1}}=(1,{\ldots },1)^\intercal \in \mathbb {R}^d\). This shows that the order of the error is crucially dependent on the choice of the kernel k.

To overcome the above issue with the SVGF, in this work, we propose the Regularized Stein Variational Gradient Flow (R-SVGF). To motivate the proposed flow, we first note that the Wasserstein gradient \(\nabla \log (\mu _t/\pi )\) lives in \(L^d_2(\mu _t)\), while the kernelized Wasserstein gradient \({\mathcal {T}}_{k,\mu _t}\nabla \log (\mu _t/\pi )\) morally lives in the reproducing kernel Hilbert spaceFootnote 3\({\mathcal {H}}^d_k \subset L^d_2(\mu _t)\). If \(\nabla \log (\mu _t/\pi )\in \overline{\text {Ran}({\mathcal {T}}_{k,\mu _t})}\), then it is easy to verify that

Additionally, if \(\nabla \log (\mu _t/\pi ) \) is sufficiently smooth, i.e., there exists \(\gamma \in \left( 0,\frac{1}{2}\right] \) such that \(\nabla \log (\mu _t/\pi ) = {\mathcal {T}}^\gamma _{k,\mu _t} h\), for some \(h\in L_2^d(\mu _t)\) (see, for example, [15]), then

In other words, \( ((1-\nu ){\mathcal {T}}_{k,\mu _t}+\nu I)^{-1}{\mathcal {T}}_{k,\mu _t}\nabla \log (\mu _t/\pi )\) is a good approximation to \(\nabla \log (\mu _t/\pi )\) for small \(\nu \). With this motivation, we propose the following R-SVGF given by

for some regularization parameter \(\nu \in (0,1]\), where R-SVGF arbitrarily approximates the WGF as \(\nu \rightarrow 0\). It is important to note that in the case of \(\gamma =1/2\), we have \(\nabla \log (\mu _t/\pi ) \in {\mathcal {H}}_k^d\), yet, (3) suffers from the drawback of providing only a constant-order approximation to (2).

1.1 Summary of Contributions

Our contributions in this work are as follows:

-

(1)

We propose the Regularized SVGF (R-SVGF) that interpolates between the Wasserstein gradient flow and the SVGF. The advantage of the proposed flow is that one could obtain an implementable space-time discretization as long as the regularization parameter is bounded away from zero. The main intuition behind the proposed flow is to pick an appropriately small regularization parameter so that we could arbitrarily approximate the WGF (Theorems 1 and 3).

-

(2)

For the R-SVGF, we provide rates of convergence to the equilibrium density in two cases: (i) in the Fisher Information metric under no further assumptions on the target \(\pi \in {\mathcal {P}}({\mathbb {R}}^d)\) (Theorem 2) and (ii) in the KL-divergence metric under an LSI assumption on the target (Theorem 4). We also establish similar results for the time-discretized R-SVGF (Theorems 5 and 6).

-

(3)

We characterize the existence and uniqueness (Theorem 7), and stability (Theorem 8) of solutions to the R-SVGF.

-

(4)

We provide preliminary numerical experiments demonstrating the advantage of the space-time discretization of the R-SVGF, which we call the Regularized stein variational gradient descent (R-SVGD) algorithm, over the standard SVGD algorithm.

1.2 Organization

The rest of the paper is organized as follows. In Sect. 1.3, we introduce the notations used in the rest of the paper. In Sect. 2, we provide the preliminaries on reproducing kernel Hilbert spaces required for our work. In Sect. 3, we introduce the R-SVGF, along with the notion of regularized Stein-Fisher information, required for our analysis. Due to the technical nature of the proofs, we postpone the results on existence and uniqueness of the R-SVGF, and related stability results respectively to Sects. 5 and 6. In Sect. 4, we provide convergence results on the R-SVGF and its time-discretized version. We conclude in Sect. 7 with a space-time discretization which provides a practically implementable algorithm, and provide preliminary empirical results.

1.3 Notations

We use the following notations throughout this work:

-

For a matrix, \(\Vert \cdot \Vert _2\) denotes the matrix 2-norm (spectral norm) and \(\Vert \cdot \Vert _{HS}\) denotes the Hilbert-Schmidt norm which is defined as \(\Vert A \Vert _{HS}^2=\sum _{i,j=1}^d |a_{ij}|^2\) for any matrix \(A=(a_{ij})_{i,j\in [d]}\).

-

The term id denotes the \(d\times d\) identity matrix. \(I_d\) corresponds to the identity operator in the RKHS. I corresponds to the identity operator in \(L_2(\mu )\).

-

\(\left( L_\infty (\mathbb {R}^d),\left\Vert \cdot \right\Vert _{L_\infty (\mathbb {R}^d)}\right) \) denotes the space of all essentially bounded measurable functions on \(\mathbb {R}^d\) with \(\left\Vert f \right\Vert _{L_\infty (\mathbb {R}^d)}:= \inf \{ C: |f(x)|\le C \ \text {for almost every }x\in \mathbb {R}^d \} \) for any \(f\in L_\infty (\mathbb {R}^d)\).

-

\(\mathcal {C}(\mathbb {R}^d;\mathbb {R}^d)\) is the space of all \(\mathbb {R}^d\)-valued continuous functions on \(\mathbb {R}^d\).

-

\(\mathcal {C}_0^\infty ([0,\infty )\times \mathbb {R}^d)\) is the space of all \(\mathbb {R}\)-valued measurable functions on \([0,\infty )\times \mathbb {R}^d\) that vanish at infinity, i.e., for any \(f\in \mathcal {C}_0^\infty ([0,\infty )\times \mathbb {R}^d)\), \(f(t,x)\rightarrow 0\) as \(t\rightarrow \infty \) and \(f(t,x)\rightarrow 0\) as \(x\rightarrow \infty \).

-

For any function space \(\mathcal {H}\) on \(\mathbb {R}^d\), \(\mathcal {C}([0,T];\mathcal {H})\) is the space of functions f such that for any fixed \(t\in [0,T]\), \(f(t,\cdot )\in \mathcal {H}\) and for any fixed \(x\in \mathbb {R}^d\), \(f(\cdot ,x)\) is a continuous function on [0, T]. \(\mathcal {C}^1([0,T];\mathcal {H})\) is the space of functions f such that for any fixed \(t\in [0,T]\), \(f(t,\cdot )\in \mathcal {H}\) and for any fixed \(x\in \mathbb {R}^d\), \(f(\cdot ,x)\) is a continuous function with a continuous first order derivative on [0, T].

-

Let \(\mathcal {P}(\mathbb {R}^d)\) denote the space of all probability densities (with respect to the Lebesgue measure) on \(\mathbb {R}^d\) that are twice continuously differentiable with full supports on \(\mathbb {R}^d\) and finite second moments.

-

For any \(\mu _1,\mu _2\in \mathcal {P}(\mathbb {R}^d)\) and any \(p\ge 1\), \(\mathcal {W}_p(\mu _1,\mu _2)\) denotes the Wasserstein-p distance between \(\mu _1\) and \(\mu _2\) defined as \(\mathcal {W}_p(\mu _1,\mu _2){:}{=}\inf _{X\sim \mu _1,Y\sim \mu _2} \mathbb {E}[|X-Y|^p]^{\frac{1}{p}}\).

-

For any \(\mu \in \mathcal {P}(\mathbb {R}^d)\), \(\left( L_2(\mu ), \left\Vert \cdot \right\Vert _{L_2(\mu )} \right) \) is the space of all \(\mu \)-square integrable measurable function on \(\mathbb {R}^d\) with \(\left\Vert f\right\Vert _{L_2(\mu )}^2:=\int _{\mathbb {R}^d} |f(x)|^2 \mu (x) dx\).

-

For any function space \({\mathcal {H}}\), we say \(f\in \mathcal {H}^d\) if \(f=[f_1,\cdots ,f_d]^\intercal \) such that \(f_i\in \mathcal {H}\) for all \(i\in [d]\). If the function space \((\mathcal {H},\left\Vert \cdot \right\Vert _{\mathcal {H}})\) is further a Hilbert space, \((\mathcal {H}^d, \left\Vert \cdot \right\Vert _{\mathcal {H}^d})\) denotes the Hilbert space of all vector valued functions whose entries are in \((\mathcal {H},\left\Vert \cdot \right\Vert _{\mathcal {H}})\) with \(\left\Vert f \right\Vert _{\mathcal {H}^d}^2{:}{=}\sum _{i=1}^d \left\Vert f_i \right\Vert _{\mathcal {H}}^2\) for any \(f=[f_1,\cdots ,f_d]^\intercal \in \mathcal {H}^d\).

-

Let \(\left( {\mathcal {H}},\left\Vert \cdot \right\Vert _{\mathcal {H}}\right) \) and \(\left( {\mathcal {G}},\left\Vert \cdot \right\Vert _{\mathcal {G}}\right) \) be two function spaces. For an operator \(A:{\mathcal {H}} \rightarrow {\mathcal {G}} \), we denote the adjoint operator of A by \(A^*\). We denote the operator norm by \(\Vert A\Vert _{{\mathcal {H}} \rightarrow {\mathcal {G}}}\), which is defined as \(\left\Vert A \right\Vert _{\mathcal {H}\rightarrow \mathcal {G}}:=\sup _{\left\Vert u \right\Vert _{\mathcal {H}}\le 1} \left\Vert A u \right\Vert _{\mathcal {G}}\). When we don’t emphasize the spaces, we denote the operator norm of A by \(\left\Vert A \right\Vert _{\text {op}}\) for simplicity.

-

Let \(\left( {\mathcal {H}},\Vert \cdot \Vert _{{\mathcal {H}}} \right) \) and \(\left( {\mathcal {G}},\Vert \cdot \Vert _{{\mathcal {G}}} \right) \) be two Hilbert spaces. For an operator \(A:{\mathcal {H}}\rightarrow {\mathcal {G}}\), we denote the Hilbert-Schmidt norm by \(\Vert A \Vert _{HS}\) which is defined as \(\Vert A \Vert _{HS}^2:=\sum _{i\in I} \Vert A e_i\Vert _{{{\mathcal {G}}}}^2 \) where \(\{e_i\}_{i\in I}\) is an orthonormal basis of \({\mathcal {H}}\). We denote the nuclear norm by \(\Vert A\Vert _{nuc}\) which is defined as \(\left\Vert A \right\Vert _{nuc}:=\sum _{i\in I} \langle (A ^* A)^{\frac{1}{2}}e_i, e_i \rangle _{\mathcal {H}} \) where \(\{e_i\}_{i\in I}\) is an orthonormal basis of \({\mathcal {H}}\).

-

For a smooth function \(f:\mathbb {R}^d\times \mathbb {R}^d\rightarrow \mathbb {R}\), \(\nabla _1 f \) denotes the gradient of f in the first variable and \(\nabla _2 f \) denotes the gradient of f in the second variable.

-

For a map \(\phi : {\mathbb {R}}^d \rightarrow {\mathbb {R}}^d\), \(\phi _i\) denotes the i-th component of the function value and \(\nabla \phi \) denotes the Jacobian, i.e., \((\nabla \phi )_{ij} = \partial _j\phi _i \) for all \(i,j\in [d]\).

-

\(T_{\#}\rho \) represents the push-forward of the density \(\rho \) under a map T.

-

\(\langle \cdot , \cdot \rangle _{\mathcal {H}}\) denotes inner-product in the Hilbert space \(\mathcal {H}\). \(\langle \cdot , \cdot \rangle \) denotes inner-product in the Euclidean space \(\mathbb {R}^d\).

2 Preliminaries on Reproducing Kernel Hilbert Space

In this section, we introduce some properties of RKHS which would be used later in the formulation and analysis of R-SVGF. We refer the reader to [6, 33, 42] for the basics of RKHS. We let \(\mathcal {H}_k\) be a separable RKHS over \(\mathbb {R}^d\) with the reproducing kernel \(k:\mathbb {R}^d\times \mathbb {R}^d\rightarrow \mathbb {R}_{>0}\) and with \(\Vert \cdot \Vert _{\mathcal {H}_k}\) denoting the associated RKHS norm.

We make the following assumption on the kernel function k throughout the paper.

Assumption A1

The kernel function \(k:\mathbb {R}^d \times \mathbb {R}^d \rightarrow \mathbb {R}\) is symmetric, strictly positive definite and continuous.

Additional assumptions on the kernel will be introduced when they are required. The following results are essentially based on [42, Lemma 4.23, and Theorems 4.26 and 4.27].

Proposition 1

[ [42]] The following holds.

-

(i)

The kernel function k is bounded if and only if every \(f\in \mathcal {H}_k\) is bounded. Moreover, the inclusion \(\iota _d:\mathcal {H}_k\rightarrow L_\infty (\mathbb {R}^d)\) is continuous and \(\left\Vert \iota _d \right\Vert _{\mathcal {H}_k\rightarrow L_\infty (\mathbb {R}^d)}=\left\Vert k \right\Vert _\infty \), where \(\left\Vert k \right\Vert _\infty :=\sup _{x\in \mathbb {R}^d} \sqrt{k(x,x)} \).

-

(ii)

Let \(\mu \) be a \(\sigma \)-finite measure on \(\mathbb {R}^d\) and k be a measurable kernel. Assume that

$$\begin{aligned} \left\Vert k \right\Vert _{L_2(\mu )}:=\left( \int _{\mathbb {R}^d} {k(x,x)} d\mu (x) \right) ^{\frac{1}{2}}<\infty . \end{aligned}$$Then \(\mathcal {H}_k\) consists of 2-integrable functions and the inclusion \(\iota _{k,\mu }: \mathcal {H}_k\rightarrow L_2(\mu )\) is continuous with \(\left\Vert \iota _{k,\mu }\right\Vert _{\mathcal {H}_k\rightarrow L_2(\mu ) }\le \left\Vert k \right\Vert _{L_2(\mu )} \). Moreover, the adjoint of this inclusion is the operator \(\iota _{k,\mu }^*:L_2(\mu )\rightarrow \mathcal {H}_k \) defined by

$$\begin{aligned} \iota _{k,\mu }^* g(x):=\int _{\mathbb {R}^d} k(x,y) g(y) d\mu (y) ,\qquad g\in L_2(\mu ),\ x\in \mathbb {R}^d. \end{aligned}$$ -

(iii)

Under the assumptions in (ii), \(\mathcal {H}_k\) is dense in \(L_2(\mu )\) if and only if \(\iota _{k,\mu }^*:L_2(\mu )\rightarrow \mathcal {H}_k\) is injective. Alternatively, \(\iota _{k,\mu }^*:L_2(\mu )\rightarrow \mathcal {H}_k\) has a dense image if and only if \(\iota _{k,\mu }:\mathcal {H}_k\rightarrow L_2(\mu )\) is injective.

-

(iv)

Under the assumptions in (ii), \(\iota _{k,\mu }:\mathcal {H}_k\rightarrow L_2(\mu )\) is a Hilbert-Schmidt operator with \(\left\Vert \iota _{k,\mu } \right\Vert _{HS}=\left\Vert k \right\Vert _{L_2(\mu )}\). Moreover, the integral operator \(\mathcal {T}_{k,\mu }=\iota _{k,\mu }\iota _{k,\mu }^*:L_2(\mu )\rightarrow L_2(\mu )\) is compact, positive, self-adjoint, and nuclear with \(\left\Vert \mathcal {T}_{k,\mu } \right\Vert _{nuc}=\left\Vert \iota _{k,\mu } \right\Vert _{HS}=\left\Vert k \right\Vert _{L_2(\mu )}\).

When \(f\in \mathcal {H}_k^d\) with \(f=[f_1,\cdots , f_d]^\intercal \) and \(g\in \mathcal {H}_k\), we define \(\langle f,g \rangle _{\mathcal {H}_k}\) as a vector in \(\mathbb {R}^d\) and \(\left( \langle f,g \rangle _{\mathcal {H}_k} \right) _i=\langle f_i,g \rangle _{\mathcal {H}_k}\) for all \(i\in [d]\). When \(f\in L_2^d(\mu )\) with \(f=[f_1,\cdots , f_d]^\intercal \) and \(g\in L_2(\mu )\), we define \(\langle f,g \rangle _{L_2(\mu )}\) as a vector in \(\mathbb {R}^d\) and \(\left( \langle f,g \rangle _{L_2(\mu )} \right) _i=\langle f_i,g \rangle _{L_2(\mu )}\) for all \(i\in [d]\). Note also that \(\mathcal {T}_{k,\mu }=\iota _{k,\mu }\iota ^*_{k,\mu }\) and \(\text {Ran}({\mathcal {T}_{k,\mu }^{1/2}})={\mathcal {H}}_k\subset L_2(\mu )\). We refer the interested reader to [15] for more details.

Finally, we remark that by letting \((\lambda _i,e_i)_{i=1}^\infty \) be the set of eigenvalues and eigenfunctions of the operator \({\mathcal {T}_{k,\mu }}\) where \(\lambda _1\ge \lambda _2\ge \cdots >0\) and \((e_i)_{i=1}^\infty \) form an orthonormal system in \(\text {Ran}(\mathcal {T}_{k,\mu })\), we have the following spectral representation that, for all \(f\in L_2(\mu )\),

Computing the spectral representation, in general for any given \(\mu \) and kernel k is a non-trivial task. Results are only known on a case-by-case basis; see, for example, [2, 8, 31, 39]. However, we use the decomposition only in our analysis. For the purely practical algorithm that we describe eventually in Sect. 7, we do not need to know the decomposition explicitly.

Remark 1

Strictly speaking, the above notation implicitly assumes that the operator \({\mathcal {T}}_{k,\mu }\) has a trivial null space, in which case \(\overline{\text {Ran}({\mathcal {T}}_{k,\mu })} \equiv L_2(\mu )\) and hence the eigenfunctions \((e_i)_{i=1}^\infty \) form an orthonormal basis to \(L_2(\mu )\). However, our analysis does not require this condition on \({\mathcal {T}}_{k,\mu }\). In particular, if \({\mathcal {T}}_{k,\mu }\) has a non-trivial null-space, then \(\overline{\text {Ran}({\mathcal {T}}_{k,\mu })} \subset L_2(\mu )\). In this case, our analysis still holds true. For example, with a slight abuse of notation, if we let \(e_i\), for certain values of i, also denote the basis of the null-space of \({\mathcal {T}}_{k,\mu }\), conclusions similar to our results hold.

3 Regularized SVGF

We now introduce the formulation of the Regularized-SVGF and discuss its connection with the SVGF and the WGF. Recall that in the mean-field limit, the SVGF in (3) only provides a constant order approximation to the WGF in (2), due to the presence of the operator \({\mathcal {T}}_{k,\mu }\). As the operator \({\mathcal {T}}_{k,\mu }\) is not invertible, we seek to obtain a regularized inverse so that we end up with the following Regularized-SVGF, as in (4), for some regularization parameter \(\nu \in (0,1]\). Note in particular that, as \(\nu \rightarrow 0\), the Regularized-SVGF gets arbitrarily close to the WGF. Our goal in this section is to derive the above-mentioned R-SVGF from first principles.

The central operator required in our formulation is the following Stein operator, which is defined for all \(p\in \mathcal {P}(\mathbb {R}^d)\), and for all smooth maps \(\phi :\mathbb {R}^d\rightarrow \mathbb {R}^d\), as

where \(\otimes \) denotes the outer-product. Generally speaking, the Stein operator can also be defined for some densities that are outside of \(\mathcal {P}(\mathbb {R}^d)\). We restrict to \(p\in \mathcal {P}(\mathbb {R}^d)\) in the paper for the purpose of our analysis. Now, the Wasserstein gradient flow in (2) could be thought of as follows. Consider moving a particle \(x \sim \rho \) (for some \(\rho \in {\mathcal {P}}({\mathbb {R}}^d)\)) based on the mapping \(x \mapsto T(x){:}{=}x+h\phi (x)\), where \(h>0\) is a step-size parameter, and \(\phi \) is a vector-field chosen so that the KL-divergence between the pushforward of \(\rho \) according to T, denoted as \(T_{\#}\rho \), and the target density \(\pi \) in minimal. Liu and Wang [27, Theorem 3.1], showed that

We also refer to [23] for an earlier version of the same result. Based on this observation, if we try to find the vector-field \(\phi \) in the unit-ball of \(L_2^d(\rho )\) that maximizes the quantity \([\mathbb {E}_{x\sim \rho }[\text {trace}(\mathcal {A}_\pi \phi (x) )]]^2\), a straight-forward calculation based on integration-by-parts, results in the optimal \(\phi \) being the Wasserstein gradient \(\nabla \log \frac{\rho }{\pi }\). To have a practical implementation, [27] considered maximizing \([\mathbb {E}_{x\sim \rho }[\text {trace}(\mathcal {A}_\pi \phi (x) )]]^2\) over the unit-ball in the RKHS \(\mathcal {H}_k^d\), which results in the optimal vector-field being equal to \({\mathcal {T}}_{k,\rho }\nabla \log \frac{\rho }{\pi }\), and correspondingly results in the SVGF in (3).

In this work, we propose to find the vector field \(\phi \) that maximizes \(\left[ \mathbb {E}_{x\sim \rho } \left[ \text {trace}(\mathcal {A}_\pi \phi (x))\right] \right] ^2\) over the unit-ball with respect to an interpolated norm between \(L_2^d(\rho )\) and \(\mathcal {H}_k^d\). Specifically, the interpolation norm that we consider is of the form \(\nu \left\Vert \cdot \right\Vert _{\mathcal {H}_k^d}^2+(1-\nu )\left\Vert \cdot \right\Vert _{L_2^d(\rho )}^2\), for some regularization parameter \(\nu \in (0,1]\), which trades-off between \(\Vert \cdot \Vert ^2_{{\mathcal {H}}^d_k}\) and \(\Vert \cdot \Vert ^2_{L^d_2(\rho )}\). We also remark here that a similar idea has been leveraged in the context of RKHS-based statistical hypothesis testing [5]. Formally, for \(\rho , \pi \in \mathcal {P}(\mathbb {R}^d)\), we consider the following optimization problem.

For any \(\rho \in \mathcal {P}(\mathbb {R}^d)\), the optimal vector field, \(\phi \) that minimizes \(\textsc {KL}(T_{\#}\rho |\pi )\) can be described via the following result. To make the statements more consistent with the analysis (and proofs), we will denote \(\mathcal {T}_{k,\cdot }\) explicitly as \(\iota _{k,\cdot }\iota ^*_{k,\cdot }\) in the rest of the paper. Before we proceed, we also recall that the Fisher information between two densities \(\rho , \pi \in {\mathcal {P}}({\mathbb {R}}^d)\), is defined as

with \((e_i)_{i=1}^\infty \) being an orthonormal basis to \(L_2(\rho )\).

Proposition 2

Let \(T(x)=x+h\phi (x)\) and \(T_{\#}\rho (z)\) be the density of \(z=T(x)\) for any \(\rho \in {\mathcal {P}}({\mathbb {R}}^d)\). For \(\nu \in (0,1]\), define

Let \(\pi \propto e^{-V}\) and \(\pi \in \mathcal {P}(\mathbb {R}^d)\). If \(I(\rho |\pi )<\infty \) and k satisfies Assumption A1 with \(\int k(x,x)\rho (x)dx<\infty \), then the direction of steepest descent in \(\mathcal {B}\) that maximizes

is given by

where \(\iota _{k,\rho }:\mathcal {H}_k^d\rightarrow L_2^d(\rho )\) is the inclusion operator and \(\iota _{k,\rho }^*\) is its adjoint, as in Proposition 1. Furthermore, under the optimal vector field \(\phi _{\rho ,\pi }^*\), we have \(-\nabla _h KL (T_{\#}\rho |\pi )|_{h=0}=S(\rho ,\pi )\).

Proof

First note that according to [27, Theorem 3.1], we have

Therefore, we have

Next, observe that we have

The term \(\mathbb {E}_{x\sim \rho } [-\nabla V(x)k(x,\cdot )+\nabla k(x,\cdot )]\) is finite for all \(x\in \mathbb {R}^d\) due to the following inequality:

where the first inequality follows from the Cauchy-Schwartz inequality and the reproducing property of the RKHS. The second inequality follows from conditions on k and \(\rho \). Meanwhile, the constraint can be written as

where \(I_d:\mathcal {H}_k\rightarrow \mathcal {H}_k\) is the identity operator. Now, note that \(\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ^{\frac{1}{2}}\) is well-defined since \(\iota _{k,\rho }^*\iota _{k,\rho }:\mathcal {H}_k^d\rightarrow \mathcal {H}_k^d\) is positive, compact and self-adjoint. Therefore, based on the above display, the constraint \(\{ \phi \in \mathcal {H}_k^d: \nu \left\Vert \phi \right\Vert _{\mathcal {H}_k^d}^2+(1-\nu )\left\Vert \phi \right\Vert _{L_2^d(\rho )}^2\le 1 \}\) is equivalent to

Since the spectrum of \(\iota _{k,\rho }^*\iota _{k,\rho }\) is positive and \(\nu \in (0,1]\), \((1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d\) is invertible. For all \(\phi \in \mathcal {H}_k^d\), there exists a unique \(\psi \in \mathcal {H}_k^d\) such that \(\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ^{-\frac{1}{2}}\psi =\phi \). Applying this fact along with the equivalent form of the constraint, we have

where the second identity follows from the fact that \(\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ^{-\frac{1}{2}}\) is self-adjoint and the upper bound in the last inequality is achieved when

and the result hence follows. \(\square \)

With the optimal-vector field as derived above, we consider the following mean-field partial differential equation (PDE) as the R-SVGF:

It is important to notice that the R-SVGF interpolates between the SVGF and the WGF. However, the regime of interest for us is when \(\nu \rightarrow 0\), as we get arbitrarily close to the WGF. We quantify this statement precisely in the later sections. On the other hand, when \(\nu \rightarrow 1\), the R-SVGF becomes the SVGF.

Remark 2

We now make the following remarks about the above result.

-

(i)

We can alternatively write \(\phi _{\rho ,\pi }^*\) from Proposition 2 as

$$\begin{aligned} \phi _{\rho ,\pi }^* \propto -\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ^{-1} \iota _{k,\rho }^* \left( \nabla \log \frac{\rho }{\pi } \right) , \end{aligned}$$since we have

$$\begin{aligned} \mathbb {E}_{x\sim \rho } [-\nabla V(x)k(x,\cdot )+\nabla k(x,\cdot )] =&\int _{\mathbb {R}^d} k(\cdot , x)\left( -\nabla V(x)-\frac{\nabla \rho (x)}{\rho (x)}\right) \rho (x)dx\\ =&-\iota _{k,\rho }^*\left( \nabla \log \frac{\rho }{\pi }\right) . \end{aligned}$$ -

(ii)

The operator in (7) has an equivalent expression, as we discuss below. First, we claim that

$$\begin{aligned} \iota _{k,\rho }\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d\right) ^{-1}\iota _{k,\rho }^*=\left( (1-\nu )\iota _{k,\rho }\iota _{k,\rho }^*+\nu I \right) ^{-1}\iota _{k,\rho }\iota _{k,\rho }^*. \end{aligned}$$To see that, we start with the trivial identity in the first line below and proceed as

$$\begin{aligned}&\left( (1-\nu )\iota _{k,\rho }\iota _{k,\rho }^*+\nu I \right) \iota _{k,\rho }=\iota _{k,\rho }\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ,\\ \implies&\iota _{k,\rho }=\left( (1-\nu )\iota _{k,\rho }\iota _{k,\rho }^*+\nu I \right) ^{-1}\iota _{k,\rho }\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) \\ \implies&\iota _{k,\rho }\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ^{-1}=\left( (1-\nu )\iota _{k,\rho }\iota _{k,\rho }^*+\nu I \right) ^{-1}\iota _{k,\rho } \\ \implies&\iota _{k,\rho }\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) ^{-1}\iota _{k,\rho }^*=\left( (1-\nu )\iota _{k,\rho }\iota _{k,\rho }^*+\nu I \right) ^{-1}\iota _{k,\rho }\iota _{k,\rho }^*. \end{aligned}$$According to this observation, (7) can also be written in the following form

$$\begin{aligned} \begin{aligned} \partial _t \rho _t&=\nabla \cdot \left( \rho _t\ \left( (1-\nu )\iota _{k,\rho _t}\iota _{k,\rho _t}^*+\nu I \right) ^{-1}\iota _{k,\rho _t}\iota _{k,\rho _t}^*\left( \nabla \log \frac{\rho _t}{\pi }\right) \right) \\&= \nabla \cdot \left( \rho _t ~ \left( (1-\nu ){\mathcal {T}}_{k,\rho _t}+\nu I \right) ^{-1} {\mathcal {T}}_{k,\rho _t}\left( \nabla \log \frac{\rho _t}{\pi } \right) \right) ,\\ \end{aligned} \end{aligned}$$ -

(iii)

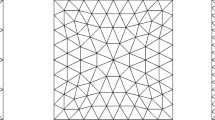

Particle-based spatial discretization. We now describe the spatial discretization of the R-SVGF. Based on the results in Proposition 2 and (ii) in Remark 2, we obtain the following ODE system:

$$\begin{aligned} \left\{ \begin{aligned} \frac{d x_i(t)}{dt}&=-\left( (1-\nu )\iota _{k,\rho _t^N}^*\iota _{k,\rho _t^N}+\nu I_d \right) ^{-1}\\ {}&\left( \frac{1}{N}\sum _{j=1}^N -\nabla _2 k\left( x_i(t),x_j(t)\right) +k\left( x_i(t),x_j(t)\right) \nabla V(x_j(t)) \right) \\ x_i(0)&=x_i^0\in \mathbb {R}^d,\quad i=1,2,\ldots ,N \end{aligned} \right. , \end{aligned}$$where \(\{x_i(t)\}_{i=1}^N\) is the set of N particles. \(\rho _t^N=\frac{1}{N}\sum _{j=1}^N \delta _{x_j(t)}\) is the empirical distribution at time t, provides a N-particle spatial discretization of the R-SVGF.

-

(iv)

Time discretization. We also have the following time-discretization of the R-SVGF. Let \(\{h_{n}\}_{n=1}^\infty \) be the sequence of time step-size. We denote the density at the n-th iterate by \(\rho ^n\) for all integers \(n \ge 1\). Then the time discretization of the R-SVGF can be written as

$$\begin{aligned} \begin{aligned} \rho ^{n+1}=\left( id-h_{n+1} \mathcal {D}_{\nu _{n+1},\rho ^n} \nabla \log \frac{\rho ^n}{\pi }\right) _{\#\rho ^n}, \end{aligned} \end{aligned}$$(8)where \(\mathcal {D}_{\nu _n,\rho ^n}=\left( (1-\nu _n)\iota _{k,\rho ^n}\iota ^*_{k,\rho ^n}+\nu _n I_d \right) ^{-1}\iota _{k,\rho ^n}\iota _{k,\rho ^n}^*\).

-

(v)

The parameter \(\nu \) can also be made to be dependent on t or n; in fact, in our analysis, we pick a time-varying regularization parameter.

4 Convergence Results in Continuous and Discrete Time

Our goal in this section is to derive convergence guarantees for the R-SVGF. Before we proceed, we introduce the notion of Regularized Stein-Fisher information (or Regularized Kernel Stein Discrepancy).

4.1 Regularized Stein-Fisher Information and its Properties

Related to the Fisher information defined in (6), several works, for example [19, 24, 37], used the notion of Stein-Fisher Information to understand the convergence properties of the SVGD algorithm. The Stein-Fisher information was introduced in [13, 22, 26] under the name Kernel Stein Discrepancy. It is defined as

where \((\lambda _i,e_i)_{i=1}^\infty \) are the set of eigenvalues and eigenvectors of the operator \(\iota _{k,\rho }\iota _{k,\rho }^*\), with \(\lambda _1\ge \lambda _2\ge \cdots >0\). A drawback of the Stein-Fisher information is that it is a weaker metric, for example in comparison to the Fisher information metric in (6); see [21, 22, 40]. Indeed, comparing (6) and the above definition for the Stein-Fisher information, it is immediately clear that the Stein-Fisher information is severely restrictive, in particular when the eigenvalues of the chosen RKHS decay fast. To counter this effect, we introduce the following regularized Stein-Fisher information and show that when the regularization parameter is chosen appropriately, the regularized Stein-Fisher information upper and lower bounds the Fisher information.

Definition 1

[Regularized Stein-Fisher Information] For any \({\rho \in \mathcal {P}(\mathbb {R}^d)}\), the regularized Stein Fisher information from \(\rho \) to \(\pi \), denoted as \(I_{\nu ,Stein}(\rho |\pi )\), is defined as

The regularized Stein Fisher information in (9) is well-defined because the operator

is positive and for any \(f\in \mathcal {H}_k^d\), \(\left( (1-\nu )\iota _{k,\rho }^*\iota _{k,\rho }+\nu I_d \right) f =0\) if and only if \(f=0\).

Remark 3

The regularized Stein Fisher information has the following alternative representation:

For \(\nu >0\), with the fact that \(\lambda _i\) decreases to zero as \(i\rightarrow \infty \), the regularized Stein Fisher information and the Stein Fisher information both encode the spectral decay information of \(\iota _{k,\rho }\iota _{k,\rho }^*\). However, note that the regularized Stein Fisher information tends to the Fisher information as \(\nu \rightarrow 0\). Hypothetically speaking, if \(\nu \) is set to zero, then the regularized Stein Fisher information actually becomes the Fisher information. In our analysis, we will take advantage of the relation between the regularized Stein Fisher information and the Fisher information, while studying the convergence properties of R-SVGF under Log-Sobolev inequality assumptions on the target \(\pi \). A precise relation between the regularized Stein Fisher information and the Fisher information is stated in the following result. Before stating the result, we introduce the following notation for convenience. For \(\gamma \in (0,\frac{1}{2}]\), we denote the pre-image of \(\nabla \log \frac{\rho }{\pi }\in L^d_2(\rho )\) under \((\iota _{k,\rho }\iota _{k,\rho }^*)^{\gamma }\) as

Note that \(\Vert {\mathfrak {I}}(\rho , \gamma )\Vert _{L^d_2(\rho )}\) is finite if and only if \(\nabla \log \frac{\rho }{\pi }\in \text {Ran}((\iota _{k,\rho }\iota _{k,\rho }^*)^{\gamma })\).

Proposition 3

[Equivalence relation between \(I(\rho |\pi )\) and \(I_{\nu ,Stein}(\rho |\pi )\)] Let \(\rho ,\pi \in \mathcal {P}(\mathbb {R}^d)\) and suppose there exists \(\gamma \in (0,\frac{1}{2}]\) such that \(\left\Vert {\mathfrak {I}}(\rho , \gamma ) \right\Vert _{L_2^d(\rho )}<\infty .\) If the regularization parameter is chosen to satisfy the following condition,

then we have that

Proof of Proposition 3

According to (10), we have

On the other hand, since \(\left\Vert {\mathfrak {I}}(\rho , \gamma ) \right\Vert _{L_2^d(\rho )}<\infty \) for some \(\gamma \in (0,\frac{1}{2}]\), there exists \(h={\mathfrak {I}}(\rho , \gamma )\in L_2^d(\rho )\) such that

Therefore

where the second to last inequality follows from the fact that

and the last inequality follows from the condition in (11).

4.2 Convergence Results for R-SVGF

4.2.1 Relationship Between R-SVGF and WGF

Assuming the existence and uniqueness of the R-SVGF (see Sect. 5) and WGF (see [23] for sufficient conditions) we now provide the relationship between the R-SVGF and the WGF in various metrics. We first start with the relationship in the Fisher information metric, without any stringent assumptions on the target density (thereby allowing for multi-modal and complex densities that arise in practice). Note that the Fisher information metric corresponds to the first-order stationarity metric for the WGF, obtained by minimizing the KL divergence. This metric has been recently proposed as a meaningful metric to consider in the case of sampling from general non-log-concave densities in [4]. Note in particular under mild conditions on q (e.g., connected support) that having the Fisher information \(I(p|q) = 0\) implies \(p\equiv q\). However, even when \(I(p|q) \le \epsilon \), for some \(\epsilon >0\), we have that the modes of the two densities are well-aligned, as argued in [4]. In order to state our next result, we denote by \((\lambda _{i,t},e_{i,t})_{i=1}^\infty \), the set of eigenvalues and eigenvectors of the operator \(\iota _{k,\rho _t}\iota _{k,\rho _t}^*\) for any \(t\ge 0\), with \(\lambda _{1,t}\ge \lambda _{2,t}\ge \cdots >0\).

Theorem 1

[Relation to the WGF in Relative Fisher Information] Let \((\rho _t)\) be the solution to (7) and \((\mu _t)\) be the solution to the WGF, i.e.,

For any \(t>0\), suppose there exists \(\gamma _t\in (0,\frac{1}{2}]\) such that \(\left\Vert {\mathfrak {I}}(\rho _t, \gamma _t) \right\Vert _{L_2^d(\rho _t)}<\infty .\) Then, for any initial density \(\mu _0 \in {\mathcal {P}}({\mathbb {R}}^d)\), and for any \(T\in (0,\infty )\), we have

where \(\lambda _{1,t}\) is the largest eigenvalue of \(\iota _{k,\rho _t}\iota ^*_{k,\rho _t}\) for all \(t\ge 0\).

Proof of Theorem 1

First note that we have the following upper bound on \( \frac{d}{dt}\textsc {KL}(\rho _t|\mu _t)\):

In the above calculation, the fourth equality follows by integration-by-parts. The inequality follows by Young’s inequality for the inner product (i.e., \(\langle p,q \rangle \le \frac{1}{2}c|p|^2+\frac{1}{2c}|q|^2\) for any \(p,q\in \mathbb {R}^d\)) and the last equality follows from the proof of Proposition 3. Since \(\nabla \log \frac{\rho _t}{\pi }=(\iota _{k,\rho _t}\iota _{k,\rho _t}^*)^{\gamma _t} h_t\) for some \(\gamma _t\in (0,1/2]\) with \(h_t:={\mathfrak {I}}(\rho _t, \gamma _t)\in L_2^d(\rho _t)\), we obtain

where the last inequality follows from the facts that

Integrating from \(t=0\) to \(t=T\), we get

Since KL-divergence is non-negative, (14) is proved.

Remark 4

The sequence \(\{\lambda _{1,t}\}_{t\ge 0}\) in Theorem 1 is the largest eigenvalue of \(\iota _{k,\rho _t}\iota _{k,\rho _t}^*\) for all \(t\ge 0\). Note that it depends on both the kernel k and the solution \((\rho _t)\) to (7). If the kernel function k is assumed to be uniformly bounded, according to Proposition 1, \(\lambda _{1,t}^2\) is uniformly upper bounded by \(\sup _{x\in \mathbb {R}^d} k(x,x) \) for all \(t\ge 0\). For specific choices of kernel function, initial and target distributions, it is required to track \(\lambda _{1,t}\) for all \(t\ge 0\). We will illustrate this further with an example in Remark 10.

Remark 5

The above result shows that as long as \(\rho _0 = \mu _0\), i.e., both the WGF and the R-SVGF are initialized with the same density, and \(\nu \) is chosen such that

the averaged Fisher information along the path tends to zero. This shows the benefit of regularizing the SVGF – it enables one to closely approximate the WGF with appropriate choice of the regularization parameters.

4.2.2 Convergence to Equilibrium Along the Fisher Information

We now provide results on the convergence to equilibrium along the Fisher information for the R-SVGF. We re-emphasize here that our result provided below holds as long as the target \(\pi \in \mathcal {P}(\mathbb {R}^d)\), without additional structural assumptions (via, say, functional inequalities).

Theorem 2

[Convergence of Fisher information] Let \((\rho _t)\) be the solution to (7). For any \(t>0\), suppose there exists \(\gamma _t\in (0,\frac{1}{2}]\) such that \(\left\Vert {\mathfrak {I}}(\rho _t, \gamma _t) \right\Vert _{L_2^d(\rho _t)}<\infty .\) Then

Furthermore, if \(\int _0^\infty \nu ^{2\gamma _t}(1-\nu )^{-2\gamma _t} \left\Vert {\mathfrak {I}}(\rho _t, \gamma _t) \right\Vert _{L_2^d(\rho _t)}^2 dt<\infty \), then we get \(I({\bar{\rho }_t}|\pi )\rightarrow 0\) as \(t\rightarrow \infty \), where \(\bar{\rho }_t{:}{=}\frac{1}{t}\int _0^t \rho _s ds\) is the averaged density of \((\rho _s)_{0\le s\le t}\).

Before proving the above theorem, we introduce a few intermediate results.

Proposition 4

[Decay of the KL-divergence] For the solution \((\rho _t)_{t\ge 0}\) to the PDE (7), it holds that

and consequently

Proof of Proposition 4

Note that

It suffices to show that for all \(\nu > 0\), \(\left( (1-\nu )\iota _{k,\rho _t}^*\iota _{k,\rho _t}+\nu I_d\right) ^{-1}\) is a positive operator from \(\mathcal {H}_k^d\) to \(\mathcal {H}_k^d\). By the definition of \(\iota _{k,\rho _t}\), for any \(f\in \mathcal {H}_k^d\) with \(\left\Vert f \right\Vert _{\mathcal {H}_k^d}=1\),

for all \(\nu > 0\). Therefore, \( (1-\nu )\iota _{k,\rho _t}^*\iota _{k,\rho _t}+\nu I_d\) is a positive operator from \(\mathcal {H}_k^d\) to \(\mathcal {H}_k^d\). So is the operator \(\left( (1-\nu )\iota _{k,\rho _t}^*\iota _{k,\rho _t}+\nu I_d\right) ^{-1}\). Hence, we have (16). The claim in (15) follows directly from (16), (5) and Definition 1.

We now provide the proof of Theorem 2. For the proof, we recall that we use \((\lambda _{i,t},e_{i,t})_{i=1}^\infty \) to denote the set of eigenvalues and eigenvectors of the operator \(\iota _{k,\rho _t}\iota _{k,\rho _t}^*\) for any \(t\ge 0\), with \(\lambda _{1,t}\ge \lambda _{2,t}\ge \cdots >0\).

Proof of Theorem 2

From Proposition 4 and (12), we know that

where \(\nabla \log \frac{\rho _t}{\pi }=(\iota _{k,\rho _t}\iota _{k,\rho _t}^*)^{\gamma _t}h_t\) for some \(\gamma _t\in (0,\frac{1}{2}]\) with \(h_t:= {\mathfrak {I}}(\rho _t, \gamma _t)\in L_2^d(\rho _t)\). Therefore, we have

The result follows by integrating over t and noting that the KL-divergence is non-negative. Now, with \(\rho _t\) denoting the solution to (9), we have that \(I(\rho _t|\pi )\) is non-negative and continuous in t. The claim of convergence follows from the convexity of \(\rho \mapsto I(\rho |\mu )\).

4.2.3 Convergence in KL-Divergence Under LSI

While the previous result was provided for any the target density \(\pi \in {\mathcal {P}}({\mathbb {R}}^d)\), in this section, we provide improved convergence results of the R-SVGF under the assumption that the \(\pi \) further satisfies the Log-Sobolev Inequality. Recall that we say that \(\pi \) satisfies the Log-Sobolev inequality with constant \(\lambda >0\) if for all \(\mu \in \mathcal {P}(\mathbb {R}^d)\):

Our first result below is a stronger version of the result in Theorem 1, under the assumption that the target \(\pi \) satisfies LSI and Assumption 1 on the initialization of the WGF.

Assumption 1

The initial density \(\mu _0\) is chosen so that the solution \((\mu _t)\) to (13) also satisfies LSI with parameter \(\lambda \), for all \(t>0\).

Under the stronger assumption that the target density \(\pi \) is strongly log-concave, following the arguments in [45, Theorem 8], it is easy to show that Assumption 1 is satisfied as long as \(\mu _0\) is chosen such that it satisfies LSI. We conjecture that the same holds true even when the target density satisfies LSI and additional mild smoothness assumptions (i.e., LSI is preserved along the trajectory as long as the initial density \(\mu _0\) satisfies LSI, presumably with additional milder assumptions). However, a proof of this conjecture has eluded us thus far.

Theorem 3

[Relation to the WGF under LSI] Assume \(\pi \) satisfies the log-Sobolev inequality with parameter \(\lambda \). Let \((\rho _t)\) be the solution to (7). Let \((\mu _t)\) be the solution to the WGF, defined in (13), with \(\mu _0\) satisfying Assumption 1. For any \(t>0\), suppose there exists \(\gamma _t\in (0,\frac{1}{2}]\) such that \( \left\Vert {\mathfrak {I}}(\rho _t, \gamma _t) \right\Vert _{L_2^d(\rho _t)}<\infty .\) Then, for any \(T\in (0,\infty )\), we have

where \(\lambda _{1,t}\) is the largest eigenvalue of \(\iota _{k,\rho _t}\iota ^*_{k,\rho _t}\) for all \(t\ge 0\).

Proof of Theorem 3

Following the same arguments as in the proof of Theorem 1, we obtain that for any \(t>0\),

Hence, under Assumption 1 we obtain

Finally, (17) follows from the Gronwall’s inequality.

Our second result is a stronger version of the result in Theorem 2, under the assumption that the target distribution \(\pi \) satisfies LSI. We remark that convergence to equilibrium of the related WGF under various functional inequalities is a well-studied topic. We refer the interested reader to [3] for a detailed overview.

Theorem 4

[Decay of KL-divergence under LSI] Assume that \(\pi \) satisfies the log-Sobolev inequality with \(\lambda >0\). Let \((\rho _t)\) be the solution to (7). For any \(t>0\), suppose there exists \(\gamma _t\in (0,\frac{1}{2}]\) such that \( \left\Vert {\mathfrak {I}}(\rho _t, \gamma _t) \right\Vert _{L_2^d(\rho _t)}<\infty .\) Then, for any \(T\in (0,\infty )\), we have

Proof of Theorem 4

From the proof of Theorem 2, we have

where the last inequality follows the log-Sobolev inequality. The final statement follows from Gronwall’s inequality.

Remark 6

[Exponential Decay of KL-divergence] Yet another way to state the above result is via the introducing the following regularized Stein-LSI, similar to the introduction of Stein-LSI in [19]. However, the introduction of Stein-LSI is quite restrictive in the sense that it couples assumptions on the target and the chosen RKHS. This makes verifying the conditions more delicate. To counter this effect, we now introduce the notion of Regularized Stein-LSI. We say that \(\pi \in \mathcal {P}(\mathbb {R}^d)\) satisfies the regularized Stein log-Sobolev inequality with constant \(\lambda >0\) if for all \(\mu \in \mathcal {P}(\mathbb {R}^d)\):

An advantage of the above condition is that, as \(\nu \rightarrow 0\) the regularized Stein-LSI inequality becomes equivalent to the standard LSI inequality. Under the condition that the target density \(\pi \) satisfies (18), and letting \((\rho _t)\) be the solution to (7), it holds that

The proof of (19) follows immediately from Proposition 4 and (18).

4.3 Convergence Results for Time-Discretized R-SVGF

In this section, we analyze the convergence properties of the time-discretized R-SVGF in (8). To do so, we require the following additional assumptions.

Assumption A2

The following conditions hold:

-

(1)

There exists a constant \(B>0\) such that \(\left\Vert \nabla _1 k(x,\cdot ) \right\Vert _{\mathcal {H}_k^d}\le B\) for all \(x\in \mathbb {R}^d\).

-

(2)

The potential function \(V:\mathbb {R}^d\rightarrow \mathbb {R}\) is twice continuously differentiable and gradient Lipschitz with parameter L.

-

(3)

Along the time discretization (8)), \(I(\rho ^n|\pi )<\infty \) for all fixed \(n\ge 0\).

The smoothness assumptions in points (1) and (2) of Assumption A2 are commonly required in analyzing any discrete-time algorithms, albeit deterministic [24, 37] or randomized [4, 11, 45]. While it could be relaxed (see, for example, [43]), in general it is impossible to completely avoid them as in the case of analyzing the corresponding flows. Before stating our results, we also introduce some convenient notations. We let

where the sequence \(\{\lambda _i^{(n)}\}_{i\ge 1}\) corresponds to the positive eigenvalues of the operator \(\iota _{k,\rho ^n}\iota _{k,\rho ^n}^*\) in the order of decreasing values for all \(n\ge 0\).

Theorem 5

[Convergence in Fisher Divergence] Suppose Assumption A2 holds. Let \((\rho ^n)\) be the time discretization of the R-SVGF described in (8) with initial condition \(\rho ^0=\rho _0\) such that \(\textsc {KL}(\rho _0|\pi )\le R\). For each n, suppose that \(\nu _{n+1}\) and the step-size \(h_{n+1}\) are chosen such that,

where \(\alpha \in (1,2]\) is some constant, and suppose that there exists \(\gamma _n\in (0,\frac{1}{2}]\), such that \({\mathfrak {I}}(\rho ^n, \gamma _n) \in L_2^d(\rho ^n)\). Then,

Before proving Theorem 5, we first prove the following intermediate result. For the proofs, we let \((\lambda _i^{(n)},e_i^{(n)})_{i=1}^\infty \)to denote the set of eigenvalues and eigenvectors of the operator \(\iota _{k,\rho ^n}\iota _{k,\rho ^n}^*\), with \(\lambda _1^{(n)}\ge \lambda _2^{(n)}\ge \cdots >0\).

Lemma 1

For each \(n\ge 1\), define \(g=\mathcal {D}_{\nu _{n+1},\rho ^n}\nabla \log \frac{\rho ^n}{\pi }\). Under the conditions in Theorem 5, we have that, for any \(x\in \mathbb {R}^d\) and \(t\in [0,h_{n+1}]\),

Proof of Lemma 1

Since for each n, there exists \(\gamma _n\in (0,1/2]\) and a function \( h={\mathfrak {I}}(\rho ^n, \gamma _n) \in L_2^d(\rho ^n)\) such that \((\iota _{k,\rho ^n}\iota _{k,\rho ^n}^*)^{2\gamma _n} h_j=\partial _j \log \frac{\rho ^n}{\pi } \), where \(h_j\) is the j-th component of the function value of h, we have

In the above, the first inequality follows from Cauchy-Schwartz inequality, the second inequality follows from the fact that

and the last inequality follows from Assumption A2. Meanwhile, since \(\left\Vert \nabla g(x) \right\Vert _{2}\le \left\Vert \nabla g(x) \right\Vert _{HS}\) for all \(x\in \mathbb {R}^d\), for every \(t\in [0,h_{n+1}]\), we have

where the last inequality follows from (21).

Proof of Theorem 5

We start from studying the single step along (8). In the following analysis, for each \(n\ge 1\), we denote \(g=\mathcal {D}_{\nu _{n+1},\rho ^n}\nabla \log \frac{\rho ^n}{\pi }\), \(\phi _t(x)=x-t g(x)\) for all \(x\in \mathbb {R}^d\), \(t\in [0,h_{n+1}]\) and \(\tilde{\rho }_t=(\phi _t)_{\#}\rho ^n\). Therefore, we have

The following analysis is motivated by [37, Proposition 3.1]. According to [46, Theorem 5.34], the velocity field ruling the evolution of \(\tilde{\rho }_t\) is \(\omega _t\in L_2^d(\tilde{\rho }_t)\) and \(\omega _t(x)=-g(\phi _t^{-1}(x))\). Define \(\psi (t)=\textsc {KL}(\tilde{\rho }_t|\pi )\). According to the chain rule in [46, Section 8.2],

where \(\text {Hess}_{\textsc {KL}(\cdot |\pi )}(\tilde{\rho }_t)\) is the Wasserstein Hessian of \(\textsc {KL}(\cdot |\pi )\) at \(\tilde{\rho }_t\). For any \(\mu \in \mathcal {P}(\mathbb {R}^d)\) and any v in the Wasserstein tangent space at \(\mu \), the Wasserstein Hessian is given by [24],

Therefore we can expand the difference in KL-divergence between the two consecutive iterations as

The first term on the right-hand side of (23) can be studied via the spectrum of the operator \(\iota _{k,\rho ^n}\iota _{k,\rho ^n}^*\).

Since \(\tilde{\rho }_t=(\phi _t)_{\#}\rho ^n\), for any function h we have \(\mathbb {E}_{X\sim \tilde{\rho }_t}\left[ h(X) \right] =\mathbb {E}_{Y\sim \rho {^n}}\left[ h(\phi _t(Y)) \right] \). Hence, for the second term on the right side of (23), we obtain

where the last inequality follows from Assumption A2-(2). Therefore, we obtain

where

with \((\lambda _i^{(n)}, e_i^{(n)})_{i=1}^\infty \) being the sequence of eigenvalues and eigenvectors to the operator \(\iota _{k,\rho ^n}\iota _{k,\rho ^n}^*\) such that \(\lambda _1^{(n)}\ge \cdots \ge \lambda _i^{(n)}\ge \cdots >0\) and \((e_i^{(n)})_{i=1}^\infty \) is an orthonormal basis of \(L_2(\rho ^n)\). According to Lemma 1 and Assumption A2,

and furthermore according to Lemma 1, \(\left\Vert (id-t \nabla g(x))^{-1}\right\Vert _2^2\le \alpha ^2\). Therefore, we get

where the last inequality follows from (21) and the second inequality follows from the fact that

which is proved in Proposition 3. Lastly, summing over n and we obtain

where the last inequality follows from the fact that KL divergence is non-negative. Therefore, (22) is proved.

Remark 7

We emphasize that the above result does not make any assumptions on the target density \(\pi \), except for \(\pi \in \mathcal {P}(\mathbb {R}^d)\) and the Lipschitz gradient assumption. In particular, it holds for multi-modal densities. However, the metric of convergence is the weaker Fisher information metric.

We now provide a stronger result under the LSI assumption.

Theorem 6

Suppose Assumption A2 holds and \(\pi \) satisfies the log-Sobolev inequality with parameter \(\lambda \). Let \((\rho ^n)\) be as described in (8) with initial condition \(\rho ^0=\rho _0\) such that \(\textsc {KL}(\rho _0|\pi )\le R\). Assume the regularization parameter and the step-size parameters are chosen such that for all \(n\ge 0\), they satisfy

where \(\alpha \in (1,2]\) is a constant, \(\gamma _n\in (0,\frac{1}{2}]\), and \({\mathfrak {I}}(\rho ^n, \gamma _n) \in L_2^d(\rho ^n)\). Then, for all \(n\ge 1\),

Proof of Theorem 6

From the proof of Theorem 5, we can bound the difference in KL-divergence between two consecutive iterations by

where the second inequality follows from the fact that \(\frac{1}{2}h_{n+1} \nu _{n+1}^{-1} \alpha ^2 B^2\le 1\), and the last inequality follows from (24).

Last, since \(\pi \) satisfies the log-Sobolev inequality with parameter \(\lambda \), we get

and (25) follows from the above recursive inequality.

Remark 8

[Choice of \(\{\nu _n\}_{n\ge 1}\)] From (24) and (25), we observe that there is a trade-off in terms of \(\{\nu _n\}_{n\ge 1}\), as smaller \(\{\nu _n\}_{n\ge 1}\) will result in slower convergence of the time-discretized R-SVGF in KL-divergence. Indeed, if \(\{\nu _n\}_{n\ge 1}\) are chosen to be small, (24) requires the step-size \(\{h_n\}_{n\ge 1}\) to be small as well. And, as shown in (25), \(\textsc {KL}(\rho _n|\pi )\) decays at a slower rate when \(\{h_n\}_{n\ge 1}\) are smaller. We also refer to Remark 10 below and Remark 12 for further trade-offs with respect to the parameter \(\{\nu _n\}_{n\ge 1}\).

Remark 9

According to (25), to reach an \(\epsilon \)-accuracy in KL-divergence, we need the number of iterations to be at least \(n_\epsilon \) such that \(\prod _{i=1}^{n_\epsilon } \left( 1-\frac{1}{2}\lambda (1-\nu _i)^{-1} h_i\right) R\le \epsilon \). With the fact that \(\log (1-x)<-x\) for all \(x\in (0,1)\), we get \(n_\epsilon \) satisfies

Under (24), if we can choose the time step sizes \((h_i)_{i=1}^\infty \) to be a constant \(h>0\), then we have \(n_\epsilon = O(\log (R/\epsilon ))\). For comparison, in Table 1, we provide the iteration complexity results for different methods, to obtain \(\text {KL}(\rho _n|\pi ) \le \epsilon \), under the assumption that the target \(\pi \) satisfies LSI.

We also emphasize that prior results on the analysis of time-discretization of the SVGF under functional inequality assumptions are established only in the weaker Stein-Fisher information metric [24, 37]. Our results above are established for the \(\textsc {KL}\)-divergence and is more in line with similar results established for other randomized Monte Carlo algorithms [4, 11, 45]. We end this section with the following remark on an illustrative example.

Remark 10

(An illustrative example) Consider sampling from a Gaussian target \(\pi =\mathcal {N}(0,Q)\), where Q is strictly positive definite and \(k(x,y)=\langle x,y \rangle +1\), the linear kernel. This model has recently been studied in [28] motivated by connections to Gaussian variational inference. Note that the above target \(\pi \) satisfies LSI with \(\lambda \) being the minimal eigenvalue of Q. Based on the results established in [28], our results on the R-SVGF and the time-discretized R-SVGF can be interpreted as follows. Before we proceed, we point out a subtle fact. To establish existence and uniqueness later in Sect. 5 we assume that the kernel is bounded, which is not satisfied by the linear kernel. However, based on an explicit calculation, existence and uniqueness results were shown for the linear kernel in [28].

R-SVGF: If \(\rho _0=\mathcal {N}(0,\Sigma _0)\), then note that we have for all \(t\ge 0\), \(\rho _t=\mathcal {N}(0,\Sigma _t)\), \(\gamma _t={1}/{2}\), \(\lambda _{1,t}\le 1+\left\Vert \Sigma _t \right\Vert _F^2\) and \(\left\Vert \mathcal {J}(\rho _t,\gamma _t) \right\Vert _{L_2(\rho _t)}=\left\Vert Q^{-1}-\Sigma _t^{-1} \right\Vert _F \). It follows from [28, Equation 9] that

Further assuming that \(\Sigma _0 Q=Q \Sigma _0\), there exists an orthonormal matrix P such that we have \(Q=P^\intercal \text {diag}\{q_1,\cdots ,q_d\} P\) and \(\Sigma _t=P^\intercal \text {diag}\{\sigma _1(t),\cdots ,\sigma _d(t)\} P\) with \(q_1\ge q_2\ge \cdots \ge q_d\). According to [28, page 45], we also have that \(\lambda =q_d\), \(\left\Vert \Sigma _t-Q \right\Vert =O\big (\exp (-\frac{2t}{(1-\nu )q_1+\nu } )\big )\) and

Our convergence result in Theorem 4, hence translates as

for \(T\in (0,\infty )\). The first term in the above bound indicates the exponential convergence of the R-SVGF and the convergence rate is proportional to \(1/(1-\nu )\). The second term characterizes the bias between \(\rho _T\) and \(\pi \). For any fixed values of T, the bias term vanishes as \(\nu \rightarrow 0\). Therefore, in order to obtain an optimal upper bound on \(\textsc {KL}(\rho _T|\pi )\), there is a trade-off between choosing large \(\nu \) (i.e., \(\nu \rightarrow 1\)) and small \(\nu \) (i.e., \(\nu \rightarrow 0\)), which depends on T and eigenvalues of \(\Sigma _0\) and Q.

Time-discretized R-SVGF: Note that if \(\rho ^0=\mathcal {N}(0,S_0)\), then for all \(n\ge 0\), \(\rho ^n=\mathcal {N}(0,S_n)\), \(\gamma _n={1}/{2}\). \(R_n=\left\Vert \mathcal {J}(\rho ^n,\gamma _n) \right\Vert _{L_2(\rho ^n)}=\left\Vert Q^{-1}-S_n^{-1} \right\Vert _F \) and \(I(\rho ^n|\pi )=\text {trace}(S_n(Q^{-1}-S_n^{-1})^\intercal (Q^{-1}-S_n^{-1}))\), where \((S_n)_{n\ge 0}\) is updated as:

Since \(\nabla _1 k(x,y)= y\), the RKHS norm of \(\nabla _1 k(x,y)\) is obtained as follows

Therefore, we have \(\gamma _n=1/2\), \(B=\sqrt{d}\) and \({\mathfrak {S}}_n\le (1-\nu _{n+1})^{-2}\) for all \(n\ge 0\). Now, the convergence result in Theorem 6 translates as:

where for all \(i\ge 1\),

We now show that such a choice of \(\{\nu _i,h_i\}_{i\ge 1}\) exists. Assuming \(QS_0=S_0Q\), there exists an orthonormal matrix P such that \(Q=P^\intercal \text {diag}\{q_1,\cdots ,q_d\} P\) and \(S_i=P^\intercal \text {diag}\{\sigma _{1,i},\cdots ,\sigma _{d,i}\} P\) with \(q_1\ge q_2\ge \cdots \ge q_d\). Then, (26) implies that for any \(k= 1,\cdots ,d \),

Now, we pick

with \(\delta \in (0,1/2)\). According to [28, Theorem 3.10], if \(q_k^{-1}\sigma _{k,0}\in [u_\delta , 1/3+1/(3\delta )]\) for all \(k=1,\cdots ,d\), we have \(\left\Vert S_i-Q \right\Vert _2\le e^{-i\delta } \left\Vert S_0-Q \right\Vert \). Here \(u_\delta \) is the smaller root of \(f_\delta '(u)=1-\delta \) with \(f_\delta (x)=(1+\delta (1-x))^2x\). According to the convergence of \(S_i\) and the fact that

we have that \(\{\nu _i\}_{i\ge 1}\) and \(\{1-\nu _i\}_{i\ge 1}\) have uniform positive lower bounds, i.e., \(\inf _i \nu _i>0\) and \(\inf _i 1-\nu _i>0\). Therefore, we can further choose

where the positivity of the third term follows from the fact that

Therefore, we have shown that there exists \(\delta \in (0,1/2)\) such that \(\{\nu _i,h_i\}_{i\ge 1}\) defined in (27) satisfies the assumptions in Theorem 6 and \(\inf _i h_i>0\).

Last, we discuss the iteration complexity under the choice of \(\{\nu _i,h_i\}_{i\ge 1}\) in (27). According to Remark 9, \(\textsc {KL}(\rho ^n|\pi )\le \epsilon \) if n satisfies

Since \(\inf _i h_i>0\) and \(\inf _i 1-\nu _i>0\), we obtain \(n=O(\log ({\textsc {KL}(\rho ^0|\pi )}/{\epsilon }))\).

5 Existence and Uniqueness

The existence and uniqueness of the SVGF was studied in [30]. Motivated by their approach, in this section we study the existence and uniqueness of solutions to (7) under appropriate assumptions. Our main difficulty is in handling the non-linear operator \(\left( (1-\nu ){\mathcal {T}}_{k,\mu }+ \nu I\right) ^{-1} {\mathcal {T}}_{k,\mu }\) in the R-SVGF.

We first introduce the definition of weak solutions to (7). We restrict the initial conditions in the probability measure space \(\mathcal {P}_V\) which is defined as

where, in this section, with a slight overload of notations, we use \(\mathcal {P}\) to denote the set of all probability measures on \(\mathbb {R}^d\). We emphasize here that that \(\mathcal {P}_V\) is a space of probability measures because the weak solutions do not necessarily have densities even if the target measure and the initial measure has a density; see [30] for additional details. We say that a measure-valued function \(\rho \in \mathcal {C}([0,\infty ),\mathcal {P}_V)\) is a weak solution to (7) with initial condition \(\rho _0\in \mathcal {P}_V\) if

and

for all \(\phi \in \mathcal {C}_0^\infty ([0,\infty )\times \mathbb {R}^d)\) and \(U[\rho ]:=-\left( (1-\nu )\iota _{k,\rho }\iota _{k,\rho }^*+\nu I\right) ^{-1}\iota _{k,\rho }\iota _{k,\rho }^*(\nabla \log \frac{\rho }{\pi }) \).

In order to study the existence of weak solutions, we consider the characteristic flow (see, for example, [32] and [30, Definition 3.1]) induced by (7), which is written as

where \(\mathcal {D}_{\nu ,\rho _t}=\left( (1-\nu )\iota _{k,\rho _t}\iota _{k,\rho _t}^*+\nu I\right) ^{-1}\iota _{k,\rho _t}\iota _{k,\rho _t}^*\) for all \(t>0\). Here, the expression \(\rho _t=\Phi (t,\cdot ,\rho _0)_{\#}\rho _0\) means that the measure \(\rho _t\) is the push-forward measure of \(\rho _0\) under the map \(x\rightarrow \Phi (t,x,\rho _0)\). We think of \(\{ X(t,\cdot ,\rho _0)\}_{t\ge 0,\rho _0}\) as a family of maps from \(\mathbb {R}^d\) to \(\mathbb {R}^d\) parameterized by t and \(\rho _0\). The existence and uniqueness of the weak solutions of (7) is equivalent to the existence and uniqueness of solutions to (28). In Theorem 7, we first prove that the mean field characteristic flow in (28) is well-defined. To do so, we also require the following additional assumptions on the kernel and the potential functions.

Assumption K1

The kernel \(k:\mathbb {R}^d\times \mathbb {R}^d\rightarrow \mathbb {R}\) is symmetric, positive definite and fourth continuously differentiable in both variables with bounded derivatives up to fourth order. More explicitly, we assume

-

(1)

\(\left\Vert k \right\Vert _\infty :=\sup _{x\in \mathbb {R}^d} \sqrt{k(x,x)}<\infty \)

-

(2)

\(\left\Vert \nabla k \right\Vert _\infty :=\sup _{x,y\in \mathbb {R}^d} |\nabla _1 k(x,y)|=\sup _{x,y\in \mathbb {R}^d}|\nabla _2 k(x,y)|<\infty \)

-

(3)

\(\left\Vert \nabla _1\cdot \nabla _2 k \right\Vert _{\infty }:=\sup _{x,y\in \mathbb {R}^d} |\nabla _x\cdot \nabla _y k(x,y)|<\infty \).

-

(4)

\(\left\Vert \nabla ^2 k \right\Vert _\infty :=\sup _{x,y\in \mathbb {R}^d} \left\Vert \nabla _x^2 k(x,y) \right\Vert _2<\infty \).

-

(5)

\(\left\Vert \nabla _1\nabla _2 k(x,y) \right\Vert _\infty :=\sup _{x,y\in \mathbb {R}^d} \left\Vert \nabla _x\nabla _y k(x,y) \right\Vert _2<\infty \).

-

(6)

\(\left\Vert \nabla ^2(\nabla _1\cdot \nabla _2 k) \right\Vert _\infty :=\sup _{x,y\in \mathbb {R}^d} \left\Vert \nabla _x^2(\nabla _x\cdot \nabla _y k(x,y)) \right\Vert _2<\infty \).

-

(7)

\(\left\Vert \nabla _1\nabla _2(\nabla _1\cdot \nabla _2 k) \right\Vert _\infty :=\sup _{x,y\in \mathbb {R}^d} \left\Vert \nabla _x\nabla _y (\nabla _x\cdot \nabla _y k(x,y)) \right\Vert _2<\infty \).

-

(8)

\(\left\Vert \nabla _1^2 \cdot \nabla _2^2 k \right\Vert _\infty :=\sup _{x,y\in \mathbb {R}^d} \sum _{i,j=1}^d |\partial _{x_i}\partial _{x_j}\partial _{y_i}\partial _{y_j}k(x,y)|<\infty \).

We emphasize here that [30] required that the kernel is radial for their analysis. However, our analysis does not require this assumption. A classical example of a kernel satisfying the above conditions is the Gaussian kernel.

Assumption V1

The potential function \(V:\mathbb {R}^d\rightarrow \mathbb {R}\) satisfies

-

(1)

\(V\in \mathcal {C}^2(\mathbb {R}^d)\), \(V\ge 0\) and \(V(x)\rightarrow +\infty \) as \(|x|\rightarrow +\infty \).

-

(2)

For any \(\alpha ,\beta >0\), there exists a constant \(C_{\alpha ,\beta }>0\) such that if \(|y|\le \alpha |x|+\beta \), then

$$\begin{aligned} (1+|x|)(|\nabla V(y)|+\left\Vert \nabla ^2 V(y)\right\Vert _2)\le C_{\alpha ,\beta }(1+V(x)). \end{aligned}$$ -

(3)

V is gradient Lipschitz with parameter \(L_V\), i.e., for all \(x\in \mathbb {R}^d\), \(\left\Vert \nabla ^2 V (x)\right\Vert _2\le L_V\).

To present our result, we define the set of functions

which is a complete metric space with the uniform metric \(d_Y(u,v)=\sup _{x\in \mathbb {R}^d} |u(x)-v(x)|\).

Theorem 7

Let k satisfy Assumption K1, V satisfy Assumption V1 and \(\rho _0\in \mathcal {P}_V\).

-

(i)

For any \(T>0\), there exists a unique solution \(\Phi (\cdot ,\cdot ,\rho _0)\in \mathcal {C}^1([0,T];Y)\) to (28). Moreover, the measure \(\rho _t=\Phi (t,\cdot ,\rho _0)_{\#}\rho _0\) satisfies

$$\begin{aligned} \left\Vert \rho _t\right\Vert _{\mathcal {P}_V}\le \left\Vert \rho _0 \right\Vert _{\mathcal {P}_V} \exp (C_{1,0}\nu ^{-1/2} \left\Vert k \right\Vert _\infty \textsc {KL}(\rho _0|\pi )^{1/2}t^{1/2}). \end{aligned}$$ -

(ii)

For any \(\rho _0\in \mathcal {P}_V\), there is a unique \(\rho \in \mathcal {C}([0,\infty ); \mathcal {P}_V )\) which is a weak solution to (7). Moreover, for all \(t\ge 0\),

$$\begin{aligned} \left\Vert \rho _t\right\Vert _{\mathcal {P}_V}\le \left\Vert \rho _0 \right\Vert _{\mathcal {P}_V} \exp (C_{1,0}\nu ^{-1/2} \left\Vert k \right\Vert _\infty \textsc {KL}(\rho _0|\pi )^{1/2}t^{1/2}). \end{aligned}$$

Remark 11

In Theorem 7, we introduce an upper bound to the \(\mathcal {P}_V\)-norm of the solution to (7) for any \(\nu \in (0,1]\). A similar result is established for the case of SVGF, i.e., when \(\nu =1\) in [30, Theorem 2.4]. In comparison to [30, Theorem 2.4], our result requires that the initial KL-divergence to the target is bounded. Furthermore, if we set \(\nu =1\) in our result, we do not end up recovering their result. When \(\nu =1\), there is an explicit integral formula to \(\mathcal {D}_{1,\rho _t}\nabla \log \frac{\rho _t}{\pi }\) which is leveraged in [30] for their proof. For \(\nu \in (0,1)\), due to the absence of an explicit representation, we get the result in Theorem 7 by carefully analyzing the quantity \(\mathcal {D}_{\nu ,\rho _t}(\nabla \log \frac{\rho _t}{\pi })\) along with the decay of KL-divergence property introduced in Proposition 4.

Proof of Theorem 7

Our proof leverages the approach of [30, Theorem 3.2] for the case of SVGF. In comparison to [30], we handle various difficulties arising with the non-linear operator in R-SVGF. We first prove claim (i) based on the following two steps. Claim (ii) follows directly from claim (i) and [46, Theorem 5.34].

Step 1 (Local well-posedness): Fix \(r>0\) and define

We will prove that there exists \(T_0>0\) such that (28) has a unique solution \(\Phi (t,x,\rho _0)\) in the set \(S_r=\mathcal {C}([0,T_0];Y_r)\) which is a complete metric space with metric

The integral formulation of (28) is

Let us define the operator \(\mathcal {F}:u(t,\cdot )\mapsto \mathcal {F}(u)(t,\cdot )\) by

where \(\rho _{u,t}=\left( u(t,\cdot ) \right) _{\#}\rho _0\). For the simplicity of notation, we will denote the map defined in (31) by \(\mathcal {F}(u)(t,x)=x-\int _0^t \mathcal {D}_{\nu ,\rho _{u,s}}\nabla \log \frac{\rho _{u,s}}{\pi }(u(s,x))ds\) for any \(u\in S_r\). We now show that \(\mathcal {F}\) is a contraction from \(S_r\) to \(S_r\) and thus has a unique fixed point. First, we show that \(\mathcal {F}\) maps \(S_r\) into \(S_r\) for some \(T_0>0\). For any \(u\in S_r\), checking that \((t,x)\mapsto \mathcal {F}(u)(t,x)\) is continuous is straightforward. We need to show that \(\sup _{x\in \mathbb {R}^d} |\mathcal {F}(u)(t,x)-x|\le r \) for any \(u\in S_r\).

Note that there is an equivalent representation for \(\mathcal {D}_{\nu ,\rho _{u,s}}\):

We then analyze the operators \(\iota _{k,\rho _{u,s}}\) and \(\left( (1-\nu )\iota _{k,\rho _{u,s}}^*\iota _{k,\rho _{u,s}}+\nu I_d\right) ^{-1}\iota _{k,\rho _{u,s}}^*\) respectively. Since \(\left\Vert k \right\Vert _{\infty }<\infty \), according to Proposition 1, \(\iota _{k,\rho _{u,s}}\) is the inclusion operator from \(\mathcal {H}_k^d\) to \(L_\infty ^d(\mathbb {R}^d)\). The corresponding operator norm, denoted as \(\left\Vert \iota _{k,\rho _{u,s}} \right\Vert _{\mathcal {H}_k^d\rightarrow L_\infty ^d}\) can be bounded in the following way:

Meanwhile, let \((\lambda _i,e_i)_{i=1}^\infty \) be the spectrum of \(\iota _{k,\rho _{u,s}}\iota _{k,\rho _{u,s}}^*\) with \((e_i)_{i=1}^\infty \) being an orthonormal basis of \(L^d_2(\rho _{u,s}) \equiv \overline{\text {Ran}(\iota _{k,\rho _{u,s}}\iota _{k,\rho _{u,s}}^*)}\). According to Proposition 1, \((\sqrt{\lambda _i}e_i)_{i=1}^\infty \) is an orthonormal basis of \(\mathcal {H}^d_k\); see also Remark 1. Hence, we have

where the last inequality follows from (9) and the fact that \((1-\nu )\iota _{k,\rho _{u,s}}^*\iota _{k,\rho _{u,s}}\) is positive. With (32) and (33), we get the following uniform bound on \(|\mathcal {D}_{\nu ,\rho _{u,s}}{\nabla }\log \frac{\rho _{u,s}}{\pi }(x)|\) for all \(x\in \mathbb {R}^d\),

Therefore, for all \(t\in [0,T]\) and all \( x\in \mathbb {R}^d\):

According to Lemma 2, there exists \(T_0>0\) such that for all \(u\in S_r\),

which along with (34) implies \(|\mathcal {F}(u)(t,x)-x|\le r\) for all \(u\in S_r\).

Next we show that \(\mathcal {F}\) is a contraction on \(S_r\). Our goal is to show that there exists \(T_0>0\) and \(C\in (0,1)\) such that for any \(u,v\in S_r\),

Let \(\rho _{u,t}=(u(t,\cdot ))_{\#}\rho _0\), \(\rho _{v,t}=(v(t,\cdot ))_{\#}\rho _0\), we have that

where the second inequality follows from Lemma 3 and Lemma 4. Furthermore, according to (38) and (39), there exists \(T_0>0\) such that

Therefore we have proved that there exists \(T_0>0\) such that \(\mathcal {F}\) is a contraction from \(S_r\) into \(S_r\). According to the contraction theorem, \(\mathcal {F}\) has a unique fixed point \(\Phi (\cdot ,\cdot ,\rho _0)\in S_r\) which solves (28). Defining \(\rho _t=(\Phi (t,\cdot ,\rho _0))_{\#}\rho _0\), one sees that \(\Phi (t,x,\rho _0)\) solves (28) in the time interval \([0,T_0]\).

Step 2 (Extension of local solution): According to (37) and (39), we can extend the local solution beyond time \(T_0\) as long as the quantity

remains finite. Next we establish a bound on this quantity showing that the local solution can be extended to any \(t>0\).

where

where the last inequality follows from Assumption V1. Therefore,

where the last inequality follows from (33). It follows from Gronwall’s inequality that

where the second inequality follows from Jensen’s inequality and the last inequality follows from (15). With this bound, we can iterate the argument to extend the local solution defined on \([0,T_0]\times \mathbb {R}^d\) to all of \([0,\infty )\times \mathbb {R}^d\), so that (35) holds for all \(t>0\). Finally, \(\Phi (\cdot ,x,\rho _0)\) has continuous first order derivative due to the integral formulation in (30), Assumption K1 and Assumption V1. The proof is thus complete.

Lemma 2

Let \(\rho _0\in \mathcal {P}_V\) and suppose the assumptions in Theorem 7 hold. Then, for any \(\epsilon >0\), there exists a constant \(T>0\) such that for all \(u\in S_r\) and \(t\in [0,T]\), with \(\rho _{u,t}=u(t,\cdot )_{\#}\rho _0\), we have

Proof of Lemma 2

According to Proposition 2, the regularized kernelized Stein discrepancy can be written as

Meanwhile, since \(\rho _{u,t}=u(t,\cdot )_{\#}\rho _0\) with \(u\in S_r\), for any \(\mathbb {R}^d\)-valued random vector X, \(u(t,X)\sim \rho _{u,t}\) and \(|u(t,X)-X|\le r\) almost surely. Therefore,

where \(\mathcal {W}_1\) is the Wasserstein-1 distance defined in Sect. 1.3. Next, we upper bound the regularized kernelized Stein discrepancy by the Wasserstein-1 distance. According to [22, Lemma 18], for any general vector field \(\phi \in \mathcal {H}_k^d\), we have

where for any \(g:\mathbb {R}^d\rightarrow \mathbb {R}^d\) and \(g\in C^1(\mathbb {R}^d)\),

For any \(\phi \in \mathcal {H}_k^d\) and \(\phi =[\phi _1,\cdots ,\phi _d]^T\), according to [42, Lemma 4.34],

Therefore,

According to Assumption V1, \(M_1(\nabla V)=L_V\) and \(\mathbb {E}_{x\sim \pi }\left[ |\nabla V(x)|^2 \right] \le C_{1,0} \left\Vert \pi \right\Vert _{\mathcal {P}_V} \). Therefore,

Note that \(\phi _{k,\rho _{u,t}}^*\) satisfies that \(\nu \left\Vert \phi _{k,\rho _{u,t}}^* \right\Vert _{\mathcal {H}_k^d}^2+(1-\nu )\left\Vert \phi _{k,\rho _{u,t}}^* \right\Vert _{L^d_2(\rho _{u,t})^d}\le 1\). Therefore,

and

Since the upper bound is independent of the choice of \(u(t,\cdot )\in S_r\) and the time variable t, for any \(\epsilon >0\), we can choose a small enough T such that (36) holds.

Lemma 3

Under the assumptions in Theorem 7, let \(S_r=\mathcal {C}([0,T];Y_r)\) with \(Y_r\) defined in (29). Then for any \(t\in [0,T]\), there exists \(L(t)>0\) such that for any \(u\in S_r\), for all \(x,y\in \mathbb {R}^d\) and \(t\in [0,T]\),

where for all \(t\in [0,T]\), \(\rho _{u,t}=(u(t,\cdot ))_{\#}\rho _0\) and

Proof of Lemma 3

Since \(\mathcal {D}_{\nu ,\rho _{u,t}}=\iota _{k,\rho _{u,t}}\left( (1-\nu )\iota _{k,\rho _{u,t}}^*\iota _{k,\rho _{u,t}}+\nu I_d\right) ^{-1}\iota _{k,\rho _{u,t}}^*\) and \(\iota _{k,\rho _{u,t}}\) is the inclusion operator,

where the second identity follows from the reproducing property and the last inequality follows from (33). Furthermore, we can write

where the first identity follows from the RKHS property and the second identity follows from Taylor expansion and Assumption K1. Therefore, (37) holds with L(t) defined in (38).

Lemma 4

Under the assumptions in Theorem 7, let \(S_r=\mathcal {C}([0,T];Y_r)\) with \(Y_r\) defined in (29). Then for any \(t\in [0,T]\), there exists \(C_1(t)>0\) such that for any \(u,v\in S_r\),

where for all \(t\in [0,T]\), \(\rho _{u,t}=(u(t,\cdot ))_{\#}\rho _0\), \(\rho _{v,t}=(v(t,\cdot ))_{\#}\rho _0\) and

with \(L_r\) being defined in (44).

Proof of Lemma 4

With the facts that \(\mathcal {D}_{\nu ,\mu }=\iota _{k,\mu }(\iota _{k,\mu }^*\iota _{k,\mu }+\nu I)^{-1}\iota _{k,\mu }^*\) and \(\iota _{k,\mu }\) is the inclusion operator we get,

We then turn to study the two terms in the upper bound separately.

First term: Note that, we have

As we are bounding the function value by its \(L_\infty ^d\) norm, the second step allows the function to be in the space of \(L_\infty ^d\), without which we think of the function as belonging to the RKHS. The second inequality follows from (32) and (33). The last inequality follows from the fact that

where the first identity follows from the definitions of \(\rho _{u,t}\) and \(\rho _{v,t}\) and change of variable. The second inequality holds due to the symmetry in x and y. The second identity follows from the reproducing property of the RKHS. The last identity follows from the fact that \(\left\Vert k(x,\cdot ) \right\Vert _{\mathcal {H}_k}=\sqrt{k(x,x)}\) for all x and the last inequality follows from Assumption K1 and Taylor expansion on both variables in k up to second order.

Second term: Note that we have

where the last inequality follows from (32) and for all \(x\in \mathbb {R}^d\),

Therefore, we have

For simplicity, in the following calculations, we denote u(t, y) and v(t, y) as u and v respectively. We will bound \(\left\Vert k(\cdot ,u)\nabla V(u)-k(\cdot ,v)\nabla V(v) \right\Vert _{\mathcal {H}_k^d}\) and \(\left\Vert \nabla _2 k(\cdot ,u)-\nabla _2 k(\cdot ,v) \right\Vert _{\mathcal {H}_k^d}\) respectively. Note that we have

where

The second inequality follows from Assumption V1 and the last inequality follows from Assumption K1 and Taylor expansion on both variables in k up to first order. And, we also have

where the first inequality follows from Assumption V1 and the last inequality follows from Assumption K1 and Taylor expansion on both variables in k up to second order. With the above two inequalities, we have

Observe that, for all \(x,y\in \mathbb {R}^d\)

If we denote the function \(\nabla _1\cdot \nabla _2 k=D_{1,2}k\) where \(D_{1,2}k\) is symmetric since k is symmetric, we get

where the inequality follows from Taylor expansion on both variables of \(D_{1,2}k\). Therefore,

According to (42) and (43), we get

with

Therefore, the second term is bounded as

6 Stability

In this section, we prove a stability estimate for the weak solutions to (7). To do this, we introduce a space of probability measures on \(\mathbb {R}^d\) and assumptions on V as follows,

where \(\mathcal {P}\) denotes the set of all probability measures on \(\mathbb {R}^d\).

Assumption V2

In addition to Assumption V1, there exists a constant \(C_V>0\) and \(q>1\) such that \(|\nabla V(x)|^q \le C_V (1+V(x))\) for all \(x\in \mathbb {R}^d\) and \(\sup _{\theta \in [0,1]} \left\Vert \nabla ^2 V (\theta x+(1-\theta )y)\right\Vert _2^q \le C_V (1+V(x)+V(y)) \).

Theorem 8

Let V satisfy Assumption V2 with \(q\in (1,\infty )\) and k satisfy Assumption K1. Let p be the conjugate of q, i.e., \(p^{-1}+q^{-1}=1\). Let \(\rho _1,\rho _2\in \mathcal {P}_p\) be two initial probability measures satisfying \(\left\Vert \rho _i \right\Vert _{\mathcal {P}_p}\le R\) for some constant \(R>0\) and \(i=1,2\). Let \(\rho _{1,t}\) and \(\rho _{2,t}\) be the associated weak solution to (7). Then given any \(T>0\), there exists a constant \(C>0\) depending on \(k,V,q,\nu ,\rho _1,\rho _2\) such that,

More explicitly, the constant C is given by

where \(C(T,k,V,\nu ,\rho _1,\rho _2,q)\) is defined in (56).

Before we prove Theorem 8, we introduce the following corollary to Theorem 8.

Corollary 1

Suppose the assumptions in Theorem 8 hold. Let \(\rho _1 \in \mathcal {P}_p\) and \(\rho _1^N\) be the empirical measure formed from N samples from \(\rho _1\). Then for any \(T>0\),

where \(\rho _{1,t}\) and \(\rho _{1,t}^N\) are the unique weak solutions to (7) with initial conditions \(\rho _1\) and \(\rho _1^N\) respectively.

Since \(\mathcal {W}_p(\rho _1^N,\rho _1)\rightarrow 0\) as \(N\rightarrow \infty \), Corollary 1 follows directly from Theorem 8. Non-asymptotic bounds on \(\sup _{t\in [0,T]}\mathcal {W}_p(\rho _{1,t},\rho _{1,t}^N)\) that are non-uniform in time T (i.e., for \(T<\infty \)) in terms of N can also be obtained based on Theorem 8 and the convergence of empirical measures in \(\mathcal {W}_p\) [20, 49]. We leave that to the interested reader.

Remark 12

If we focus on the dependency on \(\nu \) and T in (46), we have