Abstract

Scientific assessments, such as those by the Intergovernmental Panel on Climate Change (IPCC), inform policymakers and the public about the state of scientific evidence and related uncertainties. We studied how experts from different scientific disciplines who were authors of IPCC reports interpret the uncertainty language recommended in the Guidance Note for Lead Authors of the IPCC Fifth Assessment Report on Consistent Treatment of Uncertainties. This IPCC guidance note discusses how to use confidence levels to describe the quality of evidence and scientific agreement, as well as likelihood terms to describe the probability intervals associated with climate variables. We find that (1) physical science experts were more familiar with the IPCC guidance note than other experts, and they followed it more often; (2) experts’ confidence levels increased more with perceptions of evidence than with agreement; (3) experts’ estimated probability intervals for climate variables were wider when likelihood terms were presented with “medium confidence” rather than with “high confidence” and when seen in the context of IPCC sentences rather than out of context, and were only partly in agreement with the IPCC guidance note. Our findings inform recommendations for communications about scientific evidence, assessments, and related uncertainties.

1 Introduction

Scientific reports about climate change, such as those of the Intergovernmental Panel on Climate Change (IPCC), often involve large-scale, interdisciplinary efforts to assess and combine scientific evidence from different disciplines for policymakers and the public (Swart et al. 2009). Findings from these reports typically inform policy decisions about issues such as climate change mitigation and adaptation (Ogunbode et al. 2020).

Organizations like the IPCC and the Intergovernmental Science-Policy Platform on Biodiversity and Ecosystem Services (IPBES) have created calibrated uncertainty language that experts from different disciplines can use to describe scientific evidence and related uncertainties (Mach et al. 2017; Swart et al. 2009; Fischhoff 2016; Mastrandrea and Mach 2011). For example, the IPCC’s Guidance Note for Lead Authors of the IPCC Fifth Assessment Report on Consistent Treatment of Uncertainties (henceforth: IPCC guidance note) describes how to judge confidence levels for the presented evidence, as well as likelihood terms associated with projected climate variables (Yohe and Oppenheimer 2011; Pidgeon and Fischhoff 2011; Budescu et al. 2014; Mach et al. 2017; Anttila et al. 2018). Both confidence levels and likelihood terms are described in more detail below.

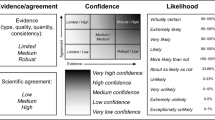

First, the IPCC guidance note (Mastrandrea et al. 2010) encourages experts to assign confidence levels to the presented evidence, using the terms “very low,” “low,” “medium,” “high,” and “very high” (Fig. 1a). Confidence levels should be greater as the described evidence becomes more robust and the scientific agreement increases (Fig. 1a). Evidence is more robust if it is based on multiple, consistent, and independent lines of high-quality research (Mach et al. 2017). The degree of scientific agreement is greater if presented evidence is well-established in the scientific community, rather than based on competing or speculative findings (Mach et al. 2017). Second, the IPCC guidance note (Mastrandrea et al. 2010) encourages experts to use likelihood terms associated with projected climate variables, on a scale varying from “exceptionally unlikely” to “virtually certain” (Fig. 1b). The IPCC guidance note (Mastrandrea et al. 2010) includes a table that translates each likelihood term into a probability interval, with “exceptionally unlikely” defined as 0–1% and “virtually certain” as 99–100% (Fig. 1b). Additionally, the IPCC guidance note (Mastrandrea et al. 2010) describes how and when to apply confidence levels and likelihood terms to different types of variables, including ones that are ambiguous, a sign without a magnitude, an order of magnitude, a range, a likelihood or probability, or a probability distribution (Table 1).

Fig. 1 a The IPCC guidance note about the relationship between evidence, agreement, and confidence levels in scientific evidence (Mastrandrea et al. 2010). Confidence levels increase towards the top right corner, where the shading is darker. b The IPCC guidance note for translating likelihood terms into intervals when communicating scientific evidence (Mastrandrea et al. 2010)
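The translation of likelihood terms into probability intervals can be sketched as a simple lookup table. The block below is a minimal illustration, assuming the seven core terms of the likelihood scale in the IPCC guidance note (Mastrandrea et al. 2010); the names `LIKELIHOOD_SCALE` and `guidance_interval` are hypothetical and were chosen for this example only.

```python
# Core likelihood scale of the IPCC guidance note (Mastrandrea et al. 2010),
# written as (lower %, upper %) probability bounds.
# The dictionary and function names are illustrative assumptions.
LIKELIHOOD_SCALE = {
    "virtually certain": (99, 100),
    "very likely": (90, 100),
    "likely": (66, 100),
    "about as likely as not": (33, 66),
    "unlikely": (0, 33),
    "very unlikely": (0, 10),
    "exceptionally unlikely": (0, 1),
}


def guidance_interval(term):
    """Return the (lower, upper) percentage bounds for a likelihood term."""
    key = term.strip().lower()
    if key not in LIKELIHOOD_SCALE:
        raise KeyError(f"Unknown likelihood term: {term!r}")
    return LIKELIHOOD_SCALE[key]


print(guidance_interval("very unlikely"))  # (0, 10)
print(guidance_interval("Likely"))         # (66, 100)
```

Note that several intervals overlap (for example, “likely” contains “very likely”), which is one reason why a term alone may not pin down a single numerical interpretation.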

However, several issues have been raised with regard to how the IPCC guidance note (Mastrandrea et al. 2010) recommends applying confidence levels and likelihood terms. For example, the IPCC guidance note (Mastrandrea et al. 2010) remains somewhat unclear about how to integrate evidence and agreement into confidence levels (Janzwood 2020). The IPCC guidance note (Mastrandrea et al. 2010) does not recommend expressing confidence levels for all types of evidence (Table 1). Indeed, a suggestion has been to only use confidence levels when evidence is robust and agreement is high (Mastrandrea and Mach 2011). Consequently, the use of high confidence levels has increased since the IPCC’s first assessment report in 1990 (Janzwood 2020; Molina and Abadal 2021).

Moreover, confidence levels are often used inconsistently across IPCC working groups, perhaps as a result of differing traditions in authors’ disciplines (Mach et al. 2017). Interviews with authors of the IPCC’s Fifth Assessment Report have revealed that they vary in how they use confidence levels and likelihood terms, and that they request clearer guidance (Janzwood 2020). Some IPCC authors also indicated that they consider confidence levels and likelihood terms as interchangeable (Janzwood 2020; Kandlikar et al. 2005). Others interpret confidence levels as independent “meta judgments” about the quality of likelihood estimates in climate models, and thus consider the two metrics as distinct (Janzwood 2020).

Research on risk communication also shows that there often is disagreement about how to interpret likelihood terms such as “likely” and “very likely” (Budescu et al. 2014; Kause et al. 2021; Harris et al. 2017; Howe et al. 2019). People disagree more about how to interpret positive likelihood terms (“likely”) than about how to interpret negative likelihood terms such as “unlikely” (Smithson et al. 2012). Presenting confidence levels with likelihood terms may also change whether likelihood terms are interpreted in line with the IPCC guidance note (Smithson et al. 2012; Hohle and Teigen 2017; Spence and Pidgeon 2010).

When likelihood terms are presented out of context, people agree more about what they mean than when likelihood terms are presented in context, such as in statements about political forecasts (Beyth-Marom 1982) or in IPCC report sentences (Budescu et al. 2014). It has been posited that pre-existing beliefs about a context can inform the way users interpret scientific evidence and related uncertainties (Beyth-Marom 1982). Perhaps as a result, interpretations of likelihood terms may vary across individuals, who tend to interpret likelihood terms in line with their pre-existing beliefs (Budescu et al. 2014; Kause et al. 2021).

Because the IPCC guidance note (Mastrandrea et al. 2010) aims to help experts to write IPCC reports, it is important to understand how they assign confidence levels and likelihood terms. Here, we quantitatively surveyed how experts contributing to the IPCC’s Sixth Assessment Report cycle (AR6) view the uncertainty language laid out in the IPCC guidance note (Mastrandrea et al. 2010). We assessed (1) the reported familiarity, clarity, usefulness, and frequency of use of the IPCC guidance note for assigning confidence levels, among experts from different disciplines; (2) how experts assign confidence levels to different combinations of evidence and agreement; and (3) the probability intervals experts estimated for four combinations of likelihood terms and confidence levels, when combinations were presented out of context or in the context of sentences from an IPCC report. We also assessed whether the experts’ estimated probability intervals were in line with intervals specified in the IPCC guidance note (Mastrandrea et al. 2010).

2 Materials and methods

2.1 Experimental design

In an online survey, we asked experts to share their interpretations of the uncertainty language proposed in Mastrandrea et al.’s (2010) IPCC guidance note (Table A.1F in Appendix).

2.1.1 Reporting familiarity, clarity, usefulness, and frequency of use of the IPCC guidance note for assigning confidence levels

We asked experts how familiar, clear, and useful they found the IPCC guidance note for assigning confidence levels (Mastrandrea et al. 2010). Specifically, we asked “How familiar are you with the IPCC uncertainty guidance note for communicating confidence in IPCC reports?”, “How clear do you find the IPCC uncertainty guidance note for communicating confidence in IPCC reports?”, and “How useful do you find the IPCC uncertainty guidance note for communicating confidence in IPCC reports?” Response options ranged from 1 = “not at all [familiar/clear/useful]” to 5 = “very [familiar/clear/useful].” We also asked experts “How often do you follow the IPCC uncertainty guidance note for communicating confidence in IPCC reports?” Response options ranged from 1 = “never” to 5 = “always” (Table A.1E in Appendix).

2.1.2 Assigning confidence levels

We assessed how the experts assign confidence levels to different combinations of evidence and agreement. Specifically, we presented seven of nine possible combinations of evidence levels (“low,” “medium,” “robust”) and scientific agreement levels (“low,” “medium,” “high”), selected from the IPCC guidance note (Mastrandrea et al. 2010). To keep the survey as short as possible, we did not include combinations that were used less frequently in IPCC reports, such as “low evidence, medium agreement” and “medium evidence, low agreement.” The seven combinations are displayed in Fig. 3. Experts assigned confidence levels ranging from 1 = “very low confidence” to 5 = “very high confidence,” or they could choose the option of “I would not use a confidence statement” (Table A.1B in Appendix).
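The selection of seven out of nine evidence/agreement combinations can be made explicit with a short enumeration. This is a sketch only: it assumes that the two combinations named in the text as examples are the only ones omitted, which is consistent with nine possible combinations minus seven presented; the variable names are hypothetical.

```python
from itertools import product

EVIDENCE = ["low", "medium", "robust"]
AGREEMENT = ["low", "medium", "high"]

# (evidence, agreement) pairs that the text names as left out of the survey.
# Treating these as the full set of omissions is an assumption.
EXCLUDED = {("low", "medium"), ("medium", "low")}

presented = [
    (evidence, agreement)
    for evidence, agreement in product(EVIDENCE, AGREEMENT)
    if (evidence, agreement) not in EXCLUDED
]

for evidence, agreement in presented:
    print(f"{evidence} evidence / {agreement} agreement")

print(len(presented))  # 7
```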

2.1.3 Estimating probability intervals for combinations of likelihood terms and confidence levels

In a within-subjects design, we presented each expert with two likelihood terms (“likely” vs. “very unlikely”) and two confidence levels (“high confidence” or “medium confidence”), for a total of four combinations. We presented each of the four combinations to each expert twice: once out of context (Table A.1C in Appendix) and once in the context of IPCC report sentences. As an example, an in-context presentation of a combination read: “It remains very unlikely that the AMOC [Atlantic Meridional Overturning Circulation] will undergo an abrupt transition or collapse in the twenty-first century for the scenarios considered (high confidence)” (Table A.1D in Appendix). When combinations were presented out of context, experts were asked “What numerical likelihood do you think best represents a finding that is described as very unlikely with high confidence?” followed by “My best estimate for a range is: lower end of range: ____ / upper end of range:___.” They were also asked to estimate precise probability values associated with each combination (Budescu et al. 2014), with questions such as: “What numerical likelihood do you think best represents a finding that is described as very unlikely with high confidence? My best estimate is ___.” When combinations were presented in the context of IPCC report sentences, experts were asked “What numerical likelihood do you think best represents this finding?” Because the IPCC guidance note (Mastrandrea et al. 2010) focuses on translating likelihood terms into probability intervals, we report experts’ probability intervals in the paper and the precise probability values in Appendix tables A.6A, A.6B, A.8A, and A.8B.

2.1.4 Personal information

Experts were asked to indicate the type and number of disciplines they identified with. They could choose one or more from: earth sciences, physical sciences, life sciences, social sciences, engineering, chemistry, or other. They were also asked to indicate the decade in which they received their PhD, their academic position, the part(s) of the IPCC reports they co-authored, which IPCC working group best fitted their expertise, their gender, and the country in which they were based at the time of the survey (Table A.2 in Appendix).

2.1.5 Use of criteria

We asked the experts about their use of criteria [“Type of evidence”/ “Quality of evidence”/ “Quantity of evidence”/ “Consistency between different types of evidence”/ “Scientific agreement”] (Mastrandrea et al. 2010). Specifically, we asked “To what extent do you typically consider each of the following when evaluating findings from your discipline?”. Response options ranged from 1 = “not at all” to 5 = “very much” (Table A.1A in Appendix). We also asked experts how often they used descriptions of the type of variables, ranging from “A) A variable is ambiguous, or the processes determining it are poorly known or not amenable to measurement” to “F) A probability distribution or a set of distributions can be determined for the variable either through statistical analysis or through use of a formal quantitative survey of expert views” (Table 1; Table A.1F in Appendix). Due to a programming error, F) was missing in the online survey. We therefore do not report these findings here.

2.2 Sample

In April and May of 2018, experts contributing to the Special Report on Climate Change and Land (SRCCL) received an email to participate in our survey. This email was sent by our fifth author [LO], who also co-authored the SRCCL report. Experts contributing to the IPCC Sixth Assessment Report and to the Special Reports in the Sixth Assessment Report cycle were contacted in October 2018 by our first author [AK]. Contact information was obtained from the IPCC website (https://www.ipcc.ch/authors/; accessed October–December 2018). Overall, N = 346 experts clicked on the survey link sent in the email. Because the survey software allowed experts to skip questions, the number of responses varied by question. We therefore report the sample size alongside each analysis. Table A.2 in the Appendix describes experts’ characteristics. Thirty-six percent (N = 126) reported their gender as male, and 16% (N = 54) reported it as female; 47% (N = 164) chose not to report their gender. Experts reported having obtained their PhD on average M = 18.46 years ago (N = 179; SD = 10.87, range 5–55 years). Overall, 14% (N = 49) of experts indicated that they were physical scientists (including 1 who selected chemistry), 21% (N = 74) were earth scientists, 9% (N = 32) were life scientists, 18% (N = 62) were social scientists, 9% (N = 33) were engineers, and 4% (N = 14) came from other disciplines. Forty-seven percent chose not to report their discipline.

3 Results

All analyses were conducted in R, using the packages ggplot2 (Wickham and Winston 2019), lmerTest (Kuznetsova et al. 2017), and lme4 (Bates et al. 2022).

3.1 Reported familiarity, clarity, usefulness, and frequency of use of the IPCC guidance note for assigning confidence levels

To answer our first research question, we examined experts’ reported familiarity, clarity, usefulness, and frequency of use of the IPCC guidance note for assigning confidence levels (Fig. 2; Mastrandrea et al. 2010). We found that the physical scientists were most familiar with the IPCC guidance note: 87% said they were “very familiar” or “familiar” with the IPCC guidance note, compared to 73% of the earth scientists, 66% of the life scientists, 64% of the social scientists, and 64% of the engineers (Fig. 2; Table A.3 in Appendix). Clarity and usefulness of the IPCC guidance note about confidence (Mastrandrea et al. 2010) were rated quite similarly by experts from all disciplines. Yet, 81% of the physical scientists indicated using the IPCC guidance note “always” or “very frequently,” while that figure was 69% for the earth scientists, 52% for the life scientists, 53% for the social scientists, and 50% for the engineers (Fig. 2). Thus, experts from different disciplines may have different needs for guidelines on communicating confidence about climate evidence (Swart et al. 2009).

Fig. 2 Mean ratings, by discipline, of the IPCC guidance note (Mastrandrea et al. 2010). Ratings of familiarity (1 = “not familiar at all” to 5 = “very familiar”), clarity (1 = “not clear at all” to 5 = “very clear”), usefulness (1 = “not useful at all” to 5 = “very useful”) and frequency of use (1 = “never” to 5 = “always”). Error bars represent 95% confidence intervals (CIs). Numbers at the bottom of the y-axis indicate the number of answers per discipline. The only expert identifying with chemistry was classified as a physical scientist

3.2 Confidence levels assigned to evidence and agreement

To answer our second research question, about how experts’ assignment of confidence levels varied with the presented levels of evidence (“low,” “medium,” “robust”) and scientific agreement (“low,” “medium,” “high”) from the IPCC guidance note (Mastrandrea et al. 2010; Fig. 1, and in “Materials and methods”), we computed multilevel linear regressions to predict experts’ assigned confidence levels from the presented level of evidence, agreement, their interaction, and experts’ type and number of disciplines. In all models that included type of discipline, earth scientists were used as the reference group because they were the largest group. All models also controlled for demographic variables, such as years since PhD and gender. In line with the IPCC guidance note (Mastrandrea et al. 2010), experts assigned higher confidence levels when presented with statements declaring more robust evidence and higher agreement (Fig. 3). Relationships between assigned confidence levels and presented levels of agreement were stronger when the evidence was robust (evidence [low vs. medium vs. robust] × agreement [low vs. medium vs. high]: b = 0.15; SE = 0.02; 95% CI [0.11, 0.19], Fig. 3, Table A.4A in Appendix). We also examined whether experts from different disciplines varied in their assigned confidence levels, given presented levels of evidence and agreement. We found a stronger positive relationship between the level of evidence and assigned confidence levels among life scientists as compared to earth scientists (evidence × life sciences: b = 0.13, SE = 0.07, 95% CI [− 0.01, 0.27]), and among engineers as compared to earth scientists (evidence × engineering: b = 0.14, SE = 0.07, 95% CI [0.01, 0.27], Table A.4B in Appendix).
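For readers less familiar with multilevel models, one plausible form of the regression described above is written out below. This is a sketch under stated assumptions, not the specification reported in the paper: it assumes a random intercept for each expert, numeric coding of the evidence and agreement levels, and it omits the discipline and demographic covariates for brevity. All symbols are illustrative.

```latex
\[
\text{Confidence}_{ij}
  = \beta_0
  + \beta_1\,\text{Evidence}_{ij}
  + \beta_2\,\text{Agreement}_{ij}
  + \beta_3\,\bigl(\text{Evidence}_{ij} \times \text{Agreement}_{ij}\bigr)
  + u_j + \varepsilon_{ij},
\qquad
u_j \sim \mathcal{N}(0, \sigma_u^2),\quad
\varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2)
\]
```

Here $i$ indexes the presented evidence/agreement combination and $j$ the expert. Under this reading, $\beta_3$ plays the role of the evidence × agreement interaction coefficient, and $u_j$ accounts for the fact that each expert rated several combinations.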

Fig. 3 Boxplots showing confidence levels assigned by IPCC experts to seven different combinations of scientific evidence and agreement (x-axis). Each expert assigned confidence levels ranging from 1 = “Very low confidence” to 5 = “Very high confidence” (y-axis) for combinations of evidence and agreement (x-axis). Boxes represent the first and third quartiles, whiskers represent values within 1.5 × the interquartile range, black dots represent outliers, and gray dots represent individual data points

Fig. 4 Boxplots showing the experts’ estimates (y-axis) of lower and upper probability interval bounds (x-axis) in response to two likelihood terms (“likely” vs. “very unlikely”) accompanied by two confidence levels (“high confidence” vs. “medium confidence”) from the IPCC guidance note (Mastrandrea et al. 2010). The experts estimated probability intervals for combinations presented a out of context (N = 1584 answers) vs. b in the context of IPCC report sentences (N = 1466 answers). Gray dots show the individual data points

We also assessed how many experts refrained from assigning a confidence level to each combination of evidence and agreement. Only 3% refrained from assigning a confidence level for “robust evidence/high agreement,” 6% refrained for “robust evidence/medium agreement” and for “medium evidence/high agreement,” and 4% refrained for “medium evidence/medium agreement.” For combinations where evidence and agreement appeared to be more contradictory, experts were less likely to assign a confidence level. For example, 14% assigned no confidence level for “robust evidence/low agreement,” 12% assigned none for “low evidence/high agreement,” and 12% assigned none for “low evidence/low agreement.” We computed multilevel linear regressions to predict the experts’ likelihood to refrain from assigning a confidence level, including evidence, agreement, their interaction, and experts’ type and number of disciplines. In all models that included type of discipline, earth scientists were used as the reference group because they were the largest group. All models also controlled for demographic variables, such as years since PhD and gender. Overall, experts were less likely to assign a confidence level when the evidence was less robust and the agreement was lower (evidence x agreement: b = − 0.67, SE = 0.14, 95%CI [− 0.95, − 0.40]; Table A.5A in Appendix). This pattern was similar across the type and number of disciplines (all p > 0.13; Table A.5B in Appendix).

3.3 Estimated probability intervals for combinations of likelihood terms and confidence levels, when presented out of context or in context

Our third research question concerned the relationship between the experts’ estimated probability intervals in response to combinations of likelihood terms (“likely,” “very unlikely”) and confidence levels (“high confidence,” “medium confidence”), which were presented out of context or in the context of IPCC report sentences. We analyzed descriptive statistics of the lower and upper bounds of estimated probability intervals. For both likelihood terms, the lower and upper bounds regressed toward 50% when experts were presented with “medium confidence” rather than “high confidence” (Fig. 4).

We also calculated the width of each probability interval by subtracting the experts’ lower bound estimates from their upper bound estimates (Harris et al. 2017). We assessed whether probability intervals differed with the presented likelihood terms and confidence levels, and out of context or in the context of IPCC report sentences (Fig. 4). We computed multilevel linear regressions to predict the experts’ probability intervals, including the likelihood term, confidence level, out of vs. in context presentation, their interactions, and the experts’ type and number of disciplines. In all linear models with type of discipline, the earth scientists were used as the reference because they were the largest group. All models also controlled for demographic variables, such as years since PhD and gender. Overall, probability intervals were wider in response to the term “likely” (M = 24.53, SD = 16.30) than to the term “very unlikely” (M = 16.29, SD = 14.90; likelihood term: b = − 8.12, SE = 0.87, 95% CI [− 9.82, − 6.42]; Table A.7A in Appendix). Furthermore, probability intervals were wider when likelihood terms were combined with “medium confidence” compared to “high confidence” (confidence level: b = 3.43, SE = 0.87, 95% CI [1.72, 5.13]; Table A.7A in Appendix). The interval ranges were also, on average, wider for out-of-context than for in-context presentations (M = 21.44, SD = 16.79 vs. M = 19.36, SD = 15.29; context: b = − 1.98, SE = 0.62, 95% CI [− 3.18, − 0.77]; Table A.7A in Appendix, but please note that medians looked slightly different from what the models indicated; Fig. 4). Probability intervals were slightly wider for earth scientists compared to life scientists (life scientists: b = − 6.25, SE = 3.45, 95% CI [− 12.93, 0.43]; Table A.7B in Appendix).
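The width calculation described above (upper bound minus lower bound) and its summary by likelihood term can be sketched as follows. The responses in this example are hypothetical values invented for illustration and are not data from the survey; the function names are likewise assumptions.

```python
from statistics import mean, stdev

# Hypothetical responses in percent; NOT data from the survey.
responses = [
    {"term": "likely", "lower": 66, "upper": 90},
    {"term": "likely", "lower": 60, "upper": 100},
    {"term": "very unlikely", "lower": 0, "upper": 10},
    {"term": "very unlikely", "lower": 1, "upper": 15},
]


def interval_width(lower, upper):
    """Width of a probability interval: upper bound minus lower bound."""
    if not 0 <= lower <= upper <= 100:
        raise ValueError(f"Invalid interval: [{lower}, {upper}]")
    return upper - lower


def widths_by_term(rows):
    """Group interval widths by likelihood term."""
    grouped = {}
    for row in rows:
        width = interval_width(row["lower"], row["upper"])
        grouped.setdefault(row["term"], []).append(width)
    return grouped


for term, widths in widths_by_term(responses).items():
    print(f"{term}: M = {mean(widths):.2f}, SD = {stdev(widths):.2f}")
```

The multilevel regressions reported in the text go further than these descriptive summaries, since they account for repeated answers from the same expert.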

We also examined the accuracy of the experts’ estimated probability intervals. Intervals were coded as accurate when they fell within intervals specified in the IPCC guidance note (Mastrandrea et al. 2010; Fig. 1). We computed multilevel linear regressions to predict the accuracy of the experts’ estimated probability intervals, including the likelihood term, confidence level, context, their interactions, and the type and number of disciplines. In all linear models with type of discipline, the earth scientists were used as the reference because they were the largest group. All models also controlled for demographic variables, such as years since PhD and gender. Fifty percent of intervals for the “likely and high confidence” term fell within the interval specified in the IPCC guidance note. This was also true for 18% of intervals for the “likely and medium confidence” term, 42% of intervals for the “very unlikely and high confidence” term, and 20% of intervals for the “very unlikely and medium confidence” term (likelihood term x confidence level: b = 1.27, SE = 0.31, 95%CI [0.66, 1.89]). Probability intervals in response to “medium confidence” were more likely to be accurate when presented in context (confidence level x context: b = 0.68, SE = 0.31, 95%CI [0.07, 1.29]; Table A.9A in Appendix). Earth scientists were more likely to accurately estimate probability intervals than social scientists (social sciences: b = − 0.75, SE = 0.38, 95%CI [− 1.49, − 0.01]). Estimates by experts who self-identified with several disciplines were more accurate than those by experts who self-identified with one discipline (number of disciplines [one vs. several]: b = 0.87, SE = 0.36, 95%CI [0.18, 1.57]; Table A.9B in Appendix). Figure 5 displays cumulative proportions of accurate probability-interval estimates across eight combinations, separated by one discipline vs. several disciplines (see also Fig. A.1B in Appendix).
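The accuracy coding described above can be illustrated with a small sketch. It assumes that “fell within” means both estimated bounds lie inside the guidance interval, endpoints included; the exact coding rule used in the study may differ. The guidance intervals for “likely” (66–100%) and “very unlikely” (0–10%) come from the IPCC guidance note, while the example responses and function names are hypothetical.

```python
# Guidance intervals (percent) for the two likelihood terms used in the survey
# (Mastrandrea et al. 2010).
GUIDANCE = {"likely": (66, 100), "very unlikely": (0, 10)}


def is_accurate(term, lower, upper):
    """True if [lower, upper] lies entirely inside the guidance interval.

    Inclusive endpoints are an assumption made for this sketch.
    """
    guide_lower, guide_upper = GUIDANCE[term]
    return guide_lower <= lower <= upper <= guide_upper


def share_accurate(rows):
    """Proportion of estimated intervals coded as accurate."""
    flags = [is_accurate(r["term"], r["lower"], r["upper"]) for r in rows]
    return sum(flags) / len(flags)


# Hypothetical responses; NOT data from the survey.
hypothetical = [
    {"term": "likely", "lower": 70, "upper": 95},         # inside 66-100
    {"term": "likely", "lower": 60, "upper": 100},        # lower bound too low
    {"term": "very unlikely", "lower": 0, "upper": 10},   # inside 0-10
    {"term": "very unlikely", "lower": 5, "upper": 20},   # upper bound too high
]

print(share_accurate(hypothetical))  # 0.5
```

Under this rule, a wider estimated interval is more likely to spill over a guidance bound and so is less likely to be coded as accurate.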

Fig. 5 Cumulative percentages of experts who accurately estimated probability intervals for four combinations of likelihood terms and confidence levels, presented either out of context or in the context of IPCC report sentences. Probability intervals were estimated by experts who self-identified with one or several disciplines. The x-axis displays the number of accurately estimated probability intervals, which were in line with the numbers specified in the IPCC guidance note (Mastrandrea et al. 2010; Fig. 1). The y-axis displays the cumulative percentage of experts. Cumulative percentages for accurately estimated probability intervals are based on answers by N = 111 experts who identified with one discipline (represented by circles) and N = 39 experts who identified with several disciplines (represented by triangles). Experts indicated their discipline(s) by selecting one or more disciplines from a list of seven. Experts’ estimated probability intervals were coded as accurate when they fell within the probability interval specified in the IPCC guidance note (Mastrandrea et al. 2010) for these terms. Fig. S1 in the Appendix additionally displays cumulative percentages across estimated probability intervals, separated by the seven disciplines

4 Discussion

The IPCC Guidance Note for Lead Authors of the IPCC Fifth Assessment Report on Consistent Treatment of Uncertainties (Mastrandrea et al. 2010) provides one of the most comprehensive guidelines for communicating confidence levels and likelihood terms to experts from different disciplines (Mach et al. 2017). In an expert elicitation survey, we examined how experts from different disciplines contributing to IPCC reports evaluated the IPCC guidance note’s terms of evidence/agreement and how they assigned confidence levels to integrate assessments of evidence and agreement (Mastrandrea et al. 2010). We also studied how they estimated probability intervals, when likelihood terms were combined with different confidence levels, and when combinations were presented out of context or in the context of sentences from IPCC reports. We report on three key findings, provide possible explanations of why these may have occurred, and suggest avenues for further research.

First, we found that experts from different disciplines varied in how they evaluated the IPCC guidance note about confidence (Mastrandrea et al. 2010). Experts with a background in physical sciences were more familiar with the IPCC guidance note, and reported using it more often, compared to experts from other disciplines. Our results relate to findings from previous studies suggesting that experts from different disciplines vary in their understandings of and approaches to scientific evidence and related uncertainties (Adler and Hirsch-Hadorn 2014). For example, evidence reported by social scientists might also reflect uncertainties associated with human choice. Unlike causal processes in natural systems, human choice can also be driven by intention, optimization, strategic interaction (Ha-Duong et al. 2007), or simple rules of thumb (Gigerenzer and Gaissmaier 2011). This was also indicated in experts’ comments to our survey such as: “For social science-related variables core to understanding options for averting, minimizing, and addressing the adverse effects of climate change, it can be a real challenge to apply current IPCC confidence language. Variables like choice, human behavior, values, preferences, perception play a significant role and we struggle to convey the factors that matter from our assessments and how much they matter, in part related to being shoehorned into current confidence language guidelines” (Comment 2; Table A.10 in Appendix). Future research needs to address whether the IPCC guidance note (Mastrandrea et al. 2010) could benefit from adding uncertainty language that applies to findings from different disciplines, including the social sciences and engineering, and that includes differences between theoretical paradigms and methodological traditions in these disciplines. For example, one expert noted “The questions are a bit limiting from a social and engineering science perspective. In many cases it is not about certainty and likelihood but rather exposing or elaborating different perspectives. What is the likelihood that mainstream neoclassic economics is right about things?” (Comment 18, Table A.10 in Appendix; Swart et al. 2009; Grimaldi et al. 2015).

Also, comments from experts confirm that our survey format may have failed to “trap some of the nuances of different attitudes to uncertainty language between researchers associated with quantitative (the majority) and qualitative (the minority) research approaches” (Comment 1; Table A.10 in Appendix). We thus suggest that future research builds on a mixed-methods approach where interviews with experts using qualitative and quantitative research methods (such as in Janzwood 2020, see also Bruine de Bruin and Bostrom 2013) complement quantitative study designs such as this expert elicitation survey. This will enable a better understanding of uncertainties associated with scientific evidence from the social sciences, including how this evidence is produced and communicated (Borie et al. 2021).

Second, when selecting confidence levels, experts gave slightly more weight to evidence than to agreement. This may have occurred because the IPCC guidance note suggests evaluating evidence based on the “type, quality, quantity, and consistency of evidence.” It provides less information about how to evaluate “the degree of agreement” (Mastrandrea et al. 2010). Addressing this issue empirically would require more research on the experts’ perceptions of these tasks, including their interpretations of what scientific agreement actually is, and on differences in how hard it is for them to make evaluations of evidence versus agreement. This research could also examine how different methods of synthesizing evidence inform experts’ assessments of evidence and agreement. For example, evidence can be informed by the transparency and replicability of evidence (Munafo et al. 2017), or by the range of measures employed for answering a specific research question, as reported in systematic reviews. Agreement can be informed by analyses of effect sizes in meta-analyses, or by expert surveys about issues such as scientific consensus (such as Cook et al. 2013).

Third, experts’ estimated probability intervals for likelihood terms were also affected by the presented confidence levels. Specifically, experts’ probability intervals for “likely” and “very unlikely” were wider in response to “medium confidence” than in response to “high confidence.” Possibly, the use of likelihood terms in IPCC reports is therefore shaped—implicitly or explicitly—by confidence in reported scientific evidence (Janzwood 2020; Mach et al. 2017; Fischhoff 2016; Kandlikar et al. 2005).

Estimated intervals were only partially in line with the probability intervals provided in the IPCC guidance note (Fig. 1; Mastrandrea et al. 2010). Accuracy was lower for likelihood terms presented with “medium confidence” than with “high confidence” even though estimated probability intervals were wider for likelihood terms presented with “medium confidence” rather than “high confidence.” This stands in contrast to Bradley et al. (2017), who suggested that larger intervals are more likely to include the true estimated value and are thus associated with higher confidence. Findings from cognitive science suggest that confidence levels accompanying likelihood terms may influence how likely a climate event is perceived to be (Smithson et al. 2012; Hohle and Teigen 2017; Spence and Pidgeon 2010).

Also, for the likelihood term “unlikely,” estimates changed when it was presented out of context, as it is in the IPCC guidance note (e.g., “very unlikely” with “medium confidence”), compared to when it was presented in actual IPCC report sentences (e.g., “It is very unlikely that it [the global mean surface air temperature for the period 2016–2035] will be more than 1.5 °C above the 1850–1900 mean (medium confidence)”). This may be, for example, because experts associate different base rates with the described climate variables (Tversky and Kahneman 1981). In previous studies, members of the general public in different countries were asked to translate different likelihood terms used in IPCC reports into numbers (Budescu et al. 2014; Harris et al. 2017). The findings indicate that it could be difficult to standardize what likelihood terms such as “very likely” actually mean, unless those likelihood terms are presented together with the equivalent intervals such as “very likely (90–100%)” (Budescu et al. 2011; Budescu et al. 2014). Future research needs to address whether such joint presentation formats also help experts and their target audiences to interpret likelihood terms consistently, both out of and in the context of IPCC report sentences.
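
As an illustration of such a joint presentation format, the following Python sketch appends the numerical interval to each likelihood term found in a sentence. The intervals are those of the likelihood scale in the IPCC guidance note (Mastrandrea et al. 2010). The function name `annotate_likelihood`, the regular-expression matching, and the example sentence are hypothetical choices made for illustration only; they are not part of any IPCC tooling or of our survey materials.

```python
import re

# Likelihood scale of the IPCC AR5 guidance note (probability in percent).
LIKELIHOOD_SCALE = {
    "virtually certain": (99, 100),
    "very likely": (90, 100),
    "likely": (66, 100),
    "about as likely as not": (33, 66),
    "unlikely": (0, 33),
    "very unlikely": (0, 10),
    "exceptionally unlikely": (0, 1),
}

# Try longer terms first so that "very unlikely" wins over "unlikely".
_TERMS = sorted(LIKELIHOOD_SCALE, key=len, reverse=True)
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in _TERMS) + r")\b",
    re.IGNORECASE,
)


def annotate_likelihood(sentence: str) -> str:
    """Append the numerical interval after each likelihood term (hypothetical helper)."""

    def add_interval(match: re.Match) -> str:
        term = match.group(1)
        low, high = LIKELIHOOD_SCALE[term.lower()]
        return f"{term} ({low}–{high}%)"

    return _PATTERN.sub(add_interval, sentence)


# Made-up example sentence, not a quotation from an IPCC report.
print(annotate_likelihood("It is very unlikely that X occurs (medium confidence)."))
# prints: It is very unlikely (0–10%) that X occurs (medium confidence).
```

Whether such automatically annotated sentences actually improve the consistency of interpretations among experts and their audiences is an empirical question that the sketch does not address.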

More generally, our findings and previous studies (Mach et al. 2017; Janzwood 2020; Swart et al. 2009; Kandlikar et al. 2005) indicate a general need to understand IPCC authors’ perceptions of how the IPCC guidance note’s different metrics relate to one another. For example, one of our participating experts commented: “I only use the two-phase level of evidence-level of agreement categories. I do not use the % likelihood language or the ‘confidence’ language. I think it is important to scale level of evidence and level of agreement separately and I do not believe they can be squashed into a single confidence statement” (Comment 3, Table A.10 in Appendix). Mach et al. (2017) and Helgeson et al. (2018) suggest that the IPCC guidance note could be simplified, while still providing scope to describe evidence and associated uncertainties from different disciplines.

Future research also needs to address the fact that a guidance note such as the one from the IPCC may have multiple aims. On the one hand, it seeks to provide a nuanced picture of uncertainties associated with evidence from different disciplines. On the other hand, it should improve the communication and uptake of evidence and associated uncertainties in policy- and decision-making, and should increase transparency rather than distrust—as one author commented: “Uncertainty statements undermined the findings for the general public and media, and are often used to fully dismiss the findings of IPCC reports” (Comment 7, Table A.10 in Appendix). This requires research by behavioral scientists about the perception and communication of confidence and likelihood to members of the general public (such as Budescu et al. 2014). It could also motivate future research into how sensitive estimates are to the different presentation formats (numerical, verbal, or visual) from IPCC reports (van der Bles et al. 2019). Carefully evaluated formats may facilitate the communication of scientific evidence and related uncertainties.

Finally, training in the use of uncertainty language (Janzwood 2020) could help experts to apply it to findings from their discipline(s), in particular if they have not previously contributed to IPCC reports. This may have been the case for a substantial number of the Sixth Assessment Report experts who participated in our study. Such training could involve discussing what the concept of likelihood means and how suitable it is for describing findings from different disciplines; and how to differentiate between uncertainties from physical systems and uncertainties from social systems, which may reflect intentionality of human choices (Swart et al. 2009). This type of training could also involve familiarizing experts with evidence about the cognitive mechanisms that drive perceptions and interpretations of uncertainty language (Bruine de Bruin and Bostrom 2013), including confidence judgments (Hertwig 2012) and verbal frames (McKenzie and Nelson 2003). In IPCC reports and related outlets, experts could also jointly present numerical likelihood intervals and verbal likelihood terms (Budescu et al. 2014; Harris and Corner 2011; Budescu et al. 2011). As well as making the intervals easier to understand, this could also reduce variability in interpretations and prevent both communicators and audiences from regressing towards central estimates (Budescu et al. 2014). Expert estimates may also benefit from empirically evaluated estimation methods. These help to avoid overly precise or biased estimates by, for example, pooling individual estimates (Haran et al. 2010; Park and Budescu 2015; Mach et al. 2017; Litvinova et al. 2020).
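
To make the idea of pooling individual estimates concrete, here is a minimal Python sketch that combines several experts’ probability intervals by taking the median of the lower bounds and the median of the upper bounds. This aggregation rule, the function name `pool_intervals`, and the example numbers are illustrative assumptions; the studies cited above evaluate a range of aggregation methods, and the sketch does not reproduce any one of them.

```python
from statistics import median


def pool_intervals(intervals):
    """Pool (lower, upper) probability intervals, given in percent, into one interval.

    Hypothetical helper: uses the median of the lower bounds and the
    median of the upper bounds as the pooled interval.
    """
    if not intervals:
        raise ValueError("need at least one interval")
    for low, high in intervals:
        if not 0 <= low <= high <= 100:
            raise ValueError(f"invalid interval: ({low}, {high})")
    lowers = [low for low, _ in intervals]
    uppers = [high for _, high in intervals]
    return median(lowers), median(uppers)


# Made-up estimates from three experts for the term "likely".
estimates = [(60, 90), (70, 100), (50, 95)]
print(pool_intervals(estimates))  # prints: (60, 95)
```

Medians are used here because they are less sensitive to a single extreme estimate than means; other pooling rules may be more appropriate depending on the elicitation design.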

In conclusion, the IPCC guidance note (Mastrandrea et al. 2010) provides a first step towards helping experts from different disciplines to communicate scientific evidence and related uncertainties transparently and congruently to policymakers and the general public.

Data availability

To ensure the participants’ anonymity, the dataset generated during the current study is not publicly available; the participants could be identified from their field, the chapter(s) of the IPCC report to which they contributed, and their country of residence. The dataset is available from the corresponding author on reasonable request.

Materials availability

The full survey is included in the Appendix.

References

Adler CE, Hirsch-Hadorn G (2014) The IPCC and treatment of uncertainties: topics and sources of dissensus. Wiley Interdiscip Rev Clim Chang 5:663–676. https://doi.org/10.1002/wcc.297

Anttila S, Persson J, Vareman N, Sahlin NE (2018) Challenge of communicating uncertainty in systematic reviews when applying GRADE ratings. Evid Based Med 23:125–126. https://doi.org/10.1136/bmjebm-2018-110894

Bates D et al (2022) Package ‘lme4’. https://github.com/lme4/lme4/

Beyth-Marom R (1982) How probable is probable? A numerical translation of verbal probability expressions. J Forecast 1:257–269. https://doi.org/10.1002/for.3980010305

Borie M, Mahony M, Obermeister N, Hulme M (2021) Knowing like a global expert organization: comparative insights from the IPCC and IPBES. Glob Environ Chang 68:102261. https://doi.org/10.1016/j.gloenvcha.2021.102261

Bradley R, Helgeson C, Hill B (2017) Climate change assessments: Confidence, probability, and decision. Philos Sci 84:500–522. https://doi.org/10.1086/692145

Bruine de Bruin W, Bostrom A (2013) Assessing what to address in science communication. Proc Natl Acad Sci 110:14062–14068. https://doi.org/10.1073/pnas.1212729110

Budescu DV, Por HH, Broomell SB (2011) Effective communication of uncertainty in the IPCC reports. Clim Change 113:181–200. https://doi.org/10.1007/s10584-011-0330-3

Budescu DV, Por HH, Broomell SB, Smithson M (2014) The interpretation of IPCC probabilistic statements around the world. Nat Clim Chang 4:508–512. https://doi.org/10.1038/nclimate2194

Budescu DV, Wallsten TS (1985) Consistency in interpretation of probabilistic phrases. Organ Behav Hum Decis Process 36:391–405. https://doi.org/10.1016/0749-5978(85)90007-X

Cokely E, Galesic M, Schulz E, Ghazal S, Garcia-Retamero R (2012) Measuring risk literacy: the Berlin Numeracy Test. Judgm Decis Mak 7:25–47. https://doi.org/10.1037/t45862-000

Cook J, Nuccitelli D, Green SA, Richardson M, Winkler B, Painting R, Way R, Jacobs P, Skuce A (2013) Quantifying the consensus on anthropogenic global warming in the scientific literature. Environ Res Lett 8:024024. https://doi.org/10.1088/1748-9326/8/2/024024

Fischhoff B (2016) Conditions for sustainability science. Environment 58:20–24. https://doi.org/10.1080/00139157.2016.1112168

Galesic M, Kause A, Gaissmaier W (2016) A sampling framework for uncertainty in individual environmental decisions. Top Cogn Sci 8:242–258. https://doi.org/10.1111/tops.12172

Gigerenzer G, Gaissmaier W (2011) Heuristic decision making. Annu Rev Psychol 62:451–482. https://doi.org/10.1146/annurev-psych-120709-145346

Grimaldi P, Lau H, Basso MA (2015) There are things that we know that we know, and there are things that we do not know we do not know: confidence in decision-making. Neurosci Biobehav Rev 55:88–97. https://doi.org/10.1016/j.neubiorev.2015.04.006

Ha-Duong M, Swart R, Bernstein L, Petersen A (2007) Uncertainty management in the IPCC: Agreeing to disagree. Glob Environ Chang 17:8–11. https://doi.org/10.1007/s10584-008-9444-7

Haran U, Moore DA, Morewedge CK (2010) A simple remedy for overprecision in judgment. Judgm Decis Mak 5:467–476. https://doi.org/10.1037/e615882011-200

Harris AJL, Corner A (2011) Communicating environmental risks: clarifying the severity effect in interpretations of verbal probability expressions. J Exp Psychol Learn Mem Cogn 37:1571–1578. https://doi.org/10.1037/a0024195

Harris AJL, Corner A, Xu JM, Du XF (2013) Lost in translation? Interpretations of the probability phrases used by the Intergovernmental Panel on Climate Change in China and the UK. Clim Change 121:415–425. https://doi.org/10.1007/s10584-013-0975-1

Harris AJL, Por HH, Broomell SB (2017) Anchoring climate change communications. Clim Change 140:387–398. https://doi.org/10.1007/s10584-016-1859-y

Helgeson C, Bradley R, Hill B (2018) Combining probability with qualitative degree-of-certainty metrics in assessment. Clim Change 149:517–525. https://doi.org/10.1007/s10584-018-2247-6

Hertwig R (2012) Tapping into the wisdom of the crowd–with confidence. Science 336:303–304. https://doi.org/10.1126/science.1221403

Hohle S, Teigen KH (2017) More than 50% or less than 70% chance: pragmatic implications of single bound probability estimates. J Behav Decis Mak 31:138–150. https://doi.org/10.1002/bdm.2052

Howe LC, MacInnis B, Krosnick JA, Markowitz EM, Socolow R (2019) Acknowledging uncertainty impacts public acceptance of climate scientists’ predictions. Nat Clim Chang 9:863–867. https://doi.org/10.1038/s41558-019-0587-5

Janzwood S (2020) Confident, likely, or both? The implementation of the uncertainty language framework in IPCC special reports. Clim Change 162:1655–1675. https://doi.org/10.1007/s10584-020-02746-x

Kandlikar M, Risbey J, Dessai S (2005) Representing and communicating deep uncertainty in climate-change assessments. Comptes Rendus Geosci 337:443–455. https://doi.org/10.1016/j.crte.2004.10.010

Kause A et al (2021) Communications about uncertainty in scientific climate-related findings: a qualitative systematic review. Environ Res Lett 16:053005. https://doi.org/10.1088/1748-9326/abb265

Kuznetsova A, Brockhoff PB, Christensen RHB (2017) Package ‘lmerTest’. https://cran.r-project.org/web/packages/lmerTest/index.html

Litvinova A, Herzog SM, Kall A, Hertwig R (2020) How the “wisdom of the inner crowd” can boost accuracy of confidence judgments. Decision 7:183–211. https://doi.org/10.1037/dec0000119

Mach KJ, Mastrandrea MD, Freeman PT, Field CB (2017) Unleashing expert judgment in assessment. Glob Environ Chang 44:1–14. https://doi.org/10.1016/j.gloenvcha.2017.02.005

Mastrandrea MD, Field CB, Stocker TF, Edenhofer O, Ebi KL, Frame DJ, Held H, Kriegler E, Mach KJ, Matschoss PR, Plattner GK (2010) Guidance note for lead authors of the IPCC fifth assessment report on consistent treatment of uncertainties. https://www.ipcc.ch/site/assets/uploads/2017/08/AR5_Uncertainty_Guidance_Note.pdf

Mastrandrea MD, Mach KJ (2011) Treatment of uncertainties in IPCC Assessment Reports: past approaches and considerations for the Fifth Assessment Report. Clim Change 108:659–673. https://doi.org/10.1007/s10584-011-0177-7

Mastrandrea MD et al (2011) The IPCC AR5 guidance note on consistent treatment of uncertainties: a common approach across the working groups. Clim Change 108:675–691. https://doi.org/10.1007/s10584-011-0178-6

McKenzie CRM, Nelson JD (2003) What a speaker’s choice of frame reveals: reference points, frame selection, and framing effects. Psychon Bull Rev 10:596–602. https://doi.org/10.3758/BF03196520

Molina T, Abadal E (2021) The evolution of communicating the uncertainty of climate change to policymakers: a study of IPCC synthesis reports. Sustainability 13:2466. https://doi.org/10.3390/su13052466

Morton TA, Rabinovich A, Marshall D, Bretschneider P (2011) The future that may (or may not) come: how framing changes responses to uncertainty in climate change communications. Glob Environ Chang 21:103–109. https://doi.org/10.1016/j.gloenvcha.2010.09.013

Munafò MR, Nosek BA, Bishop DVM, Button KS, Chambers CD, Percie Du Sert N, Simonsohn U, Wagenmakers EJ, Ware JJ, Ioannidis JPA (2017) A manifesto for reproducible science. Nat Hum Behav 1:0021. https://doi.org/10.1038/s41562-016-0021

Ogunbode CA, Doran R, Böhm G (2020) Exposure to the IPCC special report on 1.5°C global warming is linked to perceived threat and increased concern about climate change. Clim Change 158:361–375. https://doi.org/10.1007/s10584-019-02609-0

Okan Y, Janssen E, Galesic M, Waters EA (2019) Using the short graph literacy scale to predict precursors of health behavior change. Med Decis Mak 39:183–195. https://doi.org/10.1177/0272989X19829728

Park S, Budescu DV (2015) Aggregating multiple probability intervals to improve calibration. Judgm Decis Mak 10:130–143 (http://journal.sjdm.org/14/141223/jdm141223.pdf)

Patt A, Dessai S (2005) Communicating uncertainty: lessons learned and suggestions for climate change assessment. Comptes Rendus Geosci 337:425–441. https://doi.org/10.1016/j.crte.2004.10.004

Pidgeon N, Fischhoff B (2011) The role of social and decision sciences in communicating uncertain climate risks. Nat Clim Chang 1:35–41. https://doi.org/10.1038/nclimate1080

Smithson M, Budescu DV, Broomell SB, Por HH (2012) Never say ‘not’: impact of negative wording in probability phrases on imprecise probability judgments. Int J Approx Reason 53:1262–1270. https://doi.org/10.1016/j.ijar.2012.06.019

Spence A, Pidgeon N (2010) Framing and communicating climate change: the effects of distance and outcome frame manipulations. Glob Environ Chang 20:656–667. https://doi.org/10.1016/j.gloenvcha.2010.07.002

Swart R, Bernstein L, Ha-Duong M, Petersen A (2009) Agreeing to disagree: uncertainty management in assessing climate change, impacts and responses by the IPCC. Clim Change 92:1–29. https://doi.org/10.1007/s10584-008-9444-7

Tversky A, Kahneman D (1981) The framing of decisions and the psychology of choice. Science 211:453–458. https://doi.org/10.1126/science.7455683

van der Bles AM et al (2019) Communicating uncertainty about facts, numbers and science. R Soc Open Sci 6:181870. https://doi.org/10.1098/rsos.181870

Wickham H, Chang W (2019) Package ‘ggplot2’. https://ggplot2.tidyverse.org

Yohe G, Oppenheimer M (2011) Evaluation, characterization, and communication of uncertainty by the Intergovernmental Panel on Climate Change: an introductory essay. Clim Change 108:629–639. https://doi.org/10.1007/s10584-011-0176-8

Acknowledgements

We thank all participating experts, as well as Michael Mastrandrea, Jan Minx, Piers Forster, Hermann Held, and Lindsay Stringer for their advice during the conceptualization of the survey, Michael Mastrandrea and Jan Minx specifically for comments on a previous version of the manuscript, Daniel Cook for supporting us during data collection, and Justin Hopper and Jen Metcalfe for editing the manuscript.

Funding

Open Access funding enabled and organized by Projekt DEAL. The research was funded by the Swedish Research Council Formas Linnaeus grant LUCID, Lund University Centre of Excellence for Integration of Social and Natural Dimensions of Sustainability (259–2008-1718; to AK, JP, HT, LO, and NV); the Swedish Foundation for Humanities and Social Sciences’ VBE program, Science and Proven Experience (M14-0138:1; to WBdB, JP, NV, and AW); the Center for Climate and Energy Decision Making (CEDM) through a cooperative agreement between the National Science Foundation and Carnegie Mellon University (SES–0949710 and SES–1463492; to WBdB).

Author information

Contributions

AK and WBdB developed the initial survey. Input was provided by all team members. AK implemented the survey. LO and AK distributed the survey. AK analyzed the survey data and wrote a first draft of the manuscript. Revisions were made by all team members.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval

Because this survey was an expert elicitation, the chair of the third author’s ethical review board confirmed that no ethical review was necessary. The ethical review board of the first author’s previous university approved the survey.

Consent to participate and consent for publication

Consent was sought by informing participants that their answers were anonymous and voluntary, and that they could end their participation at any time.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kause, A., Bruine de Bruin, W., Persson, J. et al. Confidence levels and likelihood terms in IPCC reports: a survey of experts from different scientific disciplines. Climatic Change 173, 2 (2022). https://doi.org/10.1007/s10584-022-03382-3
