Abstract

In the last decade, research on the use of artificial intelligence technologies in education has steadily grown. Many studies have demonstrated the potential of these technologies to improve school administration processes, enhance students' learning experiences, simplify teachers' daily tasks, and broaden opportunities for lifelong learning. However, the enthusiasm surrounding these possibilities may overshadow the ethical challenges posed by these systems. This systematic literature review is designed to explore the ethical dimensions surrounding the utilisation of these technologies within the defined timeframe (2011–2022) in the field of education. It undertakes a thorough analysis of various applications and objectives, with a particular focus on pinpointing any inherent shortcomings within the existing body of literature. The paper discusses how cultural differences, inclusion, and emotions have been addressed in this context. Finally, it explores the capacity building efforts that have been put in place, their main targets, as well as guidelines and frameworks available for the ethical use of these systems. This review sheds light on the research's blind spots and provides insights to help rethink education ethics in the age of AI. Additionally, the paper explores implications for teacher training, as educators play a critical role in ensuring the ethical use of AI in education. This review aims to stimulate ethical debates around artificial intelligence that recognise it as a non-neutral tool, and to view it as an opportunity to strengthen the debates on the ethics of education itself.


Introduction

In the late 1950s, artificial intelligence (AI) emerged as both a promise and a threat based on the expectation of having a powerful agent that would facilitate everyday tasks while exercising its decision-making power over people. As an autonomous, adaptive, and interactive human-designed software system, AI today can make decisions in complex situations relying on perception, interpretation, and reasoning based on data (Dignum, 2021). AI in education (AIED), in particular, is being used in administration, to track the goals of the school in conjunction with policies, to pursue student interests, to assist teachers in daily tasks, and to facilitate lifelong learning (Miao et al., 2021). Promises surrounding these systems include identifying and enhancing competencies and talents, freeing up teachers from workload, addressing student diversity, predicting underachievement in students and institutions, facilitating the transition to professional life, enabling access to cheaper and better-quality education for poorer people, and more engaging learning experiences. With the COVID-19 pandemic, debates about the role of these resources in facilitating online assessment and student learning experience have increased (García-Peñalvo et al., 2021). For example, a study conducted in Romania showed that due to the educational needs caused by the pandemic, the use of AI-driven platforms increased between 2019 and 2020 among both teachers and students, even in less developed geographic areas (Pantelimon et al., 2021). Discussions on whether AI can offer a fair learning experience in health emergencies are going on. The suitability of AIED for cases of forced migration resulting from environmental or social crises may also emerge as a concern in the near future, underscoring the pressing need for discussions on AIED ethics. 
So, what are the trade-offs between the opportunities and solutions these technologies can offer to some and the challenges posed by their design, implementation practices and non-universality in terms of access? And what type of changes can we expect in education, and in the role of its actors, when these technologies are introduced into pedagogical practice?

Lurking within all these advances are ideologies, fantasies, and projections about how the future should be or is likely to be (Mouta et al., 2020). As early as 1960, Weiner (1960) observed that our ability to keep up with and understand what is happening decreases as machines become more powerful and technologies become smarter. Therefore, thinking about AI is expected to consider compromises between ethics and the movements of transhumanism in order to understand how AI participates in the pursuit of perfection that Western philosophy sees as part of humanity's innate nature (Byk, 2021). And once perfectibility is considered the ultimate social and human goal of education, are intelligent machines given a free pass in the process? Ethical reasoning seems able to offer sustainable conceptual support for such considerations and possible transitions, especially in education, where the “when, how, and to what end” of the pedagogical use of AI should always be asked (Holmes et al., 2021).

When thinking about AIED, it is important to understand what concept of intelligence lives on and has prevailed in these AI advances. Is it inclusive enough? Is it ethically considered to suit learning diversity and respect emotional expression? And why is intelligence the artificial layer we are promoting and what message does it bring to education? Cave (2020) argues that intelligence is value-laden, with links to colonialism, racism and patriarchy. Another reason for concern about the rapid advances in AI has to do with the complexity that has been added to discussions about the digital divide: now the gap is not just between users, but between those generating the data (users who supposedly should own it) and the tech corporations that process it (those that objectively own it) (Abboud et al., 2020). Disparities are no longer just about access, but about ownership and rights, and the abilities to deliver, process, and access particular data. According to Miao et al. (2021), this divide encompasses developed and developing countries, socio-economic groups within countries, owners and users of technologies, and those whose jobs are enhanced by AI as opposed to those who may be replaced by it.

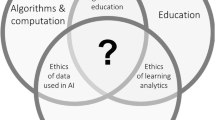

An analysis of the role of education in AI policies conducted by Schiff (2021) found that global policy does not address the ethics of AIED and that it mainly focuses on education as an instrumental strategy to guarantee the supply of AI talent to ensure manpower. There is also a shortage of education and training opportunities for teachers, parents, and the general public on this matter (Miao et al., 2021). Moreover, a systematic review approach of AIED from 1970 to 2020 presents the main research topics of the field: 1) adaptive learning and personalisation, 2) deep learning and machine learning algorithms used online, 3) educational human-AI interaction, 4) AI-generated data educational use, and 5) AI in higher education. Ethics on AIED seemed to be an almost absent field of research (Bozkurt et al., 2021). Having AI systems detecting students’ emotions could support emotional preparedness for the educational process. However, this may bring concerns related to emotional privacy, the elicitation of emotions, and virtual connections between a person and a digital assistant (Hudlicka, 2016). Remembering children’s rights is a call to reflect on educational ethics through a cross-cultural and mutual perspective in a globalised world (Nizhnikov, 2018). Thus, AIED studies are expected to discuss the decisions made regarding pedagogy, purposes of learning, the role of technology in relation to teachers, and access to education (Holmes et al., 2021). According to Weber (2020), AIED ethics should take into account: 1) legal frameworks for the use of AIED in learning institutions; 2) cloud-based data clear terms for privacy, security and trust; 3) power asymmetries and misuse by malicious actors; 4) equity and social justice; 5) machine liability and accountability; and 6) educational programmes for students on technology ethical dimensions.

The aim of these reflections is to prioritise contextual pedagogical factors in any potential reconfiguration of learning practices with the emergence of AIED. How can ethical frameworks for the use of these systems incorporate dialogic ethics that reflect the principles of participation from diverse educational communities? Is it possible to incorporate these technologies in a manner that not only supports personal ethical development but also fosters an environment in which educational stakeholders can embrace and respect the diverse interests and entities within the educational landscape? This encompasses not only various educational systems but also extends to the different stakeholders such as governance bodies, the technology industry, and policy-makers. Can the integration of these technologies create conditions that cater to the needs and values of diverse educational communities, balancing interests, while promoting ethical growth? To explore these issues, the following lines will examine published literature on AIED and ethics from 2011 to 2022, considering conceptual assumptions and the impact assessment of programmes that use AI technologies.

Method

Following the discipline of a systematic literature review (SLR), this research screens the most relevant data from the existing literature, responding to pre-defined eligibility criteria (Ramírez-Montoya & García-Peñalvo, 2018). The next lines acknowledge the nature of the debates on AIED ethics and how ethics is screened in AIED studies.

Planning

As shown in Table 1, the planning phase began with four mapping questions (MQs) and a set of four inclusion and exclusion criteria to globally screen what has been published in AIED and ethics over the last 10 years. To ensure a comprehensive review considering the global scope of this study, the researchers’ language proficiency was taken into account and multiple languages were included. Due to the time frame for completing the review, it was not possible to add more than four languages, as translation would depend on resources not immediately available. To allow for a more in-depth analysis, five research questions (RQs) were added. These research questions are intricately connected to the themes and concerns raised in the introduction. They provide the necessary direction and focus to fulfill the paper's primary motivation, which is a thorough examination of the shortcomings in the literature, limited to the chosen timeframe, regarding the ethical aspects of AIED and their impact on educational practices. These questions offer a structured approach to identifying current applications and the challenges they may bring (RQ1), disparities in terms of data ownership, access, implementation quality and contextualisation (RQ2), emotion surveillance (RQ3), focus and targets of capacity building for an ethical use of AIED (RQ4) and available regulations to safeguard the compliance of ethical principles in AIED design and implementation (RQ5). As stated in the introduction, the knowledge obtained from these RQs would be beneficial for promoting ethical responsibility through dialogic practice, as it addresses several unresolved aspects in AIED use, including the balance between access and quality (of implementation), the tension between universality and context, the interplay of cognition and emotions, and the normative guidance provided by targeted training and regulations.

The PICOC method (Petticrew & Roberts, 2008) was used to accurately structure this review. The deliberate use of broader keywords in this SLR was necessary because including research question terms directly in the search strings limited the results. Broader keywords were chosen to encompass the terminology variations used by authors when addressing the same research questions. This approach allowed for a comprehensive exploration of the AIED ethics field and the flexibility to fine-tune the search based on the results obtained. Considering the comprehensive focus of the research, Scopus and Web of Science (WoS) were the selected databases, as they are all-inclusive digital libraries that cover a wide range of academic disciplines within the scope of this study; they were also selected because they support searches with logical expressions and index both full-length and field-specific articles.

Conducting and Analysing

To manage the final cluster of articles, the results were gathered into a spreadsheet (cf. https://docs.google.com/spreadsheets/d/1u6ArXbQ5w4bxhHbKAcRUCZLUSACK0AoG/edit?pli=1#gid=1005218435), which presents the analysis in several stages (cf. Figure 1). In the identification phase, 250 publications were retrieved from Scopus and 249 from WoS. The duplicate papers were eliminated, and a selection of 410 papers was made based on their title and abstract. For the preliminary screening phase, the alignment of the paper's topic with the research goal, the PICOC elements (population, intervention, comparison, outcomes, and context), the paper's implicit (e.g., using terms such as “AIED challenges”, “FATE”) or explicit relation to AIED ethics, and the fulfilment of the inclusion/exclusion criteria (cf. Table 1) were taken into account. If in doubt, the entire article was read. This preliminary screening phase (abstracts) enabled the gathering of 156 contributions. After this stage, a quality assessment screening was conducted for several purposes, including ensuring relevance (i.e., that the selected papers directly align with the research goals, objectives, and topic), assessing methodological rigor (i.e., whether the selected papers meet specific quality criteria), evaluating reliability (using a predefined scoring system to measure the assessment's reliability), ensuring a high-quality and relevant sample selection, and verifying data integrity (i.e., that the selected papers are reliable and meet established quality standards). In the eligibility stage, the retrieved articles were thoroughly read and analysed according to the five-criteria quality assessment checklist presented in Table 2. Three options were available for each criterion: Yes (1 point), Partially (0.5 point), and No (0 point). Five points were awarded to items that fully met the defined quality parameters. 
All papers with four or more points were considered; since four is the cut-off point, all papers with a lower score were excluded from the final sample and from the corresponding RQs. This quality assessment phase allowed the collection of 83 papers. Finally, 24 articles were added to this cluster: they were first or second references, chosen whenever they engaged in relevant work surrounding the RQs, particularly on topics requiring a deeper understanding (e.g., AIED and cultural differences) or further examples (e.g., AIED technologies in use). The reasons for choosing these papers are presented in Table 3. As a result of this process, 107 papers make up this sample.
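
The scoring rule described above can be illustrated with a minimal Python sketch. It assumes only what the text states: five criteria, the Yes/Partially/No point values, and a cut-off of four points. The function names and the example answers are hypothetical, and the actual criteria are those listed in Table 2, which are not reproduced here.

```python
# Points per answer, as stated in the text: Yes = 1, Partially = 0.5, No = 0.
POINTS = {"yes": 1.0, "partially": 0.5, "no": 0.0}
N_CRITERIA = 5   # the checklist in Table 2 has five criteria
CUT_OFF = 4.0    # papers with four or more points are retained


def quality_score(answers):
    """Sum the points for one paper's answers to the five criteria."""
    answers = list(answers)
    if len(answers) != N_CRITERIA:
        raise ValueError(f"expected {N_CRITERIA} answers, got {len(answers)}")
    return sum(POINTS[a.lower()] for a in answers)


def is_included(answers):
    """A paper stays in the sample when its score reaches the cut-off."""
    return quality_score(answers) >= CUT_OFF


# Hypothetical papers, for illustration only.
borderline = ["Yes", "Yes", "Yes", "Partially", "Partially"]   # 4.0 points
excluded = ["Yes", "Yes", "Yes", "Partially", "No"]            # 3.5 points
print(quality_score(borderline), is_included(borderline))
print(quality_score(excluded), is_included(excluded))
```

Under this rule a paper can miss at most one point in total across the five criteria, for example two "Partially" answers or a single "No", and still be retained.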

PRISMA 2020 flow diagram for the SLR process: identification of AIED ethics papers. Note: Page MJ, McKenzie JE, Bossuyt PM, Boutron I, Hoffmann TC, Mulrow CD, et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ 2021;372:n71. https://doi.org/10.1136/bmj.n71. For more information, visit: http://www.prisma-statement.org/
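
As a quick consistency check, the counts reported for each stage of the selection process can be tallied in a few lines of Python. The figures are those given in the text; the stage labels and the `retention` helper are hypothetical names introduced for this illustration.

```python
# Paper counts per stage, as reported in the text.
stages = [
    ("retrieved (Scopus 250 + WoS 249)", 250 + 249),
    ("after duplicate removal and title/abstract selection", 410),
    ("after preliminary screening", 156),
    ("after quality assessment", 83),
]
ADDED_FROM_REFERENCES = 24  # first or second references added at the end

final_sample = stages[-1][1] + ADDED_FROM_REFERENCES


def retention(stage_list):
    """Return (label, count, share of the previous stage) for each stage."""
    rows = []
    previous = None
    for label, count in stage_list:
        share = None if previous is None else count / previous
        rows.append((label, count, share))
        previous = count
    return rows


for label, count, share in retention(stages):
    suffix = "" if share is None else f" ({share:.0%} of previous stage)"
    print(f"{label}: {count}{suffix}")
print(f"final sample: {final_sample}")
```

The final line confirms that the 83 papers retained after quality assessment plus the 24 added references give the sample of 107 papers.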

Mapping Analysis

MQ1. What has been the evolution of the number of research articles concerning ethics in AIED since 2011?

As Fig. 2 shows, between 2011 and 2018, the number of publications on this subject exhibited some fluctuations. This period can be seen as a foundational phase, where discussions around the ethical dimensions of AI in education began to take shape. However, starting in 2019, there was a noticeable shift in the trajectory. AIED research began to experience exponential growth, with a remarkable surge in the number of articles in 2021, followed by a small retraction in 2022. This explosion in publications can be attributed to several factors. Firstly, AI technologies continued to advance rapidly, permeating various educational settings. As these technologies gained traction, there was a corresponding surge of interest in their ethical implications. Additionally, the global adoption of AI in education became more widespread, with researchers and educators worldwide focusing on the ethical aspects that should accompany its integration. This broader reach led to a surge in research outputs, as experts from various geographies contributed to the discourse. Moreover, the spotlight on AI ethics in mainstream media and entertainment further fuelled academic research and discourse in the field of AIED.

MQ2. Who are the key authors in AIED ethics?

Identifying key contributors makes it possible to recognise those who play a significant role in shaping the discourse within this domain. It provides valuable context for understanding the methodologies employed in these studies and the perspectives from which they were developed. Furthermore, it helps researchers to track experts in this specific field of study. In this SLR, Holmes, W. has contributed the most publications with 4, followed by Tuomi, I. with 3. Additionally, several authors have two publications each, including Cukurova, M., Dignum, V., Luckin, R., Mouta, A., Pinto Llorente, A. M., Shum, S. B., and Torrecilla Sánchez, E. M.

MQ3. Which countries exhibit the most significant productivity in terms of disseminating conceptual or theoretical approaches, frameworks, or interventions in the realm of AIED ethics?

The inclusion of this mapping question aligns with the need emphasised in the introduction to delve into how cultural differences are addressed within the context of AIED ethics. Figure 3 shows that 47% of the publications originate from the United Kingdom, with Finland contributing 20%. Spain and Sweden account for 13% of the papers collectively, while Australia contributes 7%. Although the majority of papers in AIED during the specified research period can be attributed to the USA, it is noteworthy that, when the ethical dimension is introduced, a European discourse predominates. This can be understood in light of Europe's long history of philosophical and ethical inquiry, ranging from the works of Enlightenment thinkers to contemporary ethical philosophy. These traditions often emphasise concepts such as human rights, individual autonomy, and the ethical responsibilities of technology developers and educators. These values align with the European Union's General Data Protection Regulation (GDPR), which places a strong emphasis on data privacy and ethics.

The concentration of research in AIED ethics within Europe suggests the presence of regional perspectives and priorities in addressing ethical considerations in educational technology. This also underscores the necessity for a global dialogue that encompasses diverse cultural viewpoints, as the ethical implications of AIED extend beyond geographical boundaries.

MQ4. From which subject areas do the main studies in AIED ethics come?

The analysis of subject areas relied on the International Standard Classification of Education (ISCED), developed by UNESCO in 2012. This classification system encompasses 25 fields of education organised into 9 broad groups. The main areas that ethical discussions surrounding the use of AIED relate to are shown in Fig. 4: Science (e.g., computing, life sciences, mathematics) takes the lead, with over 50%. It is followed by Education where 32% of articles may be found. Engineering, Manufacturing, and Construction (e.g., architecture, engineering, manufacturing) follow with 5%. Health and Welfare (e.g., medicine, nursing, social work) and Humanities and Arts (e.g., languages, literature, history, philosophy, performing and visual arts, cultural studies) each cover 4% of the total number of articles. Finally, Social Sciences, Business and Law (e.g., economics, journalism, political science, psychology, sociology) collectively have a total of 2% of the articles.

The diverse distribution of ethical discussions across academic domains in AIED underscores the need for a comprehensive ethical framework that addresses the unique challenges and concerns within each field. The dominant discourse in science, particularly computing, comprising over 50% of ethical discussions, highlights the central role of technology and data-driven approaches in AIED. While science, notably computing, dominates the discourse, it's crucial to broaden the conversation to ensure that other areas are not overlooked. The scarcity of ethical discussions in humanities and arts suggests a potential gap in recognising the human-centred dimension of learning and pedagogy.

SLR Research Analysis

The results obtained after analysing and studying the articles supporting this SLR are presented below according to the research questions.

RQ1. Which AIED technologies, targets and applications are being considered and what challenges do they pose in general?

AI technologies and the overall ethical challenges they pose have been the second most studied topic of research on the ethics of AIED between 2011 and 2022. Table 4 summarises 36% of the studies in this SLR, presenting AIED technologies, their targets, the primary educational purposes, and potential ethical challenges. In terms of targets, AIED technologies are being applied to a wide spectrum, including school institutions (K-12) and universities, as well as individual students and teachers. This highlights the broad scope of AIED's influence within the educational ecosystem. AIED applications are designed to serve a multitude of educational purposes. The claims include personalised learning, meta-cognition improvement, support for students with learning disabilities, enhancement of writing skills, promotion of STEM subjects and computer programming, virtual schooling, and collaborative learning experiences. These applications are tailored to meet diverse educational needs and objectives.

The risks associated with the use of such technologies are frequently overlooked in the literature, revealing a substantial gap in understanding how automated measurement processes could potentially compromise well-being (Burr et al., 2020). For instance, while profiling higher education students may seem promising in terms of career advancement, it can also be viewed as manipulative, as it fails to acknowledge individuals' freedom of expression and choice (Tundrea, 2020). Sensitive information can also be inferred from seemingly innocuous data, as activity logs can be leveraged to deduce someone's political beliefs, ethnic identity, health status, or sexual orientation (Tundrea, 2020). Some authors draw attention to the potential conflict between the obligation of higher education institutions to fulfil their mandate of ensuring learning and their fiduciary duty to act ethically (Prinsloo & Slade, 2017).

While doubts persist among neuroscientists regarding the potential of certain technologies to improve learning, the risks of inaccurate results or unintended consequences linked to electroencephalography (EEG) remain significant. Despite these concerns, EEG sensors were already incorporated into headbands for detecting students' brain activity (Miao et al., 2021). Therefore, AI has faced criticism for being "dehumanising" in this context, as it often promotes prescriptive teaching with minimal interaction and automated pathways, ultimately diminishing students' agency (Miao et al., 2021).

Another worrisome issue pertains to children aged four to ten who perceive social robots as trustworthy. For example, a talking doll might influence children to reconsider their moral judgments (Williams et al., 2018). This raises further concerns about whether these robots are primarily profit-driven. Additionally, questions regarding privacy emerge as AI delves into children's socio-emotional and cognitive characteristics, as well as aspects of their home environment. Furthermore, transparency issues arise regarding who has access to this data (Mohammed & Watson, 2019).

The significance of robust assessment tools has been underscored by the COVID-19 pandemic. Gudiño Paredes et al. (2021) conducted a mixed-methods study to examine the impact of remote AI-powered proctored exams on the learning processes and academic integrity of online graduate students. This technology offers facial recognition, audio analysis, eye movement tracking, and object/face detection in the surroundings. While the results indicated a substantial reduction in dishonesty, students reported that they felt compelled not to cheat because they were being observed, rather than through internal motivation or a personal reflective process. They also expressed concerns about privacy and anxiety during assessments conducted in this manner.

Regarding teachers, the possibility of letting these AI technologies automate ineffective pedagogical practices (based on incomplete or biased data, for example) is also a reason for concern. In the long run, AI-based education may have the potential to disempower teachers (Miao et al., 2021). Another concern revolves around the development of learners' cyborg identities as they engage with AI, the changing relationship between humans and AI systems in a posthuman hybrid dynamic, and how it may impact teachers and students (Adams et al., 2022). Finally, most studies assessing the effects of technology are typically carried out by the creators of that technology, often affiliated with commercial entities and involving a limited number of participants (Holmes & Tuomi, 2022).

These insights shed light on the transformative potential of AI in education, recognising how important it is to consider the ethical dimensions that come into play when implementing these technologies.

RQ2. How is AIED considering cultural differences and inclusion?

Although “the greatest good for the greatest number” is a concern when introducing these technologies, what can be considered good in an educational context is a challenging question. Equity, cultural and interpersonal differences, which are the leitmotiv of 17% of the papers under analysis, are an issue when it comes to massive technologies that may not easily adapt and respond to the specifics of the context and the people who are meant to use them, potentially undermining the fairness and fundamental rights of individuals. Table 5 shows that the 18 articles on this topic included in the SLR raise four distinct categories of ethical challenges pertaining to cultural and inclusion responsiveness. The “intercultural” challenge has to do with these systems’ capacity to accommodate different cultural backgrounds and learners’ values. Approximately 53% of the papers within this subject area highlight that AI solutions for educational purposes may not sufficiently consider cross-cultural variations. Another challenge has to do with “cultural realism” and the difficulty of representing the diversity of learners’ particular physical characteristics. Some authors argue that AIED is best suited to Western, educated, industrialised, wealthy, and democratic nations (Nye, 2015; Ogan et al., 2015). For example, limited broadband access will leave people out of data sets, and AI will be unintentionally biased against them (Miao et al., 2021). There is also the issue of reported misidentifications by facial recognition software in relation to darker skin tones, which calls for suitable workaround solutions or the omission of facial recognition altogether (Coghlan et al., 2021). Sanusi and Olaleye (2022) discuss the role of cultural competence and ethics in AI education, and how these factors influence students' learning of AI. They also touch upon the disparities between rural and urban students in terms of cultural and ethical competence.
Holmes and Tuomi (2022) discuss AIED colonialism, which includes the adoption of single products in state education systems, language biases, and the imposition of specific pedagogical approaches. This colonialism varies in extent but often results in well-funded Global North AIED tools overshadowing locally sensitive alternatives.

The “inclusion” challenge refers to the effort of guaranteeing that AIED features recognise learners’ neurological, physiological and psychological diversity and become accessible and accurate to all. Although it's a challenging and early-stage process, robots and empathic intelligent learning environments (ILEs) are already being designed to recognise emotional patterns and respond accordingly (Pham & Wang, 2017). This is especially beneficial for children with special needs. For instance, socially assistive robots proved more effective in reducing repetitive and stereotyped behaviours in autistic children compared to interactions with people (Costa et al., 2018). In the case of autism spectrum disorder, educational games are being used to enhance children's ability to distinguish emotions in a simulated learning environment, aiming to facilitate their transition between the virtual and real-world contexts (Epp & Makos, 2013). Another study showed that children engaging in a 30-min daily interaction with a caregiver and a social robot over one month improved their attention skills when the robot was not present (Johnson & Lester, 2016). The robot encouraged emotional storytelling, perspective-taking, and tailored the difficulty of activities based on past performance. These studies demonstrate that empathic robots can create more engaging and fearless learning experiences, especially for K-12 students (Johnson & Lester, 2016). While these advancements offer promising perspectives for inclusion in special education, challenges arise in the context of online proctoring. The technology may not be adequately prepared to interpret the behaviours of neurodiverse individuals or those with disabilities, potentially leading to false positives for cheating (Coghlan et al., 2021).

Finally, these systems may not be able to address issues of harassment, bullying, or discrimination that may occur in online educational environments, which makes it important to ensure that AIED technologies have mechanisms in place to prevent or respond to such incidents. The European project ACACIA (Restrepo et al., 2019), funded in part by Erasmus+, features a chatbot named Artemisa designed to address sexual harassment and recruit volunteers to promote diversity and tolerance at the Peruvian National University of San Marcos. However, like other chatbots, it presents accessibility challenges for users, underscoring the need for training in accessibility, tolerance, and diversity acceptance to mitigate biases.

Despite the various difficulties mentioned earlier, there is a growing recognition of the importance of culturally inclusive research in the field of AIED (Nye, 2015). The data presented in Table 6 showcase a diversity of studies that underscore the need to develop AI systems that are socially and culturally aware. Examples include enculturated agents, which can adapt their interactions and responses to align with the cultural background and preferences of the users, enhancing the effectiveness of communication and learning (Mohammed & Watson, 2019). Empathic robots and ILEs can also be tailored to meet the needs of students with special educational needs. These could include individuals with conditions such as autism, hearing or oral communication problems, and dyslexia (Epp & Makos, 2013; Johnson & Lester, 2016; Pham & Wang, 2017; Radford et al., 2021; Rello et al., 2016). Chatbots can also be used as a means of providing support to victims of sexual harassment, ensuring anonymity, accessibility, and responsiveness that can be particularly valuable in sensitive and emotionally challenging situations (Restrepo et al., 2019).

-

RQ3. How does AIED monitor emotions and what ethical challenges may be at stake?

Since AIED is primarily justified as a response to learners' holistic needs, this section aims to understand how the affective dimension is addressed alongside the cognitive and performance dimensions. Regarding ethical considerations, only 4% of all papers analysed in this SLR address the particular ethical concerns that may arise from AIED's handling of emotions. The main concerns include negative feelings related to surveillance, direct correlations between behaviour and emotions, a lack of respect for affective privacy, and the induction of emotions (cf. Table 7). The largest share of papers on this topic concentrates on the emotional outcomes of affective surveillance. Automated monitoring designed to enhance productivity and well-being has been associated with increased levels of stress and anxiety (Burr et al., 2020) and may result in nervousness during evaluation (Gudiño Paredes et al., 2021). Furthermore, despite advancements in AIED, there seems to be a missing context for understanding emotions and their meaning beyond the "signalling paradigm" of matching emotions with their corresponding behavioural signals (Dobrosovestnova & Hannibal, 2020). In addition, positive emotions, motivation, academic performance, and school achievements drive the integration of AI into formal education. Empirical studies suggest that the affective component in artificial agents enhances learning compared to experiences without emotional and social aspects. However, there is excessive optimism surrounding these automated systems. While learners and teachers experience a range of emotions, from joy to frustration, the design of affective behaviour in educational robots has largely concentrated on conveying positive emotions. Given that complex and non-positive emotions are also relevant in designing social robots for educational purposes, it is critical to accurately model these ambivalent emotional traits (Dobrosovestnova & Hannibal, 2020).

According to Hudlicka (2016), another potential area of concern pertains to how interactions with an agent can jeopardise the privacy of an individual's emotional experiences. Agents within the AIED context also possess the capability to induce or manipulate emotions, and virtual relationships with these agents may blur the lines between reality and fiction, potentially resulting in psychological challenges. These studies emphasise that the discussions regarding the management of emotions in educational technology play a crucial role within the larger ethical conversation in this domain.

-

RQ4. How is capacity building on AIED ethics being covered?

Table 8 presents the 41% of studies in this SLR covering AI ethics education and the targets, content, skills, and delivery methods of such education. Through a systematic policy review, Schiff (2021) found that education for AI includes training AI professionals (e.g., computer scientists), preparing the workforce for AI, and broader public AI literacy. Many authors stress the need for ethics in engineering education to bridge the gap between technology and society (Antoniou, 2021; Dignum, 2021; Dignum, 2020; Hoeschl, 2017; Park et al., 2021; Williams et al., 2020). Qualitative data reveal that US Information and Computer Science students often fail to consider the ethical implications of AI design for privacy and well-being without explicit guidance (McDonald & Pan, 2020). Another US study analysed 31 standalone AI ethics classes and 20 AI/Machine Learning technical courses, revealing both commendable practices and notable omissions, such as accessibility, diversity in the AI workforce, and sustainability (Garrett et al., 2020). In 2020, research conducted across 12 Australian universities indicated that Computer Science courses either lacked ethics education or focused solely on micro-ethical concepts linked to professionalism and industry standards (Gorur et al., 2020). The University of North Carolina piloted an AI ethics course for Computer Science students, focusing on explicit ethical agents, and suggested the value of prototyping and hands-on approaches, and of challenging students to employ diverse ethical approaches and summarise their implications (Green, 2021). Similarly, the University of Oulu in Finland introduced an AI ethics pilot course, covering a range of AI applications, legislative and ethical aspects, and the pros and cons of AI applications (Tuovinen & Rohunen, 2021). Future implementations were recommended to incorporate unexpected ethical issues, using methods like case study analyses and role reversal between defenders and opponents.

Studies in various fields of health, including anatomy, psychiatry, and clinical psychology, highlight the growing importance of ethics and education in the context of AI adoption. While AI holds promise for improving diagnoses and prognoses, it must align with medical epistemological frameworks to address emerging ethical and clinical concerns (Gauld et al., 2021). Research with clinical psychology Master's students at the University of Basel has shown that despite some familiarity with AI/ML tools, they require education to assist patients in making informed choices regarding mental health AI/ML applications, taking into account issues like privacy, equality, and discrimination (Blease et al., 2021). Other authors suggest that when preparing curricula for AI education, it is crucial to consult with students to understand their needs and their perceived readiness for AI-related topics. To facilitate this, Karaca et al. (2021) have developed the MAIRS-MS, a reliable tool for assessing students' readiness for AI and its applications in medicine.

Schiff's (2021) study found that ethical training for the appropriate implementation of AI in the education sector was almost absent. Ng et al. (2023) conducted an SLR that includes thematic and content analysis of 49 publications from 2000 to 2020. The review highlights that AI teaching and learning primarily focused on computer science education at the university level before 2021. The findings presented in this review emphasise the significance of educating individuals in AI literacy and AI ethics. However, few programmes report training on ethical issues around AI, and even fewer on best practices for the ethical integration of AI resources. Additionally, there are no reported tools to assess pedagogical practices using AI. Even in training projects conducted in schools, there is a noticeable absence of emphasis on the ethical considerations associated with AIED. Furthermore, this training still shows shortcomings even in countries where AI is already a reality in the classroom, such as China. Its unsystematic nature, non-intentionality, and the poor quality of its supporting materials show there is still a long way to go (Gong et al., 2021). Moreover, when not absent, training specifically for the use of AIED appears to be provided only to teachers, school administrators, and researchers. Loftus and Madden (2020) emphasise the importance of ensuring that students also understand the datafication of their own lives and learning processes, and advocate for placing students at the heart of the construction of AI-powered models, which again draws attention to the importance of a sense of agency.

According to Dignum (2021), the digital age is no longer compatible with the separation of STEM from humanities, arts, or social sciences. In fact, the arts seem to be a great platform to promote AI education, both as a medium of expression and for their role in fostering empathy, diversity, and inclusion in the AI pipeline (Srinivasan & Uchino, 2021). Xu (2020) also suggests that all ethical challenges in introducing AIED must be considered from a humanistic educational perspective. Following this principle, some examples of AI ethics training in schools were conceived. Research from Ottenbreit-Leftwich et al. (2023) focuses on introducing AI education to K-12 students and explores a potential starting point for teachers to teach AI and computer science concepts. It suggests that AI ethics can be a compelling entry point. Teachers showed more confidence in discussing AI ethics with their students, which led to engaged discussions. The research aims to lay the groundwork for elementary AI education by considering students' ideas, experiences, and ethics as essential components for curriculum design in K-12 education.

Nevertheless, another topic of concern has been exposed. Although 42 studies were dedicated to the topic, only four of them covered the impact assessment phase of these training programmes. A study by Lee et al. (2021) considered the impact of a summer workshop on AI literacy on middle school students, mainly from underrepresented groups in STEM. Benefits included a notable improvement in students' grasp of AI and its potential biases, enhanced adaptability to future AI-related employment, a better understanding of the consequences of their actions on others, improved capacity to discuss ethical AI issues with their families, and the ability to leverage their family's resources for self-improvement. Another study (Kong et al., 2023) highlights the potential of AI literacy education for senior secondary students, emphasising that programming knowledge is not a prerequisite for understanding AI concepts. The results suggest that with sufficient learning time and project-based pedagogy, senior secondary students can develop AI literacy, although there may be challenges in comprehending complex AI ethical principles, which require further guidance and time. The work of Lucic et al. (2022) presents a course at the University of Amsterdam that aims to provide students with a comprehensive understanding of FACT-AI topics and algorithmic harm through lectures, paper discussions, and a reproducibility project. Students engage with the open-source and research communities, creating a public code repository. The course emphasises the importance of reproducibility and received positive feedback from students, who appreciated the critical perspectives gained and the insights into AI research. The course successfully motivates students and promotes critical thinking in AI. Finally, using a phenomenographic approach, Yau et al. (2022) studied 28 in-service teachers from 17 secondary schools in Hong Kong after implementing an AI curriculum. They identified six categories of teacher conceptions related to teaching AI: technology bridging, knowledge delivery, interest stimulation, ethics establishment, capability cultivation, and intellectual development. The study presents a hierarchical outcome space that illustrates the range of surface to deep conceptions held by teachers. It offers insights into cultivating both technical and non-technical teachers' competence in AI education, aiding teacher educators and policymakers in enhancing AI education for K-12 students.

-

RQ5. What principles, regulations and frameworks are there for AIED?

The concerns described in the previous RQs have justified the adoption of guidelines for the use of AI in education. The first attempts to adopt general ethical usage guidelines were unsuccessful, as it was quickly realised that the specifics of the education sector required a dedicated framework. Educational actors are therefore now requested to produce workable ethical frameworks that tackle AI's potential risks while improving educational institutions and student outcomes (Weber, 2020). However, it is crucial to develop consistent terminology and scope in formal standardisation efforts, especially in the context of Information Technology for Learning, Education, and Training, within and across standardisation bodies (Mason et al., 2020). Furthermore, specific discussions on AIED in education policies are taking place in countries like China, India, Italy, Kenya, Malta, Singapore, South Korea, Spain and the United States. Although these nine countries are discussing some version of AI for education, only four or five are discussing it in depth beyond superficial mentions (Schiff, 2021).

As indicated in Table 9, which pertains to the 8% of articles discussing this subject, some ethical frameworks show tensions and gaps concerning the ethical advancement and application of learning analytics, while others are not appropriate for use in the field of education. For example, the GDPR is too complex when used in education (Kitto & Knight, 2019) and it can be difficult to apply these guidelines in sensitive cases where the primary concern is the safety of people (Al-Omran et al., 2019). As of 2019, there were no specific policies or regulations regarding AIED, despite efforts to ensure trustworthy AI (Holmes et al., 2018). In response to this absence, guidelines such as the "Beijing Consensus on Artificial Intelligence and Education" (UNESCO, 2019b) and "The Ethical Framework for AI in Education" (The Institute for Ethical AI in Education, 2021) have been introduced. The UNESCO Consensus on AI in education was developed through the collaboration of multiple stakeholders, including government ministers, international representatives, and experts. It outlines seven key principles for UNESCO's member states: prioritise AI in education policies to achieve SDG 4 goals, support AIED-enhanced pedagogies when benefits outweigh risks, promote AI tools for lifelong and personalised learning, base policies on evidence, provide comprehensive AI training for teachers, cultivate critical skills for the AI era, and encourage equitable, transparent, and ethical use of AIED, with a focus on gender equality (UNESCO, 2019b). "The Ethical Framework for AI in Education" (The Institute for Ethical AI in Education, 2021) appears to be the most sophisticated and up-to-date tool to closely monitor AI technologies used in the various stages of AI adoption in education, from pre-procurement to implementation and impact evaluation. Launched in 2018 by the University of Buckingham, this framework became publicly available in 2021. It resulted from a two-and-a-half-year effort, which included collecting interviews from policymakers, academics, philosophers, ethicists, industry experts, and educators to establish a consensus regarding the integration of AI into the education sector. The framework addresses ethical design, privacy, equity, transparency, and accountability concerns, considering the sector's specific needs. For example, in relation to privacy, it states that even if an institution is required to continuously assess students, it must also establish safe spaces where learners are not assessed. When it comes to autonomy and agency, the framework draws attention to the actions that should be taken when the AI system predicts an unfavourable outcome. It also underscores the importance of ensuring that AI systems are designed to benefit learning without leading to addictive behaviours. This framework is the first step in addressing the ethical challenges of AIED and ensuring it is educationally sound from the ground up.

Finally, Miller and Tuomi (2022) emphasise the importance of sense-making when envisioning the future. They highlight the significance of employing various forms of anticipation to perceive the world from different perspectives. This approach is rooted in a shift in the field of futures studies towards an ontological perspective, which allows the future to be reconceptualised as a point of origin. It positions anticipation and anticipatory processes about the future as integral aspects of the present. This perspective could be integrated into the ongoing enhancement of frameworks related to AIED and educational ethics, fostering broader, more creative, and innovative processes that are not limited by predefined objectives.

Discussion

The following sections delve into various aspects concerning the implications of the findings from this SLR. They encompass the integration of a theoretical model for analysing pedagogical implications based on the collected data, the identification of research gaps, recommendations for teacher training in AIED ethics, and a critical examination of potential limitations in this research.

Implications of this SLR for rethinking the ethics of education: using a theoretical model

The introduction of a new technology in the realm of education provides an opportunity not only to reconsider the specific ethical consequences it introduces but also to contemplate the broader ethics associated with education itself. The ik.model (Mouta et al., 2015) represents an extended framework building upon the TPACK model, encompassing the human element within educational technology integration. This framework comprises four pedagogical dimensions: "technologies and resources", "content knowledge", "learning processes and strategies", and "educational actors and their relationships". In the following subsections, this theoretical framework is applied to assess the potential consequences of the aforementioned results.

Technologies and Resources Dimension

RQ1 and RQ3 emphasise the critical importance of creating inclusive AI systems in education, underscoring the significance of diversity in decision-making. Yet, ethical discussions on AI in education are primarily Western-centric and STEM-dominated, as indicated by MQ2, MQ3, and MQ4, potentially resulting in biases and hindering inclusive AI system development. RQ5 stresses the importance of collaboration in evaluating ethical frameworks for AIED, highlighting the need to balance student interests, meaningful innovation, and overcoming resistance to change in education. Furthermore, it points out the challenges of applying these frameworks across diverse educational contexts and levels. In light of these findings, three key outcomes emerge: (1) funding for research and educational programmes including underrepresented areas (e.g., arts, health, humanities), populations and groups, exemplified by AIED DEIA mentoring fellowships (“Call for Fellow Nominations”, 2023); (2) systematic collaboration among AI researchers, developers, and practitioners, drawing from school fieldwork to create technically robust and culturally sensitive AI systems, which would be an opportunity to build on teachers' own ethical development by creating opportunities for them to exercise their skills as judging actors (Arendt, 1958); and (3) the development of an assessment tool that can adapt to different contexts and monitor the positive impact of these technologies, involving a wide range of stakeholders. These frameworks should also ensure seamless integration with educational standards as students progress through their educational journey and into their jobs.

Content Knowledge Dimension

The results from RQ4 indicate that while there are training programmes addressing ethical challenges in AIED for teachers, school administrators, and researchers, students usually receive limited exposure to the ethical use of AI. Their education primarily focuses on deontological and on-the-job ethics. This approach overlooks the valuable perspective that students can bring to the development of AIED environments, considering that they are the primary beneficiaries of these technologies. To bridge this gap, curriculum infusion is proposed as a suitable strategy. It allows for the inclusion of emerging topics in a meaningful way, while accommodating the busy academic calendar. By incorporating AI concepts across various subjects and applying them to tasks utilising AIED resources, educators can facilitate discussions about AI's functions, impacts, and ethics from diverse subject perspectives. Ultimately, this approach enhances students' acquisition of content knowledge, supports its application to practical tasks, and cultivates a comprehensive understanding of AI systems and their effects on individuals and groups.

Learning Processes and Strategies Dimension

The results of RQ1, RQ2, RQ3, and RQ5 underscore the importance of preserving students' diversity and sense of agency in AI-based education. For personalisation in education to be effective, it needs to strike a balance between tailoring content to individual needs and ensuring that students have the opportunity to explore diverse perspectives and develop a holistic self-understanding. Exploring one's own interests, competencies, and values – three critical dimensions of identity – is essential for informed decision-making throughout life and fostering a strong attachment to the learning experience. Thus, there is a need for epistemological reflection as an essential aspect of contemplating the significance of pedagogical innovations in the contemporary world (Trindade & Cosme, 2010). This goal can bring teachers and students together in project-based learning, encouraging them to explore diverse sources of knowledge and engage with them using various processes and literacies. This is even more important given the access to language models like ChatGPT. The challenges it entails present an opportunity to place questioning and critical thinking at the core of education. Approaches like the flipped classroom can help students engage meaningfully with AI technologies, fostering thoughtful exploration. Independent learning methods, followed by teacher-led discussions focused on problem-solving, offer a dual benefit: they allow students to deepen their understanding independently and encourage dialogic developmental processes when they share and explore their learning outcomes with their peers. Developing these skills empowers students as citizens, while assisting societies in navigating the evolving technology landscape.

Moreover, adapting evaluation criteria to accommodate diverse student expressions is critical when AI offers customised learning experiences. This process entails analysing AI-generated data and presenting it to students, teachers, and families for input and a systemic understanding. Effective use of AIED assessment tools can encourage self-assessment as a valuable learning tool, allowing students to critically evaluate technology and promoting self-reflection, self-regulation, and citizenship. In the era of dataism, educators face the challenge of exploring dataism's onto-epistemic grammar with their students, including its anthropocentric perspective, the drive for ontological security, and the thirst for absolute knowability (Andreotti et al., 2015; Lados et al., 2022; Stein et al., 2017).

Only 4% of the papers in this field address the ethical aspects of AIED's emotional management (cf. RQ3), indicating a predominant focus on performance as the primary rationale for using AIED. However, this emphasis neglects the fact that performance results from a complex interplay of both cognitive and emotional factors. Emotional well-being and social skills are key for overall achievement. Currently, these AI technologies often align with an educational paradigm that prioritises performance and global rankings. Adhering to established and conventional assumptions about knowledge, teacher and learner roles, educational goals, and learning methods when incorporating AI systems may exacerbate existing issues, widening existing gaps instead of addressing broader educational and developmental needs. Such an approach also hinders the potential of these technologies to foster a decentralised and self-directed approach to education, as advocated by thinkers like Illich (1971). By solely concentrating on the short-term advantages, educators and policymakers might overlook or underestimate the broader impacts and ethical considerations associated with AI in education, hindering its beneficial potential.

Educational Actors and their Relationships Dimension

RQ1 and RQ2 shed light on the importance of shared values, of both explicit and implicit educational agreements, and of the involvement of diverse stakeholders in decision-making concerning the use of AIED. MQ3 has also suggested that achieving geographical diversity in research on AIED ethics remains a persistent challenge. This recognition should be coupled with an awareness of disparities in access and variations in pedagogical quality. According to Christakopoulou et al. (2001), a school is a multifaceted entity encompassing aspects of a social, economic, and political community. Additionally, it serves as a personal environment where attachments are formed and memories are created. The introduction of AI-based education challenges all these dimensions, prompting a critical examination of the roles, relationships, and power dynamics of the various stakeholders involved in the educational ecosystem. It calls for a re-evaluation of education as being not only about imparting knowledge but also about nurturing a sense of belonging, empowerment, and active participation within a rapidly evolving educational landscape.

Uncovering research blind spots on AIED ethics

To tackle the challenge of rethinking education ethics in the age of AIED, it is crucial to carefully consider both the insights gained from this SLR and the aspects that remain concealed or overlooked. One of those missing aspects has to do with the lack of incorporation of AIED ethics in philosophical or psychological paradigms of moral or ethical development. This vagueness in discussions may limit the definition of criteria to thoroughly evaluate the impacts of AIED use. Moreover, there is a notable oversight when it comes to discussions about the broader concept of transhumanism introduced by AI systems. There was only one paper that raised awareness of the question of whether there should be limits on using technology to extend or enhance cognitive abilities, as well as the dynamics of relationships in hybrid environments. How are issues of equity addressed when only a privileged few will have access to competitive AI features, granting them a significant advantage? Additionally, discussions often lack critical perspectives on the ethical boundaries of the concept of intelligence as defined by AIED research and design. Overreliance on behaviourist and cognitive approaches may sideline aesthetics, emotions, morality, and social development, potentially reinforcing reductionist views of human intelligence. Prioritising optimisation may undermine the value of reflective and contemplative thinking, which is crucial to develop strategies for solving complex problems. Another perspective that can be gained from this study is that by adopting a participatory approach involving educators and students in research and inquiry-based learning, the full potential of AIED can be harnessed while encouraging a reflexive and critical attitude that supports comprehensive ethical growth.

Recommendations for educators on AIED ethics

It becomes clear that the ethical implementation of AIED requires a comprehensive approach. The results emphasise the importance of participatory methods and dialogic ethics within this context. The following recommendations on AIED ethics are based on the insights derived from this study and will be followed up in the continuation of this research project: (1) engaging educators through focus groups – in line with the insights from this research, it is recommended to arrange focus group sessions with educators to provide them with a platform to engage in meaningful discussions about the ethical use of AIED in educational institutions; (2) developing a pilot training programme – data generated from these focus group sessions will form the basis for creating a pilot training programme aimed at promoting ethical considerations and practices in the integration of AIED within educational settings; (3) exploring content and delivery methods accordingly – during the programme's development, it is critical to align with research findings, adhering to a socio-constructivist framework that emphasises active participation and engagement when designing content, delivery methods, duration, and activities; (4) training in comprehensive topics – develop the training curriculum to cover a wide range of topics, including the ones that were overlooked in previous work (AIED technologies, potentialities, and challenges; ethical considerations specific to education; interdisciplinary perspectives on AIED; stakeholders in AIED, power dynamics, interests, and needs; effects of AIED ethical challenges on student agency, self-determination, and emotional well-being; AIED's role in pedagogy and innovative learning processes; learning analytics, data collection, analysis, and interpretation in the context of AIED; evaluation of AIED effectiveness using informed criteria; promotion of communities of practice in AIED for knowledge sharing and addressing challenges); (5) role-playing with students – incorporate challenging, hands-on, and engaging tasks that encourage reflection on how AIED-specific applications could impact students' lives, which can then be further discussed in training; (6) evaluating through self and peer assessment – align with the training's defined criteria, facilitating an evaluation of the created resources' responsiveness and effectiveness; (7) promoting inclusive participation – invite a diverse group of teachers as trainees, including individuals from typically underrepresented countries and representing various fields; (8) assessing attitudes towards AIED ethics – include quantitative data collection moments at the beginning and end of the training programme to facilitate the measurement of its impact and inform future improvements; (9) gathering teacher perspectives – involve teachers in a final semi-structured interview to gain insights into their perspectives regarding key criteria that should be prioritised in a continuing professional development programme on AIED ethics, with a specific focus on their training experiences.

Potential limitations of this research

It is important to acknowledge that, despite the inclusion of over 100 papers in this study, there are certain limitations to consider. Since our research covers papers published until the end of 2022, studies currently being published are not taken into account. The most recent research can provide updated insights as the field is growing both conceptually and in school practice. Another limitation of this SLR is that some relevant articles may have been left out. The general search term “artificial intelligence” was chosen because it would be difficult to cover all its technologies, such as “robots”, “educational chatbots”, “machine learning”, “intelligent tutoring systems”, “exploratory learning environments”, “teachable agents”, “dual-teacher model”, “speech/image recognition”, “autonomous agents”, “affect detection”, and so on. The assumption was that papers covering a specific AIED technology would mention the term “artificial intelligence” at least once. Finally, 24 papers were added as first or second references in the later stage of the review. There is a possibility that other papers with similar characteristics were overlooked during the initial screening process, which relied on titles and abstracts for assessment. The implication of potentially missing such papers is that the review may not have captured the full spectrum of relevant literature, and valuable insights or perspectives on AIED and ethics could have been omitted from the analysis. To mitigate this limitation, multiple rounds of screening and comprehensive search strategies were employed. However, despite best efforts, it is possible that some relevant papers may still have been inadvertently omitted.

Conclusion

Research interest in the ethics of designing, developing, and implementing AIED has been steadily increasing since 2018. This interest surged after 2020, driven by the COVID-19 pandemic and the rise of AIED technologies. The majority of papers analysed in this SLR presented concerns about dimensions such as fairness, inclusion, autonomy, and agency. The publications analysed also showed efforts to implement AIED ethical programmes in K-12 and higher education. The main conclusions drawn from this SLR highlight the significance of participatory processes in researching and implementing AIED. To achieve this, it is essential to engage a range of educational stakeholders, including students, teachers, school administrators, parents, and researchers. Although regulatory ethical frameworks for AIED have been introduced, they have come quite late and do not account for the specific needs of learners and pedagogy. Generic AI ethics principles do not adequately address the part of agency responsible for learning, which no technology with promises of better personalisation or guidance should struggle against. Therefore, ethical frameworks for the design and use of AIED should be developed through participatory processes that recognise the specific needs and tasks of its main actors (students and teachers), and respect each learning community's heritage, as well as the will and capacity for innovation.

Furthermore, this SLR highlighted the importance of supporting teachers so that they can use AIED technologies effectively while preserving students' sense of agency and promoting their potential for lifelong learning. Additionally, rethinking and aligning evaluation parameters is crucial to ensure that ethical concerns are taken into account and that data is incorporated from multiple feedback sources. The SLR also revealed a failure to incorporate AIED ethics into a philosophical or psychological paradigm of moral or ethical development. The lack of a defined conceptual corpus that fits the educational landscape makes it difficult to understand the extent to which AIED meaningfully addresses ethical aspects of individual, community, and organisational development. Moreover, using AI technologies can facilitate self-reflection and reflection on the actions of other agents (persons and machines), and can help balance personal standards and value systems while considering interpersonal connections and mutual obligations.

In conclusion, this systematic literature review sheds light on the key topics that should be included in teacher training on education ethics when using AIED. It also suggests that while AIED research has been addressing ethical considerations, there is still significant room for growth in terms of analysing its unique heuristics within the context of education ethics.

Data availability

The database supporting this SLR is publicly available at: https://docs.google.com/spreadsheets/d/1u6ArXbQ5w4bxhHbKAcRUCZLUSACK0AoG/edit?pli=1#gid=1005218435.

References

Abboud, R., Arya, A., & Pandi, M. (2020). Redefining the Digital Divide in the age of AI: the harvest of the 25th anniversary. In L. Gómez Chova, A. López Martínez & I. Candel Torres (Eds.), INTED2020 Proceedings (pp. 4483–4492). IATED Academy. https://doi.org/10.21125/inted.2020.1241

Adams, C., Pente, P., Lemermeyer, G., Turville, J., & Rockwell, G. (2022). Artificial Intelligence and Teachers’ New Ethical Obligations. The International Review of Information Ethics, 31(1). https://doi.org/10.29173/irie483

Ali, S., Williams, R., Payne, B., Park, H., & Breazeal, C. (2019). Constructionism, ethics, and creativity: Developing primary and middle school artificial intelligence education. Proceedings of the International Workshop on Education in Artificial Intelligence K-12. Retrieved January 19, 2022, from https://www.media.mit.edu/publications/constructionism-ethics-and-creativity/

Al-Omran, G., Al-Abdulhadi, S., & Jan, M.R. (2019). Ethics in artificial intelligence. Proceedings of the International Conference on Industrial Engineering and Operations Management, 940–949. Retrieved January 19, 2022, from https://www.ieomsociety.org/gcc2019/papers/337.pdf

Andreotti, V., Stein, S., Ahenakew, C., & Hunt, D. (2015). Mapping interpretations of decolonization in the context of higher education. Decolonization: Indigeneity, Education & Society, 4(1), 21–40.

Antoniou, J. (2021). Dealing with emerging AI technologies: Teaching and learning ethics for AI. In J. Antoniou (Ed.), EAI/Springer Innovations in Communication and Computing (pp. 79–93). Springer. https://doi.org/10.1007/978-3-030-52559-0_6

Arendt, H. (1958). The human condition. University of Chicago Press.

Bates, R. A. (2011, June). AI & SciFi: Teaching Writing, History, Technology, Literature, and Ethics [Paper presentation]. ASEE Annual Conference & Exposition, Vancouver, BC. https://doi.org/10.18260/1-2--17433

Belpaeme, T., Kennedy, J., Ramachandran, A., Scassellati, B., & Tanaka, F. (2018). Social robots for education: A review. Science Robotics, 3(21). https://doi.org/10.1126/scirobotics.aat5954

Blease, C., Kharko, A., Annoni, M., Gaab, J., & Locher, C. (2021). Machine Learning in Clinical Psychology and Psychotherapy Education: A Mixed Methods Pilot Survey of Postgraduate Students at a Swiss University. Frontiers in Public Health, 9, 623088. https://doi.org/10.3389/fpubh.2021.623088

Bogina, V., Hartman, A., Kuflik, T., & Shulner-Tal, A. (2021). Educating Software and AI Stakeholders About Algorithmic Fairness, Accountability, Transparency and Ethics. International Journal of Artificial Intelligence in Education. https://doi.org/10.1007/s40593-021-00248-0

Bozkurt, A., Karadeniz, A., Bañeres, D., Guerrero-Roldán, A., & Rodríguez, M. E. (2021). Artificial Intelligence and Reflections from Educational Landscape: A Review of AI Studies in Half a Century. Sustainability, 13, 800. https://doi.org/10.3390/SU13020800

Bucea-Manea-Tonis, R., Kuleto, V., Gudei, S. C. D., Lianu, C., Lianu, C., Ilic, M. P., & Paun, D. (2022). Artificial Intelligence Potential in Higher Education Institutions Enhanced Learning Environment in Romania and Serbia. Sustainability, 14(10), 5842. https://doi.org/10.3390/su14105842

Burr, C., Taddeo, M., & Floridi, L. (2020). The Ethics of Digital Well-Being: A Thematic Review. Science and Engineering Ethics, 26, 2313–2343. https://doi.org/10.1007/s11948-020-00175-8

Call for Fellow Nominations. (2023, March 31). AIED 2023. Retrieved April 28, 2023, from https://www.aied2023.org/c_mentoring_fellowship.html

Cave, S. (2020). The Problem with Intelligence: Its Value-Laden History and the Future of AI. In A. Markham, J. Powles, T. Walsh, A.L. Washington (Eds.), Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (AIES '20) (pp. 29–35). Association for Computing Machinery. https://doi.org/10.1145/3375627.3375813

Charisi, V., Malinverni, L., Rubegni, E., & Schaper, M.M. (2020). Empowering Children’s Critical Reflections on AI, Robotics and Other Intelligent Technologies. In I. Šmorgun & G. Berget (Eds.), Proceedings of the 11th Nordic Conference on Human-Computer Interaction: Shaping Experiences, Shaping Society (pp. 1–4). Association for Computing Machinery. https://doi.org/10.1145/3419249.3420090

Chiu, T. K. F., Meng, H., Chai, C., King, I., Wong, S., & Yam, Y. (2021). Creation and Evaluation of a Pre-tertiary Artificial Intelligence (AI) Curriculum. IEEE Transactions on Education, 65(1), 30–39. https://doi.org/10.1109/TE.2021.3085878

Chounta, I. A., Bardone, E., Raudsep, A., & Pedaste, M. (2022). Exploring Teachers’ Perceptions of Artificial Intelligence as a Tool to Support their Practice in Estonian K-12 Education. International Journal of Artificial Intelligence in Education, 32, 725–755. https://doi.org/10.1007/S40593-021-00243-5

Christakopoulou, S., Dawson, J., & Gari, A. (2001). The Community Well-Being Questionnaire: Theoretical Context and Initial Assessment of Its Reliability and Validity. Social Indicators Research, 56, 319–349. https://doi.org/10.1023/A:1012478207457

Coghlan, S., Miller, T., & Paterson, J. (2021). Good Proctor or “Big Brother”? Ethics of Online Exam Supervision Technologies. Philosophy & Technology, 34, 1581–1606. https://doi.org/10.1007/s13347-021-00476-1

Córdova, P.R., & Vicari, R.M. (2022). Practical Ethical Issues for Artificial Intelligence in Education. In A. Reis, J. Barroso, P. Martins, A. Jimoyiannis, R.YM. Huang, & R. Henriques (Eds.), Technology and Innovation in Learning, Teaching and Education. TECH-EDU 2022. Communications in Computer and Information Science (pp. 437–445). Springer, Cham. https://doi.org/10.1007/978-3-031-22918-3_34

Costa, A.P., Charpiot, L., Lera, F.J., Ziafati, P., Nazarikhorram, A., van der Torre, L., & Steffgen, G. (2018). More Attention and Less Repetitive and Stereotyped Behaviors using a Robot with Children with Autism. In J.J. Cabibihan, F. Mastrogiovanni, A.K. Pandey, S. Rossi, M. Staffa (Eds.), 27th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN) (pp. 534–539). IEEE Xplore Digital Library. https://doi.org/10.1109/ROMAN.2018.8525747

Dieterle, E., Dede, C., & Walker, M. (2022). The cyclical ethical effects of using artificial intelligence in education. AI & Society, 1–11. Advance online publication. https://doi.org/10.1007/s00146-022-01497-w

Dignum, V. (2020). AI is multidisciplinary. AI Matters, 5(4), 18–21. https://doi.org/10.1145/3375637.3375644

Dignum, V. (2021). The role and challenges of education for responsible AI. London Review of Education, 19(1), 1–11. https://doi.org/10.14324/LRE.19.1.01

Dobrosovestnova, A., & Hannibal, G. (2020). Teachers’ Disappointment: Theoretical Perspective on the Inclusion of Ambivalent Emotions in Human-Robot Interactions in Education. In T. Belpaeme, J. Young, H. Gunes & L. Riek (Eds.), Proceedings of the 2020 ACM/IEEE International Conference on Human-Robot Interaction (HRI) (pp. 471–480). Association for Computing Machinery. https://doi.org/10.1145/3319502.3374816

Epp, C. D., & Makos, A. (2013). Using simulated learners and simulated learning environments within a special education context. CEUR Workshop Proceedings, 1009, 1–10. Retrieved January 19, 2022, from https://ceur-ws.org/Vol-1009/0401.pdf

Gallastegui, G., Miguel, L., & Forradellas, F. R. (2021). Business Methodology for the Application in University Environments of Predictive Machine Learning Models Based on an Ethical Taxonomy of the Student’s Digital Twin. Administrative Sciences, 11(4), 118. https://doi.org/10.3390/admsci11040118

García-Peñalvo, F.J., Corell, A., Abella-García, V., & Grande-De-Prado, M. (2021). Recommendations for Mandatory Online Assessment in Higher Education During the COVID-19 Pandemic. In D. Burgos, A. Tlili, & A. Tabacco (Eds.), Radical Solutions for Education in a Crisis Context. COVID-19 as an Opportunity for Global Learning (pp. 85–89). Springer Nature. https://doi.org/10.1007/978-981-15-7869-4_6

Garrett, N., Beard, N., & Fiesler, C. (2020). More Than "If Time Allows": The Role of Ethics in AI Education. In A. Markham, J. Powles, T. Walsh, A.L. Washington (Eds.), Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (AIES '20) (pp. 272–278). Association for Computing Machinery. https://doi.org/10.1145/3375627.3375868

Gary, E. (2019). Ethics to prepare educators for professional service robots in classrooms. In E. Miyoshi, E. G. Kowch, J. C. Liu, Q. Jin, Z. Li, H. Zhang (Eds.), International Joint Conference on Information, Media, and Engineering Proceedings (pp. 478–484). Institute of Electrical and Electronics Engineers. https://doi.org/10.1109/IJCIME49369.2019.00102

Gauld, C., Micoulaud-Franchi, J., & Dumas, G. (2021). Comment on Starke et al.: ‘Computing schizophrenia: Ethical challenges for machine learning in psychiatry’: From machine learning to student learning: Pedagogical challenges for psychiatry. Psychological Medicine, 51(14), 2509–2511. https://doi.org/10.1017/S0033291720003906

Ghotbi, N., & Ho, T. (2021). Moral Awareness of College Students Regarding Artificial Intelligence. Asian Bioethics Review, 13(4). https://doi.org/10.1007/s41649-021-00182-2

Goldsmith, J., Burton, E., Dueber, D., Goldstein, B., Sampson, S., & Toland, M. (2020). Assessing Ethical Thinking about AI. Proceedings of the AAAI Conference on Artificial Intelligence, 34(09), 13525–13528. https://doi.org/10.1609/aaai.v34i09.7075

Gong, X., Tang, Y., Liu, X., Jing, S., Cui, W., Liang, J., & Wang, F. Y. (2021). K-9 Artificial Intelligence Education in Qingdao: Issues, Challenges and Suggestions. 2020 IEEE International Conference on Networking, Sensing and Control, 1–6. https://doi.org/10.1109/ICNSC48988.2020.9238087

Gorur, R., Hoon, L., & Kowal, E. (2020). Computer Science Ethics Education in Australia – A Work in Progress. In H. Mitsuhara, Y. Goda, Y. Ohashi, Ma. M. T. Rodrigo, J. Shen, N. Venkatarayalu, G. Wong, M. Yamada, C.U. Lei (Eds.), 2020 IEEE International Conference on Teaching, Assessment, and Learning for Engineering (TALE), 945–947. IEEE. https://doi.org/10.1109/TALE48869.2020.9368375

Green, N. L. (2021). An AI Ethics Course Highlighting Explicit Ethical Agents. In M. Fourcade, B. Kuipers, S. Lazar, D. Mulligan (Eds.), Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society (pp. 519–524). Association for Computing Machinery. https://doi.org/10.1145/3461702.3462552

Gudiño Paredes, S., Jasso Peña, F. D., & de La Fuente Alcazar, J. M. (2021). Remote proctored exams: Integrity assurance in online education? Distance Education, 42, 200–218. https://doi.org/10.1080/01587919.2021.1910495

Herzog, C., Leinweber, N. A., Engelhard, S. A., & Engelhard, L. H. (2022). Autonomous Ferries and Cargo Ships: Discovering Ethical Issues via a Challenge-Based Learning Approach in Higher Education. IEEE International Symposium on Technology and Society (ISTAS), 2022, 1–6. https://doi.org/10.1109/ISTAS55053.2022.10227124

Holmes, W., & Tuomi, I. (2022). State of the art and practice in AI in education. European Journal of Education, 57, 542–570. https://doi.org/10.1111/ejed.12533

Holmes, W., Porayska-Pomsta, K., Holstein, K., Sutherland, E., Baker, T., Shum, S. B., Santos, O. C., Rodrigo, M. T., Cukurova, M., Bittencourt, I. I., & Koedinger, K. R. (2021). Ethics of AI in Education: Towards a Community-Wide Framework. International Journal of Artificial Intelligence in Education. https://doi.org/10.1007/s40593-021-00239-1

Holmes, W., Bektik, D., Whitelock, D., & Woolf, B. (2018). Ethics in AIED: Who cares? [Workshop]. 19th International Conference on Artificial Intelligence in Education (AIED’18). London. Retrieved January 19, 2022, from https://oro.open.ac.uk/53443/1/AIED_2018_paper_14%20%283%29.pdf

Hood, D., Lemaignan, S., & Dillenbourg, P. (2015). When Children Teach a Robot to Write: An Autonomous Teachable Humanoid Which Uses Simulated Handwriting. In J. Adams, W. Smart, B. Mutlu, L. Takayama (Eds.), ACM/IEEE International Conference on Human-Robot Interaction (pp. 83–90). Association for Computing Machinery. https://doi.org/10.1145/2696454.2696479

Hudlicka, E. (2016). Virtual affective agents and therapeutic games. In D. D. Luxton (Ed.), Artificial Intelligence in Behavioral and Mental Health Care (pp. 81–115). Elsevier.

Illich, I. (1971). Deschooling society. Harper & Row.