Abstract

Schizophrenia is a severe psychiatric disorder affecting 21 million people worldwide. People with schizophrenia suffer from symptoms including psychosis and delusions, apathy, anhedonia, and cognitive deficits. Strikingly, schizophrenia is characterised by a learning paradox involving difficulties learning from rewarding events, whilst simultaneously ‘overlearning’ about irrelevant or neutral information. While dysfunction in dopaminergic signalling has long been linked to the pathophysiology of schizophrenia, a cohesive framework that accounts for this learning paradox remains elusive. Recently, there has been an explosion of new research investigating how dopamine contributes to reinforcement learning, which illustrates that midbrain dopamine contributes to reinforcement learning in complex ways not previously envisioned. These new data bring new possibilities for how dopamine signalling contributes to the symptomatology of schizophrenia. Building on recent work, we present a new neural framework for how we might envision specific dopamine circuits contributing to this learning paradox in schizophrenia in the context of models of reinforcement learning. Further, we discuss avenues of preclinical research with the use of cutting-edge neuroscience techniques where aspects of this model may be tested. Ultimately, it is hoped that this review will spur more research utilising specific reinforcement learning paradigms in preclinical models of schizophrenia, to reconcile seemingly disparate symptomatology and develop more efficient therapeutics.

Introduction

Moving towards a cohesive understanding of how differences in dopamine signalling in discrete circuits could contribute to the paradoxical symptoms of schizophrenia

Schizophrenia is a severe debilitating psychiatric disorder that affects 21 million people, with a prevalence of around 1% worldwide [1, 2]. People with schizophrenia experience an unemployment rate of ~80–90% [3, 4], and a 15–20 year shorter life expectancy compared to the general population [2, 5,6,7]. The disorder is characterised by a set of core features, including hallucinations and delusions (i.e., positive symptoms), apathy, anhedonia, avolition (i.e., negative symptoms), and cognitive deficits [8,9,10]. Reinforcement learning deficits in particular are strongly linked to the development of both positive and negative symptoms, and are often present in first episode and frank psychosis, and in populations at risk of developing psychosis [11,12,13,14,15]. Aberrant dopaminergic signalling has long been linked to the pathophysiology of schizophrenia [16,17,18,19,20,21]. Indeed, the primary mechanism of action of current atypical antipsychotics is contingent upon reducing activity at the D2 receptor [22]. Whilst these antipsychotics are somewhat effective in the treatment of positive symptoms of schizophrenia, they are accompanied by intolerable side effects [23], and cognitive impairments are often unaffected or worsened [24]. Therefore, there is an urgent need to develop our understanding of cognitive dysfunction in schizophrenia to guide alternative therapeutics [24].

The fact that current antipsychotic treatments targeting dopamine activity alleviate positive symptoms, but do not generally impact significantly on negative symptoms or cognitive impairments, illustrates the difficulties that we face in trying to develop a cohesive understanding of the neural basis of schizophrenia. The complexity of this disorder is also reflected in the learning paradox that we see in people with schizophrenia. Specifically, patients show an increase in learning about irrelevant information (correlated with positive symptoms), and a decrease in learning about reward-predictive information (correlated with negative/cognitive symptoms; [25,26,27,28,29,30]). While there has been recent interest in thinking about how nuanced changes in subcortical dopamine might contribute to schizophrenia symptomatology [27, 31], it is generally difficult to explain this dissociation within existing models of reinforcement learning. As a result, the field still lacks a coherent framework that can help account for this learning paradox seen in schizophrenia.

Recent research emerging from basic neuroscience may be able to help us to refine models of how changes in dopamine circuits could produce the learning paradox seen in schizophrenia. Specifically, this research has demonstrated that we can no longer explain phasic dopamine signalling as a homogenous signal that broadcasts salience or value of a current event [32,33,34,35,36,37,38,39,40,41,42,43]. It is now believed that dopamine signalling can function in qualitatively different ways in different neural circuits to produce learning in many different situations [35, 44], not previously envisioned by traditional theories of dopamine function [45,46,47,48]. From this emerges the exciting possibility that a change in the balance of inputs and outputs to the dopamine system could produce the paradoxical changes in learning seen in schizophrenia, without the need to appeal to other neurotransmitter systems or dissociative effects in subcortical and prefrontal areas. Accordingly, in this review, we make the case for how we might envision specific changes in particular dopamine circuits as contributing to the reinforcement-learning paradox seen in schizophrenia, building on recent works that have begun to conceptualise a more nuanced role for dopamine in schizophrenia [27, 49]. As such, this is a “call to action” to utilise cutting-edge basic neuroscience techniques in the context of reinforcement learning to investigate circuit-defined neural changes in preclinical models of schizophrenia. It is our hope that this will provide a new direction for developing therapeutics that target particular dopaminergic circuits to simultaneously alleviate positive and negative symptoms and cognitive deficits associated with schizophrenia.

The reinforcement-learning deficits and their neural correlates

The first studies that revealed deficits in reinforcement learning in people with schizophrenia demonstrated enhancements in learning about irrelevant stimuli, or neutral information, which healthy controls usually ignore. This is manifest by failures of latent inhibition, overshadowing, blocking, and learned irrelevance tasks. Indeed, these deficits have become characteristic of schizophrenia [50, 51]. These tasks all have unique associative bases [52, 53], and likely also involve attentional mechanisms [54,55,56]. However, what they have in common is that they all require the ability to filter out irrelevant information. For example, latent inhibition is the phenomenon whereby humans and other animals take longer to acquire a stimulus-reward association when the stimulus has previously been established as irrelevant by repeatedly presenting it alone (i.e., pre-exposure), which is thought to result in the development of a stimulus-no reward association [52, 57,58,59,60]. People with schizophrenia show faster rates of learning about pre-exposed stimuli and their associations with reward [61,62,63,64,65,66,67,68]. Importantly, clinical studies have shown that latent inhibition is also disrupted in otherwise healthy volunteers who score highly on measures of psychoticism and schizotypy [61, 63,64,65,66, 69], whilst medicated patients with schizophrenia show intact latent inhibition, indicating that this disruption is related to positive symptoms [62, 67, 70]. Moreover, the administration of haloperidol, a dopamine antagonist that treats psychotic symptoms in schizophrenia, has been shown to enhance latent inhibition in healthy participants [68].

Similarly, people with schizophrenia fail to show the blocking effect, a fundamental associative paradigm that involves both associative and attentional components [53,54,55, 71, 72]. Blocking involves first teaching subjects that a cue leads to reinforcement. Then, this predictive cue is paired with another, novel cue and the same reward. Here, blocking is evident when subjects do not learn about the novel cue, as it does not predict anything over and above the predictive cue and is deemed irrelevant [53, 54]. However, people experiencing acute, but not chronic, schizophrenia display deficits in blocking, characterised by enhancements in learning about the novel cue, during visual discrimination and spatial navigation tasks [73,74,75,76,77,78,79,80]. It was initially unclear whether the blocking deficit seen in patients was related to the negative or positive symptoms of the disorder. This was likely because task-related differences can change the dominant learning mechanism at play during blocking, resulting in more reliance either on a reinforcement-learning process [53] or on an attentional process [54]. Indeed, when the blocking task is accompanied by a general deficit in reinforcement learning in subjects with schizophrenia, poor blocking is associated with negative symptoms of the disorder [79, 80]. However, when the deficits in reinforcement learning are not present (due to changes in task structure or amounts of training), blocking is still impaired in people with schizophrenia, and is predominantly associated with positive symptoms of the disorder and an inability to modulate attention to the novel, blocked cue [74, 81]. This differentiates the blocking deficit in people with schizophrenia from that seen in latent inhibition, where a lack of latent inhibition in patients is attributed to disruptions in an associative process [52]. This makes sense as blocking and latent inhibition paradigms are dependent on different neural circuits (discussed below).
These data demonstrate that the enhancement of learning about irrelevant cues in schizophrenia (including blocking and latent inhibition) correlates with positive symptoms of the disorder [81, 82], where it is thought that delusions and hallucinations that characterise positive symptoms arise as patients try to make sense of these aberrant learning experiences [83, 84].
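The logic of the blocking design can be made concrete with a minimal simulation. The sketch below assumes a Rescorla-Wagner-style update in which all cues present on a trial share a single prediction error; this is a standard textbook formalisation chosen here for illustration, and the function name, learning rate (`alpha`), reward magnitude (`lam`), and trial counts are hypothetical choices rather than values taken from any study cited above.

```python
def rescorla_wagner(phases, alpha=0.2, lam=1.0):
    """Return associative strengths after training on rewarded trials.

    phases: list of (cues_present, n_trials) tuples; every trial is rewarded.
    alpha:  learning rate (illustrative value).
    lam:    magnitude of the reward (illustrative value).
    """
    strengths = {}
    for cues, n_trials in phases:
        for _ in range(n_trials):
            # The prediction is the summed strength of all cues present.
            prediction = sum(strengths.get(cue, 0.0) for cue in cues)
            # One shared error updates every cue present on the trial.
            error = lam - prediction
            for cue in cues:
                strengths[cue] = strengths.get(cue, 0.0) + alpha * error
    return strengths


# Blocking group: cue A is trained alone, then A and novel cue X together.
blocked = rescorla_wagner([(("A",), 50), (("A", "X"), 50)])
# Control group: A and X are only ever trained together.
control = rescorla_wagner([(("A", "X"), 50)])

print(f"X after blocking: {blocked['X']:.3f}")
print(f"X in control:     {control['X']:.3f}")
```

In this toy version, cue X acquires almost no strength in the blocking group because cue A already predicts the reward and leaves little error to drive learning, whereas X acquires roughly half of the available strength in the control group. A failure of blocking, as described above for acute schizophrenia, would correspond to X gaining strength despite the pretraining of A; the sketch does not attempt to model the attentional account [54].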

On the other hand, people with schizophrenia display a consistent reduction in learning about cues that are predictive of reinforcement, which is thought to be related to the negative/cognitive symptoms of schizophrenia. Specifically, studies have reported relatively intact learning in people with schizophrenia when one cue-outcome contingency is available [85,86,87,88,89,90,91,92,93,94,95,96]. However, deficits are evident when complexities are introduced. For example, schizophrenia patients display robust deficits in reinforcement learning during probabilistic selection tasks [14, 29, 97,98,99,100], designed to assess a participant’s ability to learn from positive and negative feedback with changing probabilities of reinforcement [29, 101]. Even when patients are given an excess number of trials to learn probabilistic reinforcement contingencies, they still exhibit learning deficits, suggesting that deficiencies are the result of impaired learning from more complex rewarding outcomes, and not simply the result of slower stimulus-response learning, or basic working memory deficits that are frequently found in schizophrenia [97, 98]. With regards to reward-paired cues, these findings reveal that people with schizophrenia fail to make distinctions between events that are motivationally significant (e.g., rewarding), and display decreased updating of stimulus-outcome associations in response to changing reinforcement contingencies [83, 102, 103].

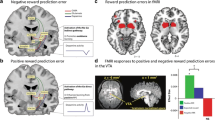

Collectively, these findings demonstrate a paradoxical deficit in schizophrenia: increases in learning about irrelevant stimuli, and a concomitant decrease in learning about reward-predictive information. Typically, these deficits are explained by changes in distinct neural circuits. For example, increases in learning about irrelevant stimuli are argued to result from increases in subcortical dopamine activity, supported by findings of elevated dopamine synthesis capacity in striatal regions specific to D2/3 receptors [104], which correlates with positive symptoms [31, 105]. On the other hand, reductions in learning about reward-predictive stimuli are often attributed to hypo-frontality, contributed to by a reduction in D1 receptor density, linked to negative symptoms and cognitive deficits [102, 106,107,108]. Studies using functional magnetic resonance imaging (fMRI) during reinforcement learning have corroborated this physiological evidence in some respects. For example, there is some evidence for hypo-frontality during learning in patients with schizophrenia [11, 82, 99, 109,110,111], though this does not appear to be the case for all frontal regions; while activity in some frontal regions is decreased, others show an increase in activity [112]. Further, fMRI data reveal that ventral striatal activity during reinforcement-learning tasks does not suggest an increase in dopamine function per se [82, 113]. Striatal activity is increased to irrelevant or neutral information and decreased to reward-predictive information [82]. Whilst this makes sense from a functional perspective of the schizophrenia learning paradox, it is difficult to reconcile with a subcortical notion of hyperdopaminergic signalling, which would predict increased learning to both neutral and reward-paired cues.
In the following sections, we will make the case that both positive and negative symptoms, and their corresponding deficits in reinforcement learning, instead fit within a cohesive model of dysfunction within specific dopaminergic circuits.

Dopamine: a complex system subserving many different forms of reinforcement learning

To drive learning, dopamine neurons in the ventral tegmental area (VTA) exhibit a phasic error signal when an unexpected event has occurred [46, 114,115,116]. That is, dopamine neurons exhibit a signal that reflects the difference between what you thought was going to happen, and what happened in reality [46, 117]. This effectively instructs the brain to learn and update current expectations. Traditionally, this signal was only thought to contribute to what is referred to in the field as “model-free” learning [46]. This means that dopamine errors only instruct learning about something that has value, like food or money, allowing that numerical or scalar value to backpropagate to an antecedent cue [46]. However, recent studies have shown that this dopamine error acts more like a teaching signal to instruct humans and other animals to associate events together (e.g., stimulus-reward or stimulus-stimulus associations), regardless of whether either of those events contain something valuable or rewarding, and without endowing those events with value [33,34,35, 39,40,41,42, 118]. Further, dopamine errors in both humans and rodents contain information about predicted rewards [42], suggesting this signal serves to instruct neural regions on what to learn about, as well as when to learn [35]. This demonstrates that the dopamine prediction error does not act as a homogenous signal that broadcasts the value or salience of an event (or even allocations of lasting attention to a stimulus [43]), but as a teaching signal that is received throughout the brain to drive learnt associations that two constructs in the world are related (Fig. 1).
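To illustrate how an error signal could drive learning about events that carry no value, the sketch below treats the prediction error as a vector over the features of the upcoming event instead of a single scalar value. This is a deliberate simplification for illustration only; the two-feature representation, the function name `update_prediction`, and the learning rate are all assumptions and are not drawn from the studies cited above.

```python
def update_prediction(predicted, observed, alpha=0.3):
    """Move a feature-wise prediction towards what was actually observed.

    predicted, observed: lists of feature values for the upcoming event.
    alpha: learning rate (illustrative value).
    Returns the updated prediction and the feature-wise prediction error.
    """
    # Error = what happened minus what was expected, per feature.
    error = [obs - pred for pred, obs in zip(predicted, observed)]
    new_prediction = [pred + alpha * err for pred, err in zip(predicted, error)]
    return new_prediction, error


# Hypothetical features of the event that follows cue A:
# [neutral stimulus B present, reward delivered]
prediction = [0.0, 0.0]
observed = [1.0, 0.0]  # B always follows A; reward never does

for _ in range(20):
    prediction, error = update_prediction(prediction, observed)

print(f"Expectation of B after A:      {prediction[0]:.3f}")
print(f"Expectation of reward after A: {prediction[1]:.3f}")
```

In this toy case, the expectation that B follows A grows towards 1 while the expectation of reward stays at 0, so an association between two neutral stimuli is acquired without either stimulus acquiring value. A purely scalar, value-based error would remain at zero throughout and could not support this form of learning.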

In the adult brain, dopaminergic neurons exist as a heterogenous group of cells localised predominately in the VTA and substantia nigra [190, 204, 205]. From these areas, dopaminergic projections arising from the VTA extend to limbic (mesolimbic) and cortical (mesocortical) regions, and from the substantia nigra to striatal (nigrostriatal) regions of the brain [206]. Given the overlap in common projections between the dopaminergic mesolimbic and mesocortical pathways, these two systems are often referred to collectively as the mesocorticolimbic pathway [204] (Fig. 1). Mesolimbic and mesocortical pathways originating in the VTA send dopamine projections to the NAc and olfactory tubercle, and to limbic regions including the amygdala, hippocampus and frontal cortices through the medial forebrain bundle [207,208,209]. The VTA also sends and receives extensive reciprocal innervations from these same brain areas, as well as many other areas including the lateral hypothalamus, lateral habenula, dorsal raphe nucleus and periaqueductal grey [207,208,209]. In essence, dopamine neurons in the midbrain are densely connected with the rest of the brain, where dopamine signalling is heavily influenced by descending projections, and in turn heavily influences processing in these regions to drive associative learning [34, 35, 39, 40, 44, 47, 118, 146, 210, 211]. Abbreviations: Amyg Amygdala, DRN Dorsal Raphe Nucleus, LDTg laterodorsal tegmentum nucleus, LH Lateral Hypothalamus, LHb Lateral Habenula, NAc Nucleus Accumbens, mPFC Medial Prefrontal Cortex, PAG Periaqueductal Grey, RMTg Rostromedial Mesopontine Tegmental Nucleus, VP Ventral Pallidum, VTA Ventral Tegmental Area.

The evidence in favour of phasic dopamine acting as an instructive teaching signal to stamp in complex associations between events or actions is two-fold. The first line of evidence comes from findings using optogenetic manipulation of VTA dopamine neurons during reinforcement learning [34, 39, 40]. Such studies have shown that stimulation of VTA dopamine neurons as a prediction error can facilitate the development of associations between predictive stimuli and their specific outcomes [34], and between two neutral sensory stimuli [39, 40], without endowing those antecedent stimuli with value [40]. Further, optogenetic inhibition designed to silence the dopamine prediction error across the transition between two neutral sensory stimuli reduces the association between such stimuli [39], suggesting a physiological role for dopamine in the development of these associations. The second line of evidence supporting a role for dopamine in instructing learned associations comes from recordings of neural activity in VTA (or nucleus accumbens) of mice, rats, and humans [42, 119, 120]. For instance, patterns of firing across ensembles of midbrain dopamine neurons have been shown to contain identity information of sensory prediction errors [42]. In addition, exciting new research has reported the presence of wave-like spatiotemporal dopamine dynamics in the dorsal striatum, with the propagation of specific wave trajectories (i.e., whether waves propagated from medial to lateral dorsal striatum, or lateral to medial) dependent on the demands of a learning task [119]. Both these findings suggest that spatiotemporal differences in dopamine signals may determine the timing and strength of subcircuit teaching signals, which ultimately defines what and when to learn.
Consistent with this, regional differences in phasic dopamine responses within the striatum have been observed in response to novel cues [121], and predicted and unpredicted rewards [122, 123], with often opposing dopamine dynamics dependent on temporal aspects of the task and subjective experience. Together, this research demonstrates that the dopamine prediction error is not only capable of facilitating the development of specific sensory associations, but also that the information needed to learn these associations is present in the dopamine error itself, before it is received by the downstream circuit.

In this light, the paradoxical learning dichotomy seen in schizophrenia cannot be explained by a general increase in subcortical dopamine, nor an aberrant salience account, even with dissociable changes in the prefrontal cortex. This is because both these models would predict that deficits or enhancements in learning should occur in the same direction. For example, if people with schizophrenia exhibit a general increase in subcortical dopamine, then one would expect that learning about irrelevant or neutral cues, and reward-paired cues, would both be enhanced (or deficient) when this signal is altered, which is not observed in the clinical population. And in the aberrant salience model, it is hypothesised that chaotic dopamine firing leads to attribution of significance to stimuli that would otherwise be considered irrelevant [124,125,126]. However, the same chaotic firing should also enhance other aspects of learning at random, including learning from rewards. Maia and Frank attempted to resolve this by developing a model that described the subcortical dopaminergic changes occurring in schizophrenia as similar to what occurs with amphetamine [27]. Specifically, in this model, increases in dopamine could occur spontaneously, enhancing sporadic associations that are linked to positive symptoms, while the dopamine response to relevant information is blunted, which reduces learning about reward-paired cues. However, in light of the new data implicating the dopamine prediction error in learning about both neutral and reward-paired information, we would take this one step further. Specifically, we would argue that distinct dopamine circuits could encode learning about neutral information, and others could encode reward-related information, which is supported by emerging data [127, 128], and that the balance of these circuits could be changed in schizophrenia.
Essentially, we believe it is likely that different circuits and brain regions utilise the dopamine prediction error as a qualitative teaching signal in specific ways, and that circuit-specific dysfunction in schizophrenia alters how the dopamine signal is received and interpreted, and ultimately the content and direction of what is learned. This would also be consistent with theories that propose more nuanced circuit-specific changes in dopamine function in schizophrenia could underpin the disparate symptomology observed in the disorder [31].

We would advocate for taking an approach that makes predictions from the reinforcement-learning deficits seen in schizophrenia as to the nature of the circuit-specific changes in dopamine circuits. The reasons for this are two-fold: (1) implicating circuit-specific changes in patients is hard to do in clinical research, owing to the lack of invasive techniques for probing biological characteristics in humans, and (2) we could then make predictions as to how manipulation of particular circuits in rodent models of schizophrenia could restore normal learning, with an ultimate goal of developing more targeted therapeutic compounds for the treatment of the disorder in humans. With that view in mind, we now know that dopamine signalling is both necessary and sufficient to drive associative learning between contiguous events, whether valuable or not [34, 38, 40, 43, 44, 118]. So, a model of the learning paradox in schizophrenia cannot assume a role for subcortical dopamine in learning about reward-paired information and not neutral information. Within this, we also know that different areas of the brain that interact with the dopaminergic circuits regulate learning about irrelevant, neutral, and rewarding information, respectively. For example, the prelimbic cortex of the rat, considered to be analogous to the dorsolateral prefrontal cortex (DLPFC) [129,130,131,132,133], regulates the allocation of attention to cues, facilitating performance in learned irrelevance tasks, overshadowing, and blocking [55, 56, 72]. Here, a reduction in prelimbic function in rodents (via lesion, functional inactivation, or dopamine depletion) produces deficits in attentional set shifting [134,135,136,137], blocking [55, 132, 138, 139], and overshadowing [55, 56], similar to what is reported in schizophrenia [81, 82, 87, 89,90,91, 140,141,142].
Indeed, people with schizophrenia show a reduction in DLPFC function during attentional set shifting and learned irrelevance [142,143,144], particularly those experiencing first-episode psychosis [145].

On the other hand, the orbitofrontal cortex is important for learning about the general structure of the environment, value-based decision-making, and goal-directed behaviour [33, 146,147,148,149,150,151,152,153,154,155,156,157,158]. This includes sensory-sensory associations, which extends to learning about associations between neutral stimuli [127]. The OFC is a functionally heterogenous structure divided into three prominent divisions: the medial orbital (MO), ventral orbital (VO), and lateral orbital (LO) cortices (with the LO overlapping with portions of insular cortex that share similar projection profiles, consistent with the human orbitofrontal cortex; e.g., [156, 159]). All three of these regions exhibit differential projection profiles and are thought to underlie distinct reinforcement-learning processes [159, 160]. In terms of relevance for our model, the encoding of sensory-sensory associations has been attributed to the LO. For instance, we recently found that optogenetic inactivation of LO reduced learning to associate neutral cues pairs [127], in a task very similar to that recently found to be disrupted in people experiencing hallucinations, including those with psychosis [28]. Further, reducing LO activity through lesions or inactivation in rodents also leads to an enhancement of latent inhibition [161, 162]. Such research demonstrates that OFC contributes in important ways to learning about neutral information, and that increases in OFC activity could produce an enhancement in learning about neutral information, and deficits in latent inhibition. Importantly, this has been supported by some imaging studies suggesting larger OFC volumes in people with schizophrenia [163, 164], and increases in OFC activity during reinforcement learning [112]. 
Finally, delusional ideation in healthy individuals has been associated with enhanced connectivity between the lateral OFC and visual cortex [165], where enhancements in updating beliefs about ambiguous neutral stimuli are correlated with the severity of positive symptoms in people with schizophrenia [166]. These findings demonstrate that overactivity in OFC circuits can enhance spurious associations about neutral stimuli, resulting in a bias towards prior experiences more heavily influencing future learning episodes, which may contribute to hallucinations and delusions. However, despite the extensive work looking at DLPFC and schizophrenia, there are fewer studies looking at OFC activity (and even fewer that dissect differential OFC subregions) in schizophrenia, particularly in the context of reinforcement learning, which makes it difficult to draw concrete conclusions about the nature of OFC changes in schizophrenia.

In terms of learning about rewards, recent data have implicated the lateral hypothalamus as a novel structure that is critical to reward learning, and to opposing learning about neutral or irrelevant information. The lateral hypothalamus has long been implicated in responding to rewards [167,168,169,170,171,172,173,174,175,176], and recently this has been extended to biasing learning towards the predictors of rewards [128, 175]. For instance, optogenetic inhibition of GABAergic neurons in the lateral hypothalamus decreases learning of reward-predictive cues, whilst enhancing associations formed between neutral cues and abolishing latent inhibition [128, 175]. Importantly, the lateral hypothalamus is a very diverse region and contains many other neuronal populations that have been implicated in motivated behaviour [177,178,179,180,181,182,183,184]. Thus, it is likely that many neuronal populations within the lateral hypothalamus contribute to these effects on learning. It may be that the GABAergic neurons receive information from the many distinct populations within the lateral hypothalamus, which is then relayed to other neural structures via the dense projections that GABAergic neurons in this region send throughout the brain, to influence ongoing learning and behaviour [175]. These data implicate the lateral hypothalamus, and GABAergic neurons in particular, as a critical arbitrator of learning about reward-predictive information and neutral information, and are strikingly similar to the paradoxical deficits we see in schizophrenia [27, 29, 30, 81, 113]. That is, changes in hypothalamic activity in people with schizophrenia have the capacity to change the balance in learning about reward-paired and neutral stimuli, producing both sides of the reinforcement learning deficits of the disorder.
However, there are very few imaging studies looking at hypothalamic changes in schizophrenia, and those that have looked at hypothalamus in people with schizophrenia have elicited mixed results [185,186,187]. This is likely related to the difficulty in imaging this structure, requiring manual quantification [188, 189]. Given there are few studies investigating the potential for hypothalamic dysfunction in schizophrenia, and no studies that have looked at lateral hypothalamic dysfunction in the context of the reinforcement-learning deficits seen in schizophrenia, this is a particularly promising direction for future research.

So then, how might we reconcile these region-specific roles in the reinforcement learning deficit seen in schizophrenia to put forward a new theoretical framework? The circuits comprising dopamine neurons and the prelimbic cortex, orbitofrontal cortex, and lateral hypothalamus are complex [72, 131, 175, 176, 190]. However, from a functional perspective, a particularly interesting locus for parallel and/or competitive interactions between these systems could be the nucleus accumbens in ventral striatum. The nucleus accumbens receives dense projections from midbrain dopamine neurons, and is generally considered a proxy for midbrain dopamine activity [170, 191,192,193,194,195,196]. Indeed, most of the imaging data in reinforcement-learning studies in the schizophrenia literature look at activity in ventral striatum [82, 83, 113] (but see [31] for evidence in changes in the dorsal striatum).

Importantly, prelimbic cortex, orbitofrontal cortex, and lateral hypothalamus all send projections to different areas of the accumbens [175, 197, 198], and the areas of the nucleus accumbens that receive these projections appear to be functionally distinct [162, 199,200,201]. For example, the prelimbic cortex sends dense projections to the medial portions of the nucleus accumbens core (and to a lesser extent, shell) [198]. This same portion of the nucleus accumbens has been implicated in the attentional mechanisms of the blocking and overshadowing effects, which are also dependent on the prelimbic cortex [55, 56, 132, 138, 139, 202, 203]. The orbitofrontal cortex projects to the nucleus accumbens core, though a more lateral region from that receiving projections from prelimbic cortex [198]. A reduction in activity in this portion of the ventral striatum produces enhanced latent inhibition [162, 200], similarly to a loss of function in OFC [161, 162]. Further, neurons in nucleus accumbens core also reflect learning of neutral associations [187], where this form of learning is known to be dependent on OFC function also [127]. Finally, GABAergic neurons in the lateral hypothalamus project to yet another region of the ventral striatum, the shell of the nucleus accumbens, critical for responding to specific cue-reward associations and latent inhibition [200]. Thus, prelimbic, orbitofrontal, and lateral hypothalamic areas all project to distinct regions of the nucleus accumbens that appear to mirror the roles in learning subserved by these afferent structures.

To put forward a new framework, then, it may be the case that the prediction error from VTA dopamine neurons terminating in the nucleus accumbens serves to facilitate learned associations in the form of synaptic plasticity [205, 212, 213], and that these sites receive a form of top-down modulation from prelimbic, orbitofrontal, and lateral hypothalamic afferents. These top-down afferents could compete to influence the balance of learning between predictive, neutral, and irrelevant information. In reference to schizophrenia, it may be that these circuits are differentially weighted, such that the circuits comprising orbitofrontal cortex and ventral striatum are overweighted relative to prelimbic and hypothalamic striatal circuits, which would produce an increase in learning about neutral and irrelevant information, while reducing learning about reward-predictive cues. This could occur in at least two ways: (1) the inputs from the orbitofrontal, prelimbic, or lateral hypothalamic region onto ventral striatum could be changed, or (2) the bottom-up inputs from ventral tegmental area onto different portions of ventral striatum could be differentially changed (Fig. 2). Overall, this view would be in line with clinical evidence suggesting that dopamine receptor blockers, whilst somewhat effective against positive symptoms, offer little to ameliorate (and can even exacerbate) negative symptoms [214]. Specifically, building on recent models [27], this could be because dopamine receptor antagonists exacerbate the already attenuated phasic dopamine responses in the ventral striatum that underpin reward learning and are facilitated by prelimbic cortex and hypothalamic inputs. On the other hand, these antagonists would be effective in reducing phasic dopamine responses in overactive circuits comprising OFC that contribute to the increase in learning about neutral information, which are theorised to underlie positive symptoms.
In the section below, we discuss the research that is needed to investigate these possibilities. If our hypothesis is supported by future work, this would mean that pharmacotherapies targeting dopamine need to be designed to modulate dopamine differentially in distinct circuits in order to combat both positive and negative symptoms of the disorder (but see promising effects of deep brain stimulation in dopamine circuits, which may influence dopamine circuits through multiple mechanisms; [215]).
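To make the logic of differentially weighted circuits concrete, the following is a minimal toy sketch, not a model fitted to data and not an implementation taken from the literature cited here. It assumes a simple error-driven update (in the spirit of Rescorla and Wagner) in which a shared prediction error is scaled by a hypothetical top-down gain. The class `CircuitGains`, the functions `train` and `simulate`, and all numerical values (learning rate, gains, number of trials) are illustrative assumptions only.

```python
from dataclasses import dataclass


@dataclass
class CircuitGains:
    """Hypothetical top-down gains on accumbens learning (illustrative values only)."""
    reward_predictive: float  # stands in for prelimbic / lateral hypothalamic input
    neutral: float            # stands in for orbitofrontal input


def train(gain, outcome=1.0, alpha=0.2, n_trials=10):
    """Return associative strength after n_trials of error-driven learning."""
    v = 0.0
    for _ in range(n_trials):
        prediction_error = outcome - v        # shared teaching signal
        v += alpha * gain * prediction_error  # gain scales how strongly the error is used
    return v


def simulate(gains):
    """Learn one reward-predictive and one neutral association under the given gains."""
    return {
        "reward_predictive": train(gains.reward_predictive),
        "neutral": train(gains.neutral),
    }


# Balanced circuits versus a hypothesised schizophrenia-like imbalance.
balanced = simulate(CircuitGains(reward_predictive=1.0, neutral=1.0))
imbalanced = simulate(CircuitGains(reward_predictive=0.5, neutral=1.5))

if __name__ == "__main__":
    print("balanced:  ", balanced)
    print("imbalanced:", imbalanced)
```

Under these assumed gains, the same prediction error yields faster acquisition of the neutral association and slower acquisition of the reward-predictive association, which is the qualitative pattern the framework aims to capture. The sketch treats possibility (1) and possibility (2) identically, since either a change in top-down input or a change in bottom-up dopamine input would appear here as a change in gain.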

People with schizophrenia show a learning paradox, characterised by increases in learning about neutral or irrelevant information, and a decrease in learning about reward-predictive information. This is associated with an increase in activity in ventral striatum to neutral or irrelevant information, and a decrease in activity to reward-predictive information [27, 29, 30, 81, 113]. One way to account for this deficit is to hypothesise that the inputs to the ventral striatum that regulate the balance between learning about reward-predictive, neutral, and irrelevant information are changed. Specifically, we would argue that the paradox seen in schizophrenia is consistent with a strengthening of orbitofrontal inputs to the ventral striatum, and a decrease in the inputs from prelimbic and lateral hypothalamic circuits. The feasibility of such a model is supported by the separable nature of afferents coming from these regions to ventral striatum [156, 159, 175, 197, 198]. Further research is necessary to test the validity of this model using preclinical studies in the context of reinforcement learning.

A “call to action”: the need for studies using preclinical models of schizophrenia in the context of reinforcement learning

Preclinical models of schizophrenia are particularly useful for studying the neural underpinnings of particular reinforcement-learning tasks and how a change in particular circuits could produce learning deficits that mirror what we see in this disorder. Indeed, in the 1980s and 1990s there was a concerted effort to use reinforcement-learning tasks like latent inhibition and blocking in tandem with drugs that target dopamine systems to mimic the deficits seen in schizophrenia [51, 57,58,59, 216, 217]. This literature served as a cornerstone for understanding the neural bases of the early deficits seen in reinforcement learning in schizophrenia, and these studies were some of the first to provide support for the dopamine hypothesis of schizophrenia [58, 217]. However, in the last few decades there has been a shift in the field away from using preclinical models in the context of reinforcement learning, towards assays that assess emotionality-related behaviours, such as those concerned with anxiety-like phenotypes and learned helplessness or behavioural despair, and hyperactivity and sensitivity to psychotomimetic drugs [218,219,220]. Those studies that do assess learning-related phenomena typically implement behavioural assays specific to working, reference and spatial memory (e.g., Cheeseboard, Morris Water, Radial Arm, T- and Y-mazes), many of which do not map onto tasks used in clinical populations. In Fig. 3, we present a breakdown of paradigms used in the field, sampling over 3000 preclinical studies using rodent models of schizophrenia. This summary gives a general representation of the current state of the field (see Notes). It clearly shows that reinforcement-learning tasks, which can be directly replicated in humans and are closely related to both the positive and negative/cognitive symptoms of schizophrenia, lag behind tasks that assess basic emotional reactivity.

The sensorimotor assay described as the prepulse inhibition test makes up the single most cited test in preclinical schizophrenia research. Other behavioural assays include locomotor activity, and emotionality-related behaviour in the open field test, elevated plus maze, forced swim test, sucrose preference test, tail suspension test and foot shock aversion test. Working, spatial and reference memory contribute to the majority of behavioural assays designed to test memory that are cited by preclinical research, with the Morris water maze, cheeseboard maze, radial arm maze, T maze, Y maze and novel object recognition the most commonly cited. Reinforcement-learning tasks, which make the most contact with the clinical literature, comprise only 12% of citations, which includes latent inhibition, reversal learning, reinforcement learning and intradimensional/extradimensional set-shift tasks that have been directly tested in people with schizophrenia.

Another challenge facing the literature is that it is difficult to find a preclinical model of schizophrenia that exhibits changes consistent with both the positive and negative/cognitive symptoms of schizophrenia. For example, most preclinical models of schizophrenia in the early years were based on dopamine agonists, like amphetamine, or glutamate (NMDA) antagonists, like PCP and MK-801 [221,222,223,224]. Studies using manipulations that enhance dopamine signalling found increases in attention or learning about irrelevant information [217, 225,226,227,228,229,230,231]. On the other hand, those reducing glutamate signalling tended to find changes consistent with negative/cognitive symptoms, including deficits in reversal learning [232,233,234,235], associative fear learning [236] and working memory [237,238,239]. However, the advent of genetic neuroscience has the potential to provide models that more closely mimic the human disorder, which may prove to show deficits consistent with both sides of the learning paradox seen in people with schizophrenia. For example, there is now a rodent model of the 22q11.2 deletion syndrome, considered to be the strongest copy number variant occurring in the human population that is directly linked to schizophrenia, accounting for 1 in 100 cases [240]. People with the 22q11.2 deletion show significant deficits in learning consistent with schizophrenia, including those consistent with changes in predictive coding, working memory, reward processing, and pre-pulse inhibition, some of which have now been replicated in the 22q11.2 rodent model [241, 242]. While it is early days, models like the 22q11.2 deletion could be used in combination with emerging tools that can exert specific control over neuronal populations to test some of the predictions outlined in our framework (Fig. 2), discussed below.

New tools and approaches for investigating schizophrenia

Pharmacological studies have been invaluable in schizophrenia research. However, they are limited by the fact that pharmacological agents alter dopamine signalling over extended timescales and, as a result, cannot be linked directly to specific patterns of neuronal activity [118, 243, 244]. In contrast, the optogenetic revolution now affords manipulation of particular neuronal populations and their projections to distinct regions of the brain with millisecond temporal resolution [243, 244]. The importance of this development cannot be overstated. For example, the use of transgenic rodents expressing Cre recombinase under the control of the tyrosine hydroxylase (TH-Cre+) or glutamate decarboxylase (GAD-Cre+) promoter allows for the Cre-dependent expression of opsins such as channelrhodopsin-2 (ChR2) or halorhodopsin (NpHR) in dopamine or GABA neurons, respectively [175, 211, 245]. The expression of ChR2 and NpHR in these rats makes it possible to activate and inhibit, respectively, dopamine or GABA neurons with millisecond resolution in behaving rodents [118, 243, 244, 246, 247]. Importantly, opsins expressed in a Cre-dependent manner in the cell body are also trafficked in the anterograde direction along the axon, reaching the terminals in the regions to which those neurons project [243]. This allows inhibition of downstream terminals while leaving upstream neuronal cell bodies intact (Fig. 4) [245]. Cell-type specific optogenetics is a particularly useful tool in the context of schizophrenia, as it allows for targeting of particular dopaminergic circuits that underlie different aspects of reinforcement learning, which could elucidate the ways in which these circuits are likely to be affected in the disorder.
Whilst there are technical challenges yet to be overcome before optogenetics can be applied for therapeutic purposes in the clinic [248], advances in tracing neural connections in humans (i.e., diffusion tensor imaging; [249]) provide a parallel in which disrupted circuits identified in the clinic can be probed using optogenetics in animal models. For instance, endophenotypes commonly observed in schizophrenia patients could be precisely replicated in rodents, and tested to determine if aberrant changes in neural circuits play a causal role in specific symptoms [250, 251]. These changes could then be used as biomarkers in preclinical drug screening to determine the efficacy of novel therapeutics, which could rely on genetic approaches more amenable to clinical trials, like Designer Receptors Exclusively Activated by Designer Drugs (DREADDs) [250,251,252].

The advent of optical methods for manipulating and visualising cell-type specific neuronal activity has revolutionised behavioural neuroscience and provides a critical means with which to record and manipulate activity in specific dopamine circuits. This paves the way to investigate (1) how different dopamine circuits contribute in unique ways to associative learning, and (2) how specific dopamine circuits in rodent models of schizophrenia may be altered, contributing to the learning paradox seen in the disorder. For instance, one way to test the hypothesis that the inputs to NAcc from the prelimbic cortex are weakened in schizophrenia would be to inhibit prelimbic terminals in NAcc and determine the resultant effect on attention-related processes. One could then compare the phenotype produced by such a manipulation to that of schizophrenia to elucidate the neural substrates underpinning learning deficits. Abbreviations: LH Lateral Hypothalamus, NAcc Nucleus Accumbens, OFC Orbital Frontal Cortex, PL Prelimbic Cortex, VTA Ventral Tegmental Area.

Similar optical approaches can be used to image activity in particular neuronal populations, or to image the release of particular neuromodulatory chemicals in vivo at high spatiotemporal resolution [245]. For example, fibre photometry, or single-photon imaging using miniscopes, can be used to image calcium activity in dopamine neurons. Specifically, injection of a Cre-dependent AAV carrying the calcium sensor GCaMP into a TH-Cre rat can facilitate imaging of activity in dopamine neurons [245, 253]. Further, the very recent development of several genetically encoded dopamine sensors [e.g., dLight, G-protein-coupled receptor-activation-based (GRAB) DA] enables rapid optical recording of dopamine dynamics without the use of transgenic rodents [254,255,256]. This may be particularly advantageous for investigating changes in dopamine signalling seen in genetic models of schizophrenia that are not usually founded on a Cre line and are therefore not as easily used in combination with Cre-dependent optogenetic manipulation and calcium imaging of dopamine neurons. While dopamine sensors do not facilitate imaging of projection-specific dopamine release itself, they can be used to detect physiologically relevant dopamine transients caused by optogenetic manipulation of particular terminals, or adjacent to terminals that have been tagged by viral vectors [254]. These techniques constitute a veritable arsenal for investigating how activity in dopaminergic circuits influences reinforcement learning, and how these dynamics may be changed in preclinical models of schizophrenia.

Conclusions and remaining questions

Recent evidence has changed the way we think about the dopamine prediction error from a functional perspective [33, 34, 39,40,41,42,43, 118, 257]. We now know that the dopamine error acts as a teaching signal to instruct the development of learned associations in many different contexts not traditionally envisioned by theories of dopamine function, including learning about neutral information. Accordingly, we may now be able to garner a better understanding of how dysfunction in specific dopamine circuits could contribute to the reinforcement learning paradox seen in people with schizophrenia. Here, we argue that this paradox can be accounted for by an imbalance in the circuits that utilise the dopamine prediction error signal in the nucleus accumbens to stamp in distinct learned associations. We hypothesise that circuits comprising orbitofrontal modulation of nucleus accumbens are strengthened, heightening the development of associations between neutral information. On the other hand, we argue that prelimbic and hypothalamic inputs to the nucleus accumbens are compromised relative to orbitofrontal inputs, reducing the ability of people with schizophrenia to modulate attention and learning towards stimuli in the environment that are the best predictors of outcomes. There are many questions that remain for this theoretical model. For example, in the current manuscript, we have focussed on the potential contribution of phasic dopamine signals to the deficits in schizophrenia. However, levels of tonic dopamine play integral roles in general motivation and decision making, and are in some cases distinct from (or modulatory of) the phasic error signal [258,259,260]. Thus, future discussions of how particular dopamine circuits contribute to the reinforcement-learning deficits seen in schizophrenia should include discussion of potential changes in tonic dopamine function.
In addition, there is evidence in schizophrenia for dysfunction in brain regions other than those discussed here. For example, studies have shown that function is compromised in the ventromedial prefrontal cortex of people with schizophrenia (vmPFC; considered analogous to infralimbic cortex in the rat [131, 132, 261]). This region also sends dense projections to the nucleus accumbens shell [198], and plays important roles in reinforcement learning, which may suggest an integral role in the deficits seen in schizophrenia and in the framework presented here. Recent technological advances have granted new avenues for investigating cell-type specific activity in cell bodies and their projections. By using these techniques, we can now explore how projection-specific activity contributes to learning deficits seen in animal models of schizophrenia. Importantly, these techniques will help identify circuit-specific biomarkers of the disorder not previously envisioned by current models, which could be used in preclinical drug screening. Ultimately, this approach could pave the way for novel therapeutics targeting dopamine activity that could prove efficacious for both positive and negative/cognitive symptoms.

Notes

(A Web of Science indexed search was performed using the following keywords: “Schizophrenia AND/OR Open Field Test, Elevated Plus Maze, Forced Swim Test, Morris Water Maze, Cheeseboard Maze, Radial Arm Maze, T Maze, Y Maze, PPI, Sucrose Preference Test, Tail Suspension Test, Novel Object Recognition, Foot Shock, ID/ED, Latent Inhibition, Reversal Learning, Conditioned Place Preference, Reinforcement Learning”. Keywords “Rat OR Mice” were used to exclude clinical studies. Only original manuscripts were considered; reviews were excluded from the search).

References

McGrath J, Saha S, Chant D, Welham J. Schizophrenia: a concise overview of incidence, prevalence, and mortality. Epidemiol Rev. 2008;30:67–76.

World Health Organization. Schizophrenia - WHO. 2018. https://www.who.int/news-room/fact-sheets/detail/schizophrenia. Accessed September 2019.

Carmona VR, Gómez-Benito J, Huedo-Medina TB, Rojo JE. Employment outcomes for people with schizophrenia spectrum disorder: a meta-analysis of randomized controlled trials. Int J Occup Med Environ Health. 2017;30:345–66.

Evensen S, Wisløff T, Lystad JU, Bull H, Ueland T, Falkum E. Prevalence, employment rate, and cost of schizophrenia in a high-income welfare society: a population-based study using comprehensive health and welfare registers. Schizophr Bull. 2016;42:476–83.

Lee EE, Liu J, Tu X, Palmer BW, Eyler LT, Jeste DV. A widening longevity gap between people with schizophrenia and general population: a literature review and call for action. Schizophr Res. 2018;196:9–13.

Oakley P, Kisely S, Baxter A, Harris M, Desoe J, Dziouba A, et al. Increased mortality among people with schizophrenia and other non-affective psychotic disorders in the community: a systematic review and meta-analysis. J Psychiatr Res. 2018;102:245–53.

Saha S, Chant D, McGrath J. A systematic review of mortality in schizophrenia: is the differential mortality gap worsening over time? Arch Gen Psychiatry. 2007;64:1123–31.

American Psychiatric Association. Diagnostic and statistical manual of mental disorders (DSM-5®). American Psychiatric Pub; 2013. https://doi.org/10.1176/appi.books.9780890425596

Owen MJ, Sawa A, Mortensen PB. Schizophrenia. Lancet Lond. 2016;388:86–97.

Patel KR, Cherian J, Gohil K, Atkinson D. Schizophrenia: overview and treatment options. Pharm Ther. 2014;39:638–45.

Ermakova AO, Knolle F, Justicia A, Bullmore ET, Jones PB, Robbins TW, et al. Abnormal reward prediction-error signalling in antipsychotic naive individuals with first-episode psychosis or clinical risk for psychosis. Neuropsychopharmacology 2018;43:1691–9.

Montagnese M, Knolle F, Haarsma J, Griffin JD, Richards A, Vertes PE, et al. Reinforcement learning as an intermediate phenotype in psychosis? Deficits sensitive to illness stage but not associated with polygenic risk of schizophrenia in the general population. Schizophr Res. 2020;222:389–96.

Murray GK, Cheng F, Clark L, Barnett JH, Blackwell AD, Fletcher PC, et al. Reinforcement and reversal learning in first-episode psychosis. Schizophr Bull. 2008;34:848–55.

Strauss GP, Frank MJ, Waltz JA, Kasanova Z, Herbener ES, Gold JM. Deficits in positive reinforcement learning and uncertainty-driven exploration are associated with distinct aspects of negative symptoms in schizophrenia. Biol Psychiatry. 2011;69:424–31.

Waltz J, Demro C, Schiffman J, Thompson E, Kline E, Reeves G, et al. Reinforcement learning performance and risk for psychosis in youth. J Nerv Ment Dis. 2015;203:919–26.

Davis K, Kahn R, Ko G, Davidson M. Dopamine in schizophrenia: a review and reconceptualization. Am J Psychiatry. 1991;148:1474–86.

Howes OD, McCutcheon R, Owen MJ, Murray RM. The role of genes, stress, and dopamine in the development of schizophrenia. Biol Psychiatry. 2017;81:9–20.

Howes OD, Kapur S. The dopamine hypothesis of schizophrenia: version iii—the final common pathway. Schizophr Bull. 2009;35:549–62.

Meltzer HY, Stahl SM. The dopamine hypothesis of schizophrenia: a review*. Schizophr Bull. 1976;2:19–76.

Owen F, Crow TJ, Poulter M, Cross AJ, Longden A, Riley GJ. Increased dopamine-receptor sensitivity in schizophrenia. Lancet. 1978;312:223–6.

Snyder S. The dopamine hypothesis of schizophrenia: focus on the dopamine receptor. Am J Psychiatry. 1976;133:197–202.

Horacek J, Bubenikova-Valesova V, Kopecek M, Palenicek T, Dockery C, Mohr P, et al. Mechanism of action of atypical antipsychotic drugs and the neurobiology of schizophrenia. CNS Drugs. 2006;20:389–409.

Bjornestad J, Lavik KO, Davidson L, Hjeltnes A, Moltu C, Veseth M. Antipsychotic treatment – a systematic literature review and meta-analysis of qualitative studies. J Ment Health. 2020;29:513–23.

Gray J, Roth B. Molecular targets for treating cognitive dysfunction in schizophrenia. Schizophr Bull. 2007;33:1100–19.

Corlett PR, Fletcher PC. Delusions and prediction error: clarifying the roles of behavioural and brain responses. Cogn Neuropsychiatry. 2015;20:95–105.

Laruelle M, Kegeles LS, Abi-Dargham A. Glutamate, dopamine, and schizophrenia: from pathophysiology to treatment. Ann NY Acad Sci. 2003;1003:138–58.

Maia TV, Frank MJ. An integrative perspective on the role of dopamine in schizophrenia. Biol Psychiatry. 2017;81:52–66.

Powers AR, Mathys C, Corlett PR. Pavlovian conditioning–induced hallucinations result from overweighting of perceptual priors. Science. 2017;357:596–600.

Waltz JA, Frank MJ, Robinson BM, Gold JM. Selective reinforcement learning deficits in schizophrenia support predictions from computational models of striatal-cortical dysfunction. Biol Psychiatry. 2007;62:756–64.

Waltz JA, Schweitzer JB, Gold JM, Kurup PK, Ross TJ, Salmeron BJ, et al. Patients with schizophrenia have a reduced neural response to both unpredictable and predictable primary reinforcers. Neuropsychopharmacol Publ Am Coll Neuropsychopharmacol. 2009;34:1567–77.

McCutcheon RA, Abi-Dargham A, Howes OD. Schizophrenia, dopamine and the striatum: from biology to symptoms. Trends Neurosci. 2019;42:205–20.

Chang CY, Gardner M, Di Tillio MG, Schoenbaum G. Optogenetic blockade of dopamine transients prevents learning induced by changes in reward features. Curr Biol. 2017;27:3480–3486.e3.

Howard JD, Kahnt T. Identity prediction errors in the human midbrain update reward-identity expectations in the orbitofrontal cortex. Nat Commun. 2018;9:1–11.

Keiflin R, Pribut HJ, Shah NB, Janak PH. Ventral tegmental dopamine neurons participate in reward identity predictions. Curr Biol. 2019;29:93–103.e3.

Langdon AJ, Sharpe MJ, Schoenbaum G, Niv Y. Model-based predictions for dopamine. Curr Opin Neurobiol. 2018;49:1–7.

Sadacca BF, Jones JL, Schoenbaum G. Midbrain dopamine neurons compute inferred and cached value prediction errors in a common framework. ELife. 2016;5:e13665.

Sharp ME, Foerde K, Daw ND, Shohamy D. Dopamine selectively remediates ‘model-based’ reward learning: a computational approach. Brain J Neurol. 2016;139:355–64.

Sharpe MJ, Batchelor HM, Schoenbaum G. Preconditioned cues have no value. ELife. 2017;6:e28362.

Sharpe MJ, Chang CY, Liu MA, Batchelor HM, Mueller LE, Jones JL, et al. Dopamine transients are sufficient and necessary for acquisition of model-based associations. Nat Neurosci. 2017;20:735–42.

Sharpe MJ, Batchelor HM, Mueller LE, Yun Chang C, Maes EJP, Niv Y, et al. Dopamine transients do not act as model-free prediction errors during associative learning. Nat Commun. 2020;11:1–10.

Sharpe MJ, Schoenbaum G. Evaluation of the hypothesis that phasic dopamine constitutes a cached-value signal. Neurobiol Learn Mem. 2018;153:131–6.

Stalnaker TA, Howard JD, Takahashi YK, Gershman SJ, Kahnt T, Schoenbaum G. Dopamine neuron ensembles signal the content of sensory prediction errors. ELife. 2019;8:e49315.

Takahashi YK, Batchelor HM, Liu B, Khanna A, Morales M, Schoenbaum G. Dopamine neurons respond to errors in the prediction of sensory features of expected rewards. Neuron. 2017;95:1395–1405.e3.

Saunders BT, Richard JM, Margolis EB, Janak PH. Dopamine neurons create Pavlovian conditioned stimuli with circuit-defined motivational properties. Nat Neurosci. 2018;21:1072–83.

Schultz W. Dopamine reward prediction-error signalling: a two-component response. Nat Rev Neurosci. 2016;17:183–95.

Schultz W, Dayan P, Montague PR. A neural substrate of prediction and reward. Science. 1997;275:1593–9.

Tian J, Huang R, Cohen JY, Osakada F, Kobak D, Machens CK, et al. Distributed and mixed information in monosynaptic inputs to dopamine neurons. Neuron. 2016;91:1374–89.

Watabe-Uchida M, Zhu L, Ogawa SK, Vamanrao A, Uchida N. Whole-brain mapping of direct inputs to midbrain dopamine neurons. Neuron. 2012;74:858–73.

Robison AJ, Thakkar KN, Diwadkar VA. Cognition and reward circuits in schizophrenia: synergistic, not separate. Biol Psychiatry. 2020;87:204–14.

Gray JA. Integrating schizophrenia. Schizophr Bull. 1998;24:249–66.

Weiner I. The ‘two-headed’ latent inhibition model of schizophrenia: modeling positive and negative symptoms and their treatment. Psychopharmacology. 2003;169:257–97.

Killcross S, Balleine B. Role of primary motivation in stimulus preexposure effects. J Exp Psychol Anim Behav Process. 1996;22:32–42.

Rescorla R, Wagner A. A theory of Pavlovian conditioning: variations in the effectiveness of reinforcement and non-reinforcement. Cl Cond II Curr Res Theory. 1972;2:64–99.

Mackintosh NJ. A theory of attention: variations in the associability of stimuli with reinforcement. Psychol Rev. 1975;82:276–98.

Sharpe MJ, Killcross S. The prelimbic cortex contributes to the down-regulation of attention toward redundant cues. Cereb Cortex NY N. 2014;24:1066–74.

Sharpe MJ, Killcross S. The prelimbic cortex directs attention toward predictive cues during fear learning. Learn Mem. 2015;22:289–93.

Killcross AS, Dickinson A, Robbins TW. Effects of the neuroleptic α-flupenthixol on latent inhibition in aversively- and appetitively-motivated paradigms: evidence for dopamine-reinforcer interactions. Psychopharmacology. 1994;115:196–205.

Lubow RE. Construct validity of the animal latent inhibition model of selective attention deficits in schizophrenia. Schizophr Bull. 2005;31:139–53.

Lubow RE, Gewirtz JC. Latent inhibition in humans: data, theory, and implications for schizophrenia. Psychol Bull. 1995;117:87–103.

Lubow RE, Moore AU. Latent inhibition: the effect of nonreinforced pre-exposure to the conditional stimulus. J Comp Physiol Psychol. 1959;52:415–9.

Baruch I, Hemsley DR, Gray JA. Latent inhibition and “psychotic proneness” in normal subjects. Personal Individ Differ. 1988;9:777–83.

Gal G, Barnea Y, Biran L, Mendlovic S, Gedi T, Halavy M, et al. Enhancement of latent inhibition in patients with chronic schizophrenia. Behav Brain Res. 2009;197:1–8.

Kraus M, Rapisarda A, Lam M, Thong JYJ, Lee J, Subramaniam M, et al. Disrupted latent inhibition in individuals at ultra high-risk for developing psychosis. Schizophr Res Cogn. 2016;6:1–8.

Lipp OV, Siddle DAT, Arnold SL. Psychosis proneness in a non-clinical sample II: a multi-experimental study of “Attentional malfunctioning”. Personal Individ Differ. 1994;17:405–24.

Lipp OV, Vaitl D. Latent inhibition in human Pavlovian differential conditioning: effect of additional stimulation after preexposure and relation to schizotypal traits. Personal Individ Differ. 1992;13:1003–12.

Lubow RE, Ingberg-Sachs Y, Zalstein-Orda N, Gewirtz JC. Latent inhibition in low and high “psychotic-prone” normal subjects. Personal Individ Differ. 1992;13:563–72.

Vaitl D, Lipp O, Bauer U, Schüler G, Stark R, Zimmermann M, et al. Latent inhibition and schizophrenia: Pavlovian conditioning of autonomic responses. Schizophr Res. 2002;55:147–58.

Williams JH, Wellman NA, Geaney DP, Cowen PJ, Feldon J, Rawlins JNP. Antipsychotic drug effects in a model of schizophrenic attentional disorder: a randomized controlled trial of the effects of haloperidol on latent inhibition in healthy people. Biol Psychiatry. 1996;40:1135–43.

Lipp OV, Siddle DA, Vaitl D. Latent inhibition in humans: single-cue conditioning revisited. J Exp Psychol Anim Behav Process. 1992;18:115–25.

Leumann L, Feldon J, Vollenweider FX, Ludewig K. Effects of typical and atypical antipsychotics on prepulse inhibition and latent inhibition in chronic schizophrenia. Biol Psychiatry. 2002;52:729–39.

Kamin L. Predictability, surprise, attention, and conditioning. In B. A. Campbell, & R. M. Church (Eds), Punishment Aversive Behavior. (pp. 279–296). New York: Appleton-Century-Crofts, 1967.

McNally GP, Johansen JP, Blair HT. Placing prediction into the fear circuit. Trends Neurosci. 2011;34:283–92.

Jones SH, Hemsley D, Ball S, Serra A. Disruption of the Kamin blocking effect in schizophrenia and in normal subjects following amphetamine. Behav Brain Res. 1997;88:103–14.

Jones SH, Gray JA, Hemsley DR. Loss of the Kamin blocking effect in acute but not chronic schizophrenics. Biol Psychiatry. 1992;32:739–55.

Serra AM, Jones SH, Toone B, Gray JA. Impaired associative learning in chronic schizophrenics and their first-degree relatives: a study of latent inhibition and the Kamin blocking effect. Schizophr Res. 2001;48:273–89.

Bender S, Müller B, Oades RD, Sartory G. Conditioned blocking and schizophrenia: a replication and study of the role of symptoms, age, onset-age of psychosis and illness-duration. Schizophr Res. 2001;49:157–70.

Oades RD, Zimmermann B, Eggers C. Conditioned Blocking in patients with paranoid, non-paranoid psychosis or obsessive compulsive disorder: associations with symptoms, personality and monoamine metabolism. J Psychiatr Res. 1996;30:369–90.

Oades RD, Rao ML, Bender S, Sartory G, Müller BW. Neuropsychological and conditioned blocking performance in patients with schizophrenia: assessment of the contribution of neuroleptic dose, serum levels and dopamine D2-receptor occupancy. Behav Pharm. 2000;11:317–30.

Moran PM, Al-Uzri MM, Watson J, Reveley MA. Reduced Kamin blocking in non paranoid schizophrenia: associations with schizotypy. J Psychiatr Res. 2003;37:155–63.

Haselgrove M, Le Pelley ME, Singh NK, Teow HQ, Morris RW, Green MJ, et al. Disrupted attentional learning in high schizotypy: evidence of aberrant salience. Br J Psychol. 2016;107:601–24.

Morris R, Griffiths O, Le Pelley ME, Weickert TW. Attention to irrelevant cues is related to positive symptoms in schizophrenia. Schizophr Bull. 2013;39:575–82.

Murray GK, Corlett PR, Clark L, Pessiglione M, Blackwell AD, Honey G, et al. Substantia nigra/ventral tegmental reward prediction error disruption in psychosis. Mol Psychiatry. 2008;13:267–76.

Corlett PR, Murray GK, Honey GD, Aitken MRF, Shanks DR, Robbins TW, et al. Disrupted prediction-error signal in psychosis: evidence for an associative account of delusions. Brain. 2007;130:2387–2400.

Corlett PR, Krystal JH, Taylor JR, Fletcher PC. Why do delusions persist? Front Hum Neurosci. 2009;3:12.

Barch DM, Carter CS, Gold JM, Johnson SL, Kring AM, MacDonald AW, et al. Explicit and implicit reinforcement learning across the psychosis spectrum. J Abnorm Psychol. 2017;126:694–711.

Collins AGE, Brown JK, Gold JM, Waltz JA, Frank MJ. Working memory contributions to reinforcement learning impairments in schizophrenia. J Neurosci. 2014;34:13747–56.

Elliott R, McKenna PJ, Robbins TW, Sahakian BJ. Neuropsychological evidence for frontostriatal dysfunction in schizophrenia. Psychol Med. 1995;25:619–30.

Heerey EA, Bell-Warren KR, Gold JM. Decision-making impairments in the context of intact reward sensitivity in schizophrenia. Biol Psychiatry. 2008;64:62–9.

Hutton SB, Puri BK, Duncan L-J, Robbins TW, Barnes TRE, Joyce EM. Executive function in first-episode schizophrenia. Psychol Med. 1998;28:463–73.

Jazbec S, Pantelis C, Robbins T, Weickert T, Weinberger DR, Goldberg TE. Intra-dimensional/extra-dimensional set-shifting performance in schizophrenia: Impact of distractors. Schizophr Res. 2007;89:339–49.

Joyce E, Hutton S, Mutsatsa S, Gibbins H, Webb E, Paul S, et al. Executive dysfunction in first-episode schizophrenia and relationship to duration of untreated psychosis: The West London Study. Br J Psychiatry. 2002;181:s38–s44.

Somlai Z, Moustafa AA, Kéri S, Myers CE, Gluck MA. General functioning predicts reward and punishment learning in schizophrenia. Schizophr Res. 2011;127:131–6.

Turner DC, Clark L, Pomarol-Clotet E, McKenna P, Robbins TW, Sahakian BJ. Modafinil improves cognition and attentional set shifting in patients with chronic schizophrenia. Neuropsychopharmacology. 2004;29:1363–73.

Tyson PJ, Laws KR, Roberts KH, Mortimer AM. Stability of set-shifting and planning abilities in patients with schizophrenia. Psychiatry Res. 2004;129:229–39.

Waltz JA, Gold JM. Probabilistic reversal learning impairments in schizophrenia. Schizophr Res. 2007;93:296–303.

Weiler JA, Bellebaum C, Brüne M, Juckel G, Daum I. Impairment of probabilistic reward-based learning in schizophrenia. Neuropsychology. 2009;23:571–80.

Cicero DC, Martin EA, Becker TM, Kerns JG. Reinforcement learning deficits in people with schizophrenia persist after extended trials. Psychiatry Res. 2014;220:760–4.

Collins AG, Albrecht MA, Waltz JA, Gold JM, Frank MJ. Interactions among working memory, reinforcement learning, and effort in value-based choice: a new paradigm and selective deficits in schizophrenia. Biol Psychiatry. 2017;82:431–9.

Koch K, Schachtzabel C, Wagner G, Schikora J, Schultz C, Reichenbach JR, et al. Altered activation in association with reward-related trial-and-error learning in patients with schizophrenia. NeuroImage. 2010;50:223–32.

Waltz JA, Frank MJ, Wiecki TV, Gold JM. Altered probabilistic learning and response biases in schizophrenia: Behavioral evidence and neurocomputational modeling. Neuropsychology. 2011;25:86–97.

Frank MJ, Seeberger LC, O’Reilly RC. By carrot or by stick: cognitive reinforcement learning in Parkinsonism. Science. 2004;306:1940–3.

Barch DM, Dowd EC. Goal representations and motivational drive in schizophrenia: the role of prefrontal–striatal interactions. Schizophr Bull. 2010;36:919–34.

Griffiths KR, Morris RW, Balleine BW. Translational studies of goal-directed action as a framework for classifying deficits across psychiatric disorders. Front Syst Neurosci. 2014;8:101.

Fusar-Poli P, Meyer-Lindenberg A. Striatal presynaptic dopamine in schizophrenia, part II: meta-analysis of [18F/11C]-DOPA PET studies. Schizophr Bull. 2013;39:33–42.

Sarpal DK, Argyelan M, Robinson DG, Szeszko PR, Karlsgodt KH, John M, et al. Baseline striatal functional connectivity as a predictor of response to antipsychotic drug treatment. Am J Psychiatry. 2016;173:69–77.

Slifstein M, van de Giessen E, Van Snellenberg J, Thompson JL, Narendran R, Gil R, et al. Deficits in prefrontal cortical and extrastriatal dopamine release in schizophrenia: a positron emission tomographic functional magnetic resonance imaging study. JAMA Psychiatry. 2015;72:316.

Strauss GP, Waltz JA, Gold JM. A review of reward processing and motivational impairment in schizophrenia. Schizophr Bull. 2014;40:S107–S116.

Waltz JA, Kasanova Z, Ross TJ, Salmeron BJ, McMahon RP, Gold JM, et al. The roles of reward, default, and executive control networks in set-shifting impairments in schizophrenia. PLOS ONE. 2013;8:e57257.

Dowd EC, Frank MJ, Collins A, Gold JM, Barch DM. Probabilistic reinforcement learning in patients with schizophrenia: relationships to anhedonia and avolition. Biol Psychiatry Cogn Neurosci Neuroimaging. 2016;1:460–73.

Katthagen T, Kaminski J, Heinz A, Buchert R, Schlagenhauf F. Striatal dopamine and reward prediction error signaling in unmedicated schizophrenia patients. Schizophr Bull. 2020. https://doi.org/10.1093/schbul/sbaa055.

Moran PM, Rouse JL, Cross B, Corcoran R, Schürmann M. Kamin blocking is associated with reduced medial-frontal gyrus activation: implications for prediction error abnormality in schizophrenia. PLOS ONE. 2012;7:e43905.

Morris RW, Quail S, Griffiths KR, Green MJ, Balleine BW. Corticostriatal control of goal-directed action is impaired in schizophrenia. Biol Psychiatry. 2015;77:187–95.

Morris RW, Vercammen A, Lenroot R, Moore L, Langton JM, Short B, et al. Disambiguating ventral striatum fMRI-related BOLD signal during reward prediction in schizophrenia. Mol Psychiatry. 2012;17:280–9.

Mirenowicz J, Schultz W. Importance of unpredictability for reward responses in primate dopamine neurons. J Neurophysiol. 1994;72:1024–7.

Schultz W. Dopamine neurons and their role in reward mechanisms. Curr Opin Neurobiol. 1997;7:191–7.

Schultz W. Predictive reward signal of dopamine neurons. J Neurophysiol. 1998;80:1–27.

Lak A, Stauffer WR, Schultz W. Dopamine neurons learn relative chosen value from probabilistic rewards. eLife. 2016;5:e18044.

Steinberg EE, Keiflin R, Boivin JR, Witten IB, Deisseroth K, Janak PH. A causal link between prediction errors, dopamine neurons and learning. Nat Neurosci. 2013;16:966–73.

Hamid AA, Frank MJ, Moore CI. Wave-like dopamine dynamics as a mechanism for spatiotemporal credit assignment. Cell. 2021;184:2733–49.e16.

Engelhard B, Finkelstein J, Cox J, Fleming W, Jang HJ, Ornelas S, et al. Specialized coding of sensory, motor and cognitive variables in VTA dopamine neurons. Nature. 2019;570:509–13.

Menegas W, Babayan BM, Uchida N, Watabe-Uchida M. Opposite initialization to novel cues in dopamine signaling in ventral and posterior striatum in mice. eLife. 2017;6:e21886.

Brown HD, McCutcheon JE, Cone JJ, Ragozzino ME, Roitman MF. Primary food reward and reward-predictive stimuli evoke different patterns of phasic dopamine signaling throughout the striatum. Eur J Neurosci. 2011;34:1997–2006.

Tsutsui-Kimura I, Matsumoto H, Akiti K, Yamada MM, Uchida N, Watabe-Uchida M. Distinct temporal difference error signals in dopamine axons in three regions of the striatum in a decision-making task. eLife. 2020;9:e62390.

Jensen J, Willeit M, Zipursky RB, Savina I, Smith AJ, Menon M, et al. The formation of abnormal associations in schizophrenia: neural and behavioral evidence. Neuropsychopharmacology. 2008;33:473–9.

Kapur S. Psychosis as a state of aberrant salience: a framework linking biology, phenomenology, and pharmacology in schizophrenia. Am J Psychiatry. 2003;160:13–23.

Shaner A. Delusions, superstitious conditioning and chaotic dopamine neurodynamics. Med Hypotheses. 1999;52:119–23.

Hart EE, Sharpe MJ, Gardner MP, Schoenbaum G. Responding to preconditioned cues is devaluation sensitive and requires orbitofrontal cortex during cue-cue learning. eLife. 2020;9:e59998.

Sharpe MJ, Batchelor HM, Mueller LE, Gardner MPH, Schoenbaum G. Past experience shapes the neural circuits recruited for future learning. Nat Neurosci. 2021;24:391–400.

Balleine BW, O’Doherty JP. Human and rodent homologies in action control: corticostriatal determinants of goal-directed and habitual action. Neuropsychopharmacology. 2010;35:48–69.

Öngür D, Price JL. The organization of networks within the orbital and medial prefrontal cortex of rats, monkeys and humans. Cereb Cortex. 2000;10:206–19.

Sharpe MJ, Stalnaker T, Schuck NW, Killcross S, Schoenbaum G, Niv Y. An integrated model of action selection: distinct modes of cortical control of striatal decision making. Annu Rev Psychol. 2019;70:53–76.

Sharpe MJ, Killcross S. Modulation of attention and action in the medial prefrontal cortex of rats. Psychol Rev. 2018;125:822–43.

Uylings HBM, Groenewegen HJ, Kolb B. Do rats have a prefrontal cortex? Behav Brain Res. 2003;146:3–17.

Delatour B, Gisquet-Verrier P. Functional role of rat prelimbic-infralimbic cortices in spatial memory: evidence for their involvement in attention and behavioural flexibility. Behav Brain Res. 2000;109:113–28.

Floresco SB, Magyar O, Ghods-Sharifi S, Vexelman C, Tse MTL. Multiple dopamine receptor subtypes in the medial prefrontal cortex of the rat regulate set-shifting. Neuropsychopharmacology. 2006;31:297–309.

Floresco SB, Block AE, Tse MTL. Inactivation of the medial prefrontal cortex of the rat impairs strategy set-shifting, but not reversal learning, using a novel, automated procedure. Behav Brain Res. 2008;190:85–96.

Ragozzino ME, Detrick S, Kesner RP. The effects of prelimbic and infralimbic lesions on working memory for visual objects in rats. Neurobiol Learn Mem. 2002;77:29–43.

Furlong TM, Cole S, Hamlin AS, McNally GP. The role of prefrontal cortex in predictive fear learning. Behav Neurosci. 2010;124:574–86.

Yau JO-Y, McNally GP. Pharmacogenetic excitation of dorsomedial prefrontal cortex restores fear prediction error. J Neurosci. 2015;35:74–83.

Ceaser AE, Goldberg TE, Egan MF, McMahon RP, Weinberger DR, Gold JM. Set-shifting ability and schizophrenia: a marker of clinical illness or an intermediate phenotype? Biol Psychiatry. 2008;64:782–8.

Le Pelley ME, Schmidt-Hansen M, Harris NJ, Lunter CM, Morris CS. Disentangling the attentional deficit in schizophrenia: pointers from schizotypy. Psychiatry Res. 2010;176:143–9.

Weinberger DR. Physiologic dysfunction of dorsolateral prefrontal cortex in schizophrenia: I. Regional cerebral blood flow evidence. Arch Gen Psychiatry. 1986;43:114.

Berman KF, Zec RF, Weinberger DR. Physiologic dysfunction of dorsolateral prefrontal cortex in schizophrenia. II. Role of neuroleptic treatment, attention, and mental effort. Arch Gen Psychiatry. 1986;43:126–35.

Berman KF, Illowsky BP, Weinberger DR. Physiological dysfunction of dorsolateral prefrontal cortex in schizophrenia: IV. Further evidence for regional and behavioral specificity. Arch Gen Psychiatry. 1988;45:616–22.

Keedy SK, Reilly JL, Bishop JR, Weiden PJ, Sweeney JA. Impact of antipsychotic treatment on attention and motor learning systems in first-episode schizophrenia. Schizophr Bull. 2015;41:355–65.

Takahashi YK, Roesch MR, Stalnaker TA, Haney RZ, Calu DJ, Taylor AR, et al. The orbitofrontal cortex and ventral tegmental area are necessary for learning from unexpected outcomes. Neuron. 2009;62:269–80.

Howard JD, Gottfried JA, Tobler PN, Kahnt T. Identity-specific coding of future rewards in the human orbitofrontal cortex. Proc Natl Acad Sci USA. 2015;112:5195–200.

Lichtenberg NT, Pennington ZT, Holley SM, Greenfield VY, Cepeda C, Levine MS, et al. Basolateral amygdala to orbitofrontal cortex projections enable cue-triggered reward expectations. J Neurosci. 2017;37:8374–84.

Murray EA, O’Doherty JP, Schoenbaum G. What we know and do not know about the functions of the orbitofrontal cortex after 20 years of cross-species studies. J Neurosci. 2007;27:8166–9.

Panayi MC, Killcross S. Functional heterogeneity within the rodent lateral orbitofrontal cortex dissociates outcome devaluation and reversal learning deficits. eLife. 2018;7:e37357.

Pickens CL, Saddoris MP, Setlow B, Gallagher M, Holland PC, Schoenbaum G. Different roles for orbitofrontal cortex and basolateral amygdala in a reinforcer devaluation task. J Neurosci. 2003;23:11078–84.

Rushworth MFS, Behrens TEJ, Rudebeck PH, Walton ME. Contrasting roles for cingulate and orbitofrontal cortex in decisions and social behaviour. Trends Cogn Sci. 2007;11:168–76.

Schoenbaum G, Roesch MR, Stalnaker TA, Takahashi YK. A new perspective on the role of the orbitofrontal cortex in adaptive behaviour. Nat Rev Neurosci. 2009;10:885–92.

Schuck NW, Cai MB, Wilson RC, Niv Y. Human orbitofrontal cortex represents a cognitive map of state space. Neuron. 2016;91:1402–12.

Sharpe MJ, Wikenheiser AM, Niv Y, Schoenbaum G. The state of the orbitofrontal cortex. Neuron. 2015;88:1075–7.

Stalnaker TA, Cooch NK, Schoenbaum G. What the orbitofrontal cortex does not do. Nat Neurosci. 2015;18:620–7.

Wang F, Schoenbaum G, Kahnt T. Interactions between human orbitofrontal cortex and hippocampus support model-based inference. PLOS Biol. 2020;18:e3000578.

Wilson RC, Takahashi YK, Schoenbaum G, Niv Y. Orbitofrontal cortex as a cognitive map of task space. Neuron. 2014;81:267–79.

Reynolds SM, Zahm DS. Specificity in the projections of prefrontal and insular cortex to ventral striatopallidum and the extended amygdala. J Neurosci. 2005;25:11757–67.

Izquierdo A. Functional heterogeneity within rat orbitofrontal cortex in reward learning and decision making. J Neurosci. 2017;37:10529–40.

Schiller D, Weiner I. Lesions to the basolateral amygdala and the orbitofrontal cortex but not to the medial prefrontal cortex produce an abnormally persistent latent inhibition in rats. Neuroscience. 2004;128:15–25.

Schiller D, Zuckerman L, Weiner I. Abnormally persistent latent inhibition induced by lesions to the nucleus accumbens core, basolateral amygdala and orbitofrontal cortex is reversed by clozapine but not by haloperidol. J Psychiatr Res. 2006;40:167–77.

Hoptman MJ, Volavka J, Weiss EM, Czobor P, Szeszko PR, Gerig G, et al. Quantitative MRI measures of orbitofrontal cortex in patients with chronic schizophrenia or schizoaffective disorder. Psychiatry Res. 2005;140:133–45.

Kanahara N, Sekine Y, Haraguchi T, Uchida Y, Hashimoto K, Shimizu E, et al. Orbitofrontal cortex abnormality and deficit schizophrenia. Schizophr Res. 2013;143:246–52.

Schmack K, Gómez-Carrillo de Castro A, Rothkirch M, Sekutowicz M, Rössler H, Haynes J-D, et al. Delusions and the role of beliefs in perceptual inference. J Neurosci. 2013;33:13701–12.

Schmack K, Rothkirch M, Priller J, Sterzer P. Enhanced predictive signalling in schizophrenia. Hum Brain Mapp. 2017;38:1767–79.

Barbano MF, Wang H-L, Morales M, Wise RA. Feeding and reward are differentially induced by activating GABAergic lateral hypothalamic projections to VTA. J Neurosci. 2016;36:2975–85.

Jennings JH, Rizzi G, Stamatakis AM, Ung RL, Stuber GD. The inhibitory circuit architecture of the lateral hypothalamus orchestrates feeding. Science. 2013;341:1517–21.

Margules DL, Olds J. Identical ‘feeding’ and ‘rewarding’ systems in the lateral hypothalamus of rats. Science. 1962;135:374–5.

Nieh EH, Vander Weele CM, Matthews GA, Presbrey KN, Wichmann R, Leppla CA, et al. Inhibitory input from the lateral hypothalamus to the ventral tegmental area disinhibits dopamine neurons and promotes behavioral activation. Neuron. 2016;90:1286–98.

Olds J, Milner P. Positive reinforcement produced by electrical stimulation of septal area and other regions of rat brain. J Comp Physiol Psychol. 1954;47:419–27.

Petrovich GD. Lateral hypothalamus as a motivation-cognition interface in the control of feeding behavior. Front Syst Neurosci. 2018;12:14.