Abstract

Assessment of the upper limb is critical to guiding the rehabilitation cycle. Drawbacks of observation-based assessment include subjectivity and coarse resolution of ordinal scales. Kinematic assessment gives rise to objective quantitative metrics, but uptake is encumbered by costly and impractical setups. Our objective was to investigate feasibility and accuracy of computer vision (CV) for acquiring kinematic metrics of the drinking task, which are recommended in stroke rehabilitation research. We implemented CV for upper limb kinematic assessment using modest cameras and an open-source machine learning solution. To explore feasibility, 10 neurotypical participants were recruited for repeated kinematic measures during the drinking task. To investigate accuracy, a simultaneous marker-based motion capture system was used, and error was quantified for the following kinematic metrics: Number of Movement Units (NMU), Trunk Displacement (TD), and Movement Time (MT). Across all participant trials, kinematic metrics of the drinking task were successfully acquired using CV. Compared to marker-based motion capture, no significant difference was observed for group mean values of kinematic metrics. Mean errors for NMU, TD, and MT were −0.12 units, 3.4 mm, and 0.15 s, respectively. Bland–Altman analysis revealed no significant bias. Kinematic metrics of the drinking task can be measured using CV, and preliminary findings support accuracy. Further study in neurodivergent populations is needed to determine validity of CV for kinematic assessment of the post-stroke upper limb.

Introduction

The upper limb serves many essential roles during daily life—eating lunch, opening a door, and embracing another. Each year 800,000 people experience a new stroke in the US alone, which affects upper limb ability in the majority of cases1,2.

Rehabilitation has been described as a cycle predicated on assessment3. Assessment of the post-stroke upper limb has cultivated the development of many validated methods, which span across the important domains of the International Classification of Function4. According to a centralized database, over 20 methods are documented for assessment of the post-stroke upper limb and a majority involve observation-based assessment5. While human observation is relatively simple, inherent drawbacks of this approach include subjectivity due to human evaluators and poor resolution for subtle change due to coarse ordinal scales6. While a complement of instrument-based methods for assessment is present in research literature, clinical practice continues to rely heavily on observation-based assessments.

Kinematic assessment involves quantitative study of the body, limbs and joints during movement. Over the last two decades, researchers have adapted advancing technologies to study upper limb movement7. With the potential for increased objectivity, these advances offer exciting complementary tools to existing assessment methods. Perhaps unsurprisingly, the rehabilitation research community is now leveraging kinematic assessment to better understand recovery trends for the upper limb among stroke survivors. This has led to well-documented protocols, established normative data, and clinically validated kinematic metrics8,9.

The application of kinematic assessment in research is benefiting our understanding of post-stroke rehabilitation. Compensatory movement and restitution of pre-stroke movement have been recognized as important and unique phenomena—the former illustrated by a stroke survivor’s truncal lean during reaching tasks to offset reduced arm extension at elbow10. While this behavior may be observable, it is not well-captured via observation-based scales, which typically employ ordinal scales and often emphasize task completion over task quality. However, using kinematic measures, behaviors can be quantified on a granular scale to determine the status of an individual’s motor recovery along a continuous spectrum between compensation and restitution11,12,13.

Advances in kinematic assessment are gaining momentum and recently culminated in consensus statements recommending kinematic metrics for standardized use in stroke research14. However, the authors concede that several barriers exist to these recommendations. Namely, kinematic assessment relies traditionally on marker-based optoelectronic systems that are considered time-consuming and impractical for broad use15.

To potentially reduce these barriers, computer vision (CV) has been considered as a means towards markerless motion capture16,17,18,19,20. CV is a subfield of artificial intelligence that seeks to extract useful information from digital images21. For markerless motion capture applications, the fundamental ingredients include ordinary cameras (e.g. RGB cameras in smartphones) and human pose estimation, a machine learning solution capable of estimating anatomic keypoints of the human body from a sequence of two-dimensional images (i.e. video). A variety of machine learning solutions are now available for human pose estimation including OpenPose22, MediaPipe23, PoseNet24, and MoveNet25. When using two or more cameras, the two-dimensional human pose estimates can then be lifted to three-dimensional space, which completes a CV pipeline capable of capturing kinematics of the human body and limbs without need for markers.

Numerous studies support the potential application of CV as an assistive tool in medicine26. For example, CV has been used to estimate risk of musculoskeletal disorders by assessing the ergonomics of posture27. To support in-home rehabilitation, another study introduced a telehealth system based on CV28. To support applications in human kinematic assessment, several studies have explored the concurrent validity of CV systems versus traditional marker-based motion capture systems for locating joint positions, which have revealed errors on the scale of 20–30 mm16,17. In recent works, locomotion studies with healthy subjects have demonstrated the potential of CV for predicting important kinematic-based clinical metrics such as walking speed and gait deviation index29.

With regards to stroke rehabilitation, prior studies have applied CV to evaluate upper limb movement. Many early implementations leveraged a popular off-the-shelf camera containing an integrated depth sensor (i.e. Microsoft Kinect RGB-D) along with its associated software (i.e. Microsoft Kinect Skeleton Tracking algorithm)30,31,32,33,34. While one of these studies considers using CV to achieve a well-recognized upper limb assessment in stroke rehabilitation research33, none of these studies consider CV to achieve the kinematic metrics recommended for standardized use in stroke rehabilitation research14.

In more recent implementations of CV, studies have utilized common hardware (e.g. handheld RGB cameras) for assessment during stroke rehabilitation. For example, by applying human pose estimation (i.e. AlphaPose) to extract movement data of the hands, body, and face from camera footage, investigators then used this data to train a deep neural network to estimate the possibility of stroke35. In another study, movements of the body were again extracted from RGB camera footage using a similar human pose estimation (i.e. MediaPipe), and the investigators trained a binary classification model to detect compensatory upper limb movements among stroke survivors36. In a similar study using the same human pose estimation and a single RGB camera, investigators created virtual alternatives for common tests of distal upper limb dexterity (e.g. Box and Block Test)37. In a recent feasibility study using two RGB cameras, a CV system was utilized for 3D motion tracking of fine hand motor skills38. This study provided initial evidence for accurate tracking in both the coronal and sagittal planes, and it demonstrated feasibility of object tracking during manipulation (e.g. moving small block with chopsticks).

Despite promising validation efforts, there remain limited studies to support use of CV in rehabilitation populations39. Our objective is to move forward validation efforts of CV for post-stroke upper limb assessment. Leveraging an open-source CV solution, common RGB cameras, and collaboration between medicine and engineering, we present a pilot study of a markerless motion capture system developed in-house for capturing kinematic metrics recommended for standardized assessment of the post-stroke upper limb14, and our primary goal is to investigate feasibility in a neurotypical population and obtain preliminary evidence on accuracy.

Methods

Each participant performed the activity five times during a single session located at a clinical research laboratory inside a freestanding inpatient rehabilitation facility. The study was advertised via flyers posted across campus of a nearby major academic institution. In total, 10 participants were recruited from a sample of convenience, and all participants completed the data collection. Inclusion criteria were age between 18 and 80 years old, self-assessed absence of neurologic and musculoskeletal conditions affecting the upper limb, and ability to perform a simple reaching activity (e.g. drinking water from a cup). Participant demographics are shown in Table 1. As a primary outcome, this pilot study examined feasibility of CV to obtain upper-limb kinematic metrics during the drinking task, which have been recommended for standardized use in post-stroke rehabilitation research14. As a secondary outcome, this pilot study sought to collect preliminary data about the accuracy of CV in this application as well as collect estimates on the intraclass correlation within repeated task performances by participants. The protocol for data collection was reviewed and approved by the University of Kentucky Institutional Review Board (IRB #63176), and informed written consent was obtained from all participants prior to data collection.

Drinking task kinematics

The drinking task activity has been previously protocolized and involves the following five phases of movement: reaching to grasp a cup of water, forward transport of cup to mouth, drinking, back transport of cup to table, and return to starting position11. The drinking task activity is depicted in Fig. 1. For the start position, participants were seated with arms at side, elbows bent and hands located in a pronated position such that the wrist crease coincided with the edge of the tabletop. Using a height-adjustable stool, the seat height was set such that elbows, hips and knees were flexed at approximately 90°. The cup was filled with 100 mL of water, and its starting location was midline in front of the participant in a 100 mm by 100 mm bounding box with centroid located 300 mm from the edge of the tabletop. Limited instruction was provided to participants so as to foster self-selected movements and reduce potential distraction to the participant’s usual behavior. Each participant was permitted up to two practice reps before the formal data acquisition. Based on motion capture data obtained during this drinking task, several kinematic metrics can be obtained. We focus primarily on three kinematic metrics: movement time (MT), number of movement units (NMU), and trunk displacement (TD). In prior work utilizing principal component analysis techniques, these kinematic metrics have been shown to substantially capture the variance in the drinking task activity, to have high discriminant ability, and to be valid compared to current clinical assessments for individuals with post-stroke upper limb impairment40,41.

Computer vision system

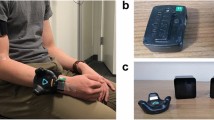

To capture kinematics of the upper limb, our study employed a CV system developed in-house. The system can be described according to three factors: cameras, human pose estimation, and three-dimensional key point determination (“lifting”). A dual-camera approach was employed in our system, and each camera relied on a digital image sensor similar to that found in common smartphones. Each camera (Blackfly S, FLIR Systems, Oregon, USA) was fitted with an adjustable lens (Fujinon Varifocal Lens, Fujifilm, Tokyo, Japan). Camera resolution was 1280 × 1024 and frame capture speed was 60 frames per second. Cameras were located in front of the subject and oriented with a downward oblique perspective so as to optimize visibility of joint locations throughout task. Distance between subject and cameras was about 1.2 m, and the cameras were spaced about 0.8 m apart from each other. Figure 2 shows the laboratory setup for simultaneous collection of upper limb kinematic data using a CV system and a marker-based motion capture system. As this simultaneous collection was crucial to our validation approach, subjects wore sleeveless shirts throughout the study to accommodate the marker-based motion capture system.

A calibration step was used to extract the extrinsic and intrinsic parameters of the camera—the former describes where the physical camera is in space and the latter describes how pixels of the digital camera image map to the real-world. Human pose estimation involves the identification of key points of the human body by applying advanced machine learning solutions to a two-dimensional digital image. Several of these machine learning solutions are now available in open-source format22,24,25. We have applied one of these to detect key upper limb landmark locations including bilateral shoulders, elbows, and wrists23. Lastly, to arrive at three-dimensional coordinates of key points, a lifting procedure is performed, which was achieved in our multi-camera setup using a direct linear transformation method42. Additional detailed explanation about our CV system is beyond the scope of the current manuscript, which aims to explore feasibility of this system for clinically relevant kinematic assessment.
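To make the lifting step more concrete, the following is a minimal sketch of linear triangulation in the spirit of the direct linear transformation, written in Python rather than the Matlab used in this study. It is not the implementation used in our system. The function names (`project`, `solve_linear`, `triangulate_point`) and the synthetic projection matrices are illustrative assumptions; in practice each 3×4 projection matrix would be assembled from the intrinsic and extrinsic parameters found during calibration. The homogeneous system is solved here in its inhomogeneous least-squares form.

```python
def project(P, X):
    """Project a 3D point X with a 3x4 projection matrix P to pixel (u, v)."""
    Xh = list(X) + [1.0]
    x = [sum(P[r][k] * Xh[k] for k in range(4)) for r in range(3)]
    return (x[0] / x[2], x[1] / x[2])


def solve_linear(M, b):
    """Solve M x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(M[i]) + [b[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = aug[i][n] - sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = s / aug[i][i]
    return x


def triangulate_point(projections, pixels):
    """Estimate a 3D key point from its 2D detections in two or more cameras.

    Each camera contributes two linear equations:
        (u * P[2] - P[0]) . [X, 1] = 0
        (v * P[2] - P[1]) . [X, 1] = 0
    The stacked system is solved for X by least squares (normal equations).
    """
    rows = []
    for P, (u, v) in zip(projections, pixels):
        rows.append([u * P[2][k] - P[0][k] for k in range(4)])
        rows.append([v * P[2][k] - P[1][k] for k in range(4)])
    M = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    b = [-sum(r[i] * r[3] for r in rows) for i in range(3)]
    return solve_linear(M, b)
```

With noisy key points from human pose estimation, the equations are no longer exactly consistent, and the least-squares solution returns the point that best satisfies all camera views in the algebraic sense.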

Marker-based motion capture system

To collect preliminary data on accuracy, kinematic assessment of the upper limb was captured using a conventional marker-based motion capture system (Qualisys AB, Gothenburg, Sweden), which was implemented simultaneously with the CV system. This marker-based system comprised five optoelectronic cameras with infrared sensors to track reflective markers applied to the participants using double-sided tape. The marker setup duplicated that described in prior literature, which was developed for clinical use and involves 10 reflective markers placed on landmarks of limbs and thorax as well as 2 reflective markers on the cup11. Landmarks included, from distal to proximal: 3rd metacarpophalangeal joint (bilateral), ulnar styloid (bilateral), lateral epicondyle (bilateral), midway of the acromion (bilateral), sternal notch, and midline between the eyes in line with supraorbital ridge.

Post processing

For both CV and marker-based motion capture systems, the raw data comprised a time series of three-dimensional data corresponding to the position of the participant’s body and limbs during the drinking task. The origin and three-dimensional coordinate axes were defined as shown in Fig. 2. For the marker-based motion capture system, this raw position data was post-processed in a Matlab environment (Matlab 2022b, Natick, Massachusetts, USA) using a 2nd order, zero-phase lag Butterworth low-pass filter with a 6 Hz cutoff frequency11,43. For the CV system, this raw position data was post-processed in a Matlab environment using a bespoke filter optimized for each kinematic metric. A variety of filters for biomechanical signal filtering have been previously described in literature including Butterworth, Kalman, Moving Average, and Savitzky–Golay44,45. For the NMU metric and MT metric, a Kalman filter was applied in accordance with published implementation steps46. Specifically, the state vector was composed of position and velocity for each axis (i.e., state_x = [p_x, v_x], where p_x and v_x represent the position and velocity values on the x-axis), the control vector was set as [1.5, 1.5], and both the process and the measurement noise were set to be zero-mean Gaussian with covariance of 1000^2*[1/60, (1/60)^3/2; (1/60)^3/2, (1/60)^2] and 60^2*[1,1; 1,1], respectively. For the TD metric, a Moving Average filter was applied to the raw position data with a 40-sample window size. Temporal synchronization of the systems was performed using movement initiation of the upper limb in the y-axis (parallel to tabletop edge) as the synchronizing event. To align reference frames of each system, a transformation was applied based on an optimized rotation matrix calculated per the Kabsch method47.
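For readers less familiar with Kalman filtering of position data, the following is a simplified Python sketch of a constant-velocity filter for a single axis, with a state of position and velocity as described above. It is an illustration only and differs from our Matlab implementation in several assumed respects: there is no control input, only position is treated as measured, the process noise uses the common discrete white-noise acceleration form, and the function name `kalman_smooth`, its parameters, and the initial velocity variance of 1.0 are hypothetical choices rather than the values reported here.

```python
def kalman_smooth(measurements, dt, accel_var, meas_var):
    """Filter a 1D position series with a constant-velocity Kalman filter.

    measurements : list of noisy positions sampled every dt seconds
    accel_var    : assumed variance of the unmodeled acceleration
    meas_var     : assumed variance of the position measurement noise
    Returns the filtered position at every sample.
    """
    # State [p, v], initialised from the first measurement at rest.
    p, v = measurements[0], 0.0
    # State covariance entries (2x2 matrix stored as four scalars).
    P00, P01, P10, P11 = meas_var, 0.0, 0.0, 1.0
    # Discrete white-noise acceleration process covariance.
    q00 = accel_var * dt ** 4 / 4.0
    q01 = accel_var * dt ** 3 / 2.0
    q11 = accel_var * dt ** 2

    filtered = [p]
    for z in measurements[1:]:
        # Predict: p <- p + dt * v, velocity unchanged; P <- F P F^T + Q.
        p = p + dt * v
        a00 = P00 + dt * (P10 + P01) + dt * dt * P11 + q00
        a01 = P01 + dt * P11 + q01
        a10 = P10 + dt * P11 + q01
        a11 = P11 + q11

        # Update with the position measurement (H = [1, 0]).
        innovation = z - p
        s = a00 + meas_var
        k0, k1 = a00 / s, a10 / s
        p += k0 * innovation
        v += k1 * innovation
        P00, P01 = (1.0 - k0) * a00, (1.0 - k0) * a01
        P10, P11 = a10 - k1 * a00, a11 - k1 * a01

        filtered.append(p)
    return filtered
```

A call such as `kalman_smooth(wrist_x, dt=1/60, accel_var=1.0, meas_var=1.0)` would filter one axis of a 60 frames-per-second recording, where `wrist_x` is a hypothetical list of positions. A larger `meas_var` relative to `accel_var` yields heavier smoothing, which is the trade-off that was tuned per metric in this study.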

Calculation of the drinking task kinematic metrics (MT, NMU, TD) was completed using Matlab routines custom built according to prior literature descriptions11. For the NMU metric, this measure of movement smoothness is based on the definition of a movement unit. For the drinking task activity, a movement unit is a local minimum in the hand velocity profile followed by a local maximum, which represents an instance of hand acceleration and deceleration. For the MT metric, this measure is based on detecting movement start and stop times, which have previously been defined as when hand velocity exceeds or falls below 2% of peak velocity, respectively. In CV, this detection of movement start and stop can be adversely impacted by signal artefact known as “jitter”48, and to reduce this artefact in data from our CV system, the velocity threshold for start/stop detection was increased from 2 to 5.5%. For the TD metric, this is based on the truncal lean a participant demonstrates while performing the drinking task, which is measurable based on tracking position of the participant’s chest.
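The metric definitions above can be illustrated with a short Python sketch. This is not the Matlab routine used in the study; the function names, the treatment of the first sample as an initial minimum, and the optional `min_rise` parameter are assumptions introduced for illustration. Trunk displacement is taken here as the maximum distance of the shoulder midpoint from its initial position, which is one plausible reading of the definition.

```python
import math


def speed_profile(positions, fs):
    """Tangential speed from a list of 3D positions sampled at fs Hz."""
    return [math.dist(positions[i + 1], positions[i]) * fs
            for i in range(len(positions) - 1)]


def movement_time(speed, fs, threshold_frac=0.02):
    """Time between the first and last samples above a fraction of peak speed.

    threshold_frac = 0.02 follows the conventional 2% rule; 0.055 would
    correspond to the raised threshold applied to the CV data.
    """
    threshold = threshold_frac * max(speed)
    above = [i for i, s in enumerate(speed) if s > threshold]
    return (above[-1] - above[0]) / fs


def count_movement_units(speed, min_rise=0.0):
    """Count local minima followed by local maxima in a speed profile.

    The first sample is treated as an initial minimum (assumption), and
    min_rise is a hypothetical amplitude gate to ignore tiny fluctuations.
    """
    units = 0
    last_min = speed[0]
    for i in range(1, len(speed) - 1):
        if speed[i] <= speed[i - 1] and speed[i] < speed[i + 1]:
            last_min = speed[i]                       # local minimum
        elif speed[i] > speed[i - 1] and speed[i] >= speed[i + 1]:
            if last_min is not None and speed[i] - last_min >= min_rise:
                units += 1                            # minimum then maximum
                last_min = None
    return units


def trunk_displacement(left_shoulder, right_shoulder):
    """Maximum distance of the shoulder midpoint from its starting position."""
    mid = [tuple((a + b) / 2.0 for a, b in zip(l, r))
           for l, r in zip(left_shoulder, right_shoulder)]
    return max(math.dist(m, mid[0]) for m in mid)
```

Because jitter adds small spurious peaks to the velocity profile, both the threshold fraction and any amplitude gate directly affect MT and NMU, which is why the filtering and threshold choices are reported alongside the metrics.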

To facilitate the comparative analysis, the kinematic metrics calculated for each system were based on anatomic consistency between surface markers and key points. For example, the NMU metric has been defined according to a hand velocity profile created using a surface marker adhered to the third metacarpophalangeal joint. As the CV system in this study did not identify finger key points, the NMU metric for the marker-based motion capture and CV system was calculated as a function of the ulnar styloid marker and wrist key point, respectively. Similarly, the MT metric was also based on use of the ulnar styloid marker and wrist key point. Lastly, as the CV system did not identify a sternal key point, a midpoint between shoulders was used to determine the TD metric for both systems.

Statistical analysis

To evaluate accuracy of the joint position measured by the CV system, the three-dimensional joint position data, as defined in the global coordinate space, was compared against that of the synchronized marker-based motion capture system using a root mean square error (RMSE). As the drinking task activity involved movement of the dominant right upper limb for all participants, the RMSE data was grouped according to the major key points of the right upper limb, e.g. shoulder, elbow, and wrist. To compare RMSE among these major key points, comparisons were calculated using a difference of least square means and p-value less than 0.05 was considered statistically significant.
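As a brief illustration of the joint position error, the sketch below computes an RMSE between two synchronized, aligned 3D trajectories in Python. The function name `rmse_3d` and the assumption that both trajectories are already resampled to the same frames are illustrative and not part of the original analysis code.

```python
import math


def rmse_3d(cv_positions, reference_positions):
    """Root mean square of the per-frame Euclidean distance between
    two equally long lists of 3D points (same units, same frame)."""
    if len(cv_positions) != len(reference_positions):
        raise ValueError("trajectories must have the same number of frames")
    squared = [math.dist(a, b) ** 2
               for a, b in zip(cv_positions, reference_positions)]
    return math.sqrt(sum(squared) / len(squared))
```

Applied per trial and per key point (shoulder, elbow, wrist), this yields the values that are then averaged across trials and participants.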

To evaluate accuracy of the kinematic metrics of the drinking task, the metrics obtained from the CV system were compared against those obtained from the marker-based motion capture system. The kinematic metrics between the two systems were modeled and tested using repeated measures analysis of variance using a compound symmetry correlation structure. In addition, as a measure of accuracy, the error for each trial was calculated as the CV system value minus the marker-based motion capture system value. For mean comparisons, p-values less than 0.05 were considered significant, and given the preliminary nature of this study, no correction for multiple comparisons was implemented.

To visually compare the CV system versus the marker-based motion capture system, Bland–Altman plots were constructed for each kinematic metric, which included a full complement of 95% confidence intervals with necessary correction methods due to multiple observations per individual49. In addition, to improve sample size estimation in future studies, the intraclass correlation coefficient was calculated from the repeated measures analyses for each kinematic metric.
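The quantities plotted in a Bland–Altman analysis can be sketched in a few lines of Python. The version below is deliberately the basic form: it treats every trial as an independent observation and does not apply the correction for multiple observations per individual that was used in this study, so its limits of agreement would generally be narrower. The function name `bland_altman` is an illustrative assumption.

```python
import statistics


def bland_altman(cv_values, reference_values):
    """Return (bias, lower limit, upper limit) for paired measurements.

    Differences are taken as CV minus reference; the limits of agreement
    are bias +/- 1.96 standard deviations of the differences.
    """
    diffs = [c - r for c, r in zip(cv_values, reference_values)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd
```

A bias whose confidence interval contains zero indicates no systematic offset between systems, while the width of the limits of agreement reflects random error.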

Results

Feasibility

A total of 10 participants were recruited and all participants successfully completed the study protocol. Each data collection session was completed during a morning or afternoon based on convenience to participants. The duration of each session was less than two hours, which included calibration of equipment by research personnel and performance of 5 trials of the drinking task activity by the participant. There were no adverse events during sessions. The raw data from the CV system and marker-based motion capture system was successfully post-processed for all trials across all participants. The desired kinematic metrics of the drinking task activity were achieved from each system, and an illustration of these metrics is shown for a single participant trial in Fig. 3.

Data acquisition. For each participant, time-series of 3D joint position data during the drinking task was acquired using both the computer vision system (CV) and a gold-standard marker-based motion capture system (MB-MoCap) (panel A). By post-processing this joint position data, relevant kinematic metrics could be obtained including a metric to quantify movement quality (panel B) and metrics to quantify movement compensation (panel C and panel D).

Accuracy

The accuracy of joint position was determined for the right shoulder, right elbow, and right wrist. Based on all trials across all participants, the average RMSE for the right shoulder was 52.3 ± 12.0 mm. The average RMSE for the right elbow was 80.2 ± 14.8 mm, and the average RMSE for the right wrist was 60.9 ± 10.6 mm. Comparing between these joint locations, the right elbow RMSE was significantly higher than both the right shoulder and the right wrist (p’s = 0.0002 & 0.0035, respectively). No statistically significant difference was observed between RMSE of the right shoulder and right wrist, p = 0.11.

The accuracy of kinematic metrics obtained from the CV system was determined by comparing measures for the right upper limb with the same measures obtained from the synchronized marker-based motion capture system. Comparison is illustrated in Fig. 4. For the NMU metric obtained by CV and marker-based motion capture, the mean units were 4.42 and 4.54, respectively. The mean values for the TD metric were 33.63 mm and 30.19 mm, respectively. Finally, the mean values for the MT metric were 6.78 s and 6.63 s, respectively. For all kinematic measures, none of the mean values were significantly different between the CV and marker-based motion capture systems (p’s > 0.23). Across all participants and trials, the mean errors for the NMU metric, TD metric, and MT metric were −0.12 units (95% CI −0.38, 0.14), 3.4 mm (95% CI −0.12, 7.01), and 0.15 s (95% CI −0.06, 0.36), respectively.

Using Bland–Altman analysis, no significant bias was found for any of the kinematic metrics based on presence of the line of equality within the mean difference confidence intervals for each metric (see Fig. 5). For the NMU metric, the limits of agreement ranged from −1.93 (95% CI [−2.38, −1.48]) to 1.69 (95% CI [1.24, 2.14]). For the TD metric, the limits of agreement ranged from −21.66 mm (95% CI [−27.96, −15.35]) to 28.54 mm (95% CI [22.24, 34.85]). For the MT metric, the limits of agreement ranged from −1.33 s (95% CI [−1.70, −0.96]) to 1.63 s (95% CI [1.26, 2.00]). The intraclass correlation coefficients (ICCs) varied across the kinematic measures with the CV system having lower ICCs except for NMU. For NMU, the ICC was relatively small (0.21 for the CV system and 0.11 for the marker-based motion capture system). However, for TD, the ICCs were quite consistent at 0.36 and 0.40, and for the MT, the marker-based motion capture system had a much higher ICC of 0.63 while the CV system was almost half at 0.34.
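To clarify what the ICC expresses, the sketch below computes the classical one-way ANOVA estimator in Python for a balanced design (the same number of trials per participant). The ICCs reported here were derived from the repeated measures models with a compound symmetry structure, so this estimator is shown only as an assumed, closely related illustration, and the function name `icc_oneway` is hypothetical.

```python
def icc_oneway(groups):
    """One-way random-effects ICC for balanced repeated measures.

    groups : list of per-participant lists, each holding k trial values.
    ICC = (MSB - MSW) / (MSB + (k - 1) * MSW), where MSB and MSW are the
    between-participant and within-participant mean squares.
    """
    n = len(groups)
    k = len(groups[0])
    if any(len(g) != k for g in groups):
        raise ValueError("every participant needs the same number of trials")
    means = [sum(g) / k for g in groups]
    grand = sum(means) / n
    msb = k * sum((m - grand) ** 2 for m in means) / (n - 1)
    msw = sum((x - m) ** 2
              for g, m in zip(groups, means) for x in g) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)
```

A low value, as seen for NMU, indicates that trial-to-trial variation within a participant is large relative to differences between participants, which increases the number of trials or participants needed in future studies.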

Bland–Altman analysis. For each kinematic metric, Bland–Altman plots provide a comparison of potential bias in computer vision system, which is represented by comparison of the mean difference line (solid red line) to the line of equality (solid blue line). Additionally, the random error of the data is illustrated by the limits of agreement (dotted green lines) and confidence intervals for both mean difference and limits of agreement are shown in shaded bands (red band and green band, respectively). Due to considerable overlapping of data points when plotting NMU (left), a “jitter plot” option has been applied to reveal the individual data points.

Discussion

This pilot study investigated the application of CV technology for measuring post-stroke kinematic metrics of the upper limb that have been recommended for standardized use14. Our primary objective was to determine feasibility of this approach among a sample of adult neurotypical participants, and our secondary objective was to assess accuracy of this approach. As evidence of feasibility, the data collection protocol was well-tolerated by all participants, and joint position data was successfully extracted by a CV system for all trials attempted. Furthermore, for all trials across all participants, three kinematic metrics for the post-stroke upper limb were successfully obtained. Based on comparison with a synchronized, gold-standard marker-based motion capture system, preliminary evidence suggests no significant difference between the kinematic measurements by CV and those by the gold-standard system.

As with any assessment during stroke rehabilitation, the clinical uptake of kinematic assessment depends on a balance between acceptability and accuracy50. Conventional marker-based approaches have often served as a gold standard approach to acquire kinematics51, but this approach presents obvious challenges to clinical acceptability including high expense and burdensome marker placement15. Electromagnetic systems represent a potentially portable option with adequate accuracy to measure large dynamic movements in a single reference plane52. These systems do require specialized hardware susceptible to electromagnetic interference, and lower sampling rates may be problematic to high frequency human movements53. Wearable sensors offer a relatively affordable and highly mobile solution capable of measuring upper limb joint angles with an RMSE less than 7°54,55. While price and miniaturization continue to progress, wearable sensors inherently require placement of and maintenance of physical devices on an individual, which is not a trivial issue when considering neurodivergent populations or when considering small anatomic landmarks such as the hand joints during reach-to-grasp movements.

By eliminating the need for physically worn devices, CV technology offers inherent benefits to acceptability and studies have explored potential tradeoffs in accuracy. Several studies have examined the accuracy of CV systems that combine cameras and depth sensors in a single device (i.e. RGB-D cameras). Using a single such device to measure the lower limb compared to a marker-based system, the average absolute difference in hip and knee flexion among healthy participants was 4.3 and 1.4°, respectively56. In a similar study comparing an RGB-D camera versus an electrogoniometer, the sagittal-plane hip and knee angles for healthy participants revealed an RMSD of 1.76 and 2.04°, respectively57. When measuring the upper limbs during a lifting and truncal lean activity, an RGB-D camera was found to have an RMSE of 27 and 47 mm, respectively, as compared to a gold standard. In a study measuring joints of the hand, a single healthy participant performed hand spreading and pincer grip activities; compared to a marker-based system, the RGB-D camera demonstrated an average absolute deviation of 2.4, 4.8, and 4.8° at the MCP, PIP, and DIP, respectively58. In a recent preliminary study using a single device during drinking task activity, the RMSE for elbow flexion measured in a single healthy participant was 16.9°59.

With advances in human pose estimation, kinematic assessment can be done with more basic cameras akin to those found in consumer webcams and smartphone cameras. Studies have explored the accuracy of CV systems that use such modest cameras. When evaluating treadmill walking in healthy participants with multiple monochrome cameras, kinematic metrics in sagittal and frontal planes were comparable to that of a marker-based system60. In a similar study of overground walking, the joint locations of the upper and lower limbs were measured with a root mean square difference (RMSD) up to 24 mm and 36 mm, respectively, and the RMSD for joint angles ranged from a minimum 2.6° (hip flexion/extension) to maximum 13.2° (knee internal/external rotation)16. In studies of more diverse activities (jumping, ball throwing), a collection of modest cameras successfully measured joint locations with a mean absolute error ranging from 25 to 67 mm (upper limbs) and from 25 to 42 mm (lower limbs)17. In a related study measuring joint angles during functional mobility activities (stepping down, run/cut), an 8-camera setup measured ankle and knee flexion in healthy participants with RMSD < 6°19.

In our study of a modest dual-camera setup, errors in joint position were comparable to the aforementioned studies with the exception of slightly increased error in elbow joint position. There is a likely explanation for the increased error in our study, namely the definition of joint position. For our experimental CV approach, the joint position is based on a joint center approximated by human pose estimation solutions. That is, joint position of the shoulder is based on an estimated center of the glenohumeral joint. For our gold standard approach, we implemented a well-protocolized, clinically oriented setup for marker-based motion capture, which relies on a limited number of reflective surface markers adhered to the participant’s skin. In this case, joint position of the shoulder is based on a superficial marker placed near the midpoint of the lateral acromion. The surface marker position was used in our validation setup as a surrogate for joint center position, which inherently introduces an offset into our error calculation. While it is possible to use multiple surface markers and biomechanical modeling software to model the joint center position61, this was not applicable to our validation setup.

For joint position of the elbow, our CV approach revealed less accuracy compared to joint position of the shoulder and wrist. This is consistent with prior studies in which participants performed other activities of the upper limb such as arm swing during walking16. This suggests a potentially important phenomenon specific to the elbow, which may be activity-agnostic. A plausible explanation is labeling error in the training data for human pose estimation solutions48. Regardless of the cause, knowledge about joint-specific trends in error may be helpful as future kinematic metrics are developed. Metrics that minimize reliance on more error-prone joint positions may be prioritized. For example, MT and NMU depend solely on the wrist joint position and are independent of the elbow joint position.

Regarding errors in the kinematic metrics (NMU, TD, MT) of the drinking task, the authors are unaware of previous literature that has compared a CV approach to a gold standard approach. As mentioned above, the definition of joint position may contribute error to the drinking task kinematics. In addition, surface markers on the skin introduce known artifacts due to movement of the skin over underlying bony structures, and these skin artifacts are known to contribute errors to marker-based kinematic metrics62. Thus, skin artifacts in our gold-standard comparator may contribute to our error calculations.

While direct comparison to other studies is limited, the errors in kinematic metrics can be clinically interpreted based on foundational studies of the drinking task. Namely, in a series of marker-based motion capture studies by Alt Murphy, the discriminant properties and clinical correlates of drinking task kinematics have been determined40,43. Considering metrics of compensation, participants with mild-moderate stroke demonstrated a TD approximately 50 mm more than a cohort of healthy controls, which far exceeds the mean difference and RMSE determined in our study. Similarly, duration of the drinking task (MT) for individuals affected by stroke is approximately 4.9 s more than for individuals without stroke, which again far exceeds the mean difference and RMSE for MT as measured by our CV approach. Based on correlations with the Action Research Arm Test, real clinical improvement in TD, MT, and NMU has been quantified as changes of 20–50 mm, 2.5–5 s, and 3–7 units, respectively, which exceed the error of the CV approach in the present study43.
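
The comparison above can be checked with simple arithmetic. This short Python sketch divides the mean errors reported in the abstract by the lower bound of each clinically meaningful change range quoted above. The dictionary keys and the function name are hypothetical, and the RMSE values are not repeated in this section, so only mean error is used.

```python
# Mean errors reported in the abstract of this article.
mean_error = {"TD_mm": 3.4, "MT_s": 0.15, "NMU_units": -0.12}

# Lower bounds of the clinically meaningful change ranges quoted above.
min_clinical_change = {"TD_mm": 20.0, "MT_s": 2.5, "NMU_units": 3.0}

def error_fraction(error, change):
    """Magnitude of the error as a fraction of a clinically meaningful change."""
    return abs(error) / change

fractions = {
    name: error_fraction(mean_error[name], min_clinical_change[name])
    for name in mean_error
}

for name, fraction in fractions.items():
    print(f"{name}: mean error is {fraction:.0%} of the smallest meaningful change")
```

With these inputs, the mean error is roughly 17% of the smallest meaningful change for TD, 6% for MT, and 4% for NMU.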

Previous studies with CV have suggested potential bias when compared to marker-based approaches60. This pilot study, however, revealed no significant bias in the Bland–Altman analysis. Of note, the difference between methods for the MT metric trended upward with the magnitude of the mean value, which suggests heteroskedasticity. While between-session reliability could not be assessed in the current pilot study, prior studies in gait kinematics suggest CV may excel when compared to marker-based motion capture63. This advantage has historically been attributed to variations in marker placement, which may be dependent on the anatomic knowledge and experience of the assessor.
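
As a rough illustration of the quantities discussed above, the sketch below computes the bias, the 95% limits of agreement, and two simple trend checks for paired measurements. It is a basic Bland–Altman calculation that treats every pair as independent. The study's analysis may have accounted for repeated trials per participant, which this sketch does not. All function names are hypothetical.

```python
import statistics

def ols_slope(x, y):
    """Least-squares slope of y regressed on x."""
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx

def bland_altman(cv, reference):
    """Return bias, limits of agreement, and trends of the differences
    and of their absolute deviations against the pairwise means."""
    diffs = [c - r for c, r in zip(cv, reference)]
    means = [(c + r) / 2 for c, r in zip(cv, reference)]
    bias = statistics.fmean(diffs)
    sd = statistics.stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    # A nonzero slope of the differences points to a magnitude-dependent difference.
    difference_trend = ols_slope(means, diffs)
    # A nonzero slope of the absolute deviations points to changing spread.
    spread_trend = ols_slope(means, [abs(d - bias) for d in diffs])
    return {
        "bias": bias,
        "limits": limits,
        "difference_trend": difference_trend,
        "spread_trend": spread_trend,
    }
```

A positive `difference_trend` corresponds to the pattern noted for MT, in which the difference between methods grows with the magnitude of the measurement.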

Future opportunities

There are several opportunities to build upon the data acquisition protocol of our preliminary validation study. As mentioned, a marker-based approach is often considered a gold standard for kinematic assessment, and by increasing the number of markers, biomechanical modeling can be used to compensate for the drawbacks of surface markers. Additionally, line-of-sight is a common challenge to both marker-based and CV approaches. A marker-based system becomes ineffective when markers are hidden from camera view, such as might happen when loose clothing shifts or when limbs rotate from view, e.g. a dorsal wrist marker that escapes sight when the forearm supinates. While a CV approach is able to handle visibility issues that might arise from loose-fitting garments, the accuracy is likely to benefit from form-fitting clothing. For research purposes, line-of-sight issues can be mitigated in both approaches by increasing the number of cameras, by improving calibration, and by employing cameras with higher resolution and frame rate. However, evaluators should be mindful of a balance among expense, complexity, accuracy, and acceptability. Regarding synchronization of the validation setup, a manual approach was utilized in our study, and automated synchronization would likely improve the efficiency of data collection.
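
One possible way to automate synchronization is to cross-correlate a signal that both systems record, such as wrist speed, and take the shift with the strongest match. The sketch below is an assumption about how this could be done and is not the procedure used in this study. It presumes both signals have already been resampled to a common rate, and the function name is hypothetical.

```python
import statistics

def best_lag(signal_a, signal_b, max_lag):
    """Return the lag (in samples) at which signal_b[i + lag] best matches
    signal_a[i], searching lags from -max_lag to +max_lag."""
    mean_a = statistics.fmean(signal_a)
    mean_b = statistics.fmean(signal_b)

    def score(lag):
        # Sum of products of mean-centered samples over the overlapping region.
        total = 0.0
        for i, a in enumerate(signal_a):
            j = i + lag
            if 0 <= j < len(signal_b):
                total += (a - mean_a) * (signal_b[j] - mean_b)
        return total

    return max(range(-max_lag, max_lag + 1), key=score)
```

A positive result means the shared event appears later in the second recording. Dividing the lag by the common sampling rate converts it to seconds.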

Beyond data acquisition, there are also several opportunities to advance the signal processing methods of our validation study. We utilized a single solution for human pose estimation, and exploring alternative solutions may enhance the accuracy and performance of the CV system. For signal filtering, several options exist, as demonstrated by the bespoke filters we applied for the different kinematic metrics. One further option described in the literature is rigid body filtering, which employs biomechanical modeling with scaled virtual skeletons and may mitigate signal artifact (e.g. “jitter”) by leveraging anatomic constraints during data post-processing18.
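
The idea of using an anatomic constraint to reduce jitter can be illustrated with a deliberately simplified example: holding a limb segment at a fixed length across frames. This is a stand-in for illustration only and is much simpler than the scaled virtual skeletons described in the cited work. The function name and the choice of the median length as the target are assumptions.

```python
import math
import statistics

def enforce_segment_length(proximal, distal):
    """Move each distal point along its segment direction so that every
    frame has the median proximal-to-distal length across all frames."""
    lengths = [math.dist(p, d) for p, d in zip(proximal, distal)]
    target = statistics.median(lengths)
    corrected = []
    for p, d, length in zip(proximal, distal, lengths):
        # Leave degenerate frames untouched to avoid dividing by zero.
        scale = target / length if length > 0 else 1.0
        corrected.append(tuple(pi + (di - pi) * scale for pi, di in zip(p, d)))
    return corrected
```

For example, passing elbow positions as `proximal` and wrist positions as `distal` keeps the forearm length constant across frames while preserving the direction of the segment in each frame.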

Limitations

There are important limitations to this pilot study. Our data acquisition focused on a small sample of neurotypical participants. While important groundwork is laid, the generalizability of our findings to individuals with a history of stroke is consequently limited. For example, if comparing our participant sample and a hypothetical sample of stroke survivors, the accuracy estimates of the CV system at the group level would be expected to differ for stroke survivors based on the wider movement variations between trials (e.g. wider variety of movement unit patterns, wider extent of trunk displacement). To improve generalizability, future studies will benefit from increased sample size, inclusion of neurodivergent populations, and consideration of more diverse demographics (e.g. age, laterality of hand dominance). In our pilot study, we considered only a single session of data acquisition with each participant, which limits our analysis of repeatability. For our gold standard approach, we chose a reduced marker setup (e.g. single marker on sternal notch) to replicate prior study protocols on the drinking task, but this limits the available kinematic information (e.g. trunk rotation is not measured) and precludes more sophisticated biomechanical modeling (e.g. inverse kinematics). Likewise, our CV system identifies only a limited number of key points and excludes many other landmarks such as digits of the distal hand. While digits of the hands were less important for the kinematic metrics of interest in our pilot study (e.g. NMU, MT, TD), these landmarks are an important future target to consider given that the manipulation of objects is prevalent throughout daily life. Fortunately, these landmarks can be obtained depending on the machine learning solution utilized for human pose estimation. Lastly, the drinking task is a single reach-to-grasp activity, which represents a limited view of a person’s activities of daily living. Future studies may consider a wider spectrum of upper limb activities of daily life as well as activities that are personalized according to an individual’s interests and values.

Conclusion

Based on a pilot study in neurotypical participants, computer vision is a feasible method for measuring kinematic metrics that have been recommended for standardized use in rehabilitation research involving the post-stroke upper limb. Future research is needed to investigate the validity of this technology in people affected by stroke.

Data availability

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.

References

Kwakkel, G., Kollen, B. J., van der Grond, J. & Prevo, A. J. Probability of regaining dexterity in the flaccid upper limb: Impact of severity of paresis and time since onset in acute stroke. Stroke 34(9), 2181–2186 (2003).

Tsao, C. W. et al. Heart disease and stroke statistics—2022 update: A report from the American Heart Association. Circulation 145(8), e153–e639 (2022).

World Health Organization. World Report on Disability 2011 (World Health Organization, 2011).

World Health Organization. International Classification of Functioning, Disability, and Health: ICF 2001 (World Health Organization, 2001).

Moore, J. L., Raad, J., Ehrlich-Jones, L. & Heinemann, A. W. Development and use of a knowledge translation tool: The rehabilitation measures database. Arch. Phys. Med. Rehabil. 95(1), 197–202 (2014).

Alt Murphy, M., Sunnerhagen, K. S., Johnels, B. & Willén, C. Three-dimensional kinematic motion analysis of a daily activity drinking from a glass: A pilot study. J. Neuroeng. Rehabil. 3, 18 (2006).

Schwarz, A., Kanzler, C. M., Lambercy, O., Luft, A. R. & Veerbeek, J. M. Systematic review on kinematic assessments of upper limb movements after stroke. Stroke 50(3), 718–727 (2019).

Alt Murphy, M. (eds) Development and validation of upper extremity kinematic movement analysis for people with stroke. Reaching and drinking from a glass (2013).

Thrane, G., Sunnerhagen, K. S. & Murphy, M. A. Upper limb kinematics during the first year after stroke: The stroke arm longitudinal study at the University of Gothenburg (SALGOT). J. Neuroeng. Rehabil. 17(1), 1–12 (2020).

Levin, M. F., Kleim, J. A. & Wolf, S. L. What do motor “recovery” and “compensation” mean in patients following stroke?. Neurorehabil. Neural Repair 23(4), 313–319 (2009).

Alt Murphy, M., Murphy, S., Persson, H. C., Bergström, U. B. & Sunnerhagen, K. S. Kinematic analysis using 3D motion capture of drinking task in people with and without upper-extremity impairments. J. Vis. Exp. https://doi.org/10.3791/57228-v (2018).

Ellis, M. D., Lan, Y., Yao, J. & Dewald, J. P. A. Robotic quantification of upper extremity loss of independent joint control or flexion synergy in individuals with hemiparetic stroke: A review of paradigms addressing the effects of shoulder abduction loading. J. Neuroeng. Rehabil. 13(1), 95 (2016).

Xu, J. et al. Separable systems for recovery of finger strength and control after stroke. J. Neurophysiol. 118(2), 1151–1163 (2017).

Kwakkel, G. et al. Standardized measurement of quality of upper limb movement after stroke: Consensus-based core recommendations from the second stroke recovery and rehabilitation roundtable. Int. J. Stroke 14(8), 783–791 (2019).

Krakauer, J. W., Carmichael, S. T., Corbett, D. & Wittenberg, G. F. Getting neurorehabilitation right: What can be learned from animal models?. Neurorehabil. Neural Repair 26(8), 923–931 (2012).

Kanko, R. M., Laende, E. K., Davis, E. M., Selbie, W. S. & Deluzio, K. J. Concurrent assessment of gait kinematics using marker-based and markerless motion capture. J. Biomech. 127, 110665 (2021).

Nakano, N. et al. Evaluation of 3D Markerless motion capture accuracy using OpenPose with multiple video cameras. Front. Sports Act. Living https://doi.org/10.3389/fspor.2020.00050 (2020).

Needham, L. et al. The development and evaluation of a fully automated markerless motion capture workflow. J. Biomech. 144, 111338 (2022).

Song, K., Hullfish, T. J., Silva, R. S., Silbernagel, K. G. & Baxter, J. R. Markerless motion capture estimates of lower extremity kinematics and kinetics are comparable to marker-based across 8 movements. bioRxiv. 2023.02.21.526496 (2023).

Uhlrich, S. D., Falisse, A., Kidziński, Ł., Muccini, J., Ko, M., Chaudhari, A. S. et al. OpenCap: 3D human movement dynamics from smartphone videos. bioRxiv. 2022.07.07.499061 (2022).

Prince, S. Computer Vision: Models, Learning, and Inference (Cambridge University Press, 2012).

Cao, Z., Hidalgo, G., Simon, T., Wei, S. E. & Sheikh, Y. OpenPose: Realtime multi-person 2D pose estimation using part affinity fields. IEEE Trans. Pattern Anal. Mach. Intell. 43(1), 172–186 (2021).

Lugaresi, C., Tang, J., Nash, H., McClanahan, C., Uboweja, E., Hays, M. et al. MediaPipe: A Framework for Building Perception Pipelines. https://doi.org/10.48550/arXiv.1906.08172. Available from: https://ui.adsabs.harvard.edu/abs/2019arXiv190608172L (2019).

Kendall, A., Grimes, M. & Cipolla, R. (eds) PoseNet: A convolutional network for real-time 6-DOF camera relocalization. In 2015 IEEE International Conference on Computer Vision (ICCV) 7–13 (2015).

Bajpai, R. & Joshi, D. MoveNet: A deep neural network for joint profile prediction across variable walking speeds and slopes. IEEE Trans. Instrum. Meas. 70, 1–11 (2021).

Chung, J.-L., Ong, L.-Y. & Chew, L. Comparative analysis of skeleton-based human pose estimation. Future Internet 14, 380 (2022).

Kim, W., Sung, J., Saakes, D., Huang, C. & Xiong, S. Ergonomic postural assessment using a new open-source human pose estimation technology (OpenPose). Int. J. Ind. Ergon. 84, 103164 (2021).

Jiaming, C., Ong, L.-Y. & Chew, L. Telehealth using PoseNet-based system for in-home rehabilitation. Future Internet 13, 173 (2021).

Kidziński, Ł et al. Deep neural networks enable quantitative movement analysis using single-camera videos. Nat. Commun. 11(1), 4054 (2020).

Baptista, R. et al. Home self-training: Visual feedback for assisting physical activity for stroke survivors. Comput. Methods Progr. Biomed. 176, 111–120 (2019).

Capecci, M. et al. The KIMORE dataset: Kinematic assessment of movement and clinical scores for remote monitoring of physical rehabilitation. IEEE Trans. Neural Syst. Rehabilit. Eng. 27(7), 1436–1448 (2019).

Cary, F., Postolache, O. & Girão, P. S. (eds) Kinect based system and Artificial Neural Networks classifiers for physiotherapy assessment. In 2014 IEEE International Symposium on Medical Measurements and Applications (MeMeA) 11–12 (2014).

Eichler, N., Hel-Or, H., Shmishoni, I., Itah, D., Gross, B. & Raz, S. (eds). Non-invasive motion analysis for stroke rehabilitation using off the shelf 3D sensors. In 2018 International Joint Conference on Neural Networks (IJCNN) 8–13 (2018).

Pogrzeba, L., Neumann, T., Wacker, M. & Jung, B. Analysis and quantification of repetitive motion in long-term rehabilitation. IEEE J. Biomed. Health Inform. 23(3), 1075–1085 (2019).

Lee, T., Jeon, E. T., Jung, J. M. & Lee, M. Deep-learning-based stroke screening using skeleton data from neurological examination videos. J. Pers. Med. 12(10), 1691 (2022).

Lin, H. P., Zhao, L., Woolley, D., Zhang, X., Cheng, H. J., Liang, W. et al. Exploring the feasibility of computer vision for detecting post-stroke compensatory movements. In IEEE International Conference on Rehabilitation Robotics, Vol. 2023 1–6 (2023).

Zestas, O. N., Soumis, D. N., Kyriakou, K. D., Seklou, K. & Tselikas, N. D. A computer-vision based hand rehabilitation assessment suite. AEU Int. J. Electron. Commun. 169, 154762 (2023).

Kim, B. & Neville, C. Accuracy and feasibility of a novel fine hand motor skill assessment using computer vision object tracking. Sci. Rep. 13(1), 1813 (2023).

Debnath, B., O’Brien, M., Yamaguchi, M. & Behera, A. A review of computer vision-based approaches for physical rehabilitation and assessment. Multimed. Syst. 28(1), 209–239 (2022).

Alt Murphy, M., Willén, C. & Sunnerhagen, K. S. Kinematic variables quantifying upper-extremity performance after stroke during reaching and drinking from a glass. Neurorehabil. Neural Repair 25(1), 71–80 (2011).

Alt Murphy, M., Willén, C. & Sunnerhagen, K. S. Movement kinematics during a drinking task are associated with the activity capacity level after stroke. Neurorehabil. Neural Repair 26(9), 1106–1115 (2012).

Shapiro, R. Direct linear transformation method for three-dimensional cinematography. Res. Q. 49(2), 197–205 (1978).

Alt Murphy, M., Willén, C. & Sunnerhagen, K. S. Responsiveness of upper extremity kinematic measures and clinical improvement during the first three months after stroke. Neurorehabil. Neural Repair 27(9), 844–853 (2013).

Cerveri, P., Pedotti, A. & Ferrigno, G. Robust recovery of human motion from video using Kalman filters and virtual humans. Hum. Mov. Sci. 22(3), 377–404 (2003).

Crenna, F., Rossi, G. B. & Berardengo, M. Filtering biomechanical signals in movement analysis. Sensors (Basel) 21(13), 4580 (2021).

Youngjoo, K. & Hyochoong, B. Introduction to Kalman filter and its applications. In Ch. 2 Introduction and Implementations of the Kalman Filter (ed. Felix, G.) (IntechOpen, 2018).

Kabsch, W. A solution for the best rotation to relate two sets of vectors. Acta Crystallogr. Sect. A 32(5), 922–923 (1976).

Needham, L., Evans, M., Cosker, D. P. & Colyer, S. L. Can Markerless pose estimation algorithms estimate 3D mass centre positions and velocities during linear sprinting activities?. Sensors 21(8), 2889 (2021).

Bland, J. M. & Altman, D. G. Agreement between methods of measurement with multiple observations per individual. J. Biopharm. Stat. 17(4), 571–582 (2007).

Sivan, M., O’Connor, R. J., Makower, S., Levesley, M. & Bhakta, B. Systematic review of outcome measures used in the evaluation of robot-assisted upper limb exercise in stroke. J. Rehabilit. Med. 43(3), 181–189 (2011).

Yang, S. et al. Markerless motion capture systems for tracking of persons in forensic biomechanics: An overview. Comput. Methods Biomech. Biomed. Eng. 2, 46–65 (2013).

Urbanczyk, C. A., Bonfiglio, A., McGregor, A. H. & Bull, A. M. J. Comparing optical and electromagnetic tracking systems to facilitate compatibility in sports kinematics data. Int. Biomech. 8(1), 75–84 (2021).

Hindle, B. R., Keogh, J. W. L. & Lorimer, A. V. Inertial-based human motion capture: A technical summary of current processing methodologies for spatiotemporal and kinematic measures. Appl. Bionics Biomech. 2021, 6628320 (2021).

Cutti, A. G., Giovanardi, A., Rocchi, L., Davalli, A. & Sacchetti, R. Ambulatory measurement of shoulder and elbow kinematics through inertial and magnetic sensors. Med. Biol. Eng. Comput. 46(2), 169–178 (2008).

El-Gohary, M. & McNames, J. Shoulder and elbow joint angle tracking with inertial sensors. IEEE Trans. Biomed. Eng. 59(9), 2635–2641 (2012).

Schmitz, A. et al. The measurement of in vivo joint angles during a squat using a single camera markerless motion capture system as compared to a marker based system. Gait Posture 41(2), 694–698 (2015).

Natarajan, S. K., Wang, X., Spranger, M. & Gräser, A. (eds) Reha@Home—A vision based markerless gait analysis system for rehabilitation at home. In 2017 13th IASTED International Conference on Biomedical Engineering (BioMed) 20–21 (2017).

Metcalf, C. D. et al. Markerless motion capture and measurement of hand kinematics: Validation and application to home-based upper limb rehabilitation. IEEE Trans. Biomed. Eng. 60(8), 2184–2192 (2013).

Scott, B., Chadwick, E., McInnes, M. & Blana, D. Assessing single camera markerless motion capture during upper limb activities of daily living. Gait Posture 106, S184 (2023).

Sandau, M. et al. Markerless motion capture can provide reliable 3D gait kinematics in the sagittal and frontal plane. Med. Eng. Phys. 36(9), 1168–1175 (2014).

Rab, G., Petuskey, K. & Bagley, A. A method for determination of upper extremity kinematics. Gait Posture 15(2), 113–119 (2002).

Benoit, D. L. et al. Effect of skin movement artifact on knee kinematics during gait and cutting motions measured in vivo. Gait Posture 24(2), 152–164 (2006).

Kanko, R. M., Laende, E., Selbie, W. S. & Deluzio, K. J. Inter-session repeatability of markerless motion capture gait kinematics. J. Biomech. 121, 110422 (2021).

Acknowledgements

Not Applicable

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: JH was supported by the National Center for Advancing Translational Sciences, National Institutes of Health, through grant number UL1TR001998 and the University of Kentucky College of Medicine Deans Office. The content is solely the responsibility of the authors and does not necessarily represent the official views of the NIH.

Author information

Contributions

Study design and planning was led by J.H. Data acquisition was performed by J.H. Data post-processing was performed by J.H. and J.B. Data analysis was performed by S.S., and data interpretation was led by J.H. with review by J.B. Manuscript initial preparation was conducted by J.H. Manuscript revisions were performed by J.H., S.S., and J.B. All authors approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval and consent to participate

Research has been performed in accordance with the Declaration of Helsinki. The protocol was reviewed by the University of Kentucky Institutional Review Board (IRB# 63176). Informed consent authorizing participation and authorizing use of photographs/videos was obtained from each participant and secured.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Huber, J., Slone, S. & Bae, J. Computer vision for kinematic metrics of the drinking task in a pilot study of neurotypical participants. Sci Rep 14, 20668 (2024). https://doi.org/10.1038/s41598-024-71470-8

DOI: https://doi.org/10.1038/s41598-024-71470-8
