Abstract

Background

Standardization of procedures for data abstraction by cancer registries is fundamental for cancer surveillance, clinical and policy decision-making, hospital benchmarking, and research efforts. The objective of the current study was to evaluate the adherence of the hospital-based National Cancer Database (NCDB) to the four components (completeness, comparability, timeliness, and validity) defined by Bray and Parkin that determine registries’ ability to carry out these activities.

Methods

This study used data from U.S. Cancer Statistics, the official federal cancer statistics and a joint effort between the Centers for Disease Control and Prevention (CDC) and the National Cancer Institute (NCI), which includes data from the National Program of Cancer Registries (NPCR) and Surveillance, Epidemiology, and End Results (SEER), to evaluate NCDB completeness between 2016 and 2020. The study evaluated comparability of case identification and coding procedures. It used Commission on Cancer (CoC) standards from 2022 to assess timeliness and validity.

Results

Completeness was demonstrated with a total of 6,828,507 cases identified within the NCDB, representing 73.7% of all cancer cases nationwide. Comparability was achieved through the use of standardized international guidelines on coding and classification procedures. For timeliness, hospital compliance with timely data submission was 92.7%. Validity criteria for re-abstracting, recoding, and reliability procedures across hospitals demonstrated 94.2% compliance. Additionally, data validity was shown by a 99.1% compliance with histologic verification standards, a 93.6% assessment of pathologic synoptic reporting, and a 99.1% internal consistency of staff credentials.

Conclusion

The NCDB is characterized by a high level of case completeness and comparability with uniform standards for data collection, and by hospitals with high compliance, timely data submission, and high rates of compliance with validity standards for registry and data quality evaluation.

Medical practices and advances in health care are information dependent, and both rely on high-quality data. In recent years, the availability of health care data and analytic platforms has grown exponentially with increasing use of electronic medical records and insurance claims. However, just as the evidence generated by clinical trials is rigorously tested through a set of preexisting data quality procedures,1,2 other sources of data also could be graded in a uniformly defined and regulated manner.

The usability of all data sources is crucial to understanding strengths and limitations. With new data sources becoming more accessible among clinicians and researchers to help shape the future of health care, ensuring data quality through a standardized evaluation plays an increasingly critical role. One such standardized approach to assessing the quality of data collected by cancer registries is the framework described by Bray and Parkin3,4 in 2009.

The Bray and Parkin registry and data quality framework was developed with four unique domains: completeness, comparability, timeliness, and validity.3,4 Completeness represents the extent to which all the incident cases of cancer occurring in the population are included in a registry.3,4 Completeness is crucial for ensuring that estimates approximate the true value in the population.3,4 Comparability represents the extent to which statistics generated for different populations, using data from different sources and over time, can be compared.3,4 Comparability is achieved using standardized guidelines on classification procedures, maintaining consistency for coding cancer cases.3,4 Timeliness relates to the rapidity with which a registry can abstract and report reliable cancer data, which is crucial for decision-making.3,4 Validity represents the proportion of cases in a dataset with a given characteristic that truly have that attribute, which is crucial for relevant interpretation of estimates calculated using the data.3,4 Importantly, this framework has been applied across numerous cancer registries worldwide, demonstrating its ability to affirm, document, and benchmark data quality.5,6,7

The processes that ensure data quality of both population- and hospital-based cancer registries in the United States of America (USA) have been consistent for several decades and include standardization of data-field definitions, quality checks executed during data abstraction, and case monitoring after submission (Fig. 1). The principal aim of a population-based cancer registry is to record all new cases in a geographic area or state, with an emphasis on epidemiology and public health.8,9 By contrast, a hospital-based registry is designed to improve patient quality of care at the institutional level.8,9 Both population- and hospital-based cancer registries adhere to uniform procedures during the record abstraction and coding process to ensure accuracy but serve different purposes.

Fig. 1 National Cancer Registry quality processes. The quality of cancer data in the United States is supported by a large, multi-agency National Cancer Registry stakeholder community that works collaboratively to ensure consistent, high-quality cancer data that can be applied across diverse utilities. These National Cancer Registry stakeholders standardize cancer data definitions, abstraction and coding rules, and registry-based quality procedures as well as registrar education, training, and certification. These national standards are monitored at the hospital level through compliance with quality procedures during the record abstraction and coding process as well as at the national level during the process of data aggregation for quality and reporting. AJCC American Joint Committee on Cancer, CDC Centers for Disease Control and Prevention, CoC Commission on Cancer, NAACCR North American Association of Central Cancer Registries, Inc.; NCDB National Cancer Database, NCRA National Cancer Registrars Association, SEER Surveillance, Epidemiology, and End Results Program, STORE Standards for Oncology Registry Entry, SSDI Site-Specific Data Item, WHO World Health Organization

The reporting of cancer cases to the population-based central cancer registry (CCR) is mandated by legislation in the USA and territories.10,11 The cases identified by these CCRs are then reported to national cancer registries.10,11,12 The reporting of cancer cases within a hospital is mandated by the hospital-based National Cancer Database (NCDB) to maintain accreditation from the Commission on Cancer (CoC).13,14 Although the Bray and Parkin quality control criteria were written primarily with population-based registries in mind, we propose their use for large hospital-based registries, such as the NCDB.

Cancer surveillance programs collaborate to standardize definitions of relevant cancer data items and closely monitor estimates of cancer trends and outcomes calculated using different data sources.9 Each cancer surveillance program works with oncology data specialist (ODS)-certified cancer registrars who are educated, trained, and certified in abstracting cancer data following established definitions and rules.9,15 Although these processes, among many others, have demonstrated consistency over time, they also are dynamic and undergo periodic revisions to incorporate advances in cancer care and ensure the availability of contemporary cancer data.9,15

The NCDB is a hospital-based cancer registry and contains approximately 40 million records, collecting data on patients with cancer since 1989.16,17 The NCDB is jointly maintained by the American College of Surgeons CoC and the American Cancer Society.13,17 To earn voluntary CoC accreditation, a hospital must meet quality of patient care and data quality standards.13 Hospitals are evaluated on their compliance with the CoC standards on a triennial basis through a site visit process to maintain levels of excellence in the delivery of comprehensive patient-centered care.13 The CoC standards are designed to ensure that the processes of the hospital’s cancer program support multidisciplinary patient-centered care.13 Adherence to these standards is required to maintain accreditation in the CoC. The standards demonstrate a hospital’s investment in structure along a full continuum from cancer prevention to survivorship.13

Overall, approximately 1500 CoC-accredited hospitals submit data to the NCDB each year.16 The NCDB collects data from patients in all phases of first-course treatment in cancer care and cancer surveillance and includes the addition of roughly 1.5 million records with newly diagnosed cancers annually.14,16,17 Reportable cancer diagnoses will originate from single- and multi-institution cancer registries.18 The fundamental purpose of the NCDB is to capture data designed to improve patient outcomes.18

Evidence-based quality measures representing clinical best practice are reported from the NCDB through interactive benchmarking reports.13 This includes the Rapid Cancer Reporting System (RCRS), a web-based tool designed to facilitate real-time reporting of cancer cases.13

Although registrars who submit data to the NCDB are involved in aspects of both the population-based registries and the hospital-based registries, not all quality procedures performed by registrars pertain to the NCDB (Table 1). Quality procedures identified by Bray and Parkin that are relevant only to population-based cancer registries include assessment of age-specific curves, incidence rates of childhood cancers, mortality incidence ratio stability, number and average sources per case, and death certificate methods.11 Death certificate-only analyses are performed routinely across all population-based registries.11 Death certificate analysis as a quality indicator does not directly affect the NCDB. However, other quality procedures are performed after data submission as part of data aggregation, quality assessment, and reporting within the NCDB.

The NCDB is part of a multi-agency National Cancer Registry community in the USA that works collaboratively to ensure that consistent, high-quality cancer data can be applied across diverse utilities (Fig. 1). This surveillance community comprises the central cancer registries, including the Centers for Disease Control and Prevention (CDC) National Program of Cancer Registries (NPCR) and the Surveillance, Epidemiology, and End Results (SEER) Program of the National Cancer Institute (NCI); the National Cancer Registrars Association (NCRA); and the CoC.19 The North American Association of Central Cancer Registries (NAACCR) is also part of this community and serves a vital role as a consensus organization.11 The NAACCR facilitates standardization of data definitions, abstraction and coding rules, quality procedures, and registry certification, which in turn ensures uniform registry processes and establishes data quality standards.11 Instructions to support standardized data definitions, abstraction, and coding rules, as well as quality procedures, are detailed in key manuals and documents.11

An assessment of existing quality processes and procedures is fundamentally important to ensuring that the best possible data are being used to inform cancer practices and policies. The principal aim of this study was to assess the quality of cancer data collected by the NCDB using the Bray and Parkin framework.

Methods

Completeness

Completeness, defined as a measure of representation, is the extent to which all the incident cancer cases occurring in the population are included in the registry. Case-finding procedures are considered critical to both cancer registry coverage and survival accuracy. Completeness includes nine quality procedures (Table 1).3,4

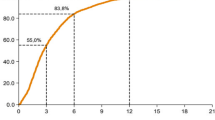

Because of the legislative mandate to report cancer cases to population-based cancer registries in the USA, population-based cancer registries are regarded as the gold standard for data completeness.11 We evaluated data completeness within the NCDB by comparing the number of incident cancer cases from participating central registries included in the United States Cancer Statistics (USCS), the official federal cancer statistics.12 These statistics include cancer registry data from the CDC’s NPCR and the NCI SEER program.12 The USCS internal quality control file includes cases from all 50 states and the District of Columbia, providing information on demographic and tumor characteristics.12

Cancers diagnosed at a Veterans Affairs hospital were excluded from the NCDB analysis. Cases were further limited to malignant disease, except that benign and borderline brain and other nervous system tumors and female in situ breast cancers were also included. Only cancers diagnosed in male and female patients within the USA between 2016 and 2020 were included.

The percentage of cancer cases captured within the NCDB from 2016 to 2020 was compared against prior reports, which included diagnostic years 2012 to 2014.14 Comparisons were made by primary disease site using the SEER definitions of the World Health Organization (WHO) International Classification of Diseases for Oncology, third edition (ICD-O-3) site recodes.20 Additional stratification included sex, diagnosis year, patient age, race/ethnicity, and state of diagnosis corresponding to the patient’s residence.

Outcomes for other measures of completeness that affect all registries (Table 1) have been previously reported.21 Incidence case ascertainment for the NCDB is continuously verified with CoC special studies, which are required for accreditation, and specifically capture additional data on previously submitted cancer diagnoses. This provides an extra level of detail and audit of abstraction accuracy. Independent studies using data from the NCDB have demonstrated case ascertainment compared with trials and claims data.22,23,24 This type of auditing may be extended to assess registry completeness.

Comparability

The study ensured comparability by using standardized international guidelines on coding and classification procedures for cancer data abstraction.3,4 Cancers reported to the NCDB are identified by the WHO ICD-O-3 topography, morphology, behavior, and grade codes.25 The ICD-O-3 topography and histology codes are categorized into cancer types.15,26,27,28 Coding rules are maintained in registry manuals so that data items are abstracted and submitted to the registry with universal rules and codes.15,26,27,28 Staging standards are defined by the American Joint Committee on Cancer (AJCC).29 The rules for coding include timing relative to initiation of treatment. Clinical staging includes the extent of cancer information before initiation of definitive treatment or within 4 months after the date of diagnosis, whichever is shorter.29,30 Pathologic staging includes any information obtained about the extent of cancer through completion of definitive surgery or within 4 months after the date of diagnosis, whichever is longer.29,30 Secondary diagnosis codes are captured by the cancer registry as International Classification of Diseases, 10th Revision codes.30 The CoC also requires registries to submit up to 10 comorbid conditions to the NCDB. These conditions influence the health status of the patient and treatment complications.30
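The staging time windows described above can be illustrated with a short sketch. This is only a simplified reading of the rule as worded in this section, not the AJCC or STORE specification: the function names are hypothetical, and "4 months" is assumed here to mean four calendar months with the day of month clamped to the end of shorter months.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole calendar months, clamping the day
    to the last day of the target month (assumption for this sketch)."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def clinical_staging_cutoff(diagnosis: date, treatment_start: date) -> date:
    """Clinical staging: start of definitive treatment or 4 months after
    diagnosis, whichever is shorter (the earlier date)."""
    return min(treatment_start, add_months(diagnosis, 4))


def pathologic_staging_cutoff(diagnosis: date, surgery_completed: date) -> date:
    """Pathologic staging: completion of definitive surgery or 4 months
    after diagnosis, whichever is longer (the later date)."""
    return max(surgery_completed, add_months(diagnosis, 4))
```

Under these assumptions, a patient diagnosed on 31 October 2020 who starts treatment on 1 December 2020 has a clinical staging window that closes on the treatment date, whereas the pathologic window extends to the later of the surgery date and the 4-month mark.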

An interactive drug database maintained by SEER facilitates the proper coding of treatment fields.31 The rules for diagnostic confirmation require the reportability of both clinically diagnosed and microscopically confirmed tumors.30 Clinically diagnosed tumors are those with the diagnosis based only on diagnostic imaging, laboratory tests, or other clinical examinations, whereas microscopically confirmed tumors include all tumors with positive histopathology.11,30 Cancer registries reference both “ambiguous terms at diagnosis” to determine case reportability and “ambiguous terms describing tumor spread” for staging purposes.30 For reportability, the NCDB follows rules for class of case to describe the patient’s relationship to the facility. Rules exist for the reporting of multiple primary tumors to the NCDB.32 These solid tumor rules are aimed at promoting consistent and standardized coding by cancer registrars and are intended to guide registrars through the process of determining the correct number of primary tumors.32

Timeliness

No international guidelines for cancer registry data submission timeliness exist, although the cancer surveillance community has specific timeliness standards for their respective registries.11 Timeliness of NCDB data submission was assessed using compliance with CoC standard 6.4 (Table 1).13

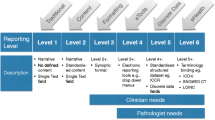

Validity

Validity is defined by Bray and Parkin3,4 as the proportion of cases in a dataset with a given characteristic that truly have this characteristic. Data validity is maintained through procedures specific to quality control that are integral to the registry and tied to CoC standards 3.2, 4.3, 5.1, and 6.1 for CoC accreditation (Table 1).13

Accreditation for anatomic pathology by a qualifying organization is a component of standard 3.2, designed to further structure quality assurance protocols.13 Histologic verification also is assessed in compliance with CoC standard 3.2 and ensures that each hospital provides diagnostic imaging services, radiation oncology services, and systemic therapy services on site with accreditation by a qualifying organization for anatomic pathology.13

Compliance with CoC standard 4.3 is assessed for internal consistency, which ensures that all case abstraction is performed by cancer registrars who hold current certification by the NCRA.13,15 This ensures that registrars use, maintain, and continue their formal education through NCRA and thus continue working toward correct interpretation and coding of cancer diagnoses.13,15

Standard 5.1 requires College of American Pathologists33 synoptic reporting and for each hospital to perform an annual internal audit, confirming that at least 90% of all cancer pathology reports are in synoptic format.13

The database validity criteria for re-abstracting, recoding, and reliability procedures identified by Bray and Parkin are measured in compliance with CoC standard 6.1. Additionally, data edits are integrated to maintain quality control.11 These electronic logical rules evaluate internal consistency of values or data items.11 For instance, a biologic woman with a diagnosis of prostate cancer will fail edits. Edits are currently maintained by NAACCR based on edits originally developed by SEER.34 The NAACCR Edits’ Metafile comprises validation checks applied to cancer data.34 The CDC develops and maintains software (EditWriter and GenEDITS Plus) for registries to obtain edit reports on their cases using the standards maintained by NAACCR.34,35 The NCDB assigns scores that are applied to the call for data and to RCRS reporting requirements, causing a case to be rejected or accepted into either dataset.36 An edit score of 200 will cause a record to be rejected from the NCDB.36
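As a rough illustration of how a logical edit of this kind might work, the sketch below flags a prostate primary recorded for a female patient and rejects the record when a failed edit carries the rejection score of 200 mentioned above. It is not the NAACCR Edits Metafile or the NCDB scoring logic: the field names, the `EditFailure` type, the code values (`"C619"` for prostate topography, `"2"` for female), and the score assigned to this particular edit are all assumptions made for the example.

```python
from dataclasses import dataclass

REJECT_SCORE = 200  # rejection score stated in the text


@dataclass
class EditFailure:
    name: str   # hypothetical edit identifier
    score: int  # hypothetical score assigned to the failed edit


def sex_site_edit(record: dict) -> list[EditFailure]:
    """Check internal consistency between sex and primary site.

    Assumed coding for this sketch: primary_site "C619" denotes prostate
    and sex "2" denotes female.
    """
    failures = []
    if record.get("primary_site") == "C619" and record.get("sex") == "2":
        failures.append(EditFailure("sex_site_conflict", REJECT_SCORE))
    return failures


def is_rejected(record: dict) -> bool:
    """Reject the record if any failed edit reaches the rejection score."""
    return any(f.score >= REJECT_SCORE for f in sex_site_edit(record))
```

A production edit set would chain many such rules and report every failure to the registrar; a single rule is shown here only to make the idea of an electronic consistency check concrete.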

All data were analyzed using SAS version 9.4 (SAS Institute, Cary, NC, USA)37 or SEER Surveillance Research Program, National Cancer Institute SEER*Stat software version 8.4.2.38

Results

The exclusion and inclusion criteria resulted in 9,269,442 cases from the USCS and 6,828,507 cases from the NCDB. Compared with the USCS, the official cancer statistics,39 the NCDB demonstrated 73.7% completeness of cancer cases diagnosed in the USA between 2016 and 2020 (Table 2). Among the top 10 major cancer sites, breast cancer in males and females had the highest coverage, at 81.9%, and the lowest coverage was found for melanoma of the skin in males and females, at 52.0% (Table 2). In aggregate, coverage steadily increased from 73.0% in 2016 to 74.3% in 2020 (Table 3). Age group comparisons showed the lowest coverage (61.1%) for the patients 85 years of age or older, with the highest coverage for those 20–74 years of age (73.1–80.4%) (Table 3). Race and ethnicity comparisons showed coverage to be 68.4% for white patients, 73.7% for black patients, 41.0% for American Indian/Alaskan Native patients, 70.7% for Asian/Pacific Islander patients, and 56.4% for Hispanic patients (Table 3). Finally, by state, Arkansas demonstrated the lowest coverage (24.0%), and North Dakota demonstrated the highest coverage (98.9%) (Table 4).
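The overall completeness figure can be reproduced from the two case counts reported above. The snippet below is a minimal sketch of that arithmetic; the function name `coverage_percent` is hypothetical, and the analysis itself was run in SAS and SEER*Stat rather than Python.

```python
def coverage_percent(registry_cases: int, reference_cases: int) -> float:
    """Percentage of reference (population-based) cases captured by the registry."""
    if reference_cases <= 0:
        raise ValueError("reference_cases must be positive")
    return 100.0 * registry_cases / reference_cases


# Case counts reported in the text for diagnosis years 2016 to 2020.
ncdb_cases = 6_828_507
uscs_cases = 9_269_442

overall = coverage_percent(ncdb_cases, uscs_cases)
print(f"NCDB coverage: {overall:.1f}%")  # prints "NCDB coverage: 73.7%"
```

The same ratio, computed within each stratum (site, year, age group, race/ethnicity, or state), would yield the stratified coverage values summarized in Tables 2 to 4.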

For timeliness, CoC standard 6.4 was assessed on the requirement for timely data submission, with compliance at 92.7% (Table 5).13 This standard has three components. The first criterion assesses compliance with monthly data submissions of all new and updated cancer cases.13 The second criterion ensures that all analytic cases are submitted to the NCDB’s annual call for data.13 The third criterion requires hospitals at least twice each calendar year to review the quality measures performance rates, which are affected by timeliness of data submission.13
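The three components of standard 6.4 listed above can be read as a simple conjunction of checks. The sketch below is a hypothetical simplification for illustration only: the function and parameter names are invented, and the actual CoC compliance rating involves more detail than these three conditions.

```python
def meets_standard_6_4(
    months_submitted: set[int],
    annual_call_submitted: bool,
    quality_reviews: int,
) -> bool:
    """Return True when all three timeliness components are satisfied.

    months_submitted: calendar months (1-12) with a data submission.
    annual_call_submitted: analytic cases sent to the annual call for data.
    quality_reviews: number of quality measure reviews in the calendar year.
    """
    monthly_ok = set(range(1, 13)) <= months_submitted  # every month covered
    reviews_ok = quality_reviews >= 2                   # at least twice a year
    return monthly_ok and annual_call_submitted and reviews_ok
```

For example, a hospital that submitted data in every month, responded to the annual call, and reviewed its quality measures twice would satisfy all three conditions under this reading, whereas a single missed month would not.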

Validity was assessed on compliance with CoC standards 3.2, 4.3, 5.1, and 6.1 at more than 90% (range, 93.6–99.1%) (Table 5). The compliance rate for CoC standard 6.1, which requires review of at least 10% of cases each year and CoC hospitals to establish a cancer registry quality control plan, was 94.2%.13 The re-abstracting and recoding auditing approaches involve data captured by the registry compared with data collected by a designated auditor.11 Compliance with histologic verification standards was high, at 93.6% for CoC standard 5.1 pathologic synoptic reporting and 99.1% for CoC standard 3.2 accreditation for anatomic pathology by a qualifying organization. The synoptic format must be structured and must include all core elements reported in a “diagnostic parameter pair” format.13 Each diagnostic parameter pair must be listed together in synoptic format at one location in the pathology report.13 Compliance with CoC standard 4.3 was at 99.1%. This standard for credentials may additionally include participation in reliability studies designed to measure abstractor and coder compliance with existing coding rules.11

Reproducibility is a goal in assessing the reliability study measures to help identify ambiguity or inadequacy of existing data definitions and rules as well as education needs.11 Edit checks at the time of data submission are part of the NCDB validity criteria and are covered in the Bray and Parkin criteria.3 During the 2023 annual call for data, which began in March 2023, the NCDB processed 12,151,768 records consisting of 2021 diagnoses and follow-up resubmissions from prior years. Of the total, 71,854 cases failed the NCDB edits score, representing less than 1%.

Discussion

The current study characterized the NCDB data quality in all four domains defined by Bray and Parkin,3,4 including high rates of completeness, comparability, timeliness, and validity. The cancer registry stakeholder community, depicted in Fig. 1, collaborates to standardize abstraction practice with universal coding definitions. The CoC accreditation standards layer an additional component to quality assurance with regard to histologic verification, registry staff credentials, synoptic reports, and inclusion of submission timeliness. Altogether, nearly all components of the Bray and Parkin framework that apply to the hospital-based NCDB are maintained, with results indicative of consistency and stability over time.

The CoC standards for data quality that we examined are associated with high compliance and are a necessary component to maintain accreditation by the CoC. Cancer hospitals of the CoC are diverse by region, patient case mix, and volume, yet still display unified adherence to compliance with metrics designed to promote high quality of data.

Many of the countries that previously reported on national registry data quality have universal health care coverage with a single or two-tiered national provider.5,6 Norway has an 11-digit personal identification assigned to all newborns and people residing in the country.5 In contrast, the USA has a complex system of insurance options and eligibility criteria that patients navigate on their own or through their employer. The USA has no national patient identifier, and the gathering of cancer data could be further complicated by the variability in electronic health record systems, which may not be interoperable.

Despite these challenges, registrars who submit data to the NCDB demonstrate the effectiveness of quality control mechanisms developed in partnership with the registry stakeholder community, yielding high-quality data. Hospitals are required to follow standard processes and procedures to abstract and report data to the NCDB, including treatment information, which makes the NCDB a valuable resource for evaluating cancer treatment patterns. Although central registries capture treatment information, this varies by state and therefore is not routinely available in the public-facing NPCR and SEER data.

This study had several limitations. First, the NCDB does not capture data beyond those hospitals accredited by the CoC. The USA has approximately 6000 hospitals,40 with variable definitions and practices. Through this study, we determined that the NCDB captures 73.7% of cancer patients in the USA compared with national data.

A second limitation was that the NCDB does not collect direct patient identifiers, including name. The patient’s name is necessary to run the NAACCR algorithm used by population-based registries to identify Hispanic ethnicity, which may explain the lower coverage of Hispanic patients in the NCDB.

Finally, the NCDB is not designed to assess changes in clinical practices or quality of care in real time, although with the launch of RCRS, more timely evaluation of sudden changes in cancer care and outcomes, such as those that occurred during the first months of the COVID-19 pandemic, is increasingly feasible. Mandatory concurrent data abstraction rules are in place and required of hospitals accredited by the CoC. Data submission rules are currently in place that require all new and updated cancer cases to be submitted monthly.13 Additional progress with timeliness is expected as the CoC standards for concurrent abstraction are adjusted to include the diagnostic and first treatment phase of care. There are plans for future studies to evaluate the completeness, comparability, validity, and timeliness of RCRS data and the feasibility of using real-time data in research.

Advances in cancer control are information dependent. As new data sources and analytic platforms become available, it is imperative that data quality be considered alongside data availability to ensure information validity and reliability. The data quality standards described in this report and adhered to by the NCDB facilitate reporting to hospital administration personnel for decision-making, researchers and epidemiologists, and quality analysts, as well as to governments that mandate reporting of cancer.

Registry data must be comprehensive, granular, and valid. High-quality data allow use of the NCDB during the CoC accreditation process to include reports on quality-of-care measures and patient outcomes assessments. The NCDB provides a comprehensive view of cancer care in the USA within CoC-accredited hospitals.

References

Pollock BH. Quality assurance for interventions in clinical trials: multicenter data monitoring, data management, and analysis. Cancer. 1994;74(9 Suppl):2647–52.

Menard T, Barmaz Y, Koneswarakantha B, Bowling R, Popko L. Enabling data-driven clinical quality assurance: predicting adverse event reporting in clinical trials using machine learning. Drug Saf. 2019;42:1045–53.

Bray F, Parkin DM. Evaluation of data quality in the cancer registry: principles and methods: part I. comparability, validity and timeliness. Eur J Cancer. 2009;45:747–55.

Parkin DM, Bray F. Evaluation of data quality in the cancer registry: principles and methods: part II. completeness. Eur J Cancer. 2009;45:756–64.

Larsen IK, Smastuen M, Johannesen TB, et al. Data quality at the cancer registry of Norway: an overview of comparability, completeness, validity, and timeliness. Eur J Cancer. 2009;45:1218–31.

Dimitrova N, Parkin DM. Data quality at the Bulgarian National cancer registry: an overview of comparability, completeness, validity, and timeliness. Cancer Epidemiol. 2015;39:405–13.

Wanner M, Matthes KL, Korol S, Dehler S, Rohrmann S. Indicators of data quality at the Cancer Registry Zurich and Zug in Switzerland. Biomed Res Int. 2018;1:11.

SEER Cancer Registration and Training Modules. https://training.seer.cancer.gov/registration/types/hospital.html. Accessed 13 Nov 2023.

Cancer Surveillance Programs. https://www.cancer.org/cancer/managing-cancer/making-treatment-decisions/clinical-trials/cancer-surveillance-programs-and-registries-in-the-united-states.html#:~:text=The%20National%20%20Cancer%20%20Institute%E2%80%99s%20%20(NCI,Survival. Accessed 17 July 2023.

Duggan MA, Anderson WF, Altekruse S, Penberthy L, Sherman ME. The surveillance, epidemiology, and end results (SEER) program and pathology: toward strengthening the critical relationship. Am J Surg Pathol. 2016;40:e94–102.

North American Association of Central Cancer Registries (NAACCR) Standards for cancer registries volume III: standards for completeness, quality, analysis, management, security, and confidentiality of data. https://www.naaccr.org/wp-content/uploads/2016/11/Standards-for-Completeness-Quality-Analysis-Management-Security-and-Confidentiality-of-Data-August-2008PDF.pdf. Accessed 17 July 2023.

U.S. Cancer Statistics Working Group. U.S. Cancer Statistics Data Visualizations Tool, based on 2022 submission data (1999–2020): U.S. Department of Health and Human Services, Centers for Disease Control and Prevention and National Cancer Institute. https://www.cdc.gov/cancer/dataviz. Accessed 14 Nov 2023.

Optimal Resources for Cancer Care, 2020 Standards. https://acs.wufoo.com/forms/download-optimal-resources-for-cancer-care/. Accessed 17 July 2023.

Mallin K, Browner A, Palis B, et al. Incident cases captured in the National Cancer Database compared with those in U.S. population-based central cancer registries in 2012–2014. Ann Surg Oncol. 2019;26:1604–12.

National Cancer Registrars Association (NCRA) Cancer registry manual principles and practices fourth edition. The CTR certification. https://www.ncra-usa.org/CTR. Accessed 22 Aug 2023.

National Cancer Database. https://www.facs.org/quality-programs/cancer-programs/national-cancer-database/about/. Accessed 14 Nov 2023.

Lerro CC, Robbins AS, Phillips JL, Stewart AK. Comparison of cases captured in the National cancer data base with those in population-based central cancer registries. Ann Surg Oncol. 2013;20:1759–65.

Bilimoria KY, Stewart AK, Winchester DP, Ko CY. The National Cancer Data Base: a powerful initiative to improve cancer care in the United States. Ann Surg Oncol. 2008;15:683–90.

SEER Cancer Registration and Training Modules. https://training.seer.cancer.gov/operations/standards/setters/. Accessed 14 Nov 2023.

SEER Site Recodes. https://seer.cancer.gov/siterecode/. Accessed 14 Nov 2023.

Islami F, Ward EM, Sung H, et al. Annual report to the nation on the status of cancer: part 1. National Cancer Statistics. J Natl Cancer Inst. 2021;113:1648–69.

Shi Q, You YN, Nelson H, et al. Cancer registries: a novel alternative to long-term clinical trial follow-up based on results of a comparative study. Clin Trials. 2010;7(6):686–95. https://doi.org/10.1177/1740774510380953.

Mallin K, Palis BE, Watroba N, et al. Completeness of American Cancer Registry treatment data: implications for quality-of-care research. J Am Coll Surg. 2013;216:428–37.

Lin CC, Virgo KS, Robbins AS, Jemal A, Ward EM. Comparison of comorbid medical conditions in the National Cancer Database and the SEER-Medicare Database. Ann Surg Oncol. 2016;23:4139–48.

Fritz A, Percy C, Jack A, Shanmugaratnam K, Sobin LH, Parkin DM, Whelan S, editors. International classification of diseases for oncology. 3rd ed. World Health Organization; 2000.

Surveillance, Epidemiology, and End Results (SEER) Program Coding and Staging Manual 2023. https://seer.cancer.gov/tools/codingmanuals/. Accessed 17 July 2023.

North American Association of Central Cancer Registries (NAACCR) SSDI/Grade Manual. https://www.naaccr.org/data-standards-data-dictionary/. Accessed 17 July 2023.

North American Association of Central Cancer Registries (NAACCR) Data Standards and Data Dictionary. https://www.naaccr.org/data-standards-data-dictionary/. Accessed 17 July 2023.

Amin MB, Edge S, Greene F, Byrd DR, Brookland RK, Washington MK, Gershenwald JE, Compton CC, Hess KR, Sullivan DC, Jessup JM, Brierley JD, Gaspar LE, Schilsky RL, Balch CM, Winchester DP, Asare EA, Madera M, Gress DM, Meyer LR, editors. AJCC Cancer Staging Manual. 8th ed. Springer International Publishing: American Joint Committee on Cancer; 2017.

Standards for Oncology Registry Entry (STORE 2022). https://www.facs.org/media/weqje4pk/store-2022-12102021-final.pdf. Accessed 17 July 2023.

Surveillance, Epidemiology, and End Results (SEER) SEER*Rx Drug Database. https://seer.cancer.gov/seertools/seerrx/. Accessed 17 July 2023.

Surveillance, Epidemiology, and End Results (SEER) Solid Tumor Rules. https://seer.cancer.gov/tools/codingmanuals/historical.html#solid. Accessed 17 July 2023.

CAP Protocol Templates. https://www.cap.org/protocols-and-guidelines/cancer-reporting-tools/cancer-protocol-templates. Accessed 14 Nov 2023.

North American Association of Central Cancer Registries (NAACCR) Standards for Cancer Registries, Standard Data Edits, volume IV. https://www.naaccr.org/standard-data-edits/. Accessed 17 July 2023.

Centers for Disease Control and Prevention National Program of Cancer Registries Edits, 2023. https://www.cdc.gov/cancer/npcr/tools/edits/index.htm. Accessed 14 Dec 2023.

American College of Surgeons v22b and v23b NCDB/RCRS edits and RCRS Data Submission Requirements, 2023. https://www.facs.org/media/qw5bqvew/v22b-and-v23b-ncdb-rcrs-edits-and-rcrs-data-submission-requirements-07112023.pdf. Accessed 17 July 2023.

SAS Academic Programs, 2023. https://sas.com. Accessed 14 Dec 2023.

Surveillance, Epidemiology, and End Results Program SEER*Stat Software. 2023. SEER*Stat Software (cancer.gov). Accessed 14 Dec 2023.

About US Cancer Statistics. https://www.cdc.gov/cancer/uscs/about/index.htm. Accessed 14 Nov 2023.

Fast Facts on US Hospitals, 2023. https://www.aha.org/statistics/fast-facts-us-hospitals. Accessed 21 Sept 2023.

Ethics declarations

Disclosure

The findings and conclusions in this article are those of the authors and do not necessarily represent the official position of the Centers for Disease Control and Prevention. Daniel J. Boffa, MD, MBA, FACS, received a stipend to attend a panel discussion from Iovance in May 2022. The remaining authors have no conflicts of interest.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Palis, B.E., Janczewski, L.M., Browner, A.E. et al. The National Cancer Database Conforms to the Standardized Framework for Registry and Data Quality. Ann Surg Oncol 31, 5546–5559 (2024). https://doi.org/10.1245/s10434-024-15393-8

DOI: https://doi.org/10.1245/s10434-024-15393-8