Abstract

Automatic machine learning of empirical models from experimental data has recently become possible as a result of the increased availability of computational power and dedicated algorithms. Despite the successes of non-parametric inference and neural-network-based inference for empirical modelling, a physical interpretation of the results often remains challenging. Here, we focus on direct inference of governing differential equations from data, which can be formulated as a linear inverse problem. A Bayesian framework with a Laplacian prior distribution is employed for finding sparse solutions efficiently. The superior accuracy and robustness of the method are demonstrated for various cases, including ordinary, partial, and stochastic differential equations. Furthermore, we develop an active learning procedure for the automated discovery of stochastic differential equations. In this procedure, learning of the unknown dynamical equations is coupled to the application of perturbations to the measured system in a feedback loop. We show that active learning can significantly improve the inference of global models for systems with multiple energetic minima.


Introduction

Throughout the natural sciences, mathematical models are frequently formulated as differential equations, such as stochastic, ordinary, and partial differential equations (SDEs, ODEs, and PDEs). In physics, governing differential equations are often derived from first principles, for instance, from conservation of energy and momentum and from thermodynamic considerations. However, for complex systems studied, e.g., in biophysics, climate science, and neuroscience, the first principles determining the system properties are typically not fully known. This is the case, for example, because such systems are in a driven non-equilibrium state, are highly nonlinear, or exhibit dynamics on multiple scales that are not well separated. In these cases, one can resort to phenomenological, effective descriptions that may result from some level of coarse graining and are based on experimental data. Recently, the increased availability of computational power has made it possible to construct such models in an automated fashion, which is known as data-driven discovery of governing equations.

Various approaches have been developed for inferring the differential equations that govern a nonlinear dynamical system directly from measured data1,2,3,4,5,6. In a popular approach called “symbolic regression”, function libraries are employed to automatically extract the terms of the governing equation that best represent the measured data according to some optimization criterion1,2. Recently, the use of sparse regression techniques for symbolic regression has received considerable scientific attention3,4. In symbolic regression, a physical quantity z, taken here to be a scalar for illustration, is assumed to obey an equation of the general form

\[ {\check{z}}={\mathcal{F}}(z,\,{\textbf{x}},\,t,\,c) \tag{1} \]

where \({\check{z}}\) can be, e.g., a time derivative \({\check{z}}=\frac{\partial z}{\partial t}\) for describing an ODE. \({\mathcal{F}}(z,\,{\textbf{x}},\,t,\,c)\) is an unknown function whose arguments \({\textbf{x}}\) represent space coordinates while t represents time and c is a constant parameter. Vectors and arrays are denoted by bold letters. The aim of symbolic regression is to estimate the function \({\mathcal{F}}(\ldots )\) from a data set \({\textbf{z}}\), which could be a measured sequence of values of z at different time-space coordinates. The vector \(\check{\textbf{z}}\) is either measured or estimated from \(\textbf{z}\), e.g., with a discrete difference scheme. For inference of \({\mathcal{F}}(\cdots )\), a so-called “library” matrix \({\varvec{\Theta }}({\varvec{z}})\) is constructed from a suitable set of functions of \({\textbf{z}}\), e.g., various powers of \({\textbf{z}}\), combinations of partial derivatives, or trigonometric functions. Assuming that the governing Eq. (1) can be expressed as a linear superposition of library terms, we write

\[ {\check{\textbf{z}}}={\varvec{\Theta }}({\textbf{z}})\,{\varvec{\xi }} \tag{2} \]

where \({\varvec{\xi }}\) is a weight vector. The inference of the governing equation is thus reduced to a regression problem for the optimal \({\varvec{\xi }}\), given \({\check{\textbf{z}}}\) and \({\varvec{\Theta }}({\varvec{z}})\). In general, solving the inverse problem in Eq. (2) is not straightforward. The matrix \({\varvec{\Theta }}\) has to contain many candidate terms, some of which are often strongly correlated, so \({\varvec{\Theta }}\) can have a large condition number \(\kappa ({\varvec{\Theta }})\).
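The following minimal NumPy sketch makes Eq. (2) concrete. It builds a monomial library from scalar data and solves for \({\varvec{\xi }}\) by ordinary least squares. Several choices are illustrative assumptions and are not part of the method described here:

- the logistic test equation \(\dot{z}=z-z^{2}\);
- the exact derivative used in place of \({\check{\textbf{z}}}\);
- the helper name `polynomial_library`.

The condition number \(\kappa ({\varvec{\Theta }})\) is printed so that the conditioning of the library can be inspected.

```python
import numpy as np

def polynomial_library(z, max_degree=3):
    """Hypothetical helper: library Theta(z) with columns [1, z, z^2, ..., z^max_degree]."""
    return np.column_stack([z**k for k in range(max_degree + 1)])

# Illustrative data (assumption): logistic ODE dz/dt = z - z^2 with a closed-form solution.
t = np.linspace(0.0, 10.0, 500)
z0 = 0.1
z = 1.0 / (1.0 + (1.0 / z0 - 1.0) * np.exp(-t))
z_check = z - z**2  # exact derivative, standing in for a measured or estimated one

Theta = polynomial_library(z)
xi, *_ = np.linalg.lstsq(Theta, z_check, rcond=None)  # plain least-squares solution of Eq. (2)
kappa = np.linalg.cond(Theta)

print("xi for [1, z, z^2, z^3]:", np.round(xi, 6))
print(f"condition number kappa(Theta) = {kappa:.1e}")
```

With noise-free derivatives, plain least squares isolates the two active terms. The sparsity-promoting methods discussed below matter once \({\check{\textbf{z}}}\) is noisy and the library is large.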

In Ref.3, a method called sparse identification of nonlinear dynamics (SINDy) has been proposed. The method works iteratively. At each iteration, \({\varvec{\xi }}\) is first obtained from a least-squares optimization involving Eq. (2), and \({\varvec{\xi }}\) is subsequently thresholded such that values smaller than a cutoff \(\varkappa\) are set to zero. The iteration is continued until convergence conditions are satisfied. SINDy has been shown to be a powerful and versatile method that is applicable for inference of various types of ODEs3. However, the method requires the user to select the thresholds \(\varkappa\) manually. For the identification of PDEs, an alternative algorithm called train sequential threshold ridge regression (TrainSTRidge) has been described in Ref.4. This method is a variant of a ridge-regression procedure called Sequential Threshold Ridge regression (STRidge). In STRidge, the vector \({\varvec{\xi }}\) is first calculated by ridge regression with a fixed regularization parameter. Then, all elements of \({\varvec{\xi }}\) with an absolute value smaller than a threshold \(\varkappa\) are set to zero. In STRidge, both the regularization parameter and the threshold \(\varkappa\) need to be provided by the user. TrainSTRidge4 employs L0 regularization and a training step to determine the threshold \(\varkappa\) automatically, while the regularization parameter still has to be set by an expert user. Conversely, a method called threshold sparse Bayesian regression, which has also been employed for the identification of PDEs7, requires no regularization parameter as input, but some thresholds still have to be provided by the user.
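The SINDy iteration described above can be sketched in a few lines of NumPy. This is a minimal illustration of sequentially thresholded least squares, not the reference implementation of Ref.3. The argument `threshold` plays the role of \(\varkappa\). Its value and the noisy logistic test data are assumptions chosen for illustration.

```python
import numpy as np

def stlsq(Theta, z_check, threshold, n_iter=10):
    """Sequentially thresholded least squares: fit, zero out small weights, refit the rest."""
    xi, *_ = np.linalg.lstsq(Theta, z_check, rcond=None)
    for _ in range(n_iter):
        small = np.abs(xi) < threshold      # weights below the cutoff are set to zero
        xi[small] = 0.0
        keep = ~small
        if not keep.any():
            break
        xi[keep], *_ = np.linalg.lstsq(Theta[:, keep], z_check, rcond=None)  # refit survivors
    return xi

rng = np.random.default_rng(0)
t = np.linspace(0.0, 10.0, 500)
z = 1.0 / (1.0 + 9.0 * np.exp(-t))                       # logistic solution with z(0) = 0.1
z_check = z - z**2 + 1e-4 * rng.standard_normal(t.size)   # derivative with small additive noise
Theta = np.column_stack([z**k for k in range(4)])          # library [1, z, z^2, z^3]

xi = stlsq(Theta, z_check, threshold=0.1)
print(xi)
```

The result depends on the user-chosen `threshold`. This is the manual tuning step that the method proposed in this work is meant to avoid.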

The first aim of this work is to provide a method for solving the inverse problem associated with the data-driven discovery of governing physical equations, Eq. (2), by combining a Bayesian approach with an automatic thresholding procedure. We call this method automatic threshold sparse Bayesian learning (ATSBL). Our algorithm does not require any manual fine-tuning of parameters to correctly infer governing differential equations from measured data. The method can be employed to identify ODEs, PDEs, and SDEs.

The case of SDEs requires particular attention, since the above-mentioned methods of equation inference are mainly designed for deterministic processes with a moderate amount of additive noise. The question of how to reconstruct the force fields of stochastic processes has been investigated in numerous studies, e.g., for applications in soft matter physics and biophysics5,8,9,10,11,12,13. Recently, sophisticated methods have been proposed for dealing with discretization and the inference problem in the context of SDEs for second-order dynamics14,15,16,17. Here, we focus on the use of symbolic regression for the inference of analytical expressions of SDEs of the overdamped Langevin type. One approach to symbolic regression in this context is based on dividing the phase space into small hypercubes, which are called bins in the one-dimensional case. Average values of the state variables and of their derivatives are estimated in each hypercube, and the regression is defined with respect to these averages5. This kind of averaging generally depends on the chosen discretization and may lead to a substantial loss of information. Furthermore, applying this method to non-stationary processes requires a large ensemble of trajectories and considerable numerical effort to sample the time-dependent probability distribution in phase space. These difficulties raise two questions. First, to what extent can the above-mentioned inference tools be applied to noisy data without ad hoc averaging? Second, how can the robustness of the inference methods be improved in this context?
We show that imposing Laplacian or Gaussian prior distributions on the inferred models is generally sufficient to identify the correct SDEs directly from trajectories, without phase-space binning. We also compare the accuracy of the results obtained with the two types of prior distributions. The Laplacian prior performs particularly well in several examples, including Brownian motion in time-dependent potentials.

A major challenge for the inference of SDEs is that available data often sample the phase space very inhomogeneously. This problem arises, e.g., for systems whose long-term dynamics is dominated by transitions between different, locally stable states, while the short-term dynamics is dominated by fluctuations around the individual stable states. In such cases, the inferred equation may be meaningful only locally, i.e., within the region covered by the measured trajectory. It may then be impossible a priori to infer the global dynamics from a given data set. To enable automatic inference of a global model under these conditions, we consider how to design an external perturbation to the system, also called a “control force”. The control force should drive the state variables to explore the full phase space within a shorter sampling time. Established umbrella sampling routines used for this purpose rely on quadratic control forces and involve non-trivial design steps for the control force18,19,20,21. See, e.g., Refs.22,23 for alternative approaches. This kind of methodology has proved useful, e.g., in computational studies of nucleation24 and growth25 processes. We develop an alternative adaptive control technique that recursively infers the governing equation and adapts the external control based solely on the inferred equations. Each adaptation step consists of inferring the governing equation and then updating the control force such that it directly opposes the inferred force. No parameters need to be tuned to design the control in this adaptive scheme. Using the adaptive control scheme, we demonstrate a substantial improvement in the inference of SDEs for several different simulations of Brownian motion.

This work is organized as follows. The “Methods” section provides details on the construction of function libraries and on casting the inference problem as a system of linear equations. It also summarizes the inference algorithm and explains how Laplacian prior distributions can be used to impose the sparsity condition on the inferred models. In the “Results” section, the performance of the described method is illustrated by means of numerical examples and compared with previously described methods. Finally, an adaptive sampling technique for improving the inference of SDEs is proposed and its usefulness is demonstrated.

Methods for data-driven identification of differential equations

Ordinary and partial differential equations

Measurement data from a system of interest is presumed to be recorded as a time series of states, for example, a time-dependent position vector. In a data-driven approach to model a system, the data is used to automatically infer the a priori unknown dynamical equations that govern the observed process. In this work, inference is based on libraries of candidate functions for the governing equations. The data used for inference of differential equations is assumed to contain additive noise but no systematic errors.

For inference of ODEs, we generalize the introductory example for a scalar variable z, Eq. (1), to a system with M components \(\ell \in \{1\ldots M\}\), all of which are assumed to be sampled at the same regular time interval. To distinguish discrete measurements from continuous variables, a subscript notation is employed in the following. The \(\ell\)-th component measured in an ordered time series \([t_{1},\dots ,t_{N}]\) is written as \({\textbf{z}}_{\ell } =[z_{\ell ,t_{1}},\;z_{\ell ,t_{2}},\;\dots ,\;z_{\ell ,t_{N}}]\). Vectors or arrays containing multiple variable measurements, e.g., at different time points, are denoted with bold letters. The whole data set can then be written in matrix form as

Our approach also requires derivatives of the measured data. For simplicity, finite-difference approximations are used throughout this work. Approximate derivatives are denoted by the operator \({\mathcal{D}}_{\ldots }\), which here represents a fourth-order central finite-difference scheme. For example, the time derivative of the \(\ell\)-th state component, \({\textbf{z}}_{\ell }\), at the i-th time point \(t_{i}\) is written as \({\dot{z}_{\ell }(t)}|_{t=t_{i}} \approx {\mathcal{D}}_{t}{\textbf{z}}_{\ell }|_{t=t_{i}}\). For the entire data set, we write the time derivative as

A governing ODE for the vector containing the trajectory of the \(\ell\)-th state component may be written as a linear combination of elementary functions of all \(\{{\textbf{z}}_{\ell '}\}\), e.g., as

where the indices \(\ell '\), \(\ell ''\), and \(\ell '''\) cover the M system dimensions, \(\odot\) denotes an element-wise product, and c represents a constant. \({\mathcal{F}}_{\ell }\) can also depend on time, but we focus mostly on autonomous differential equations in the following. Since \({\mathcal{F}}_{\ell }(\cdot )\) represents a linear combination of functions that can be calculated from the data, \({\mathcal{F}}_{\ell }(\cdot )\) can be expressed with the help of a library matrix \({\varvec{\Theta }}({\textbf{Z}})\) multiplied with a sparse vector \({\varvec{\xi }}_{\ell }\). Thus, we obtain for Eq. (3) in discretized form
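
\[ {\mathcal{D}}_{t}{\textbf{z}}_{\ell }={\varvec{\Theta }}({\textbf{Z}})\,{\varvec{\xi }}_{\ell } \tag{4} \]
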
where the terms of the library matrix \({\varvec{\Theta }}({\textbf{Z}})\) are calculated from the measurement data by evaluating the functions of \(\{{\textbf{z}}_{\ell '}\}\), and the non-zero elements of \({\varvec{\xi }}_{\ell }\) characterize the dynamics of the system. Since Eq. (4) refers to ODEs, the library on the right-hand side of the equation contains no derivative terms. Given \({\mathcal{D}}_{t}{{\textbf{z}}}_{\ell }\) and \({\varvec{\Theta }}({\textbf{Z}})\), the aim is to calculate a sparse vector \({\varvec{\xi }}_{\ell }\) with a minimal number of non-zero coefficients. These coefficients correspond to the minimal number of terms necessary to describe the dynamics.
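For several components, the library columns in Eq. (4) are element-wise products of the measured series. The following NumPy sketch uses a hypothetical helper, `monomial_library`, that generates all monomials up to a given degree. It then solves Eq. (4) for every component at once with least squares. The test data, a damped linear oscillator with closed-form derivatives, are an illustrative assumption.

```python
import itertools
import numpy as np

def monomial_library(Z, max_degree=2):
    """Hypothetical helper: constant column plus all element-wise products of the M
    state components up to max_degree. Z has shape (N, M): N time points, M components."""
    N, M = Z.shape
    columns, names = [np.ones(N)], ["1"]
    for degree in range(1, max_degree + 1):
        for combo in itertools.combinations_with_replacement(range(M), degree):
            columns.append(np.prod(Z[:, list(combo)], axis=1))  # element-wise product
            names.append("*".join(f"z{l + 1}" for l in combo))
    return np.column_stack(columns), names

# Illustrative data (assumption): z1 = exp(-0.1 t) cos t, z2 = exp(-0.1 t) sin t,
# so that dz1/dt = -0.1 z1 - z2 and dz2/dt = z1 - 0.1 z2.
t = np.linspace(0.0, 20.0, 1000)
Z = np.column_stack([np.exp(-0.1 * t) * np.cos(t), np.exp(-0.1 * t) * np.sin(t)])
dZ = np.column_stack([-0.1 * Z[:, 0] - Z[:, 1], Z[:, 0] - 0.1 * Z[:, 1]])

Theta, names = monomial_library(Z)
Xi, *_ = np.linalg.lstsq(Theta, dZ, rcond=None)   # column l holds xi_l for component l
for name, row in zip(names, Xi):
    print(f"{name:>6}: {row.round(6)}")
```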

For inference of PDEs, the library matrix \({\varvec{\Theta }}\) has to contain partial-derivative terms. Thus, data is required that allows the numerical estimation of derivative expressions with respect to two or more variables, for example, with respect to time and space. Usually, measurements therefore consist of discrete space-time series recordings of system variables. For example, an array \({\textbf{Z}}^{P}\) representing the M-dimensional state vector that is measured at N time points in R positions of one space coordinate x is written as

With a finite-difference approximation, vectors of time derivatives of every component, \({\mathcal{D}}_{t}{\textbf{z}}_{\ell }\), and various orders of x derivatives are calculated, for example, \({\mathcal{D}}_{x}{\textbf{Z}}^{P},\;{\mathcal{D}}_{xx}{\textbf{Z}}^{P},\;\dots\). These derivative terms are added to the library \({\varvec{\Theta }}^{P}\). Like for ODEs, inference of the dynamical equation governing the component \({\textbf{z}}_{\ell }\) is then based on the linear equation
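
\[ {\mathcal{D}}_{t}{\textbf{z}}_{\ell }={\varvec{\Theta }}^{P}({\textbf{Z}}^{P})\,{\varvec{\xi }}_{\ell } \tag{5} \]
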
with a sparse coefficient vector \({\varvec{\xi }}_{\ell }\) to be determined.
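The following minimal NumPy sketch shows how the PDE library \({\varvec{\Theta }}^{P}\) might be assembled from space-time data. For brevity, it uses the second-order differences of `np.gradient` instead of the fourth-order scheme of this work. The synthetic data are an exact solution of the diffusion equation \(\partial _{t}u=\partial _{xx}u\). The library terms and grid sizes are illustrative choices.

```python
import numpy as np

# Synthetic data (assumption): two decaying Fourier modes, an exact solution of u_t = u_xx.
x = np.linspace(0.0, 2.0 * np.pi, 256, endpoint=False)
t = np.linspace(0.0, 1.0, 201)
X, T = np.meshgrid(x, t, indexing="ij")          # arrays of shape (R, N): space x time
U = np.exp(-T) * np.sin(X) + np.exp(-4.0 * T) * np.sin(2.0 * X)

dx, dt = x[1] - x[0], t[1] - t[0]
U_t = np.gradient(U, dt, axis=1)
U_x = np.gradient(U, dx, axis=0)
U_xx = np.gradient(U_x, dx, axis=0)

# Drop two grid points at each edge, where np.gradient uses one-sided differences.
inner = (slice(2, -2), slice(2, -2))
u, u_x, u_xx, u_t = (A[inner].ravel() for A in (U, U_x, U_xx, U_t))

Theta_P = np.column_stack([u, u_x, u_xx, u * u_x])
names = ["u", "u_x", "u_xx", "u*u_x"]
xi, *_ = np.linalg.lstsq(Theta_P, u_t, rcond=None)   # Eq. (5) for a single component
for name, c in zip(names, xi):
    print(f"{name:>6}: {c:+.4f}")
```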

Note that robust estimation of derivatives from noisy data is an important prerequisite for data-driven inference of ODEs and PDEs in this framework. The fourth-order finite-difference approximations employed here may be supplemented or replaced by other methods, including denoising procedures and Gaussian-process regression models.
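The fourth-order central difference operator \({\mathcal{D}}_{t}\) can be sketched as follows for a uniformly sampled NumPy array. The stencil \((-z_{i+2}+8z_{i+1}-8z_{i-1}+z_{i-2})/(12h)\) is the standard fourth-order central scheme. Leaving the two points at each end undefined is a simplifying choice made here. The example compares the scheme with the second-order scheme of `np.gradient`. It also shows that differentiation amplifies additive noise.

```python
import numpy as np

def central_diff_4(z, h):
    """Fourth-order central difference dz/dt at interior points; the two points at each end,
    where the five-point stencil does not fit, are returned as NaN."""
    dz = np.full(z.shape, np.nan)
    dz[2:-2] = (-z[4:] + 8.0 * z[3:-1] - 8.0 * z[1:-3] + z[:-4]) / (12.0 * h)
    return dz

h = 0.01
t = np.arange(0.0, 2.0 * np.pi, h)
z = np.sin(t)
exact = np.cos(t)

err4 = np.max(np.abs(central_diff_4(z, h) - exact)[2:-2])
err2 = np.max(np.abs(np.gradient(z, h) - exact)[2:-2])   # second-order central differences
print(f"4th order: {err4:.1e}, 2nd order: {err2:.1e}")

# Noise of amplitude 1e-3 is amplified by roughly 1/h in the derivative estimate.
noisy = z + 1e-3 * np.random.default_rng(0).standard_normal(z.size)
err_noisy = np.max(np.abs(central_diff_4(noisy, h) - exact)[2:-2])
print(f"4th order with noise: {err_noisy:.1e}")
```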

Stochastic differential equations

We focus on Langevin-type SDEs to describe the time evolution of continuous, real state variables \({\textbf{X}}(t)\), representing, e.g., the position of a Brownian particle in space26. Trajectories, denoted by \({\textbf{X}}(t)\), are time-ordered sequences of values of space coordinates \({\textbf{x}}\). The general form of the considered SDEs is
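
\[ {\textrm{d}}X_{\ell }(t)=g_{\ell }({\textbf{X}}(t),t)\,{\textrm{d}}t+h_{\ell ,\ell '}({\textbf{X}}(t),t)\,{\textrm{d}}W_{\ell '}(t) \tag{6} \]
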
where we employ the Einstein summation convention and \(X_{\ell }(t)\) denotes the \(\ell\)-th component of the system state at time t. The trajectories \({\textbf{X}}\) are calculated using the Itô interpretation of stochastic integrals26. The \(g_{\ell }({\textbf{X}}(t),t)\) represent the deterministic parts of the differential equations. For example, for a Brownian particle undergoing overdamped motion in the presence of conservative forces with a potential \(U({\textbf{x}},t)\), we have \(g_{\ell }({\textbf{X}},t)= -\nabla _{x_{\ell }} U({\textbf{x}},t)|_{{\textbf{x}}={\textbf{X}}(t)}\). The stochastic perturbations are assumed to result from a Wiener process with a noise source \(\Gamma _{\ell }(t)\) and \({\textrm{d}} W_{\ell } =\Gamma _{\ell }(t)\,{\textrm{d}} t\). The noise is assumed to obey a Gaussian distribution with vanishing mean and \(\delta\)-correlated covariance as
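
\[ \langle \Gamma _{\ell }(t)\rangle =0,\qquad \langle \Gamma _{\ell }(t)\,\Gamma _{\ell '}(t')\rangle =\delta _{\ell \ell '}\,\delta (t-t'), \tag{7} \]
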
respectively. The coefficient matrix \(h_{\ell ,\ell '}\) in Eq. (6) scales the magnitude of the stochastic perturbations and is assumed to be diagonal, for simplicity. Further noise sources, e.g., resulting from an experimental measurement of a trajectory, are not explicitly considered throughout this work.
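Trajectories of Eq. (6) can be generated with the Euler–Maruyama scheme. Because the scheme evaluates the drift at the beginning of each step, it is consistent with the Itô interpretation. The sketch below is restricted to one component with a constant noise amplitude h, an assumption that keeps it short. The Ornstein–Uhlenbeck drift \(g(x)=-kx\) is chosen only because its stationary variance \(h^{2}/(2k)\) is known in closed form.

```python
import numpy as np

def euler_maruyama(drift, h, x0, dt, n_steps, rng):
    """Euler-Maruyama integration of the scalar Ito SDE dX = g(X) dt + h dW with constant h."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    dW = rng.normal(0.0, np.sqrt(dt), size=n_steps)   # Wiener increments with variance dt
    for i in range(n_steps):
        x[i + 1] = x[i] + drift(x[i]) * dt + h * dW[i]  # drift evaluated at the start point
    return x

# Illustrative example (assumption): Ornstein-Uhlenbeck process g(x) = -k x.
k, h, dt = 1.0, 1.0, 0.01
rng = np.random.default_rng(1)
X = euler_maruyama(lambda x: -k * x, h, 0.0, dt, 200_000, rng)
print(f"sample variance {X.var():.3f} vs. h^2/(2k) = {h**2 / (2 * k):.3f}")
```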

The Fokker–Planck equation that corresponds to Eq. (6) and describes the evolution of a probability density function \(f({\textbf{x}},t)\) is given by

\[ \frac{\partial f({\textbf{x}},t)}{\partial t}=\hat{L}f({\textbf{x}},t) \tag{8} \]

where the Fokker–Planck operator \(\hat{L}\) acting on \(f({\textbf{x}},t)\) has the form
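
\[ \hat{L}f({\textbf{x}},t)=-\frac{\partial }{\partial x_{\ell }}\left[ D_{\ell }^{(1)}({\textbf{x}},t)\,f({\textbf{x}},t)\right] +\frac{\partial ^{2}}{\partial x_{\ell }\,\partial x_{\ell '}}\left[ D_{\ell ,\ell '}^{(2)}({\textbf{x}},t)\,f({\textbf{x}},t)\right] \tag{9} \]
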
The functions \(D_{\ell }^{(1)}({\textbf{x}},t)\) and \(D_{\ell ,\ell '}^{(2)}({\textbf{x}},t)\) are called Kramers–Moyal (KM) coefficients or drift and diffusion coefficients. Under the assumption of perfect knowledge of the trajectories \({\textbf{X}}(t)\), the KM coefficients can be calculated from the incremental changes \(\Delta X_{\ell }(t) \equiv X_{\ell }(t+\tau )-X_{\ell }(t)\) in an infinitesimal time interval \(\tau\) as
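
\[ D_{\ell }^{(1)}({\textbf{x}},t)=\lim _{\tau \rightarrow 0}\frac{1}{\tau }\langle \Delta X_{\ell }(t)\rangle _{{\textbf{X}}(t)={\textbf{x}}},\qquad D_{\ell ,\ell '}^{(2)}({\textbf{x}},t)=\lim _{\tau \rightarrow 0}\frac{1}{2\tau }\langle \Delta X_{\ell }(t)\,\Delta X_{\ell '}(t)\rangle _{{\textbf{X}}(t)={\textbf{x}}} \tag{10} \]
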
where \(\langle \ldots \rangle _{{\textbf{X}}(t)={\textbf{x}}}\) denotes averages over the stochastic trajectories. The KM coefficients are related to the functions \(g_{\ell }\) and \(h_{\ell ,\ell '}\) in the Langevin equation as
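
\[ D_{\ell }^{(1)}({\textbf{x}},t)=g_{\ell }({\textbf{x}},t),\qquad D_{\ell ,\ell '}^{(2)}({\textbf{x}},t)=\frac{1}{2}\,h_{\ell ,k}({\textbf{x}},t)\,h_{\ell ',k}({\textbf{x}},t) \tag{11} \]
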
We consider only diagonal diffusion matrices, but the KM coefficients can depend explicitly on space and time. To estimate the coefficients, M-dimensional trajectories \(X_{\ell ,i}\), \(\ell \in \{1,\dots ,M\}\), are sampled with a small, regular time step s at time points \(i \in \{1,\dots ,N\}\). Trajectory samples \(X_{\ell ,i}\) are distinguished from the original stochastic variable \(X_{\ell }(t)\) by the index i, representing the i-th time point. From these samples, two new sequences are constructed as
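
\[ F_{\ell ,i}^{(1)}=\frac{X_{\ell ,i+1}-X_{\ell ,i}}{s},\qquad F_{\ell ,i}^{(2)}=\frac{(X_{\ell ,i+1}-X_{\ell ,i})^{2}}{2s} \tag{12} \]
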
where s is a small time step5. The \(\varvec{F}_\ell ^{(1)}\) and \(\varvec{F}_\ell ^{(2)}\) are constructed with sample trajectories from random processes that are not differentiable. Use of these quantities for estimation of the KM coefficients in the spirit of Eq. (10) makes it necessary to first sample the stochastic process extensively to then approximate the average \(\langle \ldots \rangle _{{\textbf{X}}(t)={\textbf{x}}}\) over different realizations of the process.
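The sequences of Eq. (12) are simple to compute. The following NumPy sketch uses an Ornstein–Uhlenbeck test trajectory with \(D^{(1)}(x)=-x\) and \(D^{(2)}=0.5\), an illustrative choice. It also illustrates the point made above: individual values of \(F^{(1)}_{i}\) fluctuate strongly, with a standard deviation of order \(h/\sqrt{s}\). A conditional average in the spirit of Eq. (10) therefore needs many samples near the point of interest.

```python
import numpy as np

def km_sequences(X, s):
    """F1_i = (X_{i+1} - X_i) / s and F2_i = (X_{i+1} - X_i)^2 / (2 s), one value per step."""
    dX = np.diff(X)
    return dX / s, dX**2 / (2.0 * s)

# Illustrative data (assumption): Ornstein-Uhlenbeck trajectory with g(x) = -x and h = 1.
rng = np.random.default_rng(2)
s, n = 0.01, 200_000
dW = rng.normal(0.0, np.sqrt(s), size=n)
X = np.empty(n + 1)
X[0] = 0.0
for i in range(n):
    X[i + 1] = X[i] - X[i] * s + dW[i]

F1, F2 = km_sequences(X, s)
x_start = X[:-1]                       # F1_i and F2_i belong to X_i at the start of the step
near = np.abs(x_start - 1.0) < 0.05    # samples close to x = 1
print(f"single-sample std of F1: {F1.std():.1f}")
print(f"<F1 | X near 1> = {F1[near].mean():.2f} (D1(1) = -1)")
print(f"<F2> = {F2.mean():.3f} (D2 = 0.5)")
```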

Note that in basing the estimation on Eq. (10), we are neglecting two problems that occur for time series measured in the “real world”. Firstly, measurement noise may render the assumption of a Markov process invalid on small scales27. Secondly, the finite sampling interval s cannot be made arbitrarily small in practice and therefore the estimated KM coefficients deviate systematically from the true coefficients28,29. Procedures for correcting finite-sampling-time errors are available for various stochastic processes30,31,32. While the focus of this work is on the inference problem for governing equations, finite sampling-time corrections should be employed in practical applications.

In the following, we employ two different methods for estimating the drift and diffusion coefficients. Firstly, a method is described in the next subsection that is based on binning of the data in phase space to produce histograms. Secondly, we compare the results obtained from data binning with results from direct estimation of the KM coefficients.

Estimation of KM coefficients from binned data

A classical method for the characterization of stationary, Markovian time series resulting from Langevin dynamics is based on binning of the trajectory data in space intervals5,33,34. For this approach, we focus on problems with only one space dimension (\(M=1\)). To estimate probability distributions, the data from multiple sample trajectories of the stochastic process is grouped into Q bins and the values in each bin are averaged as

where \(\bar{X}_{k}\), \(\bar{F}^{(1)}_{k}\), and \(\bar{F}^{(2)}_{k}\) are bin-wise averages. The estimated probability for finding trajectory parts in the k-th bin, \(p_{k}\), is normalized as \(\sum _{k=1}^{Q}p_{k}=1\) with \(0\le p_{k}\le 1\). Histograms resulting from data binning directly yield the curves for the drift and diffusion coefficients, see Refs.5,33. The equations for the KM coefficients, \(D^{(1)}(x)\) and \(D^{(2)}(x)\), are inferred by finding analytical expressions for \({\bar{\textbf{F}}}^{(1,2)}\) as functions of \({\bar{\textbf{X}}}\). For this purpose, a library \({\varvec{\Theta }} \in {\mathbb {R}}^{Q\times K}\) is constructed from the binned data, where Q is the number of bins and K is the number of terms in the library. For example, \({\varvec{\Theta }}({\bar{\textbf{X}}})=[{\textbf{1}},\;{\bar{{\textbf{X}}}},\;{\bar{\textbf{X}}}{\odot {\bar{\textbf{X}}}},\;{\bar{{\textbf{X}}}}\odot \bar{{\textbf{X}}}\odot {\bar{\textbf{X}}},\; \sin ({\bar{\textbf{X}}}),\; \dots ]\), where \(\odot\) again denotes an element-wise product. If the library contains all the function expressions that are necessary to describe the KM coefficients analytically, the governing equations can be written as
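
\[ {\bar{\textbf{F}}}^{(1)}={\varvec{\Theta }}({\bar{\textbf{X}}})\,{\textbf{W}}^{(1)} \tag{14a} \]
\[ {\bar{\textbf{F}}}^{(2)}={\varvec{\Theta }}({\bar{\textbf{X}}})\,{\textbf{W}}^{(2)} \tag{14b} \]
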
where \({\textbf{W}}^{(1)}\) and \({\textbf{W}}^{(2)}\) are two sparse vectors whose non-zero entries correspond to the library terms to be included in the sought-for analytical expressions for the KM coefficients. Equation (14a) yields \(D^{(1)}(x)\) and Eq. (14b) yields \(D^{(2)}(x)\). The inverse problems of finding optimal \({\textbf{W}}^{(1,2)}\) in Eq. (14) have the same form as the problem in Eq. (2).

The binning of trajectories can produce significant errors in sparsely sampled regions, both in the interior and at the boundaries of the sampled phase space. We propose that the identification of SDEs can be improved by removing or filtering bins with high uncertainty. To substantiate this suggestion, we implement the inference procedure for unfiltered histograms. We additionally implement a straightforward extension that fixes a small probability threshold, below which all data are discarded. While the probability threshold can be determined in different ways, we employ here an automatic heuristic that was originally designed for edge detection in images35. The procedure described in Ref.35 divides the data according to probability thresholds that maximize the Shannon and the Tsallis entropy, respectively. Maximization of the Shannon entropy produces thresholds that divide the data into “foreground” and “background”, corresponding to signal-dominated and noise-dominated phase-space regions, respectively. The threshold value determining the “background” is then refined in a second step by maximizing the Tsallis entropy. The pseudo-additivity of the Tsallis entropy reportedly improves the analysis of data containing long-range correlations, see also Ref.36. While we found this method for determining a probability threshold useful in practice, its theoretical underpinnings are, to our knowledge, not entirely clear. Thus, a manual selection of the probability threshold based on the results may be preferable in some cases.
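The binning estimator and the probability filter can be sketched as follows in NumPy. The bin-wise averages follow the description of Eq. (13) with Q equal-width bins. The following choices are illustrative assumptions only:

- the cutoff `p_min` is a fixed, hypothetical value rather than the Shannon/Tsallis entropy heuristic of Ref.35;
- the library is restricted to \([{\textbf{1}},\;{\bar{\textbf{X}}}]\);
- the test process is an Ornstein–Uhlenbeck process.

```python
import numpy as np

def binned_km(X, s, Q=40):
    """Bin-wise averages (X_k, F1_k, F2_k) over Q equal-width bins and bin probabilities p_k."""
    x, dX = X[:-1], np.diff(X)
    F1, F2 = dX / s, dX**2 / (2.0 * s)
    edges = np.linspace(x.min(), x.max(), Q + 1)
    k = np.clip(np.digitize(x, edges) - 1, 0, Q - 1)   # bin index of every sample
    counts = np.bincount(k, minlength=Q)
    occ = counts > 0                                    # skip empty bins
    c = counts[occ]
    X_bar = np.bincount(k, weights=x, minlength=Q)[occ] / c
    F1_bar = np.bincount(k, weights=F1, minlength=Q)[occ] / c
    F2_bar = np.bincount(k, weights=F2, minlength=Q)[occ] / c
    return X_bar, F1_bar, F2_bar, c / counts.sum()

# Illustrative data (assumption): Ornstein-Uhlenbeck process with D1(x) = -x and D2 = 0.5.
rng = np.random.default_rng(3)
s, n = 0.01, 200_000
dW = rng.normal(0.0, np.sqrt(s), size=n)
X = np.empty(n + 1)
X[0] = 0.0
for i in range(n):
    X[i + 1] = X[i] - X[i] * s + dW[i]

X_bar, F1_bar, F2_bar, p = binned_km(X, s)
p_min = 1e-3                     # hypothetical fixed cutoff instead of the entropy heuristic
keep = p >= p_min
Theta = np.column_stack([np.ones(keep.sum()), X_bar[keep]])   # library [1, X_bar]
W1, *_ = np.linalg.lstsq(Theta, F1_bar[keep], rcond=None)     # Eq. (14a)
W2, *_ = np.linalg.lstsq(Theta, F2_bar[keep], rcond=None)     # Eq. (14b)
print(f"{keep.sum()} of {p.size} bins kept; W1 = {W1.round(2)}, W2 = {W2.round(3)}")
```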

Estimation of KM coefficients without data binning

A more direct approach for estimating the KM coefficients uses the trajectories \({\textbf{F}}^{(1)}_{\ell }\) and \({\textbf{F}}^{(2)}_{\ell }\) without binning or filtering. Since we do not intend to study transient initial dynamics, we mostly use a single, long trajectory generated from the stochastic process as input data. For inference of the KM coefficients from the space-time trajectories, we construct a library \({\varvec{\Theta }} \in {\mathbb {R}}^{N\times K}\), where N is the length of the trajectory and K is the number of terms in the library. For example, \({\varvec{\Theta }}(\{{\textbf{X}}_{\ell '}\})=[{\textbf{1}},\;{\textbf{X}}_{1},\;\ldots ,{\textbf{X}}_{M},\;{\textbf{X}}_{1}\odot {\textbf{X}}_{2},\; \dots ,\; \sin ({\textbf{X}}_{1}),\ldots ]\), where \(\ell '\) covers all M components of the stochastic process. Note that the library is constructed such that \(F^{(1)}_{\ell ,i}\) and \(F^{(2)}_{\ell ,i}\) at the i-th time point depend only on functions involving coordinates \(\{X_{\ell ',i}\}_{\ell '}\) at the same time point. Thus, a velocity dependence or a history dependence of the estimators for the drift and diffusion coefficients is excluded. Under the assumption that the library contains all necessary terms describing the drift and diffusion coefficients, the coefficients for the \(\ell\)-th component of the stochastic process can be inferred with
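
\[ {\textbf{F}}^{(1)}_{\ell }={\varvec{\Theta }}(\{{\textbf{X}}_{\ell '}\})\,{\textbf{W}}^{(1)}_{\ell },\qquad {\textbf{F}}^{(2)}_{\ell }={\varvec{\Theta }}(\{{\textbf{X}}_{\ell '}\})\,{\textbf{W}}^{(2)}_{\ell } \tag{15} \]
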
where \(\ell \in \{1 \ldots M\}\), and the vectors \({\textbf{W}}^{(1,2)}_{\ell }\) are non-zero in those entries that correspond to the library terms required for the analytical description of the KM coefficients. The determination of the \({\textbf{W}}^{(1,2)}_{\ell }\) is again an inverse optimization problem.
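Without binning, Eq. (15) becomes a regression of \({\textbf{F}}^{(1,2)}\) on a library evaluated along the trajectory itself. The NumPy sketch below uses plain least squares as a stand-in for the sparse solver described next. The Ornstein–Uhlenbeck test trajectory and the library \([{\textbf{1}},\;{\textbf{X}},\;{\textbf{X}}\odot {\textbf{X}}]\) are illustrative choices.

```python
import numpy as np

rng = np.random.default_rng(4)
s, n = 0.01, 200_000
dW = rng.normal(0.0, np.sqrt(s), size=n)
X = np.empty(n + 1)
X[0] = 0.0
for i in range(n):
    X[i + 1] = X[i] - X[i] * s + dW[i]       # Ornstein-Uhlenbeck: D1(x) = -x, D2 = 0.5

x = X[:-1]                                  # library evaluated at the same time points as F1_i, F2_i
F1 = np.diff(X) / s
F2 = np.diff(X) ** 2 / (2.0 * s)
Theta = np.column_stack([np.ones_like(x), x, x**2])   # library [1, X, X^2]

W1, *_ = np.linalg.lstsq(Theta, F1, rcond=None)        # drift part of Eq. (15)
W2, *_ = np.linalg.lstsq(Theta, F2, rcond=None)        # diffusion part of Eq. (15)
print("W1 =", W1.round(3), " W2 =", W2.round(3))
```

No averaging over bins is involved. The large single-sample noise in \({\textbf{F}}^{(1)}\) is reduced only by the length of the trajectory, which is why sparse solvers that remain robust under such noise are needed.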

Solution of the inference problems with automatic threshold sparse Bayesian learning

For identification of the relevant library terms as, e.g., for Eq. (15), we propose a method that we call automatic threshold sparse Bayesian learning (ATSBL). The method consists of two main steps. First, the inverse problem is solved with an efficient algorithm called Bayesian compressive sensing using Laplace priors (BCSL)37. Since the library is large, the solution vector generated by the BCSL algorithm typically still contains quite a few non-vanishing but small entries. Therefore, in a second step, the negligible contributions to the resulting governing equations are removed by an automatic thresholding procedure3,5,7. These two steps of the method are detailed below.

Bayesian compressive sensing using Laplace priors (BCSL)

We consider a generic linear equation system involving a given vector \({\textbf{g}}\) and matrix \({\varvec{\Phi }}\) and an unknown, sparse vector \({\textbf{w}}\) as

\[ {\textbf{g}}={\varvec{\Phi }}{\textbf{w}}+{\textbf{s}} \tag{16} \]

where the vector \({\textbf{s}}\) represents noise or measurement errors. Here, \({\textbf{w}}\) can be thought of as a solution vector appearing in an iterative solution procedure for Eqs. (5), (14), or (15). Various methods can be used to calculate sparse solution vectors \({\textbf{w}}\) from Eq. (16). In particular, research on compressive sensing, which deals with the reconstruction of sparse signals from underdetermined systems, has yielded broadly applicable, efficient methods for finding sparse solution vectors \({\textbf{w}}\). Among these are Bayesian methods based on the relevance vector machine (RVM)38,39. Very sparse result vectors are obtained if a Laplace distribution is used as the prior probability distribution for \({\textbf{w}}\). Here, we employ a method called Bayesian compressive sensing using Laplace priors (BCSL)37. Specifically, we employ a variant of BCSL that iteratively calculates approximate solutions. This variant is computationally very efficient and yields accurate results for our type of applications.
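The corresponding point estimates show why a Laplace prior favors sparse vectors. Under a Gaussian prior, the maximum a posteriori (MAP) estimate is ridge regression. Under a Laplace prior, it is an \(\ell _{1}\)-penalized (lasso) problem, whose solution has exact zeros. The following NumPy sketch compares the two on random data, using the iterative shrinkage-thresholding algorithm (ISTA) with fixed penalty weights. It is not the BCSL algorithm, which instead infers the hyperparameters from the data.

```python
import numpy as np

def ridge_map(Phi, g, alpha):
    """MAP estimate under a Gaussian prior: minimizes ||g - Phi w||^2 + alpha ||w||_2^2."""
    return np.linalg.solve(Phi.T @ Phi + alpha * np.eye(Phi.shape[1]), Phi.T @ g)

def lasso_map_ista(Phi, g, alpha, n_iter=5000):
    """MAP estimate under a Laplace prior: minimizes 0.5 ||g - Phi w||^2 + alpha ||w||_1,
    computed with the iterative shrinkage-thresholding algorithm (ISTA)."""
    L = np.linalg.norm(Phi, 2) ** 2           # Lipschitz constant of the quadratic term's gradient
    w = np.zeros(Phi.shape[1])
    for _ in range(n_iter):
        z = w - Phi.T @ (Phi @ w - g) / L     # gradient step
        w = np.sign(z) * np.maximum(np.abs(z) - alpha / L, 0.0)  # soft thresholding
    return w

rng = np.random.default_rng(5)
Phi = rng.standard_normal((100, 20))
w_true = np.zeros(20)
w_true[[2, 7, 11]] = [3.0, -2.0, 1.5]
g = Phi @ w_true + 0.01 * rng.standard_normal(100)

w_ridge = ridge_map(Phi, g, alpha=1.0)
w_lasso = lasso_map_ista(Phi, g, alpha=1.0)
print("non-zero entries, Gaussian prior:", np.count_nonzero(w_ridge))
print("non-zero entries, Laplace prior: ", np.count_nonzero(w_lasso))
```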

Briefly, the mathematical basis of BCSL is as follows, see Ref.37. The method is based on a three-stage hierarchical model. It is assumed that the errors \({\textbf{s}}\) are drawn from a zero-mean Gaussian distribution with unknown variance \(1/\beta > 0\). Therefore, the likelihood function for finding a vector \({\textbf{g}}\) is given by
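
\[ p({\textbf{g}}|{\textbf{w}},\beta )=\left( \frac{2\pi }{\beta }\right) ^{-N/2}\exp \left( -\frac{\beta }{2}\Vert {\textbf{g}}-{\varvec{\Phi }}{\textbf{w}}\Vert _{2}^{2}\right) \tag{17} \]
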
The unknown vector \({\textbf{w}}\) is assigned a prior distribution, which represents our knowledge of the nature of this quantity. To encode sparsity, one would like to employ a Laplace prior \(p({\textbf{w}}|\lambda )=\prod _{i}\frac{\sqrt{\lambda }}{2}\exp (-\sqrt{\lambda }\,|w_{i}|)\) with a hyperparameter \(\lambda\). However, integrals involving this Laplace prior cannot be evaluated readily, since the Laplace prior is not conjugate to the Gaussian likelihood, Eq. (17). Therefore, an auxiliary vector of non-negative hyperparameters \({\varvec{\gamma }}\) with the same dimension as \({\textbf{w}}\) is introduced. The prior is then expressed hierarchically through the two distributions \(p({\textbf{w}}|{\varvec{\gamma }})=\prod _{i}\left[ \exp {(-w_{i}^{2}/(2 \gamma _{i}))}/\sqrt{2 \pi \gamma _{i}} \right]\) and \(p({\varvec{\gamma }}|\lambda )=\prod _{i}\left[ \lambda \exp {(-\lambda \gamma _{i}/2)}/2\right]\). Marginalizing out \({\varvec{\gamma }}\) from these two distributions yields the Laplace prior as
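
\[ p({\textbf{w}}|\lambda )=\int p({\textbf{w}}|{\varvec{\gamma }})\,p({\varvec{\gamma }}|\lambda )\,{\textrm{d}}{\varvec{\gamma }}=\prod _{i}\frac{\sqrt{\lambda }}{2}\exp \left( -\sqrt{\lambda }\,|w_{i}|\right) , \tag{18} \]
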
see Ref.40. Overall, the joint probability density results as
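
\[ p({\textbf{w}},{\varvec{\gamma }},\lambda ,\beta ,{\textbf{g}})=p({\textbf{g}}|{\textbf{w}},\beta )\,p({\textbf{w}}|{\varvec{\gamma }})\,p({\varvec{\gamma }}|\lambda )\,p(\lambda )\,p(\beta ), \tag{19} \]
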
where the parameters \(\lambda\) and \(\beta\) are both assumed to obey Gamma distributions. To infer values for the most probable solution vector \({\textbf{w}}\) as well as the hyperparameters, an evidence procedure is employed wherein the posterior probability \(p({\textbf{w}},{\varvec{\gamma }},\lambda ,\beta |{\textbf{g}})\) is maximized with respect to \({\textbf{w}}\), \({\varvec{\gamma }}\), \(\lambda\), and \(\beta\), given the data. By making use of the expression
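
\[ p({\textbf{w}},{\varvec{\gamma }},\lambda ,\beta |{\textbf{g}})=p({\textbf{w}}|{\textbf{g}},{\varvec{\gamma }},\lambda ,\beta )\,p({\varvec{\gamma }},\lambda ,\beta |{\textbf{g}}) \tag{20} \]
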
together with Eq. (19), one sees that the value of \({\textbf{w}}\) that maximizes the posterior can be determined by simply maximizing \(p({\textbf{g}}| {\textbf{w}},\beta ) p({\textbf{w}}|{\varvec{\gamma }})\). This calculation yields the result vector \({\textbf{w}}^{*}=\beta {\varvec{\Sigma }}\varvec{\Phi ^{T}}{\textbf{g}}\) with \({\varvec{\Sigma }} = (\beta \varvec{\Phi ^{T}\Phi }+{\varvec{\Lambda }})^{-1}\) and \(\varvec{\Lambda} = \text {diag}(1/\gamma _{i})\). This step corresponds to a ridge regression with term-specific penalties \(1/\gamma _{i}\). It depends on the unknown values of \({\varvec{\gamma }}\), \(\lambda\), and \(\beta\). Determination of these hyperparameters proceeds by maximizing
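
\[ p({\varvec{\gamma }},\lambda ,\beta |{\textbf{g}})=\frac{p({\varvec{\gamma }},\lambda ,\beta ,{\textbf{g}})}{p({\textbf{g}})}\propto p({\varvec{\gamma }},\lambda ,\beta ,{\textbf{g}}) \tag{21} \]
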
with respect to \({\varvec{\gamma }}\), \(\lambda\), and \(\beta\). Here, \(p({\varvec{\gamma }},\lambda ,\beta , {\textbf{g}})\) is calculated from the right hand side of Eq. (19) by integrating out \({\textbf{w}}\). With the fast, approximate version of BCSL, the equations determining the optimal values of the hyperparameters are solved iteratively, where only one entry of the vector \({\varvec{\gamma }}\) is adjusted in every step.
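A simple expectation-maximization (EM) iteration for the hierarchy above illustrates how the ridge-type update of \({\textbf{w}}\) interacts with the hyperparameter updates. The sketch below is not the fast BCSL algorithm of Ref.37, which updates one \(\gamma _{i}\) at a time. Its update formulas are our own derivation under two stated assumptions: an improper hyperprior \(p(\lambda )\propto 1/\lambda\) and a flat hyperprior on \(\beta\). The random test problem is illustrative.

```python
import numpy as np

def laplace_sbl_em(Phi, g, n_iter=200):
    """EM-type updates for the hierarchical Laplace prior (not the fast sequential BCSL)."""
    N, K = Phi.shape
    gamma = np.ones(K)                      # prior variances of the w_i
    lam = 1.0                               # Laplace hyperparameter lambda
    beta = 1.0 / np.var(g)                  # noise precision
    for _ in range(n_iter):
        # Posterior of w for fixed hyperparameters: the ridge-type step described in the text.
        Sigma = np.linalg.inv(beta * Phi.T @ Phi + np.diag(1.0 / gamma))
        mu = beta * Sigma @ Phi.T @ g
        w2 = mu**2 + np.diag(Sigma)         # posterior second moments <w_i^2>
        # gamma_i is the positive root of lam*gamma^2 + gamma - <w_i^2> = 0 (cancellation-free form).
        gamma = np.maximum(2.0 * w2 / (1.0 + np.sqrt(1.0 + 4.0 * lam * w2)), 1e-12)
        lam = 2.0 * (K - 1) / gamma.sum()   # lambda update under the assumed 1/lambda hyperprior
        resid = g - Phi @ mu
        beta = N / (resid @ resid + np.trace(Phi @ Sigma @ Phi.T))  # flat hyperprior on beta
    return mu, gamma, lam, beta

rng = np.random.default_rng(8)
Phi = rng.standard_normal((100, 10))
w_true = np.zeros(10)
w_true[[1, 4]] = [2.0, -1.5]
g = Phi @ w_true + 0.01 * rng.standard_normal(100)

mu, gamma, lam, beta = laplace_sbl_em(Phi, g)
print(np.round(mu, 3))
```

The entries of \({\varvec{\gamma }}\) belonging to irrelevant terms shrink toward zero, which pulls the corresponding \(w_{i}\) to zero. The remaining small but non-zero entries motivate the thresholding step described next.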

Automatic thresholding

Solution of the inverse problem (16) with BCSL typically yields vectors \({\textbf{w}}\) that contain only a few large entries but also a number of very small, non-zero entries. Removing these negligible entries is desirable, and we improve the sparsity of the solution with an iterative thresholding procedure4. Pseudocode 1 illustrates how the thresholding procedure proposed in Ref.4 is combined with BCSL proposed in Ref.37. Briefly, the thresholding algorithm works as follows. The input is given by \({\textbf{g}}\), the library matrix \({\varvec{\Theta }}\), an initial increment \(d_{\textrm{tol}}\) for the threshold tol, and the maximum number of iterations \(n_{\text {iters}}\). The data \({\textbf{g}}\) and \({\varvec{\Theta }}\) are split into a training part and a test part. Usually, 80% of the data are used for training and 20% for testing. Thresholds are calculated iteratively from the training data, and the validity of the thresholds is evaluated based on the error resulting from their application to the test data. The core of the algorithm is a loop for the iterative calculation of the sparse vector \({\textbf{w}}\) and the threshold tol. In each iteration step, the approximate, fast BCSL routine is first employed to obtain an estimate of \({\textbf{w}}\) from the training data. The quality of this solution estimate is evaluated by calculating the resulting error with the test data

where the penalty factor on the solution norm depends on the condition number as \(\eta =10^{-3}\,\kappa ({\varvec{\Theta }})\), as suggested for the original algorithm4. If the error of the current solution is smaller than the lowest error obtained in previous iterations, the new solution is accepted and the threshold tol is increased. Otherwise, the threshold is decreased and the increment \(d_{\textrm{tol}}\) is refined. The final solution \({\textbf{w}}_{\textrm{best}}\) is the sparse vector that determines the terms in the governing differential equations, i.e., the SDEs, ODEs, or PDEs.
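The accept/reject logic of the thresholding loop can be sketched as follows. This Python/NumPy illustration is simplified and is not the authors' implementation, in two respects:

(i) The BCSL estimate in each iteration is replaced by sequentially thresholded least squares, a different and simpler sparse solver.
(ii) The test error is assumed to take the form used in Ref.4: the \(\ell_2\) residual on the test data plus \(\eta\) times the number of non-zero coefficients.

The helper names `thresholded_lstsq` and `auto_threshold` are hypothetical.

```python
import numpy as np

def thresholded_lstsq(Theta, g, tol, n_sweeps=10):
    """Stand-in for the BCSL step: least squares, then repeatedly zero
    coefficients with |w| < tol and refit the remaining ones."""
    w = np.linalg.lstsq(Theta, g, rcond=None)[0]
    for _ in range(n_sweeps):
        small = np.abs(w) < tol
        w[small] = 0.0
        big = ~small
        if not big.any():
            break
        w[big] = np.linalg.lstsq(Theta[:, big], g, rcond=None)[0]
    return w

def auto_threshold(Theta, g, d_tol, n_iters=25, train_frac=0.8, seed=0):
    """Iterative threshold search with an 80/20 train/test split."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(Theta.shape[0])
    n_train = int(train_frac * len(idx))
    tr, te = idx[:n_train], idx[n_train:]
    eta = 1e-3 * np.linalg.cond(Theta)  # penalty factor eta = 1e-3 * kappa(Theta)

    def test_error(w):
        # Assumed form: test residual plus eta times the number of active terms.
        return np.linalg.norm(g[te] - Theta[te] @ w) + eta * np.count_nonzero(w)

    w_best = thresholded_lstsq(Theta[tr], g[tr], tol=0.0)
    err_best = test_error(w_best)
    tol = d_tol
    for it in range(n_iters):
        w = thresholded_lstsq(Theta[tr], g[tr], tol)
        err = test_error(w)
        if err <= err_best:           # accept and raise the threshold
            w_best, err_best = w, err
            tol += d_tol
        else:                         # step back and refine the increment
            tol = max(0.0, tol - 2.0 * d_tol)
            d_tol = 2.0 * d_tol / (n_iters - it)
            tol += d_tol
    return w_best

# Usage on a toy problem: g = x - x^3 + noise, polynomial library up to x^5.
rng = np.random.default_rng(1)
x = rng.uniform(-2.0, 2.0, size=1000)
Theta = np.vander(x, N=6, increasing=True)   # columns 1, x, ..., x^5
g = x - x**3 + 0.01 * rng.normal(size=x.size)
w = auto_threshold(Theta, g, d_tol=0.1)
print(np.round(w, 3))
```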

Quality score for identified governing equations

In test cases, the error of the inference procedure can be quantified directly by comparing the results with the known original differential equations. For this purpose, we define the deviation of identified coefficients (DIC) as

where every \(w_{i}\) is the coefficient of one term in the identified equation and \(w'_{i}\) is the corresponding coefficient in the original equation that was used to generate the test data. The sum runs only over pairs \(\{w_{i}, w'_{i}\}\) in which at least one coefficient is non-zero, and K denotes the number of such pairs. The DIC lies in the range \([0,\infty )\), where 0 indicates a perfectly identified equation.

Results

Inference of SDEs from noisy trajectories

We first illustrate data-driven identification of SDEs by the example of overdamped Brownian motion of a particle inside a one-dimensional double-well potential with coordinate x. The drift and diffusion coefficients of this system are given by

The trajectory data used for inferring the governing equations are generated by integrating the Langevin equation with the Euler–Maruyama method. A trajectory \({\textbf{X}}\) with \(10^{6}\) time steps is shown in Fig. 1a-i, and the corresponding sequences \({\textbf{F}}^{(1)}\) and \({\textbf{F}}^{(2)}\) are shown in Fig. 1a-ii,iii. To visualize the x-dependence of the estimator for the drift coefficient, we plot \({\textbf{F}}^{(1)}\) against \({\textbf{X}}\) in Fig. 1c-i. Similarly, \({\textbf{F}}^{(2)}\), the estimator of the diffusion coefficient \(D^{(2)}(x)\), is plotted against \({\textbf{X}}\) in Fig. 1c-ii. Both plots exhibit large fluctuations around the true drift and diffusion coefficients. Simple local averages of these estimators are therefore prone to errors, particularly at the boundaries of the sampled domain.
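A minimal Python/NumPy sketch of this data-generation step follows. It assumes the common Kramers–Moyal convention:

- the Langevin equation reads \(dx = D^{(1)}(x)\,dt + \sqrt{2D^{(2)}(x)}\,dW\);
- the pointwise estimators are \(F^{(1)}_k = \Delta x_k/\Delta t\) and \(F^{(2)}_k = (\Delta x_k)^{2}/(2\Delta t)\).

The double-well coefficients \(D^{(1)}(x) = x - x^{3}\) and \(D^{(2)} = 0.5\) are hypothetical placeholders, not the ones used for Fig. 1.

```python
import numpy as np

def euler_maruyama(drift, diffusion, x0, dt, n_steps, seed=0):
    """Integrate dx = D1(x) dt + sqrt(2 D2(x)) dW with the Euler-Maruyama scheme."""
    rng = np.random.default_rng(seed)
    dW = rng.normal(scale=np.sqrt(dt), size=n_steps)
    x = np.empty(n_steps + 1)
    x[0] = x0
    for k in range(n_steps):
        x[k + 1] = x[k] + drift(x[k]) * dt + np.sqrt(2.0 * diffusion(x[k])) * dW[k]
    return x

def km_estimators(x, dt):
    """Pointwise estimates F1 = dx/dt and F2 = dx^2/(2 dt), attached to x[:-1]."""
    dx = np.diff(x)
    return x[:-1], dx / dt, dx**2 / (2.0 * dt)

def drift(x):
    """Hypothetical double-well drift D1(x) = x - x^3."""
    return x - x**3

def diffusion(x):
    """Hypothetical constant diffusion coefficient D2 = 0.5."""
    return 0.5

dt = 1e-3
X = euler_maruyama(drift, diffusion, x0=1.0, dt=dt, n_steps=50_000)
x, F1, F2 = km_estimators(X, dt)
# The mean of F2 is close to D2, but individual F2 values scatter widely.
print(F2.mean(), F2.std())
```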

Data-driven discovery of a one-dimensional SDE with automatic threshold sparse Bayesian learning (ATSBL). (a-i) Trajectory of a particle undergoing overdamped diffusive motion in a double-well potential (\(10^{6}\) time steps). (a-ii,iii) Values of \({\textbf{F}}^{(1)}\) and \({\textbf{F}}^{(2)}\) computed with discrete differences from the same trajectory. (b) The library matrix \(\Theta\) is constructed by evaluating a given set of functions for all values of the trajectory. This yields linear equation systems that relate the known sequences \({\textbf{F}}^{(1,2)}\) to unknown, sparsely populated coefficient vectors \({\textbf{W}}^{(1,2)}\). Determining the non-zero entries of \({\textbf{W}}^{(1,2)}\) yields a set of library functions that together describe the drift and diffusion coefficients \(D^{(1)}\) and \(D^{(2)}\). (c) Exemplary results of the inference procedure. Despite the large noise amplitude, accurate predictions can be made directly from the trajectory data. (d) Comparison of Laplacian and Gaussian prior distributions in the inference procedure. The deviation of the identified coefficients (DIC) for the drift coefficient is plotted against the number of data points used for training. The Laplace prior in ATSBL decreases the error and reduces the required sample size. (e) Convergence of the thresholding procedure for Laplacian and Gaussian prior distributions. (e-i) Laplace priors result in fast threshold convergence. (e-ii) The error e defined in Eq. (22) decreases during the iterations. Errors achieved with Gaussian and Laplacian priors are comparable.

Using the trajectory data, we next construct a library of 11 terms for the drift coefficient and 6 terms for the diffusion coefficient, as illustrated in Fig. 1b. We then employ ATSBL to identify \({\textbf{W}}^{(1)}\) and \({\textbf{W}}^{(2)}\) directly from the trajectory, without binning. The identified x-dependent functions for the drift and diffusion coefficients are plotted in Fig. 1c-i,ii. They agree well with the original functions used to create the data. The identified equations with estimated uncertainties are shown in Fig. 1c.
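The regression step can be illustrated with a small polynomial library built with `numpy.vander`. This Python/NumPy sketch makes three simplifying assumptions, chosen only for illustration:

- the pointwise drift estimates are mimicked by adding Gaussian noise to a hypothetical drift \(D^{(1)}(x) = x - x^{3}\);
- the library is reduced to six monomials instead of the 11 terms used above;
- ordinary least squares stands in for ATSBL.

```python
import numpy as np

def polynomial_library(x, degree):
    """Library matrix Theta with columns 1, x, x^2, ..., x^degree."""
    return np.vander(x, N=degree + 1, increasing=True)

rng = np.random.default_rng(2)
x = rng.uniform(-1.5, 1.5, size=50_000)              # sampled positions
F1 = x - x**3 + 0.5 * rng.normal(size=x.size)        # noisy pointwise drift estimates

Theta = polynomial_library(x, degree=5)
W1 = np.linalg.lstsq(Theta, F1, rcond=None)[0]        # regression on raw points, no binning
for power, coeff in enumerate(W1):
    print(f"x^{power}: {coeff:+.3f}")
```

Terms with coefficients close to zero would then be removed by the thresholding step. The same construction applies to \({\textbf{F}}^{(2)}\) and \({\textbf{W}}^{(2)}\).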

The main distinction of ATSBL from established inference techniques is the assumption of a Laplacian prior distribution for the library coefficients. A more direct, albeit theoretically less sparsity-promoting, procedure is to employ a Gaussian prior for inference of the solution vector \({\textbf{w}}\) in Eq. (16) prior to automatic thresholding, as done, e.g., in Ref.4. This corresponds to a ridge regression with a fixed regularization parameter.

To compare the performance of these two approaches for the inference of SDEs, we evaluate the DIC as a function of the number of data points used for inference. The results in Fig. 1d indicate that the Laplacian prior is preferable to the Gaussian prior, since it requires less data and results in a smaller DIC. To further assess the robustness of ATSBL, we consider the convergence of the iterative thresholding procedure for each of the two prior distributions. The results in Fig. 1e-i,ii show faster convergence of the threshold for the Laplacian prior. For both Gaussian and Laplacian priors, the threshold and the error oscillate during the iterations, which is due to the adaptive step size of the thresholding procedure.
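The qualitative difference between the two priors is already visible in point estimates. Under a Gaussian prior, the MAP estimate is a ridge regression, which shrinks all coefficients but leaves them non-zero. Under a Laplacian prior with a fixed scale, the MAP estimate is the lasso, which sets coefficients exactly to zero.

The following Python/NumPy sketch compares both on a toy problem. Note that this fixed-scale lasso, solved here with iterative soft thresholding (ISTA), is a simplification and not the hierarchical BCSL scheme. The regularization strength `alpha` is a hand-picked assumption.

```python
import numpy as np

def ridge(Theta, g, alpha):
    """MAP estimate under a Gaussian prior: (Theta^T Theta + alpha I)^-1 Theta^T g."""
    n_terms = Theta.shape[1]
    return np.linalg.solve(Theta.T @ Theta + alpha * np.eye(n_terms), Theta.T @ g)

def lasso_ista(Theta, g, alpha, n_iter=2000):
    """MAP estimate under a Laplacian prior, i.e. the minimizer of
    0.5 * ||Theta w - g||^2 + alpha * ||w||_1, via iterative soft thresholding."""
    step = 1.0 / np.linalg.norm(Theta, 2) ** 2       # 1 / Lipschitz constant of the gradient
    w = np.zeros(Theta.shape[1])
    for _ in range(n_iter):
        z = w - step * Theta.T @ (Theta @ w - g)       # gradient step on the quadratic term
        w = np.sign(z) * np.maximum(np.abs(z) - step * alpha, 0.0)  # soft threshold
    return w

rng = np.random.default_rng(3)
Theta = rng.normal(size=(100, 10))
w_true = np.zeros(10)
w_true[[2, 7]] = [2.0, -3.0]
g = Theta @ w_true + 0.1 * rng.normal(size=100)

w_ridge = ridge(Theta, g, alpha=10.0)
w_lasso = lasso_ista(Theta, g, alpha=10.0)
print("non-zero (ridge):", np.count_nonzero(w_ridge))
print("non-zero (lasso):", np.count_nonzero(w_lasso))
```

Small ridge coefficients still have to be removed by a separate thresholding step, whereas the Laplacian prior already produces exact zeros.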

Inference of SDEs with time-dependent drift coefficient

In the previous section, we showed how the KM coefficients can be obtained by performing a regression directly on the trajectory data. Direct use of the trajectory data becomes particularly important in the more complex situation of a time-varying force. In such a situation, the probability distributions change over time. A histogram-based approximation of these time-dependent distributions is then technically challenging and requires many sample trajectories recorded under the same conditions. To test our approach in this situation, we consider a particle diffusing in a time-dependent one-dimensional potential. The drift and diffusion coefficients of this system are given by

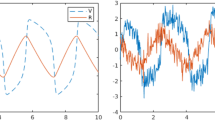

with \(a_{0}=5 \times 10^{-3}\). The potential has a double-well shape in which the positions of the two minima vary in time. The two minima start at separate positions, periodically merge into a single minimum, and then separate again to return to their initial positions. Each time the potential wells approach each other, the transition probability becomes large and the particle is likely to change from one well to the other. This gives rise to stochastic oscillations between the two potential wells.

To test whether the underlying equations can be inferred with a library constructed from a single trajectory, we assume that the frequency \(\omega\) at which the potential changes is known. Inferring this frequency from the data is in principle also possible. It is, however, computationally much more expensive, since high-order terms with explicit time dependence must then be included in the library. We construct a library consisting of a time-independent and a time-dependent part: the first half of the library is a polynomial basis, and the second half is the same polynomial basis multiplied by a factor \(\cos (\omega t)\).

The results of the inference procedure are shown in Fig. 2a, and the inferred equation agrees with the correct equation. For illustration, we plot snapshots of the drift and diffusion coefficients for the original and the inferred equations in Fig. 2a-ii,iii. Note that the inference procedure performs well for this first-order SDE even though only a single sample trajectory is used.
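The split library has the form \({\varvec{\Theta }}(x,t) = [\,\theta_1(x),\dots,\theta_m(x),\ \theta_1(x)\cos(\omega t),\dots,\theta_m(x)\cos(\omega t)\,]\). The Python/NumPy sketch below builds such a library from sampled \((x, t)\) pairs and fits it by least squares, again as a stand-in for ATSBL.

The drift \(D^{(1)}(x,t) = 0.5\,x - x^{3} + 0.5\,x\cos(\omega t)\), the value of \(\omega\), and the noise level are hypothetical. They were chosen only to reproduce the qualitative behavior described above: two wells that periodically merge into one.

```python
import numpy as np

def time_dependent_library(x, t, omega, degree):
    """Polynomial basis in x, followed by the same basis multiplied by cos(omega t)."""
    poly = np.vander(x, N=degree + 1, increasing=True)
    return np.hstack([poly, poly * np.cos(omega * t)[:, None]])

omega = 2.0 * np.pi / 50.0                      # assumed known driving frequency
rng = np.random.default_rng(4)
t = rng.uniform(0.0, 1000.0, size=20_000)
x = rng.uniform(-1.5, 1.5, size=t.size)
F1 = 0.5 * x - x**3 + 0.5 * x * np.cos(omega * t) + 0.2 * rng.normal(size=t.size)

Theta = time_dependent_library(x, t, omega, degree=3)   # 4 + 4 columns
W1 = np.linalg.lstsq(Theta, F1, rcond=None)[0]
print(np.round(W1, 2))   # order: 1, x, x^2, x^3, then the same terms times cos(omega t)
```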

Inference of KM coefficients for one-dimensional SDEs. (a) System with a time-dependent force field. (a-i) Trajectory of overdamped motion in a time-varying double-well potential. (a-ii,iii) Using an appropriate function library, the functional forms of the KM coefficients can be faithfully reconstructed. Blue dots are values of \(F^{(1,2)}\) estimated from the trajectory. (b) Advantage of data binning for the analysis of short trajectories. (b-i) Trajectory resulting from overdamped motion in a double-well potential with a space-dependent diffusion coefficient. (b-ii,iii) Inferred x-dependence of the KM coefficients for short trajectories (\(2 \times 10^{5}\) time steps). Unpopulated regions of phase space are characterized by a high uncertainty of inference and therefore lead to large deviations in the coefficients. (b-iv) Histogram of particle positions for the trajectory shown in (b-i). (b-v,vi) Binned distributions can be used to infer the KM coefficients, but large errors occur in regions that are not well sampled. Inference errors due to incomplete phase-space sampling for short trajectories can be reduced by excluding bins whose probability lies below a threshold, since these carry a large uncertainty. (b-vii) Performance of the inference with and without data binning for short and long trajectories (\(2 \times 10^{5}\) and \(2 \times 10^{7}\) time steps, respectively). The DIC shown is the average of the DICs for \(D^{(1)}\) and \(D^{(2)}\). For long trajectories, data binning does not reduce the error.

Data binning for inference of SDEs from short trajectories

An inference method that relies on direct use of sample trajectories for a regression can become unreliable when confronted with short trajectories in an inhomogeneous force field. In such a situation, we find that it is more appropriate to employ data binning. We illustrate this procedure with Brownian motion of a particle in a one-dimensional double-well potential where the diffusion coefficient depends on space. The drift and diffusion coefficients of the model are given by

We first consider short trajectories with \(2\times 10^{5}\) time steps, as exemplified in Fig. 2b-i. The raw data and the binned data are shown in Fig. 2b-ii,iii and b-v,vi, respectively. For this example, 200 data bins are employed. In undersampled regions, the binned data clearly deviate from the known functions \(D^{(1)}(x)\) and \(D^{(2)}(x)\). Therefore, the binned data are filtered to remove data points with high uncertainty. As described in the “Methods” section, this filtering discards bins with a probability below a threshold \(p^{*}\) that is determined by entropy maximization35, see Fig. 2b-iv. To assess whether binning and filtering are also beneficial for the inference of SDEs from long trajectories, we also use trajectories with \(2\times 10^{7}\) time steps. Figure 2b-vii shows the errors of the identified coefficients.

For short trajectories, binning is advantageous in combination with a filtering procedure to suppress data with high uncertainty. The reason for this result can be understood from inspection of Fig. 2b-v,vi, where the inferred functions match the correct functions only in the most populated regions of phase space. Thus, the exclusion of data points with high uncertainty prevents overfitting and improves the inference of the underlying dynamical equations if the trajectory is not long enough to allow a sufficient sampling of the whole phase space. Conversely, data binning with or without filtering is disadvantageous for the analysis of long trajectories that sample the whole phase space, see Fig. 2b-vii.
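Binning and filtering can be sketched as follows in Python/NumPy. Pointwise estimates are averaged within equal-width bins, and bins whose occupation probability falls below \(p^{*}\) are discarded before the regression.

Here \(p^{*}\) is simply fixed by hand, which is an assumption: the entropy-maximization rule used to choose it in this work is not reproduced. The sampled positions and the drift \(D^{(1)}(x) = x - x^{3}\) are likewise hypothetical.

```python
import numpy as np

def bin_and_filter(x, F, n_bins=200, p_star=1e-3):
    """Average F within equal-width x-bins and keep only bins whose
    occupation probability is at least p_star."""
    edges = np.linspace(x.min(), x.max(), n_bins + 1)
    idx = np.clip(np.digitize(x, edges) - 1, 0, n_bins - 1)   # bin index of each sample
    counts = np.bincount(idx, minlength=n_bins)
    sums = np.bincount(idx, weights=F, minlength=n_bins)
    prob = counts / counts.sum()
    keep = (counts > 0) & (prob >= p_star)
    centers = 0.5 * (edges[:-1] + edges[1:])
    return centers[keep], sums[keep] / counts[keep], prob[keep]

rng = np.random.default_rng(5)
x = rng.normal(size=20_000)                         # poorly sampled tails
F1 = x - x**3 + 0.5 * rng.normal(size=x.size)       # noisy pointwise drift estimates
xc, F1_binned, p = bin_and_filter(x, F1)

# Fit a cubic to the retained bins only.
W1 = np.polynomial.polynomial.polyfit(xc, F1_binned, deg=3)
print(len(xc), "of 200 bins kept; coefficients:", np.round(W1, 2))
```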

Active sampling improves the identification of SDEs

We have so far restricted our attention to extracting estimates from data generated prior to the analysis, e.g., in experiments. In doing so, we have assumed that the data set is large enough to allow inference of the governing equations. In a different scenario, one might be able to perturb the studied system while simultaneously recording data, either in a computer simulation or in an experimental setup. One may then enhance the sampling efficiency by applying an appropriately designed perturbation to the system. This approach is expected to be useful whenever the system exhibits an energy landscape with multiple local minima that can trap the trajectory for long times. We describe an adaptive control method in which the inference of the dynamical equations is combined with a simultaneous perturbation of the system. Applied recursively, this combination leads to a global exploration of phase space and thus to sufficient sampling everywhere.

Since the probability distribution tends to be peaked around local energy minima, the dynamical equations can be estimated locally near these minima. To exploit this local estimation while iteratively extending the sampled region, we sample repeatedly. In each sampling round, we apply a control force that opposes the system force estimated locally in previous rounds. The difficulty with a straightforward application of this method is that the locally estimated control force can deviate strongly from the true force far from the initial estimation region. This produces large errors, slows down convergence, and may even cause divergence. We overcome this problem by weighting the control force with a Gaussian function, such that the control force vanishes away from the current estimation region. This local control force expels the trajectory from the energy minimum where the estimation has been performed, and the trajectory eventually reaches another local minimum.

The method, which we call automatic iterative sampling optimization (AISO), is illustrated in Fig. 3a-i–iii, and the pseudocode is provided in Algorithm 2. At each iteration, the underlying dynamical equations are estimated from the data accumulated during all previous iterations. The negative of the inferred drift term is employed locally as the control force. The center and width of the Gaussian weight of the control force are calculated only from the mean and standard deviation of the trajectory of the previous step. Thus, we define the control force acting on component \(\ell\) as

where the index i indicates that values are taken at iteration i, and \(\mu _{\ell }^{i}\) and \(\zeta _{\ell }^{i}\) denote the mean and variance of the trajectory recorded in iteration i. After a sufficient number of iterations, the data points from all iterations are combined and the equation of motion is extracted from the accumulated data. The procedure is repeated for a predefined number of iteration steps. For the examples presented in the following, the number of iteration steps is fixed to \(N=10\), since convergence was achieved in fewer than 10 steps in these cases.
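The explicit expression for the control force is not reproduced in this text. The following Python/NumPy sketch therefore assumes one plausible one-dimensional form:

\(f(x) = -{\tilde{D}}^{(1)}(x)\,\exp[-(x-\mu)^{2}/(2\zeta)]\)

This is the negative of the inferred drift, weighted by a Gaussian centered at the trajectory mean \(\mu\) with variance \(\zeta\). The form, the function name `make_control_force`, and the example coefficients are hypothetical. They only show how the weighting confines the control to the current estimation region.

```python
import numpy as np

def make_control_force(drift_coeffs, mu, zeta):
    """Hypothetical local control force: minus the inferred polynomial drift,
    weighted by a Gaussian with mean mu and variance zeta."""
    inferred_drift = np.polynomial.Polynomial(drift_coeffs)   # coefficients in increasing order

    def force(x):
        weight = np.exp(-((x - mu) ** 2) / (2.0 * zeta))
        return -inferred_drift(x) * weight

    return force, inferred_drift

# Previous round: trajectory trapped near the well at x = -1, with mean -0.9
# and variance 0.05 (hypothetical numbers).
force, drift = make_control_force([0.0, 1.0, 0.0, -1.0], mu=-0.9, zeta=0.05)
for x in (-1.2, -0.9, -0.8, 0.0, 1.5):
    # Near mu the effective drift D1 + f is strongly reduced; far away f vanishes.
    print(f"x={x:+.1f}  D1={drift(x):+.3f}  f={force(x):+.3f}  D1+f={drift(x) + force(x):+.3f}")
```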

Active learning with automatic iterative sampling optimization (AISO). (a) Schematic presentation of automatic iterative sampling optimization for the case of Brownian diffusion (a-i). Initially, the particle is trapped in a local energetic minimum, and the functional form of the potential can therefore only be inferred locally. (a-ii) After the first iteration step, the potential hypersurface near the estimated minimum is flattened, and the particle can thus explore other regions of phase space. The same procedure is repeated iteratively, and the control is always applied at the minimum estimated during the previous iteration. (a-iii) Schematic representation of the main feedback control loop. (b-i) Trajectory of a particle undergoing Brownian motion in a one-dimensional three-well potential. The green curve shows a trapped trajectory, while the blue curve shows a trajectory in the presence of control forces. (b-ii) The deviation of the inferred coefficients (DIC) decreases during the iterations. (b-iii) The identified drift field converges to the correct function during the iterations. (b-iv) Trajectory of a particle undergoing diffusion in a two-dimensional force field. The green curve exemplifies a trapped trajectory for plain sampling. The blue curve shows an example of a trajectory in the presence of control forces. The background color represents only part of the force field, namely a Mexican hat potential \(V(x, y) = -(x^{2} + y^{2})/2 + (x^{2} + y^{2})^{2}/4\) that generates radial forces. (b-v) Evolution of the DIC during the iterations. (b-vi) Streamlines of the identified drift field (pink) and of the correct drift field (black) after the first iteration step. (b-vii) Streamlines of the identified drift field (pink) and of the correct drift field (black) after the tenth iteration step. The identified force field at the end of the iterations closely matches the original one.

For a first demonstration of our method, we employ a three-well potential \(U(x)=x^{6}-6x^{4}+0.5x^{3}+8x^{2}\) with a constant diffusion coefficient for simulating the trajectory of a particle in one dimension. The drift and diffusion coefficients are

Next, we consider a two-dimensional drift field consisting of a radially symmetric component and a shear component in the \(x\)–\(y\) plane. The drift and diffusion coefficients are given by

Using these drift and diffusion coefficients, we simulate trajectories with \(10^{5}\) time steps of size \(\Delta t=5\times 10^{-3}\). Parts of the trajectories are shown on the potential maps in Fig. 3b-i,iv. Results for the intermediate iteration steps are shown together with the drift field in Fig. 3b-iii,vi. As the algorithm proceeds, the coefficients of the control potential approach those of the correct drift field, and the expulsion from each local minimum becomes more efficient, see Fig. 3b-iii,vii. This enhances rare events in which the particle crosses the saddle points, as illustrated by the controlled and uncontrolled trajectories in Fig. 3b-i,iv. The error is quantified by calculating the DIC of the inferred coefficients \({\tilde{D}}^{(1)}(x)\) and \({\tilde{D}}^{(2)}(x)\) in each iteration. The DIC decreases from about 1 to nearly 0.01 during the iterations, see Fig. 3b-ii,v. Thus, the terms of the identified equations approach those of the original equations.

Identification of ordinary and partial differential equations

We next show that the sparse inference scheme based on Laplace priors, as implemented in ATSBL, can also be used for data-driven discovery of ordinary and partial differential equations. The identification of ODEs from trajectory data is demonstrated with the Lorenz system, a paradigm of chaotic behavior41. The Lorenz equations are given by

\[\dot{x} = a\,(y - x),\qquad \dot{y} = x\,(b - z) - y,\qquad \dot{z} = x\,y - c\,z,\]

where the parameters are fixed as \(a=10\), \(b=28\), and \(c=3/8\). We numerically integrate these equations to obtain the trajectory shown in Fig. 4a, which alternates irregularly between two lobes of the chaotic attractor.

For data-driven system identification, we use the same library \({\varvec{\Theta }}\) for each of the three variables x, y, and z. \({\varvec{\Theta }}\) is constructed from the simulated trajectory and includes 56 terms containing up to fourth powers of all variables. Time derivatives are calculated with a fourth-order central-difference approximation. The general ODEs constructed from the library as in Eq. (4) are thus represented by three linear equation systems.

The equations inferred from noise-free data have small errors, comparable in magnitude to the time step \(\Delta t = 2 \times 10^{-4}\), see Fig. 4b. The same inference procedure is then repeated for a trajectory with additive Gaussian noise. The standard deviation of the noise in each coordinate is 2% of the standard deviation of the noise-free data in that coordinate. In this case, the ODEs identified with ATSBL still contain all the correct terms, and the errors in the inferred parameters are in the percent range, see Fig. 4b.
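The fourth-order central-difference formula \(\dot{x}_k \approx (-x_{k+2} + 8x_{k+1} - 8x_{k-1} + x_{k-2})/(12\,\Delta t)\) can be checked against the right-hand side of the Lorenz equations. The Python/NumPy sketch below uses the parameter values, time step, and initial condition given in the text, over a shorter time interval to keep the example fast. The trajectory is integrated with a classical fourth-order Runge–Kutta scheme; this integrator is an assumption, since the one used for Fig. 4 is not specified here.

```python
import numpy as np

A, B, C = 10.0, 28.0, 3.0 / 8.0          # parameter values as stated in the text

def lorenz_rhs(s):
    """Right-hand side of the Lorenz system for state s = (x, y, z)."""
    x, y, z = s
    return np.array([A * (y - x), x * (B - z) - y, x * y - C * z])

def rk4_trajectory(s0, dt, n_steps):
    """Integrate with the classical fourth-order Runge-Kutta scheme."""
    traj = np.empty((n_steps + 1, 3))
    traj[0] = s0
    for k in range(n_steps):
        s = traj[k]
        k1 = lorenz_rhs(s)
        k2 = lorenz_rhs(s + 0.5 * dt * k1)
        k3 = lorenz_rhs(s + 0.5 * dt * k2)
        k4 = lorenz_rhs(s + dt * k3)
        traj[k + 1] = s + dt / 6.0 * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
    return traj

def central_diff4(traj, dt):
    """Fourth-order central difference; derivatives at interior points traj[2:-2]."""
    return (-traj[4:] + 8.0 * traj[3:-1] - 8.0 * traj[1:-3] + traj[:-4]) / (12.0 * dt)

dt = 2e-4
traj = rk4_trajectory(np.array([-8.0, 8.0, 27.0]), dt, n_steps=2500)   # t in [0, 0.5]
dX = central_diff4(traj, dt)
rhs = np.array([lorenz_rhs(s) for s in traj[2:-2]])
print("max |finite difference - rhs|:", np.abs(dX - rhs).max())
```

The rows of `dX` provide the left-hand sides of the three linear systems \(\dot{x} = {\varvec{\Theta }}{\textbf{w}}_x\), \(\dot{y} = {\varvec{\Theta }}{\textbf{w}}_y\), and \(\dot{z} = {\varvec{\Theta }}{\textbf{w}}_z\).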

Example of data-driven discovery of ODEs with ATSBL. (a) Numerically integrated trajectory for \(t\in [0, 25]\) with time step \(\Delta t=2 \times 10^{-4}\) and initial condition \([x_{0},\;y_{0},\;z_{0}]=[-8,\;8 ,\;27]\). (b) Table of the original ODEs (the Lorenz system) and the ODEs identified from noise-free data and from data with 2% Gaussian noise.

Finally, we demonstrate data-driven discovery of PDEs with ATSBL. Reaction–diffusion equations have attracted interest as prototypic models for pattern formation in biochemical systems, where constituents are locally transformed into each other through chemical reactions and transported in space by diffusion. Here, we consider the popular \(\lambda -\omega\) system, given by

where \(\beta = 2\). A two-dimensional rectangular domain with periodic boundary conditions is considered. The initial values of u and v are shown in Fig. 5a-i,ii. The reaction–diffusion equations are solved numerically with a spectral method, and snapshots of u and v are shown in Fig. 5a-iii,iv. For inference of the governing PDEs, a library matrix \({\varvec{\Theta }}\) containing 35 terms is constructed for each of the time derivatives of \(u\) and \(v\). Using ATSBL, the reaction–diffusion equations are then inferred from the simulated data, as illustrated in Fig. 5b. For noise-free data, the identified equations deviate from the original equations only in the fourth decimal place, and this error is due to discretization. However, if u and v are corrupted by additive noise, identification of the correct PDEs becomes challenging4. Ref.4 therefore suggested including a denoising step prior to the inference step. Accordingly, we employ a curvelet denoising method42, which allows ATSBL to reconstruct the reaction–diffusion equations from data with 2% noise, as illustrated in Fig. 5b.
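Library terms such as \(\nabla^{2}u\) require spatial derivatives. On a periodic grid, one option is to evaluate them spectrally. The Python/NumPy sketch below does this for the \(256\times 256\) grid on a \(20\times 20\) domain given in the caption of Fig. 5. Whether the library derivatives in this work were computed this way is an assumption, and the test field is hypothetical.

```python
import numpy as np

def spectral_laplacian(u, length):
    """Laplacian of a periodic field u on a square of side `length` via 2D FFT."""
    n = u.shape[0]
    k = 2.0 * np.pi * np.fft.fftfreq(n, d=length / n)   # angular wavenumbers
    kx, ky = np.meshgrid(k, k, indexing="ij")
    return np.real(np.fft.ifft2(-(kx**2 + ky**2) * np.fft.fft2(u)))

n, length = 256, 20.0
grid = np.arange(n) * length / n
X, Y = np.meshgrid(grid, grid, indexing="ij")
u = np.sin(2 * np.pi * X / length) * np.cos(4 * np.pi * Y / length)   # smooth periodic test field
lap_exact = -((2 * np.pi / length) ** 2 + (4 * np.pi / length) ** 2) * u
print("max error:", np.abs(spectral_laplacian(u, length) - lap_exact).max())
```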

Demonstration of data-driven discovery of PDEs with ATSBL using a reaction–diffusion system. (a-i,ii) Snapshots of the initial conditions for the variables u and v, respectively. (a-iii,iv) u and v at time \(t=0.3\). (b) Table of the original PDEs of the reaction–diffusion system and the PDEs identified from noise-free data and from data with 2% Gaussian noise. Inference is conducted with a library containing 35 terms. The numerical calculations are performed with a time step \(\Delta t=0.0034\) in the time interval \(t\in [0,0.6]\). The spatial domain has size \(20\times 20\) and is covered by a \(256\times 256\) grid with periodic boundary conditions.

Summary and outlook

Data-driven, automatic discovery of governing equations has become a viable tool for studying complex systems for which first-principles derivations are intractable, e.g., biological systems or epidemiological data. The general aim is to construct an analytical model that characterizes the observed dynamics and extends to regions of parameter and phase space that are hard to access experimentally.

Our main contribution is an inference method that makes use of Laplacian prior distributions in a Bayesian framework to find a minimal set of governing equations without the need for user input. We establish the validity of this approach and compare it to other methods. Regarding data-driven discovery of Langevin-type SDEs, we show that the proposed sparse method converges faster than other methods based on ridge regression. Maximum likelihood methods for the estimation of parameters in SDEs are not considered here, see Ref.8 for an introduction to those methods. For the studied Langevin SDEs, we find that a binning of the trajectory data for inference of the drift and diffusion coefficients is only advantageous if the phase space is sampled sparsely. In that case, the error of the inference procedure can vary strongly in phase space since the relative uncertainties of the probabilities vary. A filtering procedure consisting of the exclusion of data with high uncertainty results in a significantly improved inference accuracy.

We also investigate how active-learning procedures can help when direct inference of the global dynamics is hindered by the trapping of trajectories in local energetic minima. This problem can be addressed with well-established umbrella-sampling methods, in which quadratic bias potentials are employed to reduce the energetic barriers in the original potential landscape18,43. However, an appropriate parameterization of such bias potentials can be challenging, for example, if the bias potentials are intended to smooth an unknown, rough energy landscape. Instead, we employ the methods for data-driven identification of governing equations to calculate time-dependent external perturbations that force the trajectory to explore the full phase space. The main feature of our method is that the parameters determining the applied perturbations correspond to the parameters that define the energy landscape. The combination of iterative inference with system perturbations can significantly improve the speed and accuracy of the overall inference procedure. We therefore hope that the proposed active learning procedure will extend the applicability of data-driven methods, in particular in the context of computer simulations.

A central challenge related to the improvement of the library-based methodology for identification of analytical models is to find automated approaches for tailoring the employed function space to the problem at hand. Recent methodological advances suggest that a possible solution is the integration of physical constraints, such as symmetries, conservation laws, or even thermodynamics, into a generic framework for statistical learning of governing equations44. Data-driven identification of analytical models thus has the potential to become a popular tool for closing the gap between non-parametric, empirical modeling and first-principles-based modeling in the coming years.

Data availability

Source code and data are available at https://doi.org/10.5281/zenodo.10732552.

Change history

29 March 2024

A Correction to this paper has been published: https://doi.org/10.1038/s41598-024-57801-9

References

Schmidt, M. & Lipson, H. Distilling free-form natural laws from experimental data. Science 324, 81 (2009).

Bongard, J. & Lipson, H. Automated reverse engineering of nonlinear dynamical systems. Proc. Natl. Acad. Sci. U.S.A. 104, 9943 (2007).

Brunton, S. L., Proctor, J. L. & Kutz, J. N. Discovering governing equations from data by sparse identification of nonlinear dynamical systems. Proc. Natl. Acad. Sci. U.S.A. 113, 3932 (2016).

Rudy, S. H., Brunton, S. L., Proctor, J. L. & Kutz, J. N. Data-driven discovery of partial differential equations. Sci. Adv. 3, e1602614 (2017).

Boninsegna, L., Nüske, F. & Clementi, C. Sparse learning of stochastic dynamical equations. J. Chem. Phys. 148, 241723 (2018).

Raissi, M., Yazdani, A. & Karniadakis, G. E. Hidden fluid mechanics: Learning velocity and pressure fields from flow visualizations. Science 367, 1026 (2020).

Zhang, S. & Lin, G. Robust data-driven discovery of governing physical laws with error bars. Proc. R. Soc. A 474, 20180305 (2018).

Bishwal, J. P. Parameter Estimation in Stochastic Differential Equations (Springer, 2007).

Friedrich, R., Peinke, J., Sahimi, M. & Tabar, M. R. R. Approaching complexity by stochastic methods: From biological systems to turbulence. Phys. Rep. 506, 87 (2011).

Stephens, G. J., De Mesquita, M. B., Ryu, W. S. & Bialek, W. Emergence of long timescales and stereotyped behaviors in Caenorhabditis elegans. Proc. Natl. Acad. Sci. U.S.A. 108, 7286 (2011).

Sarfati, R., Bławzdziewicz, J. & Dufresne, E. R. Maximum likelihood estimations of force and mobility from single short Brownian trajectories. Soft Matter 13, 2174 (2017).

Pérez García, L., Donlucas Pérez, J., Volpe, G., Arzola, A. V. & Volpe, G. High-performance reconstruction of microscopic force fields from Brownian trajectories. Nat. Commun. 9, 1 (2018).

Baldovin, M., Puglisi, A. & Vulpiani, A. Langevin equations from experimental data: The case of rotational diffusion in granular media. PLoS ONE 14, e0212135 (2019).

Frishman, A. & Ronceray, P. Learning force fields from stochastic trajectories. Phys. Rev. X 10, 021009 (2020).

Ferretti, F., Chardès, V., Mora, T., Walczak, A. M. & Giardina, I. Building general Langevin models from discrete datasets. Phys. Rev. X 10, 031018 (2020).

Brückner, D. B., Ronceray, P. & Broedersz, C. P. Inferring the dynamics of underdamped stochastic systems. Phys. Rev. Lett. 125, 058103 (2020).

Brückner, D. B. et al. Learning the dynamics of cell–cell interactions in confined cell migration. Proc. Natl. Acad. Sci. U.S.A. 118, e2016602118 (2021).

Torrie, G. M. & Valleau, J. P. Nonphysical sampling distributions in Monte Carlo free-energy estimation: Umbrella sampling. J. Comput. Phys. 23, 187 (1977).

Valsson, O. & Parrinello, M. Variational approach to enhanced sampling and free energy calculations. Phys. Rev. Lett. 113, 090601 (2014).

Dama, J. F., Parrinello, M. & Voth, G. A. Well-tempered metadynamics converges asymptotically. Phys. Rev. Lett. 112, 240602 (2014).

Invernizzi, M., Piaggi, P. M. & Parrinello, M. Unified approach to enhanced sampling. Phys. Rev. X 10, 041034 (2020).

Besold, G., Risbo, J. & Mouritsen, O. G. Efficient Monte Carlo sampling by direct flattening of free energy barriers. Comput. Mater. Sci. 15, 311 (1999).

Goedecker, S. Minima hopping: An efficient search method for the global minimum of the potential energy surface of complex molecular systems. J. Chem. Phys. 120, 9911 (2004).

Blaak, R., Auer, S., Frenkel, D. & Löwen, H. Crystal nucleation of colloidal suspensions under shear. Phys. Rev. Lett. 93, 068303 (2004).

Klymko, K., Geissler, P. L., Garrahan, J. P. & Whitelam, S. Rare behavior of growth processes via umbrella sampling of trajectories. Phys. Rev. E 97, 032123 (2018).

Risken, H. Fokker–Planck equation. In The Fokker–Planck Equation 63–95 (Springer, 1996).

Kleinhans, D., Friedrich, R., Wächter, M. & Peinke, J. Markov properties in presence of measurement noise. Phys. Rev. E 76, 041109 (2007).

Ragwitz, M. & Kantz, H. Indispensable finite time corrections for Fokker–Planck equations from time series data. Phys. Rev. Lett. 87, 254501 (2001).

Friedrich, R., Renner, C., Siefert, M. & Peinke, J. Comment on “Indispensable finite time corrections for Fokker–Planck equations from time series data”. Phys. Rev. Lett. 89, 149401 (2002).

Gottschall, J. & Peinke, J. On the definition and handling of different drift and diffusion estimates. New J. Phys. 10, 083034 (2008).

Honisch, C. & Friedrich, R. Estimation of Kramers–Moyal coefficients at low sampling rates. Phys. Rev. E 83, 066701 (2011).

Rydin Gorjão, L., Witthaut, D., Lehnertz, K. & Lind, P. G. Arbitrary-order finite-time corrections for the Kramers–Moyal operator. Entropy 23, 517 (2021).

Rinn, P., Lind, P., Wächter, M. & Peinke, J. The Langevin approach: An R package for modeling Markov processes. J. Open Res. Softw. 4 (2016).

Gradišek, J., Siegert, S., Friedrich, R. & Grabec, I. Analysis of time series from stochastic processes. Phys. Rev. E 62, 3146 (2000).

El-Sayed, M. A. A new algorithm based entropic threshold for edge detection in images. Int. J. Comput. Sci. 8, 71 (2011).

Hamza, A. B. Nonextensive information-theoretic measure for image edge detection. J. Electron. Imaging 15, 013011 (2006).

Babacan, S. D., Molina, R. & Katsaggelos, A. K. Bayesian compressive sensing using Laplace priors. IEEE Trans. Image Process. 19, 53 (2009).

Tipping, M. E. Sparse Bayesian learning and the relevance vector machine. J. Mach. Learn. Res. 1, 211 (2001).

Ji, S., Xue, Y. & Carin, L. Bayesian compressive sensing. IEEE Trans. Signal Process. 56, 2346 (2008).

Figueiredo, M. A. Adaptive sparseness for supervised learning. IEEE Trans. Pattern Anal. Mach. Intell. 25, 1150 (2003).

Lorenz, E. N. Deterministic nonperiodic flow. J. Atmos. Sci. 20, 130 (1963).

Peyré, G. The numerical tours of signal processing-advanced computational signal and image processing. IEEE Comput. Sci. Eng. 13, 94 (2011).

Bussi, G. & Laio, A. Using metadynamics to explore complex free-energy landscapes. Nat. Rev. Phys. 2, 200 (2020).

Karniadakis, G. E. et al. Physics-informed machine learning. Nat. Rev. Phys. 3, 422 (2021).

Acknowledgements

Funding by the European Research Council through a starting grant for BS is gratefully acknowledged (BacForce, grant agreement No. 852585).

Funding

Open Access funding enabled and organized by Projekt DEAL.

Author information

Contributions

All authors designed the study, performed the research and wrote the manuscript together.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The original online version of this Article was revised: The Data Availability section in the original version of this Article was incomplete. Full information regarding the corrections made can be found in the correction notice for this Article.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Huang, Y., Mabrouk, Y., Gompper, G. et al. Sparse inference and active learning of stochastic differential equations from data. Sci Rep 12, 21691 (2022). https://doi.org/10.1038/s41598-022-25638-9

DOI: https://doi.org/10.1038/s41598-022-25638-9
