Abstract

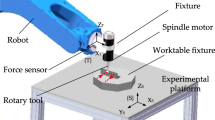

Positioning objects in industrial handling applications is often compromised by elasticity-induced oscillations, which limit how far motion times can be shortened and thereby reduce the performance and profitability of the automation solution. Existing approaches for oscillation reduction mostly focus on the elasticity of the handling system itself, i.e., the robot structure. Depending on the task, however, elastic parts or elastic grippers such as suction cups also strongly influence the oscillation and prevent faster positioning. In this paper, the problem is investigated using a typical handling robot with an additional end-effector setup that represents the elastic load. The handled object is modeled as a base-excited spring-mass system, which makes the proposed approach independent of the robot structure. Two methods, a model-based feed-forward control built on differential flatness and a machine-learning method, reduce oscillations solely by modifying the robot's end-effector trajectory. Both methods reduce oscillation amplitudes by 85% on the test setup, promising a significant increase in performance. Further investigations into the uncertainty of the parameterization demonstrate the applicability of the learning approach, which is not yet widely used in the field of oscillation reduction.
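To make the flatness idea concrete, the following is a minimal sketch under stated assumptions, not the authors' implementation. It assumes an undamped base-excited spring-mass model, y'' = ω0² (u − y), where u is the end-effector (base) position and y is the load position. For this model the load position is a flat output, so the base trajectory follows algebraically from a desired load trajectory as u = y_d + y_d''/ω0². The natural frequency (5 Hz), motion time (0.3 s), travel distance (0.2 m), the polynomial rest-to-rest profile, and all function names are illustrative assumptions and do not come from the paper.

```python
import numpy as np


def smooth_step(tau):
    """Rest-to-rest profile s(tau) on [0, 1] and its second derivative.

    Degree-9 polynomial whose derivatives up to 4th order vanish at both
    ends, so the flatness-based base trajectory stays smooth as well.
    """
    tau = np.clip(tau, 0.0, 1.0)
    s = tau**5 * (126 - 420 * tau + 540 * tau**2 - 315 * tau**3 + 70 * tau**4)
    s_dd = 2520 * tau**3 * (tau - 1) ** 3 * (2 * tau - 1)
    return s, s_dd


def base_trajectory(t, T, dist, omega0, flat=True):
    """End-effector position u(t) for a move of length dist in time T.

    flat=False: the end effector follows the smooth profile directly.
    flat=True:  u = y_d + y_d'' / omega0^2 (inverse of the assumed model).
    """
    s, s_dd = smooth_step(t / T)
    y_d = dist * s
    y_dd = dist * s_dd / T**2
    return y_d + y_dd / omega0**2 if flat else y_d


def simulate_load(u_func, omega0, t_end, dt=1e-4):
    """Integrate y'' = omega0^2 (u(t) - y) with RK4, starting at rest."""

    def f(t, x):
        return np.array([x[1], omega0**2 * (u_func(t) - x[0])])

    n = int(round(t_end / dt))
    t = np.linspace(0.0, n * dt, n + 1)
    x = np.zeros((n + 1, 2))
    for i in range(n):
        k1 = f(t[i], x[i])
        k2 = f(t[i] + dt / 2, x[i] + dt / 2 * k1)
        k3 = f(t[i] + dt / 2, x[i] + dt / 2 * k2)
        k4 = f(t[i] + dt, x[i] + dt * k3)
        x[i + 1] = x[i] + dt / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
    return t, x[:, 0]


def residual_amplitude(flat, f0=5.0, T=0.3, dist=0.2, t_settle=0.5):
    """Largest load deviation from the target after the move has ended."""
    omega0 = 2 * np.pi * f0  # assumed natural frequency of the elastic load
    u = lambda t: base_trajectory(t, T, dist, omega0, flat)
    t, y = simulate_load(u, omega0, T + t_settle)
    return np.max(np.abs(y[t >= T] - dist))


if __name__ == "__main__":
    print("residual amplitude, direct profile   [m]:", residual_amplitude(False))
    print("residual amplitude, flatness-based   [m]:", residual_amplitude(True))
```

In this idealized simulation the model is known exactly, so the flatness-based trajectory removes the residual oscillation almost completely. On real hardware, parameter uncertainty and unmodeled damping would leave a remainder, which is consistent with the abstract reporting an 85% reduction rather than full cancellation.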

Rights and permissions

Open Access This chapter is licensed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license and indicate if changes were made.

The images or other third party material in this chapter are included in the chapter's Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the chapter's Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

Copyright information

© 2020 The Author(s)

About this paper

Cite this paper

Kaczor, D., Bensch, M., Schappler, M., Ortmaier, T. (2020). Trajectory Optimization for the Handling of Elastically Coupled Objects via Reinforcement Learning and Flatness-Based Control. In: Schüppstuhl, T., Tracht, K., Henrich, D. (eds) Annals of Scientific Society for Assembly, Handling and Industrial Robotics. Springer Vieweg, Berlin, Heidelberg. https://doi.org/10.1007/978-3-662-61755-7_29

DOI: https://doi.org/10.1007/978-3-662-61755-7_29

Publisher Name: Springer Vieweg, Berlin, Heidelberg

Print ISBN: 978-3-662-61754-0

Online ISBN: 978-3-662-61755-7

eBook Packages: Intelligent Technologies and Robotics (R0)