Abstract

Incremental Sheet Forming (ISF) has attracted attention because of the flexibility of its forming process and the complexity of its deformation mode. Single Point Incremental Forming (SPIF) is one of the major types of ISF and also the simplest. To produce a defect-free ISF component of sufficient quality and accuracy, optimal process parameters must be selected. An initial prediction of formability and geometric accuracy helps researchers select proper parameters when forming components using SPIF. In this study, the selected parameters are tool materials and shapes. In earlier studies, multiple forming tests with different process parameters were conducted to explore such parameters experimentally. In the scope of this study, the influence of tool material, tool shape, tool-end corner radius, and tool surface roughness (Ra/Rz) on the formability and geometric accuracy of SPIF components was investigated experimentally. An appropriate modeling tool was needed to support this investigation. To this end, using the data collected from 108 components formed by SPIF, an Artificial Neural Network (ANN) was used to explore and determine proper materials and geometry of forming tools: the ANN was applied to predict formability and geometric accuracy as outputs. Process parameters were used as input data for the ANN, relying on actual values obtained from experimental components. In addition, an analytical equation was generated for each output based on the extracted weights and biases of the best network prediction. Compared to the experimental approach, the analytical equations enable the researcher to estimate parameter values within a relatively short time and in a practicable way. An estimate of the Relative Importance (RI) of the SPIF parameters with respect to the expected output, generated with the partitioning weight method, is also presented. One of the key findings is that tool characteristics play an essential role in all predictions and fundamentally impact the final products.

1 Introduction

Incremental Sheet Forming (ISF) is suitable for low-volume production and is ideal for complicated designs. ISF was patented in 1967 [1], and one of its crucial types is Single Point Incremental Forming (SPIF). Emerging manufacturing technologies like ISF have developed over the past few decades. Researchers have shown that an unconventional sheet forming process like ISF is economically feasible for producing prototypes; ISF is also versatile and can produce custom and complex products [2, 3]. A comprehensive literature review about ISF is presented in [4], and a brief review of the history of ISF with a focus on the technological progress involved is found in [1]. This review, however, focuses on the mechanism of the deformation, modeling techniques, forming force prediction, and an investigation of SPIF. Furthermore, the articles reviewed in this paper all state that ISF is suitable for economical prototyping and for preparing customized and complex sheet products.

Shrivastava and Tandon [5] investigated components experimentally formed by SPIF and analyzed them using a finite element analysis in order to understand the characteristics of sheet deformation, forming behavior, and dominant deformation mechanisms. Shrivastava and Tandon concluded that ISF is a process capable of fulfilling industry demand for highly complex, economic, and customized products. Duflou et al. [6] assert that one of the most significant factors which influence the geometric accuracy of SPIF is tool diameter. Maqbool and Bambach [7] investigated various SPIF process parameters, including tool diameter on a pyramidal frustum, and found that in relation to geometry, smaller tool diameter positively affects geometrical accuracy in the range (5 mm, 10 mm, and 20 mm) of the tool tip diameter. Brendan et al. [8] examined two tool tip types (parabolic and angle radius) and compared the results using hemispherical and flat-bottomed tool tips. They found that the angular profile tool tip improves formability but concluded that formability is highest when the contact surface is decreased in the parabolic tool tip. Najm and Paniti [9] studied the effect of flat-end tools on SPIF components of thin sheets and found that the smallest corner radius of the flat tool in a range of (0.1 mm, 0.3 mm, and 0.5 mm) gives the best results in terms of forming depth and geometric accuracy. Two different tool ends (flat and hemispherical) were used by Ziran et al. [10] to form an AA-3003O aluminum sheet. They found that better geometric accuracy and formability can be achieved by using a flat tool rather than applying a hemispherical one. Moreover, they also established that relatively low forming force is needed when flat ends are used as compared to hemispherical ends. On the other hand, Wu et al. 
[11] claimed that SPIF is a process that exhibits flexibility in sheet forming, which in turn enables the process to be used for producing customized complex dimensional shape parts utilizing different materials. Many studies have been conducted to understand SPIF, but a majority of them deal with a sheet thickness of over 0.5 mm. Similarly, many researchers have studied SPIF parameters, but there is less research related specifically to the class of process parameters. For instance, there is no research studying the prediction of forming depth when a flat tool and SPIF are applied with an initial sheet thickness of less than 0.5 mm. No satisfactory solution has been found to improve the geometric accuracy of sheets below 0.5 mm under various conditions in the case of SPIF. In fact, the effects of tool materials and shape on the final product quality have not been discussed in any of the above-mentioned studies. Even if SPIF is quite flexible, it has limitations: its drawbacks, for instance, include the accuracy of the geometry of components and the planned achievable depth.

Recently, various techniques of artificial intelligence have been used in many industries, including the metal forming industry: specifically, Artificial Neural Network (ANN) is used for developing predictive models for end-milling machining, powder metallurgy, and high-speed machining [12,13,14]. In addition, machine learning techniques have dominated manufacturing in an attempt to develop the most effective predictive models [15,16,17,18,19]. There are also different optimization algorithms commonly used in manufacturing processes. For example, the Johnson-Cook model constants (J-C constants) of ultra-fine-grained titanium were researched by Ning et al. [20]: based on the chip formation model, they identified such constants via enforcing the gradient search method using a Kalman filter. Later on, Ning and Liang [21] developed an inverse identification method for J-C constants by replacing the exhaustive search method with an iterative gradient search method in the Kalman Filter algorithm. They predicted machining forces using the modified chip formation model and the J-C constants. They found a close correspondence between predicted forces and experimental forces. In another study, Gok [22] introduced a new method for determining optimal cutting parameters by applying fuzzy TOPSIS and gray relational analysis. He found that the lowest values of cutting velocity, feed rate, and cutting depth produce the smallest Ra, Rt, Ff, and Fc values in terms of surface roughness. The results obtained using fuzzy TOPSIS are in accordance with the Gray relational analysis. Zuo et al. [23] presented a new approach to reduce design space and guarantee topology outcomes concerning manufacturability and engineering, i.e., they recommended a design that conforms to accepted principles, tests, or standards. They also introduced manufacturing and machining constraints to the topology optimization method formula. 
Their investigations suggest that modified topology optimization can solve non-manufacturing and non-machining problems related to engineering applications.

Kurra et al. [24] predicted the surface roughness of Extra Deep Drawing (EDD) steel in incremental forming under various forming conditions. They evaluated the performance of ANN, Support Vector Regression (SVR), and Genetic Programming, a model developed in the scope of the study, using the R-squared value. Using a feed-forward neural network with a backpropagation algorithm, Nasrollahi and Arezoo [25] trained two different ANN models to predict the springback of bending in the case of sheet metals with holes. They found that data used for one type of hole, i.e., an oblong hole, and for three types of holes (oblong, circle, square) in the bending area all affected springback. They also established that training on all types of holes produces more accurate springback predictions, with fewer errors than training on each hole type separately. Mekras [26] implemented an ANN model for successful process set-up and used it in the scope of sheet metal forming theory. In the model, set-up parameters including aluminum alloy type, sheet thickness, pressing speed, and the tools’ geometrical details were considered. Mekras found that even in the multi-input and multi-output process models, which included three inputs and four outputs, the models’ accuracy was satisfactory. Kashid and Kumar [27] reviewed sixty-three published research articles and examined the applications of the ANN technique in sheet metal working; SPIF was not mentioned in any of the works cited in the study.

Nowadays, there are many articles concerned with modeling and optimizing different parameters in SPIF processes using an artificial neural network. Maji and Kumar [28] found that the Adaptive Neuro-Fuzzy Inference System (ANFIS) yields more accurate prediction when a hybrid algorithm is used, and even more so when a Backpropagation algorithm is applied. They developed a response surface methodology and ANFIS to predict the outcome of SPIF components; they considered different process parameters and dealt with inverse predictions of process parameters in SPIF. Furthermore, they utilized the desirability function and a non-dominated sorting genetic algorithm for performing multi-objective optimization in the scope of SPIF. Oraon and V. Sharma [29] predicted the surface quality of SPIF parts by adopting the ANN model using a feed-forward neural network along with a backpropagation learning algorithm. They reported a result of 94.744% for ANN simulation performance with a mean absolute error of 1.068%. Also, an ANN model was utilized by Mulay et al. [30] to predict the average surface roughness and the wall angle of AA5052-H3 parts manufactured using SPIF. Oraon et al.[31] trained feed-forward backpropagation (FFBP) in an ANN model with a structure 6-6-1 to predict the surface roughness of a brass Cu67Zn33 piece formed by way of SPIF. Radu et al. [32] evaluated the effectiveness of the Response Surface Method (RSM) and the Neural Network (NN) method for improving and controlling the accuracy of SPIF components. Basing their claims on the accuracy of their experiments, they suggest further research of a broader range of process parameters; they claim that such investigation will help to generate valid general empirical models. Behera et al. [33] analyzed the accuracy of truncated pyramids formed using SPIF. They suggested studying the effects of the interaction between diverse features of SPIF, and they made predictions for that purpose. 
In addition, they also investigated the effects of material properties and sheet thickness on accuracy profiles. In another study, McAnulty et al. [34] described the effects of forming tip diameter on formability, which is the focus parameter in their review paper: their efforts are underpinned by the fact that contradictory results were published about the impact of tool diameter on formability. Ten articles reported that a decrease in tip diameter causes a reduction in formability, whereas seven articles claimed the opposite. However, six of the studies claimed that the tip diameter should be optimized to reach maximum formability. Bayram and Koksal [35] found that a 0.5 mm step size offers better homogeneous distribution and geometrical accuracy than a 0.2 mm step size in the case of SPIF concerning a 1 mm thick AA2024 aluminum alloy. Nama et al. [36] found that a larger tool head, an increase in tool speed and feed rate lead to better surface roughness of an aluminum 1100 sheet with 0.6 mm thickness. Rattanachan and Chungchoo [37] investigated the formability of DIN 1.0037 steel and found that formability decreased as a consequence of an increase in tool speed. Based on a review published by Nimbalkar and Nandedkar [38], the most significant facet in SPIF is the forming tool. For the optimization of the SPIF process, the quality of formed components should be maximized. The ideal characteristics of the product formed using SPIF are geometric accuracy and maximum forming depth, which can be reached using a desired shape. Besides, formed part accuracy is one of the significant elements of the process capability of SPIF.

Based on the literature, it can be concluded that ANN could be a useful tool for result prediction and modeling prior to starting new experiments. The benefits of using machine learning based artificial neural networks before starting new experiments consist in reducing the time needed for preparing the experiments, minimizing the errors, and increasing efficiency. Furthermore, ANN is one of the most powerful tools to predict experimental data for solving engineering problems, and ANN can serve as a very useful tool to create and evaluate processes and to determine the final details of tools.

The above-detailed issues, together with the lack of well-defined requirements for the SPIF process and the absence of reference mathematical models, have motivated the authors to investigate and predict the formability and geometric accuracy of truncated frustums processed using SPIF. To the authors’ knowledge, such an experimental process has not been tested or described in the literature. In the present project, forming depth was considered an indicator of the formability of formed parts, as described in [39,40,41,42,43,44,45,46]. Furthermore, as an aim and novelty of this paper, a prediction equation based on weights and biases was derived for both accuracy and formability, and the joint partitioning weight of the neural network was adopted to assess the Relative Importance (RI) of SPIF parameters on the output. The influence parameters considered in this paper are tool material, tool shape, end corner radius, and the surface roughness (Ra/Rz) of the tool.

2 Material properties

In the experiments conducted in the scope of this study, the components’ blank sheet was made of 0.22 mm AlMn1Mg1 aluminum alloy. By cutting the specimens from the sheet at 0°, 45°, and 90° of the rolling directions, tensile tests were carried out at room temperature using an INSTRON 5582 universal testing machine. In the research design, 3 samples were used for each direction regarding the rolling direction. As shown in Table 1, which presents average data of the mechanical properties of the sheet material, the relative standard deviation did not exceed 3%. In the scope of the research design, related tensile tests were carried out based on the EN ISO 6892-1:2010 standard, and an Advanced Video Extensometer (AVE) was used to measure the planar anisotropy values (r10).

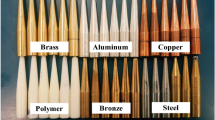

Table 2 shows the chemical composition of the sheet material. In addition, in the SPIF experiments, different tool materials were used: tool materials (see Fig. 1) consisted of steel (C45), brass (CuZn39Pb3), bronze (CuSn12), copper (E-Cu57), aluminum (AlMgSi 0.5), and polymer VeroWhitePlus (RGD835). The hardness of the tools was tested experimentally with the help of a Wolpert Diatronic 2RC S hardness tester, and the measurement was carried out based on the ISO 6506-1:2014 standard. The materials were measured by a FOUNDRY-MASTER Pro2 Optical Emission Spectrometer in order to determine the ISO code of each type of tool material. Using the ISO code of each material, the mechanical properties of the metallic tool were listed. The properties were ensured by measurements executed by [SAARSTAHL, L. KLEIN SA, AURUBIS, PX PRECIMET SA, ALUMINCO S.A.] based on the sequence of the materials in Table 3. Table 4 presents the properties of the polymer tool provided by STRATASYS.

3 Experiments

Experimental tests were performed by forming a frustum geometry (see Fig. 2a). Each component was formed until failure: the appearance of a crack was defined as the end of forming and therefore served as the criterion for establishing the forming limit. Fig. 3 shows a failed specimen. Two different tool tips were used (spherical and flat), with different tip diameters and corner radii. Fig. 2b shows the schematic drawing of the tools, and Table 5 specifies the dimensions of the spherical and flat tools. The experiments were performed on a SIEMENS Topper TMV-510T 4-axis CNC milling machine: Fig. 4 shows the CNC table with a rapid clamping rig. All forming processes were carried out using the same parameters: a 1500 mm/min feed rate and a 2000 rpm spindle speed. A constant step-down of 0.05 mm was used, as a smaller step-down results in better geometric accuracy and surface finish of SPIF components [47, 48]. To increase the reliability of the measurements, each sample was formed three times; the total number of formed components was thus 108. The data collected from these 108 samples were used as the actual dataset (input and output) for prediction: process parameters were used as inputs, and the obtained results of geometric accuracy and formability (maximum depth) were used as outputs of the ANN prediction model. Table 13 in the Appendix lists the raw data of the 108 components formed using SPIF under the above conditions. In each forming process, the surface roughness of the tool was measured before and after forming. The surface roughness of the forming tool before the forming process served as the input value for the formed product. The tool surface roughness measured after a forming process was then taken as the input for the subsequent forming process, and so on. This method was applied to all tools used in this research. However, because forming wears the polymer tool surface, a new polymer forming tool was used in each forming process, and each polymer tool’s surface roughness was measured before the start of the process. Formed part profiles were measured using a Mitutoyo Coordinate Machine (see Fig. 5). The average deviation along the wall, taken over every 3 SPIF components formed under the same process conditions, was considered the value of geometrical accuracy. Depth was measured using a Mitutoyo Digimatic Height Gauge with a maximum jaw distance of 12′′/300 mm and an uncertainty value of 0.0005′′/0.01 mm. Forming depth was measured between the bottom part and the upper sheet surface and is expressed as the distance of the jaws, as shown in Fig. 6.

The measurement data were analyzed to obtain formability and geometric accuracy values. In the scope of this, formability constituted the forming depth of the component, while accuracy was understood as the deviation of the wall radius from the designed CAD model. Accuracy was thus expressed in the form of a comparison between the real wall radius obtained by the Mitutoyo Coordinate Machine and the wall radius of the CAD model, which was 25 mm. The wall radius is shown in Fig. 3.

4 Artificial neural networks

It has often been claimed that Warren McCulloch and Walter Pitts’ seminal study of the 1940s introduced the neural network concept. Their work showed that neural networks could, in principle, compute any logical or arithmetic function. In the late 1950s, the perceptron network, i.e., the first practical application of artificial neural networks, was introduced [49]. Since then, thousands of papers have been published on neural networks, which have been used in various sciences. Such uses include applications by artists, filmmakers, musicians, scientists, and particularly by researchers in order to produce useful and often creative results. The topology of an Artificial Neural Network (ANN) is determined by the number of layers, the transfer function of these layers, and the number of neurons in each layer [50]. Any ANN structure has input and output layers and also features a minimum of one hidden layer. Each layer contains several neurons with a transfer function, through which weighted signals are passed forward and errors are propagated backward [51]. In this study, the ANN model was a feed-forward network trained with the backpropagation learning algorithm, known as a “multilayer perceptron” (MLP) or “multilayer feed-forward” network. The concept of the MLP originated from Werbos 1974, and Rumelhart et al. 1986 [52]. Equation 1 expresses the multilayer perceptron as follows:

$$y = f\left(\sum_{i=1}^{n} w_i x_i + b\right) \qquad (1)$$

where y is the output, x_i are the inputs, w_i are the weights, b is the bias, and f is the transfer function [53].

In order to predict the actual data obtained from the components formed by SPIF, two different structures of the ANN model were built using the Neural Network Toolbox™ of MATLAB [54]. Both structures had the same number of inputs: ten (different tool materials, tool shapes, tool end/corner radii, and tool surface roughness values (Ra and Rz)). The tools were classified into two groups based on their shapes (flat and hemispherical) in order to check the effect of the tool shape on the accuracy and formability of components. Furthermore, each shape was divided into three sections based on the corner radius (r) of the flat tool and the tip radius (R) so that the effect of these factors on the above-mentioned outputs could be assessed. Each structure had one hidden layer with ten neurons. Different training and transfer functions were tested in the experiments (see Sections 4.2 and 4.3). The main difference between the structures was the number of outputs, which also determined the number of neurons in the output layer, as shown in the pictorial representation of Fig. 7a and b. In the scope of the study, the learning rate was 0.01, the performance goal was 0.001, and the number of epochs was 1000.
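The forward pass of such a structure can be sketched as follows. This is a minimal illustration only, assuming ten inputs, one hidden layer of ten neurons with a log-sigmoid transfer function, and a single linear output neuron. The function name `predict`, the weight names, and the random placeholder weights are hypothetical and are not the trained values of this study.

```python
import numpy as np

def logsig(n):
    """Log-sigmoid transfer function, maps any real value into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-n))

def predict(x, w_hidden, b_hidden, w_out, b_out):
    """Forward pass of a one-hidden-layer feed-forward network.

    x        : input vector, shape (n_inputs,)
    w_hidden : hidden-layer weights, shape (n_hidden, n_inputs)
    b_hidden : hidden-layer biases, shape (n_hidden,)
    w_out    : output-layer weights, shape (n_outputs, n_hidden)
    b_out    : output-layer biases, shape (n_outputs,)
    """
    hidden = logsig(w_hidden @ x + b_hidden)   # weighted sum, then transfer function
    return w_out @ hidden + b_out              # linear output layer

# Placeholder weights (assumption): random values stand in for trained ones.
rng = np.random.default_rng(0)
n_inputs, n_hidden, n_outputs = 10, 10, 1
w_hidden = rng.normal(size=(n_hidden, n_inputs))
b_hidden = rng.normal(size=n_hidden)
w_out = rng.normal(size=(n_outputs, n_hidden))
b_out = rng.normal(size=n_outputs)

x = rng.uniform(size=n_inputs)                 # one encoded input row
y = predict(x, w_hidden, b_hidden, w_out, b_out)
```

Once the weights and biases of a trained network are extracted, the same expression can be written out as a closed-form equation for each output, which is the idea behind an analytical prediction equation.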

4.1 One-hot encoding

One-hot encoding is a common method of representing categorical variables as so-called dummy variables [55]. The idea is to replace a categorical variable with one or more new binary features. Replacing the categorical inputs with values of 0 and 1 turns them into sparse binary vectors that can be included as features for training the ANN model. In this study, two sets of data were encoded: tool materials and tool shapes were binarized as sparse matrices of 0 and 1. When one material is active (encoded as 1), all other materials are encoded as 0, and the same holds for the tool shapes.
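As a small illustration of the encoding, the sketch below binarizes the two categorical inputs. The category labels and their ordering are assumptions chosen for readability; the exact column layout used in the study is not given in the text.

```python
# Category lists (assumed labels and order, for illustration only).
MATERIALS = ["steel", "brass", "bronze", "copper", "aluminum", "polymer"]
SHAPES = ["flat", "hemispherical"]

def one_hot(value, categories):
    """Return a 0/1 list with a single 1 at the position of `value`."""
    if value not in categories:
        raise ValueError(f"unknown category: {value!r}")
    return [1 if category == value else 0 for category in categories]

material_code = one_hot("brass", MATERIALS)   # [0, 1, 0, 0, 0, 0]
shape_code = one_hot("flat", SHAPES)          # [1, 0]
```

Each encoded vector contains exactly one 1, so no artificial ordering between materials or shapes is introduced into the network inputs.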

4.2 Training function

In a Neural Network (NN), optimization is the procedure of training on a dataset to find a set of network weights that maps inputs to outputs well for prediction. Different optimization algorithms (training functions) can be used in the training process to predict the output from a given input. The suitability of a training algorithm depends on many factors including, but not limited to, the dataset, the number of weights and biases, and the performance goal. Hence, selecting the training algorithm that is fastest and best for a given prediction is a challenging task. With this in mind, various training functions (learning algorithms) were implemented in this paper for mapping the output parameters: Levenberg-Marquardt (Trainlm), Conjugate Gradient Backpropagation with Powell-Beale Restarts (Traincgb), Resilient Backpropagation (Trainrp), Bayesian Regularization Backpropagation (Trainbr), BFGS Quasi-Newton (Trainbfg), and Scaled Conjugate Gradient (Trainscg). Levenberg-Marquardt is faster and more agile than the other training functions, and the BFGS Quasi-Newton algorithm is also quite fast [54].

4.3 Transfer function

There are different types of transfer functions, and selecting an appropriate one depends on many factors, particularly the type of ANN. In a NN, the inputs to each layer are weighted and summed, and the transfer function calculates the layer’s output from this weighted sum. Usually, Log-sigmoid (Logsig) is used in multilayer networks; other functions such as Tan-sigmoid (Tansig) can be an alternative, and the latter is usually used for pattern recognition problems [54]. In this study, various types of transfer functions were tested in combination with different training functions to improve prediction accuracy. Ultimately, the Purelin transfer function was selected for the output layer. Table 6 lists the transfer functions used for the purpose of this study.
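For orientation, the transfer functions named in this paper can be sketched in a few lines. The definitions below follow the forms commonly given for the MATLAB functions of the same names; this is an assumption about the definitions used here, since the table itself is not reproduced in the text.

```python
import numpy as np

def logsig(n):
    """Log-sigmoid: output in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-n))

def tansig(n):
    """Tan-sigmoid: output in (-1, 1), numerically equal to tanh(n)."""
    return 2.0 / (1.0 + np.exp(-2.0 * n)) - 1.0

def purelin(n):
    """Linear transfer function: output equals the weighted sum."""
    return n

def radbas(n):
    """Radial basis transfer function: peak of 1 at n = 0."""
    return np.exp(-np.square(n))

n = np.linspace(-3.0, 3.0, 7)   # sample weighted sums
outputs = {f.__name__: f(n) for f in (logsig, tansig, purelin, radbas)}
```

A linear output layer such as Purelin leaves the predicted value unbounded, which suits regression targets such as forming depth.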

4.4 Dataset distribution

The historical data of formed components can be used as inputs to predict the expected outcome of forming without performing any new forming process. The actual data must be divided into subsets, i.e., training, validation, and testing datasets. The performance of any model can be significantly affected by how the dataset is split into training and testing data. Shahin [56] claimed that there is no clear relationship between the proportions of data in the different subsets. Zhang et al. [57] state that the dividing ratio is one of the primary dataset problems and that it has no general solution. According to their survey, researchers have divided their datasets using different subset ratios; the most extensively used are 90% vs. 10%, 80% vs. 20%, and 70% vs. 30%. Unbalanced subsets negatively affect model performance. In the trials conducted in this paper, the best prediction resulted from splitting the actual data 80% vs. 20% into training and testing datasets, respectively. To ensure that the model learned and assessed all data samples, the training dataset was further divided: of this 80% of the whole dataset, 90% was used for training, 5% for validation, and the remaining 5% for testing. The testing dataset (20%), which was held out for final testing purposes, did not overlap with the training dataset. The actual dataset consists of 108 rows extracted from the experiments of forming the given sheet using SPIF, and these rows were used as the training and testing datasets.
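The two-stage division described above can be sketched as follows. The function name `split_indices`, the random seed, and the rounding of fractional row counts are assumptions made for illustration; the text does not state how the rows were actually assigned.

```python
import numpy as np

def split_indices(n_rows, holdout_fraction=0.20, val_fraction=0.05,
                  inner_test_fraction=0.05, seed=0):
    """Shuffle row indices, hold out a final test set, then split the rest."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_rows)

    # Stage 1: hold out 20% of all rows for final testing.
    n_holdout = round(n_rows * holdout_fraction)
    holdout, remaining = order[:n_holdout], order[n_holdout:]

    # Stage 2: split the remaining 80% into 90% / 5% / 5%.
    n_val = round(len(remaining) * val_fraction)
    n_inner_test = round(len(remaining) * inner_test_fraction)
    val = remaining[:n_val]
    inner_test = remaining[n_val:n_val + n_inner_test]
    train = remaining[n_val + n_inner_test:]

    return {"train": train, "val": val, "inner_test": inner_test, "holdout": holdout}

parts = split_indices(108)
sizes = {name: len(idx) for name, idx in parts.items()}
```

With 108 rows, this rounding gives a final test set of 22 rows and 86 rows for the training stage, matching the sample sizes reported for the study.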

4.5 Overfitting

Overfitting happens if the model treats the noise or random fluctuations of the training data as patterns to be learned and incorporates them as one of the model’s concepts. These learned fluctuations do not carry over to new data saved for testing purposes. Consequently, the model yields good performance on the training dataset but does not perform as effectively on a new dataset used as a test sample. In order to ensure that the trained model did not exhibit overfitting, 20% of the real data was saved for the model’s final testing. One way of preventing overfitting and improving network generalization is regularization, which discourages the learning of a more complex or flexible model: the weight values are adjusted by changing network parameters, so that the complexity of the NN model is controlled [58]. The second method of improving generalization is called early stopping. Using this technique, the training data, which constitutes 80% of the entire actual dataset, is divided into three subsets: training, validation, and test subsets. The training subset is employed to compute the gradient and to update the weights and biases of the network, whereas the validation subset is used for monitoring the error as the training process runs. The third subset is the test set, whose error is plotted during the training process. If overfitting commences during training, the error on the validation set will increase. If the validation error keeps rising for a specified number of iterations, the training stops, and the weights and biases are returned to those at the smallest validation error [59].
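The early-stopping rule can be sketched independently of any training library. In the minimal example below, the function name `early_stopping`, the `max_fail` threshold, and the list of validation errors are hypothetical; a real training loop would compute the validation error after each epoch and keep a copy of the weights at the best epoch.

```python
def early_stopping(val_errors, max_fail=6):
    """Return (best_epoch, stop_epoch) for a sequence of validation errors.

    Training stops once the validation error has failed to improve on its
    best value for `max_fail` consecutive epochs; the weights saved at
    `best_epoch` would then be restored.
    """
    best_error = float("inf")
    best_epoch = -1
    fails = 0
    for epoch, error in enumerate(val_errors):
        if error < best_error:
            best_error, best_epoch, fails = error, epoch, 0
        else:
            fails += 1
            if fails >= max_fail:
                return best_epoch, epoch
    return best_epoch, len(val_errors) - 1

# Made-up validation errors: improvement for three epochs, then a rise.
best_epoch, stop_epoch = early_stopping([0.9, 0.5, 0.3, 0.35, 0.4, 0.45], max_fail=3)
```

In this made-up sequence the smallest validation error occurs at epoch 2 and training stops at epoch 5, so the weights from epoch 2 would be kept.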

5 Investigation of accuracy

Many different validation metrics exist, and choosing a proper one is an important consideration in evaluating and improving model performance. In this study, different structures and various training and transfer algorithms were compared and validated. The validation criterion is the minimization of error. The coefficient of determination (R2) and the adjusted coefficient of determination (adj. R2) are used for checking the models and structures in question, since an R2 value close to 1 implies good performance. Moreover, Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) were also used for validation. RMSE is more sensitive to large errors, whereas MAE is more stable. RMSE and MAE are better evaluation metrics than R2 because of the latter’s limitations listed in [60]. MAE and RMSE values close to 0 indicate better model performance, while a large difference between the RMSE and MAE values indicates large variations in the error distribution. Mean Relative Error (MRE) was used to measure the precision of the model: the absolute error is the magnitude of the difference between the actual and predicted values, and the relative error is the absolute error divided by the magnitude of the actual value. The distribution of prediction values was validated via the Standard Error of the Mean (SEM), which is the standard deviation of the sampling distribution of the sample mean. In other words, the variance of the sample mean is inversely proportional to the sample size, and SEM is the Standard Deviation (SD) of the sample divided by the square root of the sample size. The sample size is 108 for the entire dataset, 86 for training, and 22 for testing. Error (E), Mean Error (ME), Mean Square Error (MSE), and Standard Deviation (SD) were applied for deriving the validation equations mentioned above; R2 and adj. R2 were derived from the Total Sum of Squares (SStot) and the Sum of Squares of Residuals (SSres). The validation equations can be represented as follows:

thus:
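The validation metrics named in this section can be computed as in the sketch below, which uses their standard textbook definitions. The function name `validation_metrics`, the argument `n_predictors`, and the choice to take SD and SEM over the prediction errors are assumptions made for illustration, and the sample values are invented.

```python
import numpy as np

def validation_metrics(actual, predicted, n_predictors):
    """Standard error metrics for a set of predictions."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    n = actual.size
    error = actual - predicted                       # E

    mse = np.mean(error ** 2)                        # Mean Square Error
    sd = np.std(error, ddof=1)                       # sample SD of the errors (assumption)
    ss_res = np.sum(error ** 2)                      # Sum of Squares of Residuals
    ss_tot = np.sum((actual - actual.mean()) ** 2)   # Total Sum of Squares
    r2 = 1.0 - ss_res / ss_tot

    return {
        "ME": error.mean(),
        "MSE": mse,
        "RMSE": np.sqrt(mse),
        "MAE": np.mean(np.abs(error)),
        "MRE": np.mean(np.abs(error) / np.abs(actual)),
        "SD": sd,
        "SEM": sd / np.sqrt(n),
        "R2": r2,
        "adj_R2": 1.0 - (1.0 - r2) * (n - 1) / (n - n_predictors - 1),
    }

# Invented values, not data from the study.
metrics = validation_metrics([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 5.0], n_predictors=1)
```

Because RMSE squares the errors before averaging, a single large error raises RMSE more than MAE, which is why a wide gap between the two points to an uneven error distribution.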

6 Contribution analysis of input variables

There are different methods to assess the contribution of each input variable to ANN outputs. This paper utilized Garson’s algorithm [61] to determine the relative importance (RI) of each input as a predictor of the outputs. The Garson method has also been used in other studies [62,63,64,65,66,67]. The algorithm, as shown in Eq. 19, is based on the connection weights of the neurons. Goh [68] applied the Garson algorithm and claimed that RI estimation requires partitioning of the hidden-output weights into elements connected to each neuron in the input layer.

where:

- nv: number of neurons in the input layer,
- nh: number of neurons in the hidden layer,
- yj: absolute value of connection weights between the input and the hidden layers,
- Oj: absolute value of connection weights between the hidden and the output layers.
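The weight partitioning described above can be sketched in a few lines. This is one widely used form of Garson's partitioning, in which each absolute hidden-output weight is split among the inputs in proportion to the absolute input-hidden weights feeding that hidden neuron. The function name, the array layout (hidden neurons in rows, inputs in columns), and the single-output assumption are illustrative choices, and the notation of Eq. 19 may differ.

```python
import numpy as np


def garson_relative_importance(iw, lw):
    """Relative importance (%) of each input for a single-output network.

    iw: shape (n_hidden, n_inputs), input-to-hidden weights.
    lw: shape (n_hidden,), hidden-to-output weights.
    """
    iw = np.abs(np.asarray(iw, dtype=float))
    lw = np.abs(np.asarray(lw, dtype=float)).reshape(-1)

    # Share of each input within every hidden neuron (each row sums to 1).
    share = iw / iw.sum(axis=1, keepdims=True)
    # Partition each hidden-output weight among the inputs.
    partitioned = share * lw[:, None]
    # Sum over hidden neurons, then normalize to percentages.
    importance = partitioned.sum(axis=0)
    return 100.0 * importance / importance.sum()


# Toy weights, for illustration only: 2 hidden neurons, 2 inputs.
ri = garson_relative_importance([[1.0, 1.0], [3.0, 1.0]], [1.0, 2.0])
```

Because only absolute values enter the calculation, the result ranks inputs by the magnitude of their influence and says nothing about its direction.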

7 Results and discussion

To inspect the accuracy of the different ANN models and to compare the two structures, the errors of the predicted results were analyzed using various validation metrics to determine the ANN’s performance.

7.1 One-output structure

7.1.1 Prediction of accuracy

Table 7 lists the two validation metrics used for checking the one-output ANN structure for predicting the accuracy of SPIF components. The data show that Scaled Conjugate Gradient (Trainscg) achieved the best values: an MSE close to zero and an R2 close to 1.

Fig. 8a shows a significant disparity between the different transfer functions when the Trainscg training function is used, in favor of Radbas (MSE = 0.1503, R2 = 0.9909). Fig. 8b shows that R2 and MSE differed only slightly when the Radbas transfer function was combined with various training functions, but the highest R2 and smallest MSE were obtained with Trainscg. Fig. 8 thus characterizes the one-output ANN structure used for predicting the accuracy of SPIF components.

7.1.2 Prediction of formability

Regarding the prediction of formability via the one-output ANN, the differences between training and transfer functions are highlighted in Table 8. The combination of Trainbfg and Logsig performs best, giving the smallest MSE (0.0351) and the largest R2 (0.9860). For formability, plots of MSE and R2 analogous to those in Fig. 8 can be derived from the values listed in Table 8.

7.1.3 Assessment of the best ANN models for predicting accuracy and formability in the case of the one-output structure

Table 9 presents the scenario set out for validating the ANN models: Trainscg with the Radbas transfer function for predicting accuracy, and Trainbfg with the Logsig transfer function for predicting formability. The full set of validation metrics used for comparing the various training and transfer functions is listed in the Appendix as Tables 14 and 15. These values relate to the one-output ANN structure used to predict the accuracy and formability of SPIF parts. In these results, a positive error means that the predicted value is larger than the actual value, and a negative error means that the predicted value is lower than the actual value.

Figures 9a and b compare two prediction trials for accuracy and formability, together with their variation from the actual data obtained from SPIF experiments. The predicted values follow the real data closely. Moreover, Fig. 10a and b show a significant positive correlation between the predicted and actual datasets.

7.2 Two-output structure

Two separate observations emerge when the ANN results are compared in terms of the number of outputs. First, the rate of the smallest MSE obtained during accuracy prediction was 99.8611% vs. 0.1389% for one and two outputs, respectively. Second, for formability, the MSE rate was 99.7778% vs. 0.2222% for the one- and two-output structures, respectively. There are several possible explanations for the poor results of the two-output structure compared to the one-output structure. One main reason may be the fluctuation of the two outputs, whose values lie far from one another; there is also an enormous difference between the inputs. Accuracy values are between 1 and 17 mm, whereas formability values (maximum depth) are between 10 and 20 mm. Within the two-output structure, the best prediction is obtained using Trainlm with Logsig. For the sake of clarity, Table 10 shows the errors of this two-output model. The full results are shown in the Appendix as Tables 16 and 17.

7.3 Training and testing assessment of the best ANN models for the one-output structure

A comparison of the results reveals that the suggested ANN structure is the one-output structure using the Trainscg training function together with the Radbas transfer function. This scenario offered the best prediction of accuracy, while Trainbfg with Logsig emerged as a reliable combination for predicting formability. These results were then examined in further detail. To this end, the actual data were divided into two sets, training and testing, with shares of 80% and 20%, respectively, as discussed in Section 4.4. The suggested structure and model were trained on the training dataset and tested for prediction on the test dataset. Table 11 lists the errors and validation metrics of the training and testing predictions resulting from the model described above.
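The 80%/20% division of the 108 samples into 86 training and 22 testing samples can be sketched as follows. Random shuffling with a fixed seed is an assumption made for illustration; the division method actually used is the one described in Section 4.4, and the function name and parameters are hypothetical.

```python
import numpy as np


def split_train_test(n_samples, train_fraction=0.8, seed=0):
    """Return (train_idx, test_idx) as disjoint arrays of sample indices."""
    rng = np.random.default_rng(seed)        # fixed seed for repeatability
    order = rng.permutation(n_samples)       # shuffled indices 0..n_samples-1
    n_train = int(n_samples * train_fraction)  # 108 * 0.8 = 86.4 -> 86
    return order[:n_train], order[n_train:]


train_idx, test_idx = split_train_test(108)
```

Truncating 86.4 to 86 leaves 22 samples for testing, which matches the sample sizes reported for this study.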

The accuracy model remained successful in prediction, with only an insignificant decrease in performance. One unanticipated finding, however, was that the accuracy of the formability prediction decreased considerably. These findings suggest that a reduction in sample size leads to an increase in error and a decrease in performance, and that the one-output ANN model cannot be extrapolated to different sample sizes. It is also important to bear in mind the possible presence of bias in these responses. Consequently, various models were investigated in an attempt to find an alternative capable of predicting formability more accurately. As a result, the model using Traincgb with Logsig was found to predict formability successfully, as shown in Table 12.

7.4 Analytical equations to predict the accuracy and formability of SPIF

To provide an easier way of predicting formability and accuracy without having to build an ANN model each time, analytical equations were derived to be used in place of the trained network. Two equations (23 and 27) were established from the constant weights and biases extracted from the recommended ANN; tuning of the network provided the weights and biases that achieved the best prediction. Because only one hidden layer was applied in this study, there is only one set of input weights (IW) and one set of layer weights (LW). The IW connect the inputs to the hidden layer, and the LW connect the hidden layer to the output layer. The biases of the two layers are b1 and b2.

Tables 18 and 19 in the Appendix provide b1, b2, IW, and LW obtained from the best-trained ANN models for accuracy (Table 18) and formability (Table 19).

where: LW = [6.4552 -2.1276 2.7984 7.8625 4.7908 4.3514 -6.4366 -4.2600 2.3087 4.7381]

where LW = [1.7159 1.0223 -1.0223 3.9185 -17.628 16.1231 3.1300 25.4021 25.2140 1.1739]
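The general structure of such an analytical equation, for a network with one hidden layer, can be sketched as output = LW · f(IW · x + b1) + b2, where f is the hidden-layer transfer function (Radbas or Logsig in this study). The sketch below assumes a linear output layer and inputs that are already on the scale the network was trained on; both are assumptions, as are the function names. Only the first LW vector is taken from the text. The IW, b1, and b2 values are placeholders, since the real values are in the Appendix tables, and the number of inputs is hypothetical.

```python
import numpy as np


def radbas(n):
    """Radial basis transfer function: exp(-n^2)."""
    return np.exp(-np.square(n))


def logsig(n):
    """Log-sigmoid transfer function: 1 / (1 + exp(-n))."""
    return 1.0 / (1.0 + np.exp(-n))


def ann_output(x, iw, b1, lw, b2, transfer):
    """Single-hidden-layer prediction with a linear output layer (assumed)."""
    hidden = transfer(iw @ x + b1)   # hidden-layer activations
    return float(lw @ hidden + b2)   # weighted sum plus output bias


# First LW vector quoted in the text (10 hidden neurons).
LW = np.array([6.4552, -2.1276, 2.7984, 7.8625, 4.7908,
               4.3514, -6.4366, -4.2600, 2.3087, 4.7381])

# Placeholders only: NOT the values from Tables 18 and 19.
n_inputs = 5                          # hypothetical number of inputs
IW = np.zeros((LW.size, n_inputs))    # placeholder input weights
b1 = np.zeros(LW.size)                # placeholder hidden biases
b2 = 0.0                              # placeholder output bias
x = np.ones(n_inputs)                 # placeholder input vector

y_radbas = ann_output(x, IW, b1, LW, b2, radbas)
y_logsig = ann_output(x, IW, b1, LW, b2, logsig)
```

With the tabulated IW, b1, and b2 substituted for the placeholders, evaluating the function reduces to a handful of arithmetic operations, which is what makes the closed-form equation quicker to use than retraining a network.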

7.5 Relative importance

With regard to the relative importance and weight analysis of the neural network, Fig. 11a shows that the most significant factor for accuracy is the tool-end radius, with an RI of 29%, while tool surface roughness (Ra and Rz) has the lowest effect. Fig. 11b shows that the tool-end radius is also the most influential factor for formability, with an RI of 54%. The second most important factors are tool shape for accuracy (RI of 24%) and tool surface roughness (Ra) for formability (RI of 28%). There is thus a striking difference between the RI values of the tool-end radius for accuracy and for formability. In addition, tool material can also be considered a powerful factor in determining accuracy.

In this study, the tools were produced on a turning machine under identical machining conditions. However, as mentioned in Section 2, materials with different hardness values were used for producing the tools. This resulted in differing surface roughness values of the tools, which in turn affected the surface roughness of the final products formed using SPIF. High tool roughness tears the wall of the component and leads to a low forming depth; this depth is taken as the measure of formability, as previously mentioned. Similarly to the present work, Hagan and Jeswiet [69] analyzed the influence of several forming variables, such as step-down size and spindle speed, on surface roughness in an ISF process. They claim that tool hardness and the polished tool surface not only affect depth increment tests but also influence the surface roughness of the final product. Their findings help explain the effect of tool surface roughness identified in the current study, which manifests as changes in formability: differences in the roughness of the forming tools produced differing surface roughness of the products, which in turn caused differences in forming depth, considered an attribute of formability.

8 Conclusion

This study presented different models and structures of ANN for predicting the accuracy and formability of SPIF components produced from thin aluminum alloy blanks. The main goal was to determine the best model and architecture for that purpose. The most significant finding was that the one-output structure showed better results than a network with two outputs. The second significant finding was that Trainscg and Trainbfg as training functions, together with Radbas and Logsig as transfer functions, respectively, achieved the best prediction of accuracy and formability. The results were assessed using different validation metrics; the highest R2 values were 0.9909 and 0.9860, and the lowest MSE values were 0.1503 and 0.0351 for accuracy and formability, respectively. This research marks the first time that the relative importance (RI) method was used to assess the influence of SPIF factors on the outputs. RI revealed that the tool-end radius is an influential factor that affects accuracy and formability with values of 29% and 54%, respectively. Tools made of different materials exhibit different hardness, which causes different surface strains. This also holds true for tool geometry. Notably, two tools with identical geometry but made of different materials will cause different surface strains and will consequently have different effects on components. Differing hardness values result in varying tool tip surface roughness, which in turn affects sheet accuracy and formability. Tool shape and tool material are also influential factors, which affect accuracy by 24% and 21%, respectively. With a value of 28%, tool surface roughness (Ra) is the second most influential factor for formability after the tool-end radius. Finally, tool surface roughness (Ra) and (Rz), with values of 8% and 2%, respectively, were found to be the least influential factors on accuracy and formability.

Data availability

Not applicable.

References

Emmens WC, Sebastiani G, van den Boogaard AH (2010) The technology of incremental sheet forming—a brief review of the history. J Mater Process Technol 210(8):981–997. https://doi.org/10.1016/j.jmatprotec.2010.02.014

Reddy NV, Lingam R (2018) Double sided incremental forming: capabilities and challenges. J Phys Conf Ser 1063:012170. https://doi.org/10.1088/1742-6596/1063/1/012170

Behera AK, de Sousa RA, Ingarao G, Oleksik V (2017) Single point incremental forming: An assessment of the progress and technology trends from 2005 to 2015. J Manuf Process 27:37–62. https://doi.org/10.1016/j.jmapro.2017.03.014

Shrivastava P, Tandon P (2019) Microstructure and texture based analysis of forming behavior and deformation mechanism of AA1050 sheet during Single Point Incremental Forming. J Mater Process Technol 266:292–310. https://doi.org/10.1016/j.jmatprotec.2018.11.012

Li Y, Chen X, Liu Z, Sun J, Li F, Li J, Zhao G (2017) A review on the recent development of incremental sheet-forming process. Int J Adv Manuf Technol 92(5–8):2439–2462. https://doi.org/10.1007/s00170-017-0251-z

Duflou JR, Habraken AM, Cao J, Malhotra R, Bambach M, Adams D, Vanhove H, Mohammadi A, Jeswiet J (2018) Single point incremental forming: state-of-the-art and prospects. Int J Mater Form 11(6):743–773. https://doi.org/10.1007/s12289-017-1387-y

Maqbool F, Bambach M (2019) Experimental and numerical investigation of the influence of process parameters in incremental sheet metal forming on residual stresses. J Manuf Mater Process 3(2):31. https://doi.org/10.3390/jmmp3020031

Cawley B, Adams D, Jeswiet J (2013) Examining tool shapes in single point incremental forming. Trans North Am Manuf Res Inst SME 41(April):114–120

Najm SM, Paniti I (2018) Experimental investigation on the single point incremental forming of AlMn1Mg1 foils using flat end tools. IOP Conf Ser Mater Sci Eng 448:012032. https://doi.org/10.1088/1757-899X/448/1/012032

Ziran X, Gao L, Hussain G, Cui Z (2010) The performance of flat end and hemispherical end tools in single-point incremental forming. Int J Adv Manuf Technol 46(9–12):1113–1118. https://doi.org/10.1007/s00170-009-2179-4

Wu SH, Reis A, Andrade Pires FM, Santos AD, Barata da Rocha A (2012) Study of Tool Trajectory in Incremental Forming. Adv Mater Res 472–475:1586–1591. https://doi.org/10.4028/www.scientific.net/AMR.472-475.1586

Zain AM, Haron H, Sharif S (2010) Prediction of surface roughness in the end milling machining using Artificial Neural Network. Expert Syst Appl 37(2):1755–1768. https://doi.org/10.1016/j.eswa.2009.07.033

Amirjan M, Khorsand H, Siadati MH, Eslami Farsani R (2013) Artificial neural network prediction of Cu–Al2O3 composite properties prepared by powder metallurgy method. J Mater Res Technol 2(4):351–355. https://doi.org/10.1016/j.jmrt.2013.08.001

Ezugwu EO, Fadare DA, Bonney J, Da Silva RB, Sales WF (2005) Modelling the correlation between cutting and process parameters in high-speed machining of Inconel 718 alloy using an artificial neural network. Int J Mach Tools Manuf 45(12–13):1375–1385. https://doi.org/10.1016/j.ijmachtools.2005.02.004

Li E (2013) Reduction of springback by intelligent sampling-based LSSVR metamodel-based optimization. Int J Mater Form 6(1):103–114. https://doi.org/10.1007/s12289-011-1076-1

Marouani H, Aguir H (2012) Identification of material parameters of the Gurson–Tvergaard–Needleman damage law by combined experimental, numerical sheet metal blanking techniques and artificial neural networks approach. Int J Mater Form 5(2):147–155. https://doi.org/10.1007/s12289-011-1035-x

Lela B, Bajić D, Jozić S (2009) Regression analysis, support vector machines, and Bayesian neural network approaches to modeling surface roughness in face milling. Int J Adv Manuf Technol 42(11–12):1082–1088. https://doi.org/10.1007/s00170-008-1678-z

Hussaini SM, Singh SK, Gupta AK (2014) Experimental and numerical investigation of formability for austenitic stainless steel 316 at elevated temperatures. J Mater Res Technol 3(1):17–24. https://doi.org/10.1016/j.jmrt.2013.10.010

Kondayya D, Gopala Krishna A (2013) An integrated evolutionary approach for modelling and optimization of laser beam cutting process. Int J Adv Manuf Technol 65(1–4):259–274. https://doi.org/10.1007/s00170-012-4165-5

Ning J, Nguyen V, Huang Y, Hartwig KT, Liang SY (2018) Inverse determination of Johnson–Cook model constants of ultra-fine-grained titanium based on chip formation model and iterative gradient search. Int J Adv Manuf Technol 99(5–8):1131–1140. https://doi.org/10.1007/s00170-018-2508-6

Ning J, Liang SY (2019) Inverse identification of Johnson-Cook material constants based on modified chip formation model and iterative gradient search using temperature and force measurements. Int J Adv Manuf Technol 102(9–12):2865–2876. https://doi.org/10.1007/s00170-019-03286-0

Gok A (2015) A new approach to minimization of the surface roughness and cutting force via fuzzy TOPSIS, multi-objective grey design and RSA. Measurement 70:100–109. https://doi.org/10.1016/j.measurement.2015.03.037

Zuo K-T, Chen L-P, Zhang Y-Q, Yang J (2006) Manufacturing- and machining-based topology optimization. Int J Adv Manuf Technol 27(5–6):531–536. https://doi.org/10.1007/s00170-004-2210-8

Kurra S, Hifzur Rahman N, Regalla SP, Gupta AK (2015) Modeling and optimization of surface roughness in single point incremental forming process. J Mater Res Technol 4(3):304–313. https://doi.org/10.1016/j.jmrt.2015.01.003

Nasrollahi V, Arezoo B (2012) Prediction of springback in sheet metal components with holes on the bending area, using experiments, finite element and neural networks. Mater Des 36:331–336. https://doi.org/10.1016/j.matdes.2011.11.039

Mekras N (2017) Using artificial neural networks to model aluminium based sheet forming processes and tools details. J Phys Conf Ser 896:012090. https://doi.org/10.1088/1742-6596/896/1/012090

Kashid S, Kumar S (2013) Applications of artificial neural network to sheet metal work—a review. Am J Intell Syst 2(7):168–176. https://doi.org/10.5923/j.ajis.20120207.03

Maji K, Kumar G (2020) Inverse analysis and multi-objective optimization of single-point incremental forming of AA5083 aluminum alloy sheet. Soft Comput 24(6):4505–4521. https://doi.org/10.1007/s00500-019-04211-z

Oraon M, Sharma V (2018) Prediction of surface roughness in single point incremental forming of AA3003-O alloy using artificial neural network. Int J Mater Eng Innov 9(1):1. https://doi.org/10.1504/IJMATEI.2018.092181

Mulay A, Ben BS, Ismail S, Kocanda A (2019) Prediction of average surface roughness and formability in single point incremental forming using artificial neural network. Arch Civ Mech Eng 19(4):1135–1149. https://doi.org/10.1016/j.acme.2019.06.004

Oraon M, Sharma V, Mandal S (2020) Performance measurement in incremental deformation of Brass Cu67Zn33 through soft computing tool. 83–89

Radu C, Cristea I, Herghelegiu E, Tabacu S (2013) Improving the accuracy of parts manufactured by single point incremental forming. Appl Mech Mater 332:443–448. https://doi.org/10.4028/www.scientific.net/AMM.332.443

Behera AK, Afonso D, Murphy A, Jin Y, de Sousa RA (2018) Accuracy analysis of incrementally formed tunnel shaped parts. 40–49

McAnulty T, Jeswiet J, Doolan M (2017) Formability in single point incremental forming: a comparative analysis of the state of the art. CIRP J Manuf Sci Technol 16:43–54. https://doi.org/10.1016/j.cirpj.2016.07.003

Bayram H, Köksal NS (2017) Investigation of the geometrical accuracy and thickness distribution using 3D laser scanning of AA2024-T3 sheets formed by SPIF. Mater Tehnol 51(1):111–116. https://doi.org/10.17222/mit.2015.296

Nama SA, Namer NSM, Najm SM (2014) The effect of using grease on the surface roughness of aluminum 1100 sheet during the single point incremental forming process. J Trends Mach Des 1(1):53–56 [Online]. Available: www.stmjournals.com

Rattanachan K, Chungchoo C (2009) Formability in single point incremental forming of dome geometry. Asian Int J Sci Technol Prod Manuf Eng 2(4):57–63

Nimbalkar DH, Nandedkar VM (2013) Review of Incremental Forming of Sheet Metal Components. Int J Eng Res Appl 3(5):39–51

Gatea S, Lu B, Chen J, Ou H, McCartney G (2019) Investigation of the effect of forming parameters in incremental sheet forming using a micromechanics based damage model. Int J Mater Form 12(4):553–574. https://doi.org/10.1007/s12289-018-1434-3

Kumar A, Gulati V, Kumar P, Singh V, Kumar B, Singh H (2019) Parametric effects on formability of AA2024-O aluminum alloy sheets in single point incremental forming. J Mater Res Technol 8(1):1461–1469. https://doi.org/10.1016/j.jmrt.2018.11.001

Shamsari M, Mirnia MJ, Elyasi M, Baseri H (2018) Formability improvement in single point incremental forming of truncated cone using a two-stage hybrid deformation strategy. Int J Adv Manuf Technol 94(5–8):2357–2368. https://doi.org/10.1007/s00170-017-1031-5

Lu B, Fang Y, Xu DK, Chen J, Ai S, Long H, Ou H, Cao J (2015) Investigation of material deformation mechanism in double side incremental sheet forming. Int J Mach Tools Manuf 93:37–48. https://doi.org/10.1016/j.ijmachtools.2015.03.007

Li Y, Liu Z, Daniel WJT (Bill), Meehan PA (2014) Simulation and experimental observations of effect of different contact interfaces on the incremental sheet forming process. Mater Manuf Process 29(2):121–128. https://doi.org/10.1080/10426914.2013.822977

Lora F, Schaeffer L (2014) Incremental forming process strategy variation analysis through applied strains. Braz J Sci Technol 1(1):5. https://doi.org/10.1186/2196-288X-1-5

Golabi S, Khazaali H (2014) Determining frustum depth of 304 stainless steel plates with various diameters and thicknesses by incremental forming. J Mech Sci Technol 28(8):3273–3278. https://doi.org/10.1007/s12206-014-0738-6

Fiorentino A, Ceretti E, Attanasio A, Mazzoni L, Giardini C (2009) Analysis of forces, accuracy and formability in positive die sheet incremental forming. Int J Mater Form 2(S1):805–808. https://doi.org/10.1007/s12289-009-0467-z

Lu HB, Le Li Y, Liu ZB, Liu S, Meehan PA (2014) Study on step depth for part accuracy improvement in incremental sheet forming process. Adv Mater Res 939:274–280. https://doi.org/10.4028/www.scientific.net/AMR.939.274

Mulay A, Ben BS, Ismail S, Kocanda A, Jasiński C (2018) Performance evaluation of high-speed incremental sheet forming technology for AA5754 H22 aluminum and DC04 steel sheets. Arch Civ Mech Eng 18(4):1275–1287. https://doi.org/10.1016/j.acme.2018.03.004

Hagan MT, Demuth HB, Beale MH, De Jesús O (2014) Neural Network Design. Martin Hagan

Nabipour M, Keshavarz P (2017) Modeling surface tension of pure refrigerants using feed-forward back-propagation neural networks. Int J Refrig 75:217–227. https://doi.org/10.1016/j.ijrefrig.2016.12.011

Beale MH, Hagan MT, Demuth HB (2013) Neural Network Toolbox User’s Guide R2013b. MathWorks Inc

Riedmiller PM Machine Learning: Multi Layer Perceptrons. Albert-Ludwigs-University Freiburg, AG Maschinelles Lernen [Online]. Available: http://ml.informatik.uni-freiburg.de/_media/documents/teaching/ss09/ml/mlps.pdf

Principe J, Euliano NR, Lefebvre WC (1997) Neural and adaptive systems: fundamentals through simulation: multilayer perceptrons. Neural Adapt Syst Fundam Through Simulation:1–108. https://doi.org/10.1002/ejoc.201200111

Beale MH, Hagan M, Demuth H (2019) Deep learning toolbox getting started guide. Deep Learn Toolbox. https://doi.org/10.1016/j.neunet.2005.10.002

Müller AC, Guido S (2016) Introduction to Machine Learning with Python. O’Reilly Media

Shahin M, Maier HR, Jaksa MB (2000) Evolutionary data division methods for developing artificial neural network models in geotechnical engineering

Zhang G, Eddy Patuwo B, Hu MY (1998) Forecasting with artificial neural networks: The state of the art. Int J Forecast 14(1):35–62 [Online]. Available: https://econpapers.repec.org/RePEc:eee:intfor:v:14:y:1998:i:1:p:35-62

Bishop CM (1995) Neural Networks for Pattern Recognition. Oxford University Press, Inc., Oxford

Beale MH, Hagan MT, Demuth HB (2020) Deep Learning Toolbox User’s Guide. MathWorks

Misra S, He J (2020) Stacked neural network architecture to model the multifrequency conductivity/permittivity responses of subsurface shale formations. In: Machine Learning for Subsurface Characterization. Elsevier, pp 103–127

Garson GD (1991) Interpreting Neural-Network Connection Weights. AI Expert 6(4):46–51

Zarei MJ, Ansari HR, Keshavarz P, Zerafat MM (2020) Prediction of pool boiling heat transfer coefficient for various nano-refrigerants utilizing artificial neural networks. J Therm Anal Calorim 139(6):3757–3768. https://doi.org/10.1007/s10973-019-08746-z

Ding H, Luo W, Yu Y, Chen B (2019) Construction of a robust cofactor self-sufficient bienzyme biocatalytic system for dye decolorization and its mathematical modeling. Int J Mol Sci 20(23):6104. https://doi.org/10.3390/ijms20236104

Zhou B et al (2015) Relative importance analysis of a refined multi-parameter phosphorus index employed in a strongly agriculturally influenced watershed. Water Air Soil Pollut 226(3):25. https://doi.org/10.1007/s11270-014-2218-0

Shabanzadeh P, Yusof R, Shameli K (2015) Artificial neural network for modeling the size of silver nanoparticles’ prepared in montmorillonite/starch bionanocomposites. J Ind Eng Chem 24:42–50. https://doi.org/10.1016/j.jiec.2014.09.007

Vatankhah E, Semnani D, Prabhakaran MP, Tadayon M, Razavi S, Ramakrishna S (2014) Artificial neural network for modeling the elastic modulus of electrospun polycaprolactone/gelatin scaffolds. Acta Biomater 10(2):709–721. https://doi.org/10.1016/j.actbio.2013.09.015

Rezakazemi M, Razavi S, Mohammadi T, Nazari AG (2011) Simulation and determination of optimum conditions of pervaporative dehydration of isopropanol process using synthesized PVA–APTEOS/TEOS nanocomposite membranes by means of expert systems. J Membr Sci 379(1–2):224–232. https://doi.org/10.1016/j.memsci.2011.05.070

Goh ATC (1995) Back-propagation neural networks for modeling complex systems. Artif Intell Eng 9(3):143–151. https://doi.org/10.1016/0954-1810(94)00011-S

Hagan E, Jeswiet J (2004) Analysis of surface roughness for parts formed by computer numerical controlled incremental forming. Proc Inst Mech Eng B J Eng Manuf 218(10):1307–1312. https://doi.org/10.1243/0954405042323559

Funding

Open access funding provided by Budapest University of Technology and Economics. The research reported in this paper and carried out at Budapest University of Technology and Economics has been supported by the NRDI Fund (TKP2020 NC, Grant No. BME-NC) based on the charter of bolster issued by the NRDI Office under the auspices of the Hungarian Ministry for Innovation and Technology and by the European Commission through the H2020 project EPIC (https://www.centre-epic.eu/) under grant No. 739592. Furthermore, this research has been supported by Hungary’s TEMPUS Public Foundation and Stipendium Hungaricum Scholarship.

Author information

Contributions

Sherwan Mohammed Najm carried out the experiments, model building, data analysis, and interpretation, and played the lead role in drafting the paper. Imre Paniti provided the concept of the work and carried out supervision, including the critical revision of the paper.

Ethics declarations

Ethical approval and consent to participate

Not applicable.

Consent for publication

Not applicable.

Conflicts of interest

The authors declare no conflict of interest.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Najm, S.M., Paniti, I. Artificial neural network for modeling and investigating the effects of forming tool characteristics on the accuracy and formability of thin aluminum alloy blanks when using SPIF. Int J Adv Manuf Technol 114, 2591–2615 (2021). https://doi.org/10.1007/s00170-021-06712-4

DOI: https://doi.org/10.1007/s00170-021-06712-4