Abstract

We present a simple iterative method for solving quasimonotone as well as classical variational inequalities without monotonicity. A strong convergence analysis is given under mild conditions, thereby generalizing the few existing results, which establish only weak convergence under restrictive assumptions. We give finite- and infinite-dimensional numerical examples to illustrate the simplicity and computational advantages of the proposed scheme and to compare it with existing methods.


1 Introduction

Let C be a nonempty, closed and convex subset of a real Hilbert space H, and let \(A:H\rightarrow H\) be a single-valued mapping. Let \(\langle \cdot , \cdot \rangle \) and \(||\cdot ||\) denote the inner product and induced norm on H, respectively. The classical variational inequality (VI) problem, introduced independently by Fichera (1963) and Stampacchia (1968), is to find a point \(x^*\in C\) such that

$$\begin{aligned} \langle Ax^*, x-x^* \rangle \ge 0, \quad \forall x\in C. \end{aligned}$$(1.1)

We denote the solution set of VI (1.1) by \(V_I,\) and we denote the trivial solution set and non-trivial solution set of the VI (1.1) by \(V_T\) and \(V_N,\) respectively, that is,

Over the years, variational inequality theory has proven to be a major area of research in mathematical analysis, and has drawn the attention of several researchers due to its wide range of applications in diverse fields, such as optimal control, game theory, signal processing, linear programming and image recovery (see, for instance, Chen et al. 2022; Godwin et al. 2023a, c; Iiduka 2012; Kinderlehrer and Stampacchia 1980; Ogwo et al. 2022a; Wickramasinghe et al. 2023). The VI is also known to generalize several other problems in nonlinear analysis, such as fixed point problems, Nash equilibria and complementarity problems (see Alakoya et al. 2023; Liu et al. 2016; Taiwo et al. 2021 for details). For instance, if \(f:C\rightarrow \mathbb {R}\) is a convex function and \(A(x)=\nabla f(x)\), then the VI (1.1) becomes the minimization problem (see Yin et al. 2022)

$$\begin{aligned} \min _{x\in C} f(x). \end{aligned}$$(1.2)

In solving VIs, there are two generally known approaches, namely, the regularised method (RM) and the projection method (PM). Our focus in this work is the projection method. Several works have been done on PMs over the years (see Gibali et al. 2017; Kraikaew and Saejung 2014; Maingé 2008). The simplest known projection method is the gradient method (GM), which requires only one projection onto the feasible set C. However, this method has a major drawback: the stringent “strong monotonicity” or “inverse strong monotonicity” condition imposed on the cost operator (see Xiu and Zhang 2003). To overcome this setback, Korpelevich (1976) and Antipin (1976) proposed the extragradient method (EGM), which only requires the cost operator to be monotone. Although the EGM is an improvement over the earlier GM, it requires computing two projections onto the feasible set C per iteration, which is a major barrier to implementing the EGM. To overcome this bottleneck, several researchers have improved on the EGM by proposing new iterative methods that require only one projection onto the feasible set per iteration and avoid the stringent conditions of the GM. These new methods have proven to be more efficient and easier to implement than the EGM. One of these improved methods is the subgradient extragradient method (SEGM), also known as the modified extragradient method (MEGM), which was introduced by Censor et al. (2011). In the SEGM, the second projection onto the feasible set C is replaced by a projection onto a half-space, which can be easily calculated using an explicit formula. Another of these methods is Tseng’s extragradient method (TEGM), also known as the forward-backward-forward algorithm, which was proposed by Tseng (2000). A further improved method over the EGM is the projection and contraction method (PCM) of He (1997). Like the other two methods, the PCM also requires only one projection onto the feasible set per iteration, and is presented as follows.

$$\begin{aligned} {\left\{ \begin{array}{ll} y_n=P_C(w_n-\gamma Aw_n),\\ d(w_n,y_n)=(w_n-y_n)-\gamma (Aw_n-Ay_n),\\ w_{n+1}=w_n-\mu \beta _n d(w_n,y_n), \end{array}\right. } \end{aligned}$$(1.3)

where \(P_C\) is the projection onto the feasible set C, \(\mu \in (0,2), ~\gamma \in (0,\frac{1}{L}),\) and \(\beta _n:=\frac{\alpha (w_n,y_n)}{\Vert d(w_n,y_n)\Vert ^2}, ~~ \alpha (w_n,y_n):=\langle w_n-y_n, d(w_n,y_n) \rangle , \quad \forall n\ge 0,\) and L is the Lipschitz constant of the cost operator. Over the years and in recent times, researchers have developed new variants of the PCM and have obtained fascinating results, (see Cholamjiak et al. 2020; Gibali et al. 2020).

We note that the PCM (1.3) depends on the Lipschitz constant (L) of the cost operator, which is a significant drawback, due to the difficulty in computing the value of L. One of our goals in this work is to overcome the above drawback of the PCM.
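
To make the dependence on L concrete, the following is a minimal Python sketch of a classical projection and contraction iteration with a fixed step \(\gamma \in (0,\frac{1}{L})\), using the quantities \(d(w_n,y_n)\), \(\alpha (w_n,y_n)\) and \(\beta _n\) described above. The test operator \(A(x)=x+2\) on \(C=[-1,1]\) (so that \(L=1\) and the unique solution is \(-1\)), the function names and the tolerance are illustrative assumptions, not taken from the source.

```python
def project_interval(x, lo=-1.0, hi=1.0):
    """Metric projection of a real number onto the interval [lo, hi]."""
    return min(max(x, lo), hi)


def pcm(A, w0, gamma, mu=1.5, iters=200, tol=1e-12):
    """Projection and contraction sketch with a FIXED step gamma in (0, 1/L).

    The caller must know (a bound on) the Lipschitz constant L to pick gamma,
    which is exactly the drawback discussed in the text.
    """
    w = w0
    for _ in range(iters):
        y = project_interval(w - gamma * A(w))       # single projection onto C
        if abs(w - y) < tol:                         # w is (numerically) a solution
            break
        d = (w - y) - gamma * (A(w) - A(y))          # d(w_n, y_n)
        beta = (w - y) * d / (d * d)                 # alpha(w_n, y_n) / ||d||^2
        w = w - mu * beta * d                        # contraction step, mu in (0, 2)
    return w


# Hypothetical test problem: A(x) = x + 2 is 1-Lipschitz and positive on C = [-1, 1].
x_star = pcm(lambda x: x + 2.0, w0=0.5, gamma=0.5)
```

Note that the iterates \(w_n\) of this sketch are not forced to stay in C; only \(y_n\) is projected.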

Now, we define the dual variational inequality (DVI) problem of (1.1) as finding a point \(x^*\in C\) such that

$$\begin{aligned} \langle Ax, x-x^* \rangle \ge 0, \quad \forall x\in C. \end{aligned}$$(1.4)

We denote the solution set of the DVI (1.4) by \(V_D.\)

Remark 1.1

We note the following relationships between the VI (1.1) and the DVI (1.4) (see Yin et al. 2022; Cottle and Yao 1992; Ye and He 2015).

i. If A is continuous and C is convex, then \(V_D \subseteq V_I.\)

ii. If A is pseudomonotone and continuous, then \(V_I=V_D.\)

iii. If A is quasimonotone and continuous, then the inclusion \(V_I\subseteq V_D\) need not hold, but \(V_N\subseteq V_D.\)

iv. The condition \(V_I\subseteq V_D\) is a direct consequence of the pseudomonotonicity of A, that is, for any

$$\begin{aligned} x^* \in V_I, ~~ ~~ \langle Ax, x-x^* \rangle \ge 0, \quad \quad \forall x\in C. \end{aligned}$$(1.5)

Most of the results obtained by researchers over the years have been based on conditions (i) and (ii) of Remark 1.1 (see Thong and Hieu 2018; Thong et al. 2021). In this study, we are concerned with solving the VI (1.1) for the case when A is quasimonotone, i.e., when \(V_I\nsubseteq V_D\) (see Panyanak et al. 2023; Wang et al. 2023).

Recently, Liu and Yang (2020) proposed a new self-adaptive method for solving variational inequalities with a quasimonotone operator (or without monotonicity). Their algorithm is presented as follows.

Algorithm 1.2

Step 0. Take \(\gamma _0>0, ~~ x_0\in H, ~~ 0<\sigma <1.\) Choose a nonnegative real sequence \(\{\theta _n\}\) such that \(\sum _{n=0}^{\infty }\theta _n<+\infty .\)

Step 1. Given the current iterate \(x_n,\) compute

$$\begin{aligned} y_n=P_C(x_n-\gamma _n Ax_n). \end{aligned}$$

If \(x_n=y_n\) (or \(Ay_n=0\)), then stop: \(y_n\) is a solution. Otherwise, go to Step 2.

Step 2. Compute

$$\begin{aligned} x_{n+1} = y_n + \gamma _n(Ax_n - Ay_n), \end{aligned}$$

and

$$\begin{aligned} \gamma _{n+1}={\left\{ \begin{array}{ll} \min \{\frac{\sigma \Vert x_n-y_n\Vert }{\Vert Ax_n-Ay_n\Vert }, \gamma _n + \theta _n\} &{}\text {if}~~ Ax_n-Ay_n\ne 0,\\ \gamma _n +\theta _n, &{} \text {otherwise}. \end{array}\right. } \end{aligned}$$
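
The steps of Algorithm 1.2 can be sketched in a few lines of Python. This is only an illustration on the real line: the feasible set \(C=[-1,1]\), the test operator \(A(x)=x+2\), the choice \(\theta _n=1/(n+1)^2\) and all function names are assumptions made for the example, and the stopping test uses a small tolerance instead of exact equality.

```python
def project_interval(x, lo=-1.0, hi=1.0):
    """Metric projection of a real number onto the interval [lo, hi]."""
    return min(max(x, lo), hi)


def self_adaptive_fbf(A, x0, gamma0=0.9, sigma=0.5, iters=500, tol=1e-12):
    """Sketch of Steps 0-2 of Algorithm 1.2 with a non-monotone step size."""
    x, gamma = x0, gamma0
    for n in range(iters):
        theta = 1.0 / (n + 1) ** 2              # assumed summable sequence theta_n
        Ax = A(x)
        y = project_interval(x - gamma * Ax)    # Step 1
        Ay = A(y)
        if abs(x - y) < tol or Ay == 0.0:       # stopping rule of Step 1
            return y
        x_next = y + gamma * (Ax - Ay)          # Step 2
        diff = abs(Ax - Ay)
        if diff > 0.0:                          # step size update; no knowledge of L needed
            gamma = min(sigma * abs(x - y) / diff, gamma + theta)
        else:
            gamma = gamma + theta
        x = x_next
    return x


# Hypothetical test problem with unique solution -1 on C = [-1, 1].
sol = self_adaptive_fbf(lambda x: x + 2.0, x0=0.5)
```

The point of the update rule is visible in the code: the step size is computed from quantities already available in the iteration, so no Lipschitz constant has to be supplied.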

The authors obtained a weak convergence result under the following assumptions.

Assumption 1.3

C1: \(V_D\ne \emptyset .\)

C2: The mapping A is quasimonotone on H.

C2’: If \(x_n\rightharpoonup x^*\) and \(\limsup _{n\rightarrow \infty } \langle Ax_n,x_n \rangle \le \langle Ax^*, x^* \rangle ,\) then \(\lim _{n\rightarrow \infty }\langle Ax_n,x_n \rangle = \langle Ax^*, x^* \rangle .\)

C3: The mapping A is Lipschitz-continuous with constant \(L>0.\)

C4: The mapping A is sequentially weakly continuous on C, i.e., for each sequence \(\{x_n\}\subset C, ~~ x_n\rightharpoonup x^*\) implies that \(Ax_n\rightharpoonup Ax^*.\)

C5: The set \(A=\{d\in C: Ad=0\}\setminus V_D\) is a finite set.

C5’: The set \(B=V_I\setminus V_D\) is a finite set.

Remark 1.4

We note that conditions \(C4-C5'\) are quite restrictive. One of our goals in this study is to relax these conditions. It is known that

So, it is clear that the condition \(V_D\ne \emptyset \) is more relaxed than the condition (1.5) of Remark 1.1 (iv). Hence, \(V_I\ne \emptyset \) together with the pseudomonotonicity property implies that \(V_D\ne \emptyset ,\) but the converse is not true. (For more details on the condition \(V_D\ne \emptyset ,\) see Lemma 2.7).

Yin et al. (2022) proposed the following iterative algorithm for approximating a common solution of a fixed point problem for an operator T and a quasimonotone variational inequality.

Algorithm 1.5

Step 0. Let \(x_0\in H\) be an initial guess. We set \(n=0.\)

Step 1. Let the nth iterate \(x_n\) be given. We compute

$$\begin{aligned} {\left\{ \begin{array}{ll} \hat{w}_n=(1-\rho _n)x_n+\rho _nT(x_n),\\ w_n=(1-\eta _n)x_n+\eta _nT(\hat{w}_n). \end{array}\right. } \end{aligned}$$

Step 2. Let the nth stepsize \(\gamma _n\) be known. We compute

$$\begin{aligned} y_n=P_C(w_n-\gamma _n Aw_n), \end{aligned}$$

and

$$\begin{aligned} x_{n+1}=(1-\beta _n)w_n + \beta _ny_n+\beta _n\gamma _n(Aw_n-Ay_n). \end{aligned}$$

Step 3. We update the \((n+1)th\) stepsize as follows:

$$\begin{aligned} \gamma _{n+1}={\left\{ \begin{array}{ll} \min \{\gamma _n, \frac{\sigma \Vert w_n-y_n\Vert }{\Vert Aw_n-Ay_n\Vert }\} &{}\text {if}~~ Aw_n-Ay_n\ne 0,\\ \gamma _n, &{} \text {otherwise}. \end{array}\right. } \end{aligned}$$

Set \(n:=n+1\) and return to Step 1.

Here, T is a Lipschitz continuous pseudocontractive mapping. Moreover, Yin et al. (2022) obtained only a weak convergence result under certain conditions (which include condition C5 of Assumption 1.3).

Yin and Hussain (2022) proposed a forward-backward-forward algorithm for solving quasimonotone variational inequalities as follows.

Algorithm 1.6

Step 0. Let \(\gamma _0>0\) and \(\sigma \in (0,1).\) Select the starting point \(x_0\in H\) and the sequence of relaxation parameters \(\{\beta _n\}_{n\ge 0}\subset (0,1]\) satisfying \(\liminf _{n\rightarrow \infty }\beta _n>0.\) Set \(n=0.\)

Step 1. Let \(x_n\) and \(\gamma _n\) be given. Compute

$$\begin{aligned} y_n=P_C(x_n-\gamma _n Ax_n). \end{aligned}$$

If \(x_n=y_n,\) then stop.

Step 2. Compute

$$\begin{aligned} x_{n+1} = \beta _n(y_n + \gamma _n(Ax_n - Ay_n))+(1-\beta _n)x_n, \end{aligned}$$

and

$$\begin{aligned} \gamma _{n+1}={\left\{ \begin{array}{ll} \min \{\frac{\sigma \Vert x_n-y_n\Vert }{\Vert Ax_n-Ay_n\Vert }, \gamma _n\} ~~ \text {if}~~ Ax_n-Ay_n\ne 0,\\ \gamma _n, \quad \quad \text {otherwise}. \end{array}\right. } \end{aligned}$$

Set \(n:=n+1\) and go to Step 1.

Under certain conditions, including condition C5 of Assumption 1.3, Yin and Hussain (2022) established weak convergence of Algorithm 1.6. Recently, Izuchukwu et al. (2022) also proposed an iterative method for solving quasimonotone variational inequality problems, again obtaining only weak convergence under certain conditions, including C5.

Over the years, researchers have made efforts to speed up the rate of convergence of algorithms. Polyak (1964) introduced the inertial scheme, a two-step iteration which has been shown to be a very efficient technique for improving the convergence rate of iterative methods. In recent times, fascinating works have been done using the inertial technique (see Alakoya and Mewomo 2023; Alakoya et al. 2022; Ogwo et al. 2022b; Taiwo et al. 2021; Uzor et al. 2022a; Wickramasinghe et al. 2023).

Following the above, a natural question arises, and answering it is our foremost goal in this paper.

Is it possible to establish a strongly convergent method for solving quasimonotone VIs (and VIs without monotonicity) such that conditions C4–C5’ of Assumption 1.3 are dispensed with?

By answering this question in the affirmative, we improve on the works of Yin et al. (2022), Liu and Yang (2020), Yin and Hussain (2022) and Izuchukwu et al. (2022) by obtaining a strongly convergent method that dispenses with the stringent conditions C4–C5’ of Assumption 1.3.

The outline of the paper is as follows. In Sect. 2, we state some existing lemmas and relevant definitions that will be useful in establishing our results. In Sect. 3, we present our proposed algorithm and its convergence analysis. In Sect. 4, we present some numerical experiments to showcase the performance of our method in comparison with some other methods in the literature. Finally, in Sect. 5, we give a brief summary of our results.

2 Preliminaries

Here, we state relevant definitions and lemmas which will be employed ahead in our convergence analysis.

Recall that H is a real Hilbert space and C is a nonempty, closed and convex subset of H. Throughout this paper, we denote the weak and strong convergence of a sequence \(\{x_n\}\) to a point \(x^* \in H\) by \(x_n \rightharpoonup x^*\) and \(x_n \rightarrow x^*\), respectively. Let \(w_\omega (x_n)\) denote the set of weak limit points of \(\{x_n\},\) defined by

$$\begin{aligned} w_\omega (x_n):=\{x^*\in H: x_{n_k}\rightharpoonup x^* ~\text {for some subsequence}~ \{x_{n_k}\} ~\text {of}~ \{x_n\}\}. \end{aligned}$$

The metric projection denoted by \(P_C:H\rightarrow C\) is defined for each \(x\in H,\) as the unique element \(P_Cx\in C\) such that

$$\begin{aligned} \Vert x-P_Cx\Vert =\inf \{\Vert x-y\Vert : y\in C\}. \end{aligned}$$

It is well known that \(P_C\) is firmly nonexpansive (see Alakoya et al. 2022; Uzor et al. 2022b and Lemma 2.2 for more properties of \(P_C\)).

Lemma 2.1

Uzor et al. (2022a) Let H be a real Hilbert space. Then for all \(x,y\in H\) and \(\kappa \in \mathbb {R},\) the following results hold.

(i) \(\Vert x + y\Vert ^2 \le \Vert x\Vert ^2 + 2\langle y, x + y \rangle ;\)

(ii) \(\Vert x + y\Vert ^2 = \Vert x\Vert ^2 + 2\langle x, y \rangle + \Vert y\Vert ^2;\)

(iii) \(\Vert \kappa x + (1-\kappa ) y\Vert ^2 = \kappa \Vert x\Vert ^2 + (1-\kappa )\Vert y\Vert ^2 -\kappa (1-\kappa )\Vert x-y\Vert ^2.\)

Lemma 2.2

Takahashi (2009); Kopecká and Reich (2012) Let C be a nonempty, closed and convex subset of a real Hilbert space H, and let I be the identity map on H. Then for any \(x\in H\) and \(y,z\in C\), the following results hold.

(i) \(z = P_Cx \Longleftrightarrow \langle x - z, z - y\rangle \ge 0.\)

(ii) \(\Vert y-P_Cx\Vert ^2+\Vert x-P_Cx\Vert ^2 \le \Vert x-y\Vert ^2.\)

(iii) \(\langle x-y,P_Cx-P_Cy \rangle \ge \Vert P_Cx-P_Cy\Vert ^2.\)

(iv) \(\langle (I-P_C)x-(I-P_C)y, x-y\rangle \ge \Vert (I-P_C)x-(I-P_C)y\Vert ^2.\)
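
The four properties of Lemma 2.2 are easy to test numerically when the projection has a closed form. The sketch below uses the box \(C=[-1,1]^3\), for which \(P_C\) is componentwise clipping; the box, the sampling ranges, the tolerance and the helper names are assumptions chosen for illustration. Properties (iii) and (iv) are checked for arbitrary points of H, which includes the case stated in the lemma. A passing run is a sanity check, not a proof.

```python
import random


def project_box(x, lo=-1.0, hi=1.0):
    """Metric projection onto the box [lo, hi]^d (componentwise clipping)."""
    return [min(max(t, lo), hi) for t in x]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def sub(u, v):
    return [a - b for a, b in zip(u, v)]


def check_projection_properties(trials=1000, dim=3, seed=0, eps=1e-9):
    """Return True if Lemma 2.2 (i)-(iv) hold on all random samples."""
    rng = random.Random(seed)
    for _ in range(trials):
        x = [rng.uniform(-3, 3) for _ in range(dim)]
        u = [rng.uniform(-3, 3) for _ in range(dim)]
        px, pu = project_box(x), project_box(u)
        y = pu                                    # an arbitrary point of C
        # (i)  <x - P_C x, P_C x - y> >= 0 for y in C
        if dot(sub(x, px), sub(px, y)) < -eps:
            return False
        # (ii) ||y - P_C x||^2 + ||x - P_C x||^2 <= ||x - y||^2 for y in C
        lhs = dot(sub(y, px), sub(y, px)) + dot(sub(x, px), sub(x, px))
        if lhs > dot(sub(x, y), sub(x, y)) + eps:
            return False
        # (iii) firm nonexpansiveness of P_C
        if dot(sub(x, u), sub(px, pu)) < dot(sub(px, pu), sub(px, pu)) - eps:
            return False
        # (iv) firm nonexpansiveness of I - P_C
        r = sub(sub(x, px), sub(u, pu))
        if dot(r, sub(x, u)) < dot(r, r) - eps:
            return False
    return True


all_ok = check_projection_properties()
```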

Definition 2.3

Godwin et al. (2023b) Let H be a real Hilbert space. A mapping \(A: H\rightarrow H\) is said to be

(1) L-Lipschitz continuous, where \(L>0,\) if

$$\begin{aligned} \Vert Ax - Ay\Vert \le L\Vert x-y\Vert ,\quad \forall ~~x,y\in H. \end{aligned}$$

If \(L\in [0,1),\) then A is said to be a contraction;

(2) nonexpansive, if A is 1-Lipschitz continuous;

(3) monotone, if

$$\begin{aligned} \langle Ax - Ay, x-y\rangle \ge 0,\quad \forall ~~x,y\in H; \end{aligned}$$

(4) pseudomonotone, if

$$\begin{aligned} \langle Ay, x-y \rangle \ge 0 \Rightarrow \langle Ax, x-y \rangle \ge 0,\quad \forall x,y\in H; \end{aligned}$$

(5) quasimonotone, if

$$\begin{aligned} \langle Ay, x-y \rangle > 0 \Rightarrow \langle Ax, x-y \rangle \ge 0,\quad \forall x,y\in H. \end{aligned}$$

We observe that (3)\(\implies \) (4)\(\implies \) (5), but the converse implications are not generally true. Hence, the class of quasimonotone mappings is more general than the classes of monotone and pseudomonotone mappings (see Izuchukwu et al. 2022).
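
A one-dimensional example shows that the inclusions are strict. The sketch below tests definitions (3)–(5) for \(A(x)=x^2\) on a small integer grid; the operator, the grid and the function names are illustrative assumptions. The grid test can only refute a property (for instance, \(y=0,\ x=-1\) violates pseudomonotonicity); for quasimonotonicity it gives supporting evidence, and the property itself follows because \(y^2(x-y)>0\) forces \(x>y\).

```python
from itertools import product


def A(x):
    """Illustrative operator on the real line (an assumption for this example)."""
    return x * x


GRID = range(-5, 6)  # integer sample points, so all comparisons are exact


def is_monotone(A, pts):
    return all((A(x) - A(y)) * (x - y) >= 0 for x, y in product(pts, repeat=2))


def is_pseudomonotone(A, pts):
    # <Ay, x - y> >= 0  must imply  <Ax, x - y> >= 0
    return all(A(x) * (x - y) >= 0
               for x, y in product(pts, repeat=2) if A(y) * (x - y) >= 0)


def is_quasimonotone(A, pts):
    # <Ay, x - y> > 0  must imply  <Ax, x - y> >= 0
    return all(A(x) * (x - y) >= 0
               for x, y in product(pts, repeat=2) if A(y) * (x - y) > 0)


results = (is_monotone(A, GRID), is_pseudomonotone(A, GRID), is_quasimonotone(A, GRID))
```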

Lemma 2.4

Maingé (2007) Let \(\{a_n\}, \{c_n\}\subset \mathbb {R_+}, \{\sigma _n\}\subset (0,1)\) and \(\{b_n\}\subset \mathbb {R}\) be sequences such that

$$\begin{aligned} a_{n+1}\le (1-\sigma _n)a_n+b_n+c_n, \quad \forall n\ge 0. \end{aligned}$$

Assume \(\sum _{n=0}^{\infty }|c_n|<\infty .\) Then the following results hold.

(1) If \(b_n\le \beta \sigma _n\) for some \(\beta \ge 0,\) then \(\{a_n\}\) is a bounded sequence.

(2) If we have

$$\begin{aligned} \sum _{n=0}^\infty \sigma _n = \infty ~~ \text {and}~~ \limsup _{n\rightarrow \infty }\frac{b_n}{\sigma _n}\le 0, \end{aligned}$$

then \(\lim _{n\rightarrow \infty }a_n =0.\)

Lemma 2.5

Saejung and Yotkaew (2012) Let \(\{a_n\}\) be a sequence of non-negative real numbers, \(\{\alpha _n\}\) be a sequence in (0, 1) with \(\sum _{n=1}^\infty \alpha _n = \infty \) and \(\{b_n\}\) be a sequence of real numbers. Assume that

$$\begin{aligned} a_{n+1}\le (1-\alpha _n)a_n+\alpha _nb_n, \quad \forall n\ge 1. \end{aligned}$$

If \(\limsup _{k\rightarrow \infty }b_{n_k}\le 0\) for every subsequence \(\{a_{n_k}\}\) of \(\{a_n\}\) satisfying \(\liminf _{k\rightarrow \infty }(a_{n_{k+1}} - a_{n_k})\ge 0,\) then \(\lim _{n\rightarrow \infty }a_n =0.\)

Lemma 2.6

Tan and Xu (1993) Suppose \(\{\lambda _n\}\) and \(\{\phi _n\}\) are two nonnegative real sequences such that

$$\begin{aligned} \lambda _{n+1}\le \lambda _n+\phi _n, \quad \forall n\ge 1. \end{aligned}$$

If \(\sum _{n=1}^{\infty }\phi _n<+\infty ,\) then \(\lim \limits _{n\rightarrow \infty }\lambda _n\) exists.

Lemma 2.7

Ye and He (2015) If any one of the following conditions holds:

(i) A is pseudomonotone on C and \(V_I\ne \emptyset ;\)

(ii) A is the gradient of G, where G is a differentiable quasiconvex function on an open set \(K\supset C\) that attains its global minimum on C;

(iii) A is quasimonotone on C, \(A\ne 0\) on C and C is bounded;

(iv) A is quasimonotone on C, \(A\ne 0\) on C and there exists a positive number r such that, for every \(y\in C\) with \(\Vert y\Vert \ge r,\) there exists \(z\in C\) such that \(\Vert z\Vert \le r\) and \(\langle Ay, z-y \rangle \le 0;\)

(v) A is quasimonotone on C, \(\text {int}C\ne \emptyset \) and there exists \(x^*\in V_I\) such that \(Ax^*\ne 0,\)

then \(V_D\) is nonempty.

3 Main result

In this section, we present a new Mann-type projection and contraction method (MTPCM) for solving the quasimonotone variational inequality problem. We assume the following conditions for the convergence analysis of the proposed algorithm.

Assumption A

(A1) \(V_D\ne \emptyset .\)

(A2) The mapping \(A:H\rightarrow H\) is L-Lipschitz continuous.

(A3) The mapping \(A:H\rightarrow H\) satisfies the following property: whenever \(\{x_n\}\subset C\) and \(x_n\rightharpoonup d,\) we have \(\Vert Ad\Vert \le \liminf \limits _{n\rightarrow \infty }\Vert Ax_n\Vert .\)

(A4) The mapping \(A:H\rightarrow H\) is quasimonotone.

(A3’) A is sequentially weakly continuous.

(A4’) If \(x_n\rightharpoonup x^*\) and \(\limsup _{n\rightarrow \infty }\langle Ax_n,x_n \rangle \le \langle Ax^*,x^* \rangle ,\) then \(\lim _{n\rightarrow \infty }\langle Ax_n,x_n \rangle = \langle Ax^*,x^* \rangle .\)

Assumption B

(B1) Let \( \{\alpha _n\} \subset (0,1)\) be such that \(\lim _{n\rightarrow \infty }(1-\alpha _n)=0\) and \(\sum _{n=1}^\infty (1-\alpha _n) = +\infty ,\) and let \(\{\beta _n\} \subset [a,b]\subset (0,1).\)

(B2) Let \(\delta >0,\) let \(\{\epsilon _n\}\) be a positive sequence such that \(\lim _{n\rightarrow \infty }\frac{\epsilon _n}{1-\alpha _n}=0,\) and let \(l\in (0,2)\) and \(\sigma \in (0,1).\)

(B3) Let \(\gamma _0>0,\) and let \(\{\theta _n\}\) be a nonnegative sequence such that \(\sum _{n=1}^\infty \theta _n<+\infty .\)

We present our algorithm as follows:

Algorithm 3.1

Step 0. Let \(x_0, x_1\in H\) be two arbitrary initial points and set \(n=1.\)

Step 1. Given the \((n-1)th\) and nth iterates, choose \(\delta _n\) such that \(0\le \delta _n\le \hat{\delta }_n\) with \(\hat{\delta }_n\) defined by

$$\begin{aligned} \hat{\delta }_n = {\left\{ \begin{array}{ll} \min \Big \{\delta ,~ \frac{\epsilon _n}{\Vert x_n - x_{n-1}\Vert }\Big \}, \quad \text {if}~ x_n \ne x_{n-1},\\ \delta , \hspace{95pt} \text {otherwise.} \end{array}\right. } \end{aligned}$$(3.1)

Step 2. Compute

$$\begin{aligned}&w_n = x_n + \delta _n(x_n - x_{n-1}); \nonumber \\&y_n=P_C(w_n-\gamma _n Aw_n), \end{aligned}$$(3.2)

If \(y_n=w_n\) (or \(Ay_n=0\)), then stop: \(y_n\) is a solution. Otherwise, go to Step 3.

Step 3. Compute

$$\begin{aligned}&z_n = w_n-l\tau _nd_n, \nonumber \\&\text {where}\quad d_n:= w_n-y_n-\gamma _n(Aw_n-Ay_n), \quad \text {and} \nonumber \\&\tau _n= {\left\{ \begin{array}{ll} \frac{\langle w_n-y_n, d_n \rangle }{\Vert d_n\Vert ^2}, &{} \text {if}~~ d_n\ne 0, \\ 0, &{} \text {otherwise,} \end{array}\right. } \end{aligned}$$(3.3)

$$\begin{aligned}&\gamma _{n+1} = {\left\{ \begin{array}{ll} \min \{\frac{\sigma \Vert w_n-y_n\Vert }{\Vert Aw_n-Ay_n\Vert },~~ \gamma _n+\theta _n\},&{}\text {if}~~~ Aw_n\ne Ay_n,\\ \gamma _n+\theta _n, &{} \text {otherwise.} \end{array}\right. } \end{aligned}$$(3.4)

Step 4. Compute

$$\begin{aligned} x_{n+1} = (1-\beta _n)(\alpha _nw_n)+\beta _nz_n. \end{aligned}$$(3.5)

Set \(n:= n +1\) and return to Step 1.
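
The following Python sketch transcribes Steps 0–4 of Algorithm 3.1 for a problem on the real line. It is a hedged illustration rather than the authors' implementation: the feasible set \(C=[-1,1]\), the test operator \(A(x)=x+2\) (monotone, 1-Lipschitz, nonzero on C, with \(V_D=\{-1\}\)), the choice \(\delta _n=\hat{\delta }_n\), the fixed iteration budget and the function names are all assumptions. The control sequences are the ones reported in Sect. 4.

```python
def project_interval(x, lo=-1.0, hi=1.0):
    """Metric projection of a real number onto the interval [lo, hi]."""
    return min(max(x, lo), hi)


def mtpcm(A, x0, x1, iters=2000, delta=0.89, l=0.89, sigma=0.95, gamma=0.9):
    """Sketch of Algorithm 3.1 (Mann-type projection and contraction method)."""
    x_prev, x = x0, x1
    for n in range(1, iters + 1):
        alpha = n / (n + 2)                      # alpha_n
        beta = n / (2 * n + 1)                   # beta_n
        eps = 2.0 / (n + 2) ** 3                 # epsilon_n
        theta = 1000.0 / (n + 1) ** 1.5          # theta_n (summable)

        # Step 1: inertial parameter, here taken equal to the upper bound in (3.1).
        gap = abs(x - x_prev)
        delta_n = min(delta, eps / gap) if gap > 0.0 else delta

        # Step 2: inertial point and the single projection onto C, see (3.2).
        w = x + delta_n * (x - x_prev)
        Aw = A(w)
        y = project_interval(w - gamma * Aw)
        Ay = A(y)
        if y == w or Ay == 0.0:
            return y

        # Step 3: contraction direction and step, see (3.3).
        d = (w - y) - gamma * (Aw - Ay)
        tau = (w - y) * d / (d * d) if d != 0.0 else 0.0
        z = w - l * tau * d

        # Self-adaptive, non-monotone step size update, see (3.4).
        if Aw != Ay:
            gamma_next = min(sigma * abs(w - y) / abs(Aw - Ay), gamma + theta)
        else:
            gamma_next = gamma + theta

        # Step 4: Mann-type update, see (3.5).
        x_prev, x = x, (1 - beta) * alpha * w + beta * z
        gamma = gamma_next
    return x


# Hypothetical test problem; starting points are those of Case a in Example 4.1.
x_final = mtpcm(lambda x: x + 2.0, x0=0.5, x1=0.01)
```

In this sketch the iterates \(x_n\) themselves need not lie in C, since only \(y_n\) is obtained by projection; the factor \(\alpha _n\) in Step 4 is what drives the sequence toward the minimum-norm element of \(V_D\).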

Remark 3.2

(i) We observe that our proposed Algorithm 3.1 requires only one projection onto the feasible set C per iteration, which makes our method computationally inexpensive.

(ii) Our algorithm does not require any linesearch procedure. Rather, we employ a more efficient step size technique in (3.4), which generates a non-monotonic sequence of step sizes. This makes computation and implementation of the proposed method easier.

(iii) Observe that condition (A3) is strictly weaker than the sequential weak continuity condition (A3’) often used by researchers when solving pseudomonotone and quasimonotone VIs (see Cholamjiak et al. 2020; Yin et al. 2022; Liu and Yang 2020; Yin and Hussain 2022). In proving our first strong convergence theorem under the quasimonotonicity assumption, we do not require condition (A3’). We only require this condition when proving our second strong convergence theorem without monotonicity.

(iv) Observe that the stringent conditions C4–C5’ of Assumption 1.3 employed in Yin et al. (2022); Liu and Yang (2020); Yin and Hussain (2022); Izuchukwu et al. (2022) are dispensed with in our proposed method.

(v) If \(\theta _n\equiv 0\) in Step 3 of Algorithm 3.1, then the step size \(\gamma _n\) reduces to the ones in Uzor et al. (2022a); Yin and Hussain (2022); Yin et al. (2022).

Remark 3.3

(i) By conditions (B1) and (B2), we can easily see from (3.1) that

$$\begin{aligned} \lim _{n\rightarrow \infty }\delta _n\Vert x_n - x_{n-1}\Vert = 0\quad \text {and}\quad \lim _{n\rightarrow \infty }\frac{\delta _n}{(1-\alpha _n)}\Vert x_n - x_{n-1}\Vert = 0. \end{aligned}$$

(ii) The sequence \(\{\gamma _n\}\) generated by (3.4) is well defined and \(\lim \limits _{n\rightarrow \infty }\gamma _n=\gamma \in [\min \{\frac{\sigma }{L}, \gamma _1\}, \gamma _1+\Theta ],\) where \(\Theta =\sum _{n=1}^{\infty }\theta _n\) and L is the Lipschitz constant of A (see Alakoya et al. 2022; Liu and Yang 2020).
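
For completeness, the two limits in part (i) can be spelled out as follows; this short derivation uses only \(0\le \delta _n\le \hat{\delta }_n,\) the definition (3.1) and conditions (B1)–(B2). If \(x_n\ne x_{n-1},\) then \(\hat{\delta }_n\le \frac{\epsilon _n}{\Vert x_n-x_{n-1}\Vert },\) and if \(x_n=x_{n-1}\) the left-hand side below is zero, so in both cases

$$\begin{aligned} \delta _n\Vert x_n-x_{n-1}\Vert \le \epsilon _n \quad \text {and hence}\quad \frac{\delta _n}{(1-\alpha _n)}\Vert x_n-x_{n-1}\Vert \le \frac{\epsilon _n}{1-\alpha _n}\rightarrow 0. \end{aligned}$$

Since \(1-\alpha _n\in (0,1),\) we also have \(\epsilon _n=\frac{\epsilon _n}{1-\alpha _n}(1-\alpha _n)\le \frac{\epsilon _n}{1-\alpha _n}\rightarrow 0,\) which gives the first limit.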

3.1 Convergence analysis

In this section, we first prove some lemmas which would be needed to prove our strong convergence theorem. Next, we give the proof of the strong convergence theorems for our proposed algorithm.

Lemma 3.4

Let \(\{x_n\}\) be a sequence generated by Algorithm 3.1 under assumptions \((A1)-(A4)\) and \((B1)-(B3)\). Then, \(\{x_n\}\) is bounded.

Proof

Let \(d\in V_D.\) From the definition of \(w_n,\) we have

By Remark 3.3, we have that \(\lim \limits _{n\rightarrow \infty }\frac{\delta _n}{(1-\alpha _n)}\Vert x_n-x_{n-1}\Vert =0.\) So, there exists \(J_1>0,\) such that \(\frac{\delta _n}{(1-\alpha _n)}\Vert x_n-x_{n-1}\Vert \le J_1,\) for all \(n\ge 1.\) Consequently, we have

Next, since \(y_n=P_C(w_n-\gamma _nAw_n),\) we have from Lemma 2.2 that

Since \(d\in V_D\) and \(y_n\in C,\) we have \(\langle Ay_n, y_n-d \rangle \ge 0, ~~ \forall n\ge 0.\) Again, since \(\gamma _n>0,\) we have

By summing up (3.8) and (3.9), we have

By the definition of \(d_n\) and (3.10), we obtain

From Step 3, and by applying Lemma 2.1 and (3.11) together with the condition on l, we get

Using (3.7), (3.12) and the conditions on \(\alpha _n\) and \(\beta _n,\) we get

Then, by Lemma 2.1, (3.12) and (3.13), we obtain

So, we have

By applying the last inequality in (3.13), we get

where \(M^*:=\sup _{n\in \mathbb {N}}\Big \{\Vert d\Vert +\frac{J_1}{(1-\beta _n)}\Big \}.\) Setting \(a_n:=\Vert x_n-d\Vert ,~ b_n:=(1-\alpha _n)(1-\beta _n)M^*,~ c_n:=0\) and \(\sigma _n:=(1-\alpha _n)(1-\beta _n),\) we conclude from Lemma 2.4(1), together with the conditions on the control parameters, that \(\{\Vert x_n-d\Vert \}\) is bounded, which implies that \(\{x_n\}\) is bounded. Hence, \(\{w_n\},~~ \{y_n\},~~ \{z_n\}\) and \(\{d_n\}\) are all bounded.

Lemma 3.5

Assume \(\{w_n\}\) and \(\{y_n\}\) are sequences generated by Algorithm 3.1, such that conditions (A1)–(A4) and (B1)–(B3) hold. If there exists a subsequence \(\{w_{n_k}\}\) of \(\{w_n\}\) that converges weakly to \(\hat{x}\in H\) such that \(\lim _{k\rightarrow \infty }\Vert w_{n_k}-y_{n_k}\Vert =0,\) then \(\hat{x}\in V_D\) or \(A\hat{x}=0.\)

Proof

Since \(\{w_n\}\) is bounded, then \(w_\omega (w_n)\) is not empty. We let \(\hat{x}\in w_\omega (w_n)\) be an arbitrary element. Then, there exists a subsequence \(\{w_{n_k}\}\) of \(\{w_n\}\) such that \(w_{n_k}\rightharpoonup \hat{x}\) as \(k\rightarrow \infty .\) It follows from the hypothesis of the lemma that \(y_{n_k}\rightharpoonup \hat{x}\in C.\) We consider the following two cases to complete the proof of the lemma.

Case 1: If \(\limsup _{k\rightarrow \infty }\Vert Ay_{n_k}\Vert =0,\) then it implies that \(\lim _{k\rightarrow \infty }\Vert Ay_{n_k}\Vert =\liminf _{k\rightarrow \infty }\Vert Ay_{n_k}\Vert =0.\)

Since \(y_{n_k}\rightharpoonup \hat{x}\in C,\) then by condition (A3), it follows that

Therefore, we have that \(A\hat{x}=0.\)

Case 2: If \(\limsup _{k\rightarrow \infty }\Vert Ay_{n_k}\Vert >0.\) Without loss of generality, let \(\lim _{k\rightarrow \infty }\Vert Ay_{n_k}\Vert =L^*>0.\) Then, it follows that there exists \(K\in \mathbb {N}\) such that \(\Vert Ay_{n_k}\Vert >\frac{L^*}{2},\) for all \(k\ge K.\)

From (3.2), we have that \(y_{n_k}=P_C(w_{n_k}-\gamma _{n_k}Aw_{n_k}).\) Then, by Lemma 2.2 we have

Since \(\{y_{n_k}\}\) is bounded and \(\lim _{k\rightarrow \infty }\gamma _{n_k}=\gamma >0,\) then by applying \(\Vert y_{n_k}-w_{n_k}\Vert \rightarrow 0\) as \(k\rightarrow \infty ,\) the continuity of A and fixing \(z\in C,\) we obtain

If \(\limsup _{k\rightarrow \infty }\langle Ay_{n_k}, z-y_{n_k} \rangle >0,\) then there exists a subsequence \(\{y_{n_{k_j}}\}\) such that \(\lim _{j\rightarrow \infty } \langle Ay_{n_{k_j}}, z-y_{n_{k_j}} \rangle >0.\) Thus, there exists \(j_0\in \mathbb {N}\) such that

By the quasimonotonicity of A, we have that \(\forall j\ge j_0,\)

Thus, as \(j\rightarrow \infty ,\) we see that \(\hat{x}\in V_D.\)

If \(\limsup _{k\rightarrow \infty }\langle Ay_{n_k}, z-y_{n_k} \rangle =0,\) we can easily see from (3.16) that

We set \(\eta _k=|\langle Ay_{n_k}, z-y_{n_k} \rangle |+\frac{1}{k+1}.\) Then, we have

Next, we set \(\xi _{n_k}=\frac{Ay_{n_k}}{\Vert Ay_{n_k}\Vert ^2}\) for all \(k\ge K.\) Then, it follows that

Then, from (3.17), we see that for all \(k\ge K,\)

Then, by the quasimonotonicity of A, we have that for all \(k\ge K,\)

Also, by the Lipschitz continuity of A, we have that for all \(k\ge K,\)

If we let \(k\rightarrow \infty \) in (3.19) and apply the fact that \(\lim _{k\rightarrow \infty }\eta _k=0\) together with the boundedness of \(\{\Vert z+\eta _k\xi _{n_k}-y_{n_k}\Vert \},\) we obtain

It follows that \(\hat{x}\in V_D,\) which completes the proof.

Lemma 3.6

Suppose \(\{x_n\}\) is a sequence generated by Algorithm 3.1 and \(d\in V_D.\) Then, under the conditions (A1)–(A4) and (B1)–(B3), we have the following inequality for all \(n\in \mathbb {N}:\)

Proof

Let \(d\in V_D.\) By using Lemma 2.1 together with the Cauchy inequality, we have

where \(J_2:=\sup _{n\in \mathbb {N}}\{\Vert x_n-d\Vert , \delta _n\Vert x_n-x_{n-1}\Vert \}>0.\) Now, let \(g_n=(1-\beta _n)w_n+\beta _nz_n.\) Then, by Lemma 2.1 and (3.12), we get

Next, by applying (3.20), (3.21) and Lemma 2.1, we get

where \(J_3:=\sup \limits _{n\in \mathbb {N}}\{2\beta _n \Vert z_n-w_n\Vert \}.\) This completes the proof.

Lemma 3.7

The following inequality holds for all \(d \in V_D\) and \(n\in \mathbb {N},\) under conditions (A1)–(A4) and (B1)–(B3) :

for some \(J_4>0.\)

Proof

We have from (3.4) that

is true for both \(Aw_n=Ay_n\) and \(Aw_n\ne Ay_n.\)

Next, we see that

We can easily see from Remark 3.3 (ii) that

Hence, from (3.23), we obtain

where \(J_4=\sup _{n\in \mathbb {N}}\Big \{l^{-1}\Big (\frac{\gamma _{n+1} +\sigma \gamma _n}{\gamma _{n+1} -\sigma \gamma _n}\Big )\Vert w_n-y_n\Vert \Big \}.\) Observe that by (3.23) and the definition of \(z_n,\) the last inequality still holds if \(d_n=0.\) This completes the proof.

We now proceed to state and prove the strong convergence theorem for our proposed Algorithm 3.1, as follows.

Theorem 3.8

Let \(\{x_n\}\) be a sequence generated by Algorithm 3.1 such that conditions \((A1)-(A4)\) and \((B1)-(B3)\) hold, and \(Ax\ne 0, ~~ \forall x\in C.\) Then, \(\{x_n\}\) converges strongly to an element \(x^*\in V_D\subset V_I,\) where \(\Vert x^*\Vert =\min \{\Vert p\Vert :p\in V_D \subset V_I\}.\)

Proof

Let \(\Vert x^*\Vert =\min \{\Vert p\Vert :p\in V_D \subset V_I\},\) then \(x^*=P_{V_D}(0).\) It follows that \(x^*\in V_D.\) Then, from (3.22) we obtain

where \(b_n= \frac{3J_2}{(1-\beta _n)} \frac{\delta _n}{(1-\alpha _n)}\Vert x_n-x_{n-1}\Vert + 2\beta _n \Vert z_n-w_n\Vert \Vert x_{n+1}-x^*\Vert + 2 \langle x^*, x^*-x_{n+1}\rangle .\) We claim that the sequence \(\{\Vert x_n - x^*\Vert \}\) converges to zero. To establish this claim, it suffices to show by Lemma 2.5 that \(\limsup \limits _{k\rightarrow \infty }b_{n_k}\le 0\) for every subsequence \(\{\Vert x_{n_k} - x^*\Vert \}\) of \(\{\Vert x_n - x^*\Vert \}\) satisfying

Suppose \(\{\Vert x_{n_k} - x^*\Vert \}\) is a subsequence of \(\{\Vert x_n - x^*\Vert \}\) such that (3.25) holds. From Lemma 3.6, we have

By applying (3.25), the fact that \(\lim _{k\rightarrow \infty }(1-\alpha _{n_k})=0\) and Remark 3.3, we obtain

By the conditions on the control parameters, we obtain

Also, by Remark 3.3, we get

Then, from (3.26) and (3.27), we obtain

Also, by Lemma 3.7 and (3.26), we obtain

Moreover, from (3.27) and (3.29) we get

Similarly, from (3.26) and (3.29), we obtain

By the definition of \(x_{n+1}\) and using \(\lim _{k\rightarrow \infty }(1-\alpha _{n_k})=0,\) (3.27) together with (3.28), we have

Now, we complete the proof by first showing that \(w_{\omega }(x_n)\subset V_D.\) Since \(\{x_n\}\) is bounded, then \(w_{\omega }(x_n)\ne \emptyset .\) Let \(\hat{x}\in w_{\omega }(x_n)\) be an arbitrary element. Then, from (3.27) and (3.30), we have that \(w_{\omega }(x_n)=w_{\omega }(w_n)=w_{\omega }(y_n).\) Since \(y_n\in C\) and C is weakly closed, we have \(\hat{x}\in C.\) So, by the assumption that \(Ax\ne 0, ~~ \forall x\in C\) we have \(A\hat{x}\ne 0.\) Thus, by (3.29) and Lemma 3.5 we have that \(\hat{x}\in V_D.\) Since \(\hat{x}\in w_{\omega }(x_n)\) was chosen arbitrarily, we obtain \(w_{\omega }(x_n)\subset V_D.\)

Next, since \(\{x_{n_k}\}\) is bounded, there exists a subsequence \(\{x_{n_{k_j}}\}\) of \(\{x_{n_k}\},\) such that \(x_{n_{k_j}}\rightharpoonup x^{\dagger },\) and

Since \(x^*=P_{V_D}(0),\) it follows from (3.33) that

From (3.32) and (3.34), we obtain

By Remark 3.3, (3.26) and (3.35), we have \(\limsup \limits _{k\rightarrow \infty }b_{n_k}\le 0.\) Thus, by appealing to Lemma 2.5, it follows from (3.24) that \(\lim \limits _{n\rightarrow \infty }\Vert x_n - x^*\Vert =0\) as required. Hence, the proof is complete.

Remark 3.9

We note that the quasimonotonicity of the mapping A was only employed in Case 2 of Lemma 3.5. Now, we proceed to prove the second strong convergence theorem for the proposed Algorithm 3.1 without recourse to the monotonicity property.

Lemma 3.10

Assume that \(\{w_n\}\) and \(\{y_n\}\) are sequences generated by Algorithm 3.1 such that conditions (A1)–(A2), (A3’)–(A4’) and (B1)–(B3) hold. Suppose there exists a subsequence \(\{w_{n_k}\}\) of \(\{w_n\}\) such that \(w_{n_k}\rightharpoonup x^*\in H\) and \(\Vert y_{n_k}-w_{n_k}\Vert \rightarrow 0\) as \(k\rightarrow \infty .\) Then, either \(x^*\in V_D\) or \(Ax^*=0.\)

Proof

From (3.16), following a similar argument as in Lemma 3.5 and fixing \(z\in C,\) we have that \(y_{n_k}\rightharpoonup x^*\in C\) and

Next, we choose a positive sequence \(\eta _k\) such that \(\lim _{k\rightarrow \infty }\eta _k=0\) and

Hence, we obtain

We set \(z=x^*\) in (3.36) to obtain

Then, as \(k\rightarrow \infty ,\) by applying condition \((A3')\) and the fact that \(y_{n_k}\rightharpoonup x^*,\) from the last inequality we have

Then, by condition \((A4'),\) we obtain

From (3.36), we obtain

Thus, we have

So, it follows that \(x^*\in V_D.\) Hence, \(x^*\in V_D,\) or \(Ax^*=0\) as required.

Theorem 3.11

Let \(\{x_n\}\) be a sequence generated by Algorithm 3.1 such that conditions (A1)–(A2), (A3’)–(A4’) and (B1)–(B3) hold, and \(Ax\ne 0, ~~ \forall x\in C.\) Then, \(\{x_n\}\) converges strongly to an element \(x^*\in V_D\subset V_I,\) where \(\Vert x^*\Vert =\min \{\Vert p\Vert :p\in V_D \subset V_I\}.\)

Proof

By following an argument similar to that of Theorem 3.8 and applying Lemma 3.10, we obtain the required result.
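As a short worked note on the minimum-norm property in Theorem 3.11: it can be read through the metric projection \(x^*=P_{V_D}(0)\) used in the proof of Theorem 3.8. The step below is a sketch and assumes that \(V_D\) is nonempty, closed and convex, so that the projection is well defined. Applying the standard characterization \(\langle x-P_Kx,\, p-P_Kx\rangle \le 0\) for all \(p\in K\) with \(K=V_D\) and \(x=0\) gives

\[ x^*=P_{V_D}(0) \iff \langle x^*,\, p-x^*\rangle \ge 0 \quad \forall p\in V_D, \]

and hence, by the Cauchy–Schwarz inequality,

\[ \Vert x^*\Vert ^2\le \langle x^*, p\rangle \le \Vert x^*\Vert \,\Vert p\Vert \quad \Longrightarrow \quad \Vert x^*\Vert \le \Vert p\Vert \quad \forall p\in V_D. \]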

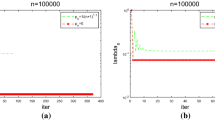

4 Numerical examples

In this section, we carry out some numerical experiments to illustrate and showcase the efficiency of our proposed Algorithm 3.1 (Proposed Alg) in comparison with Algorithm 1.2 (Liu & Yang Alg.), Algorithm 1.5 (Yin et al. Alg.), Algorithm 1.6 (Yin & Hussain Alg.), Appendix 5.1 (Izuchukwu et al. Alg.) and Appendix 5.2 (Alakoya et al. Alg.). In our experiment, we choose for each \(n\in \mathbb {N},~~ \alpha _n=\frac{n}{n+2}, ~~ \beta _n=\frac{n}{2n+1}, ~~\epsilon _n=\frac{2}{(n+2)^3},~~ \delta =0.89, ~~ \theta _n=\frac{1000}{(n+1)^{1.5}},~~ \gamma _0=0.9,~~ \sigma =0.95, ~~l=0.89\) in Algorithm 3.1; \(\eta _n=0.25, \rho _n=0.30, Tx=\frac{x}{2}\) in Algorithm 1.5 and \(\gamma _1=0.75,\vartheta =0.2\) in Appendix 5.1.
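To make the parameter choices for Algorithm 3.1 concrete, the following Python sketch tabulates the four sequences for a few values of \(n\). The function names are illustrative and not from the paper, and the sketch does not re-check conditions (B1)–(B3). It only shows the limiting behaviour of the sequences and that the partial sums of \(\theta _n\) stay bounded.

```python
# Parameter sequences quoted in the text for Algorithm 3.1.
# Function names are hypothetical; only the formulas come from the paper.

def alpha(n):
    return n / (n + 2)

def beta(n):
    return n / (2 * n + 1)

def epsilon(n):
    return 2 / (n + 2) ** 3

def theta(n):
    return 1000 / (n + 1) ** 1.5

for n in (1, 10, 100, 1000):
    print(f"n={n:5d}  alpha={alpha(n):.4f}  beta={beta(n):.4f}  "
          f"epsilon={epsilon(n):.3e}  theta={theta(n):.4f}")

# theta_n is summable: sum_{k>=2} k^(-1.5) <= integral_1^inf t^(-1.5) dt = 2,
# so every partial sum of theta_n is below 1000 * 2 = 2000.
theta_partial_sum = sum(theta(n) for n in range(1, 100_001))
print(f"sum of theta_n for n <= 1e5: {theta_partial_sum:.2f}")
```

In this sketch \(\alpha _n\) tends to 1, \(\beta _n\) tends to 1/2, and \(\epsilon _n\) decays like \(n^{-3}\).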

We perform our experiments in MATLAB R2022b, as follows:

Example 4.1

We consider the following problem from Liu and Yang (2020). Let \(C:= [-1,1]\) and

We see that A is quasimonotone and Lipschitz continuous. Also \(V_D=\{-1\}\) and \(V_I=\{-1,0\}\).

We use \(|x_{n+1}-x_n|< 10^{-4}\) as the stopping criterion and choose different starting points as follows:

Case a: \(x_0=0.5000,~x_1=0.0100;\)

Case b: \(x_0=0.4961,~x_1=0.0324;\)

Case c: \(x_0=0.6047,~x_1=0.0209;\)

Case d: \(x_0=0.5674,~x_1=0.0186.\)

The numerical results are reported in Figs. 1, 2, 3, 4 and Table 1.
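The stated solution sets can be checked numerically on a grid. The Python sketch below rests on two assumptions that the extracted text does not confirm. First, it takes \(A(x)=x^2\) on \(C\) as a stand-in operator, chosen only because it is consistent with \(V_D=\{-1\}\) and \(V_I=\{-1,0\}\). Second, it reads \(V_D\) as the solution set of the dual (Minty) variational inequality \(\langle Ay, y-x^*\rangle \ge 0\) for all \(y\in C\).

```python
import numpy as np

def A(x):
    # Assumed operator on C = [-1, 1]; a hypothetical stand-in, not the paper's formula.
    return x ** 2

# Grid over C = [-1, 1] used to test the inequalities pointwise.
grid = np.linspace(-1.0, 1.0, 2001)

def solves_vi(x, tol=1e-12):
    # Classical VI: A(x) * (y - x) >= 0 for all y in C.
    return bool(np.all(A(x) * (grid - x) >= -tol))

def solves_dual_vi(x, tol=1e-12):
    # Dual (Minty) VI: A(y) * (y - x) >= 0 for all y in C.
    return bool(np.all(A(grid) * (grid - x) >= -tol))

candidates = [-1.0, -0.5, 0.0, 0.5, 1.0]
vi_solutions = [x for x in candidates if solves_vi(x)]
dual_solutions = [x for x in candidates if solves_dual_vi(x)]
print("VI solutions among candidates:  ", vi_solutions)
print("dual solutions among candidates:", dual_solutions)
```

Under these assumptions the point \(0\) solves the classical problem only trivially, since \(A(0)=0\), while \(-1\) also solves the dual problem.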

Example 4.2

See Izuchukwu et al. (2022). Let \(C:=[0,1]^m\) and \(Ax=(h_1x, h_2x,\ldots ,h_mx),\) where

We consider the cases \(m = 5, m = 10, m = 20\) and \(m = 40\) while the starting points \(x_0\) and \(x_1\) are generated randomly. We use \(|x_{n+1}-x_n|< 10^{-3}\) as the stopping criterion. The numerical results are reported in Figs. 5, 6, 7, 8 and Table 2.
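For the box \(C=[0,1]^m\), the metric projection is a componentwise clip, which is what makes each projection step cheap in this example. The Python sketch below shows the projection and the stopping test only; it does not implement the operator \(A\), whose components are not reproduced here. The helper names, the random seed, and the reading of \(|\cdot |\) as the Euclidean norm are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)  # seed chosen only for reproducibility (assumption)

def project_box(x):
    # Metric projection onto C = [0, 1]^m: clip each coordinate.
    return np.clip(x, 0.0, 1.0)

def stopped(x_next, x, tol=1e-3):
    # Stopping rule from the text, with |.| read as the Euclidean norm.
    return bool(np.linalg.norm(x_next - x) < tol)

for m in (5, 10, 20, 40):
    x0, x1 = rng.random(m), rng.random(m)      # random starting points in C
    z = x1 + rng.normal(size=m)                # a point that may leave the box
    p = project_box(z)
    print(m, bool(p.min() >= 0.0 and p.max() <= 1.0), stopped(x1, x0))
```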

Next, we present an example in an infinite dimensional Hilbert space and compare our proposed Algorithm 3.1 with the algorithm in Appendix 5.2, which also comes with a strong convergence result.

Example 4.3

Let \(H=\ell _2:=\{x=(x_1,x_2,\ldots ,x_i,\ldots ):\sum _{i=1}^{\infty }|x_i|^2<+\infty \}.\) We take \(C:=\{x\in \ell _2:\Vert x\Vert \le 3\}\) and \(Ax:= (x_1 e^{-x_{1}^2},0,0,\ldots ), ~~ ~~ x=(x_1,x_2,x_3,\ldots )\in C.\) Let \(P:\ell _2\rightarrow \ell _2\) be defined by \(P(x_1,x_2,x_3,\ldots )=(x_1,0,0,\ldots );~~ ~~ h(x):=\mu (\langle y,x \rangle )\) with \(y=(1,0,0,\ldots )\in \ell _2\) and \(\mu (t):=e^{-t^2}, ~~ t>0.\) Let \(Ax=h(x)P(x), ~~ x\in \ell _2.\) Then A is quasimonotone, but not monotone on H (see Izuchukwu et al. 2022).

We use \(\Vert x_{n+1}-x_n\Vert < 10^{-4}\) as the stopping criterion and choose different starting points as follows:

Case a: \(x_0=(1,-0.1,0.01,\ldots ),~x_1=(-\frac{1}{3},\frac{1}{9},-\frac{1}{27},\ldots )\),

Case b: \(x_0=(3,1,\frac{1}{3},\ldots ),~x_1=(-\frac{1}{3},\frac{1}{9},-\frac{1}{27},\ldots )\),

Case c: \(x_0=(2,-1,\frac{1}{2},\ldots ),~x_1=(-\frac{1}{3},\frac{1}{9},-\frac{1}{27},\ldots ),\)

Case d: \(x_0=(2,0.2,0.02,\ldots ),~x_1=(-\frac{1}{3},\frac{1}{9},-\frac{1}{27},\ldots )\).

The numerical results are reported in Figs. 9, 10, 11, 12 and Table 3.
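The operator in Example 4.3 can be explored on a finite truncation of \(\ell _2\). The Python sketch below makes three assumptions: vectors are truncated to their first 50 coordinates, the elided tails of the starting points continue as geometric sequences, and the helper names are illustrative. It exhibits a pair of points in \(C\) at which the monotonicity inequality fails, and it projects the Case b starting point, whose norm exceeds 3, onto the ball \(C\).

```python
import numpy as np

N = 50  # truncation length for l2 vectors (assumption)

def A(x):
    # A(x) = (x_1 * exp(-x_1^2), 0, 0, ...), as defined in the example.
    out = np.zeros_like(x)
    out[0] = x[0] * np.exp(-x[0] ** 2)
    return out

def project_ball(x, radius=3.0):
    # Metric projection onto C = {x : ||x|| <= 3}.
    nrm = np.linalg.norm(x)
    return x if nrm <= radius else (radius / nrm) * x

def geometric(first, ratio, n=N):
    # Truncated geometric sequence (first, first*ratio, first*ratio^2, ...).
    return first * ratio ** np.arange(n, dtype=float)

# Two points of C where <Ax - Ay, x - y> is negative, so A is not monotone.
x = np.zeros(N); x[0] = 2.0
y = np.zeros(N); y[0] = 1.0
gap = float(np.dot(A(x) - A(y), x - y))
print("monotonicity gap:", gap)

# Case b starting point (3, 1, 1/3, ...) read as a geometric sequence.
x0_b = geometric(3.0, 1.0 / 3.0)
print("norm before projection:", np.linalg.norm(x0_b))
print("norm after projection: ", np.linalg.norm(project_ball(x0_b)))
```

The negative gap comes from the first coordinate alone, because \(t\mapsto te^{-t^2}\) is decreasing on \([1,2]\).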

5 Conclusion

In this paper, we studied the class of quasimonotone variational inequality problems and the class of variational inequality problems without monotonicity. We proposed a new Mann-type inertial projection and contraction method for approximating the solutions of these two classes of variational inequality problems. We proved strong convergence theorems for the proposed algorithm under more relaxed conditions and without the sequential weak continuity condition often assumed in the literature. Finally, we presented some numerical experiments and compared our method with some existing methods. The numerical results given in Figs. 1–12 and Tables 1–3 show that, on the examples considered, our method performs better than these existing methods.

Data Availability

Not applicable.

Notes

Prior knowledge of the Lipschitz constant is not required.

References

Alakoya TO, Mewomo OT (2023) S-iteration inertial subgradient extragradient method for variational inequality and fixed point problems. Optimization. https://doi.org/10.1080/02331934.2023.2168482

Alakoya TO, Mewomo OT, Shehu Y (2022) Strong convergence results for quasimonotone variational inequalities. Math Meth Oper Res 95:249–279

Alakoya TO, Uzor VA, Mewomo OT, Yao J-C (2022) On a system of monotone variational inclusion problems with fixed-point constraint. J Inequ Appl 2022:47

Alakoya TO, Uzor VA, Mewomo OT (2023) A new projection and contraction method for solving split monotone variational inclusion, pseudomonotone variational inequality, and common fixed point problems. Comput Appl Math 42(1):33

Antipin AS (1976) On a method for convex programs using a symmetrical modification of the Lagrange function. Ekonom Math Methody 12(6):1164–1173

Censor Y, Gibali A, Reich S (2011) The subgradient extragradient method for solving variational inequalities in Hilbert spaces. J Optim Theory Appl 148:318–335

Chen T, Huang N-J, Sofonea M (2022) A differential variational inequality in the study of contact problems with wear. Nonlinear Anal Real World Appl 67

Cholamjiak P, Thong DV, Cho YJ (2020) A novel inertial projection and contraction method for solving pseudo-monotone variational inequality problems. Acta Appl Math 169:217–245

Cottle RW, Yao JC (1992) Pseudomonotone complementary problems in Hilbert space. J Optim Theory Appl 75:281–295

Fichera G (1963) Sul problema elastostatico di signorini con ambigue condizioni al contorno. Atti Accad Naz Lincei Rend Cl Sci Fis Mat Nat 34(8):138–142

Gibali A, Jolaoso LO, Mewomo OT, Taiwo A (2020) Fast and simple Bregman projection methods for solving variational inequalities and related problems in Banach spaces. Res Math 75(4):36

Gibali A, Reich S, Zalas R (2017) Outer approximation methods for solving variational inequalities in Hilbert space. Optimization 66:417–437

Godwin EC, Alakoya TO, Mewomo OT, Yao J-C (2023) Relaxed inertial Tseng extragradient method for variational inequality and fixed point problems. Appl Anal 102(15):4253–4278

Godwin EC, Izuchukwu C, Mewomo OT (2023) Image restoration using a modified relaxed inertial method for generalized split feasibility problems. Math Methods Appl Sci 46(5):5521–5544

Godwin EC, Mewomo OT, Alakoya TO (2023) A strongly convergent algorithm for solving multiple set split equality equilibrium and fixed point problems in Banach spaces. Proc Edinb Math Soc. https://doi.org/10.1017/S0013091523000251

He BS (1997) A class of projection and contraction methods for monotone variational inequalities. Appl Math Optim 35:69–76

Iiduka H (2012) Fixed point optimization algorithm and its application to network bandwidth allocation. J Comput Appl Math 236(7):1733–1742

Izuchukwu C, Shehu Y, Yao J-C (2022) A simple projection method for solving quasimonotone variational inequality problems. Optim Eng. https://doi.org/10.1007/s11081-022-09713-8

Kinderlehrer D, Stampacchia G (1980) An introduction to variational inequalities and their applications. New York Academic Press

Kopecká E, Reich S (2012) A note on alternating projections in Hilbert space. J Fixed Point Theory Appl 12:41–47

Korpelevich GM (1976) The extragradient method for finding saddle points and other problems. Matecon 12:747–756

Kraikaew R, Saejung S (2014) Strong convergence of the Halpern subgradient extragradient method for solving variational inequalities in Hilbert spaces. J Optim Theory Appl 163:399–412

Liu H, Yang J (2020) Weak convergence of iterative methods for solving quasimonotone variational inequalities. Comput Optim Appl 77:491–508

Liu Z, Zeng S, Motreanu D (2016) Evolutionary problems driven by variational inequalities. J Differ Equ 260(9):6787–6799

Maingé PE (2008) Regularised and inertial algorithms for common fixed points of nonlinear operators. J Math Anal Appl 344(2):876–887. https://doi.org/10.1016/j.jmaa.2008.03.028

Maingé PE (2007) Approximation methods for common fixed points of nonexpansive mappings in Hilbert spaces. J Math Anal Appl 325(1):469–479

Ogwo GN, Izuchukwu C, Mewomo OT (2022) Inertial methods for finding minimum-norm solutions of the split variational inequality problem beyond monotonicity. Numer Algorithms 88(3):1419–1456

Ogwo GN, Izuchukwu C, Mewomo OT (2022) Relaxed inertial methods for solving split variational inequality problems without product space formulation. Acta Math Sci Ser B (Engl Ed) 42(5):1701–1733

Polyak BT (1964) Some methods of speeding up the convergence of iteration methods. USSR Comput Math Math Phys 4(5):1–17

Panyanak B, Khunpanuk C, Pholasa N, Pakkaranang N (2023) A novel class of forward-backward explicit iterative algorithms using inertial techniques to solve variational inequality problems with quasi-monotone operators. AIMS Math 8(4):9692–9715

Saejung S, Yotkaew P (2012) Approximation of zeros of inverse strongly monotone operators in Banach spaces. Nonlinear Anal 75:742–750

Stampacchia G (1968) Variational Inequalities. In: Theory and applications of monotone operators, proceedings of the NATO advanced study institute, Venice, Italy, Edizioni Odersi, Gubbio, Italy, pp 102–192

Taiwo A, Jolaoso LO, Mewomo OT (2021) Inertial-type algorithm for solving split common fixed point problems in Banach spaces. J Sci Comput 86(12):30

Taiwo A, Owolabi AO-E, Jolaoso LO, Mewomo OT, Gibali A (2021) A new approximation scheme for solving various split inverse problems. Afr Mat 32(3–4):369–401

Takahashi W (2009) Introduction to nonlinear and convex analysis. Yokohama Publishers, Yokohama

Tan KK, Xu HK (1993) Approximating fixed points of nonexpansive mappings by the Ishikawa iteration process. J Math Anal Appl 178:301–308

Thong DV, Hieu DV (2018) Modified subgradient extragradient algorithms for variational inequality problems and fixed point problems. Optimization 67(1):83–102

Thong DV, Long LV, Li X-H, Dong Q-L, Cho YJ, Tuan PA (2021) A new self-adaptive algorithm for solving pseudomonotone variational inequality problems in Hilbert spaces. Optimization 71(12):3669–3693

Tseng P (2000) A modified forward–backward splitting method for maximal monotone mappings. SIAM J Control Optim 38:431–446

Uzor VA, Alakoya TO, Mewomo OT (2022) Strong convergence of a self-adaptive inertial Tseng’s extragradient method for pseudomonotone variational inequalities and fixed point problems. Open Math 20(1):234–257

Uzor VA, Alakoya TO, Mewomo OT (2022) On split monotone variational inclusion problem with multiple output sets with fixed point constraints. Comput Methods Appl Math. https://doi.org/10.1515/cmam-2022-0199

Xiu NH, Zhang JZ (2003) Some recent advances in projection type methods for variational inequalities. J Comput Appl Math 152(1–2):559–585

Wang Z-B, Sunthrayuth P, Adamu A, Cholamjiak P (2023) Modified accelerated Bregman projection methods for solving quasi-monotone variational inequalities. Optimization. https://doi.org/10.1080/02331934.2023.2187663

Wickramasinghe MU, Mewomo OT, Alakoya TO, Iyiola SO (2023) Mann-type approximation scheme for solving a new class of split inverse problems in Hilbert spaces. Appl Anal. https://doi.org/10.1080/00036811.2023.2233977

Ye ML, He YR (2015) A double projection method for solving variational inequalities without monotonicity. Comput Optim Appl 60:141–150

Yin T-C, Hussain N (2022) A forward–backward–forward–forward algorithm for solving quasimonotone variational inequalities. J Funct Spaces 2022:Art. ID. 7117244

Yin T-C, Wu Y-K, Wen C-F (2022) An iterative algorithm for solving fixed point problems and quasimonotone variational inequalities. J Math 2022:Art. ID. 8644675

Acknowledgements

The authors sincerely thank the Associate Editor and anonymous referees for their careful reading, constructive comments and useful suggestions that improved the manuscript.

Funding

Open access funding provided by University of KwaZulu-Natal. The first author is funded by International Mathematical Union Breakout Graduate Fellowship (IMU-BGF). The second author is funded by University of KwaZulu-Natal, Durban, South Africa Postdoctoral Fellowship. The third author is supported by the National Research Foundation (NRF) of South Africa Incentive Funding for Rated Researchers (Grant Number 119903) and DSI-NRF Centre of Excellence in Mathematical and Statistical Sciences (CoE-MaSS), South Africa (Grant Number 2022-087-OPA).

Ethics declarations

Conflict of interest

The authors declare that they have no competing interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Appendix 5.1

(Algorithm 3.2 in Izuchukwu et al. (2022))

Let \(\gamma _0,\gamma _1,~~ \vartheta \in (\delta , \frac{ 1-2\delta }{2})\) with \(\delta \in (0,\frac{1}{4}),\) and choose a nonnegative real sequence \(\{\theta _n\}\) such that \(\sum _{n=1}^{\infty }\theta _n<\infty .\) For arbitrary \(x_0,x_1\in C,\) let the sequence \(\{x_n\}\) be generated by:

Appendix 5.2

Algorithm 3.2 of Alakoya et al. (2022)

Step 1: Select initial point \(x_0,x_1\in H_1.\) Given the iterates \(x_{n-1}\) and \(x_n\) for each \(n \ge 1,\) choose \(\delta _n\) such that \(0\le \delta _n \le \hat{\delta }_n,\) where

Step 2: Compute

Step 3: Compute

If \(w_n=y_n\) (or \(Ay_n=0\)) then stop: \(w_n\) is a solution of the VI. Otherwise, go to Step 4.

Step 4: Compute

Step 5: Compute

where

Set \(n:=n+1\) and go back to Step 1.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Uzor, V.A., Alakoya, T.O., Mewomo, O.T. et al. Solving quasimonotone and non-monotone variational inequalities. Math Meth Oper Res 98, 461–498 (2023). https://doi.org/10.1007/s00186-023-00846-9

DOI: https://doi.org/10.1007/s00186-023-00846-9

Keywords

- Projection and contraction method

- Quasimonotone variational inequality problem

- Inertial technique

- Self-adaptive step size