Abstract

Artificial intelligence (AI) has triggered profound reforms across industries, including education. These developments necessitate the inclusion of AI as a subject in K-12 classrooms. However, for students to learn AI, educators must pay increasing attention to it, believe in its relevance, and intend to promote it among their students and colleagues. This paper explores teachers' perceptions of and behavioral intention to teach AI. We specifically examined the associations among AI anxiety, perceived usefulness, attitude towards AI, AI relevance, AI readiness, and behavioral intention. This research further examines the moderating effects of AI for social good and confidence on the relationships in our hypothesized research model. To address this purpose, we used a quantitative methodology based on structural equation modeling. Data were collected through an online questionnaire from 320 lower and upper secondary school in-service teachers, mostly in STEM-related fields. Our findings reveal that teachers' perceptions of AI for social good and their confidence affect most relationships in the model. Teacher professional programs should cover the benefits and risks of AI and include the sharing of good practices.


1 Introduction

Teachers play a central role in promoting and sustaining school education initiatives and, in doing so, preparing the future workforce. Despite the ongoing innovation, automation, and technological revolution that continues to influence classroom instruction, the human role and values that teachers provide continue to affirm their impact on learning. Artificial intelligence (AI), one of the prominent technologies currently revolutionizing the world, is a crucial driver of innovation across industries, including education. Its applications in education include custom-tailored education for learners, task automation for teachers, and intelligent content creation, among others (Chen et al., 2022). Pedagogical agents, a product of AI advancement, have been shown to promote student learning (Chen et al., 2020; Park, 2015). These developments, together with the rise of an AI-powered society and the need to narrow the global AI skills gap, have prompted educational stakeholders to include AI education in K-12 curricula. Providing students with basic knowledge of AI will equip them to understand the world around them, develop curiosity about AI, and prepare them for a future of teaming with AI (Sanusi, 2021a, b).

Introducing AI universally creates the need for resource development, novel activities, and approaches to demystifying AI concepts for students. Accordingly, initiatives in curriculum development (Chiu, 2021; Williams et al., 2019), tool development (Mahipal et al., 2023; Aung et al., 2022), professional development (Lee & Perret, 2022; Lin & Van Brummelen, 2021), and pedagogical approaches (Sanusi & Oyelere, 2020; Xia et al., 2022) have begun to emerge to give young people access to AI education. While initiatives to develop AI education are ongoing and more are still required, the psychological factors that influence the teaching of AI in schools remain under-researched. Considering these factors is necessary on several grounds. First, intention has been established as a strong predictor of future actions and is closely associated with actual behavior (Ajzen, 1991, 2002). Because several factors influence intention, their interplay must be considered to provide insight into AI teaching intention. In addition, understanding the elements that shape teachers' intention to teach AI will support its implementation in schools. Furthermore, if teachers hold a negative perception of AI, they may be less likely to seek knowledge about the technology, which could hinder the promotion of AI learning. Thus, insights into teachers' perceptions of teaching AI are essential to help practitioners anticipate behaviors or barriers that may influence AI implementation in the school system.

Several papers have laid the foundation for understanding behavioral intention in relation to other variables and AI (Chai et al., 2020, 2021). These papers, however, do not include the voices of the teachers who are required to facilitate the subject, so teachers' behavioral intention to teach AI remains largely unexplored. Although Ayanwale et al. (2022) made an important start in understanding teachers' perceptions, their study focused on readiness and intention and targeted teachers from elementary to high school. Focusing on specific grade levels could generate more insight into that cohort, particularly because several factors influence behavioral intention. Thus, to extend the work of Ayanwale et al. (2022), this paper investigates teachers' perceptions of the psychological factors that affect their intention to teach AI. We extended planned behavior theory (Fishbein & Ajzen, 2010) to measure the associations among AI anxiety, perceived usefulness, attitude towards AI, AI relevance, and AI readiness among in-service teachers. The results will provide practitioners, researchers, and policymakers with valuable information for professional learning programs and resources designed to increase intention. AI significantly affects our society, teachers may find AI disruptive, and most school teachers often lack formal training on AI and may lack the confidence to teach it. These considerations imply that AI for social good and confidence in teaching AI may affect the inter-relationships in the extended model proposed in this study. Accordingly, this paper also aims to provide a detailed understanding of teachers' perspectives through the moderating influences of AI for social good and confidence in teaching AI.

This paper is structured as follows. Section 2 reviews the existing literature and develops the hypotheses that guide our study. Section 3 introduces the methodology, including the context, participants, instruments, and structural equation modeling approach. Section 4 presents the results. Section 5 discusses the findings and their implications, including limitations and suggestions for future work. Finally, Section 6 concludes the paper.

2 Related literature and hypothesis development

2.1 AI anxiety

Anxiety has commonly been linked to the use of technology, especially the worry that computers threaten the significance of being human (Wang & Wang, 2022). Specifically, Johnson and Verdicchio (2017) attributed AI anxiety to inaccurate conceptions of technological development and to fears expressed in science fiction films and novels. They defined AI anxiety as apprehension or distress about out-of-control AI, or nervousness owing to the unfamiliar directions of AI advancement (Johnson & Verdicchio, 2017). To our knowledge, no study has established a link between AI anxiety and perceived usefulness. However, the relationship between computer anxiety and perceived usefulness has been explored (Park et al., 2012; Purnomo & Lee, 2013). While a few studies show a connection between the two constructs, several others report no substantial correlation (Abdullah & Ward, 2016; Ifinedo, 2006; Liu, 2010). Given these mixed findings, it is worth verifying whether AI anxiety affects perceived usefulness. Hence, this study hypothesizes that:

- H1: AI anxiety will significantly influence perceived usefulness.

2.2 Perceived usefulness

The meta-analysis of Scherer and Teo (2019) revealed that educators' perceived usefulness of technology for enhancing their job performance was an essential and robust predictor of teachers' intention to use technology. Drawing on the theory of reasoned action, Davis (1989) introduced the technology acceptance model (TAM) to forecast the acceptance of a technology. Perceived usefulness (PU) is the extent to which individuals believe a technology would boost their job performance (Davis, 1989). Previous studies have revealed that perceived usefulness influences school teachers' intention to accept new technology (Antonietti et al., 2022; Bin et al., 2020; Xianhan et al., 2022). Although research on AI is limited, studies have also shown that perceived usefulness predicts health specialists' intention to learn to use AI (Lin et al., 2021), learners' intention to learn AI (Chai et al., 2022), and teachers' intention to teach AI (Ayanwale et al., 2022). Based on these findings, we seek to validate previous results and hypothesize that:

- H2: Perceived usefulness will significantly influence behavioral intention to teach AI.

Evidence in the literature indicates a strong association between perceived usefulness and attitude towards technology (Antonietti et al., 2022; Hwang et al., 2020). The correlation between the two constructs has also been established for students' learning of AI (Chai et al., 2022). However, these findings need to be validated from teachers' perspective, that is, whether perceived usefulness influences teachers' attitudes towards AI. Hence, we hypothesize that:

- H3: Perceived usefulness will significantly influence attitude towards AI.

Perceived usefulness has been used extensively to predict acceptance and intention, among other variables. However, less is known about its relationship with relevance. Relevance, defined as the match between a technology and the needs of its users (Park et al., 2009), could be shaped by users' perceptions of the technology's usefulness. A recent study (Elnagar et al., 2022) suggests that relevance predicts the perceived usefulness of a smart device, indicating that the two constructs are linked. Since perceived usefulness may, in turn, influence relevance, we put forward the following hypothesis to test this assumption:

- H4: Perceived usefulness will significantly influence AI relevance.

2.3 Attitude towards AI

Attitudes have been described as positive or negative feelings about specific behaviors (Ajzen, 2012). Ample evidence in the literature shows that individuals' attitudes directly influence their behavioral intention to use or learn technology (Kong & Lin, 2022; Szymkowiak & Jeganathan, 2022). In the context of AI, Chai et al. (2020) investigated how secondary school students' intentions to learn AI were linked to several psychological factors and found that attitude predicts behavioral intention. Chai et al. (2022) also found attitude toward using AI to be the strongest predictor of behavioral intention to learn about AI. While studies have begun to explore this construct with student participants, few studies (Ayanwale et al., 2022) have established evidence concerning teachers. Building on earlier findings, we hypothesize that:

- H5: Attitude towards AI will significantly influence behavioral intention to teach AI.

2.4 AI relevance

Park et al. (2009) defined relevance as the extent to which a system effectively provides users with the requested information. In the learning context, relevance has been described as the connection between the subject matter learned and the student's needs, future goals, or environments (Dai et al., 2020; Huang et al., 2006). This study, however, explores how teachers perceive the relevance of AI to their personal lives, to learning in class, and to students' futures. Teachers can grasp the relevance of AI in the school curriculum without first undergoing AI training. We argue that if teachers can connect AI to their own lives and see its value for their students, they are likely to be interested in promoting AI in their classrooms. Only a limited study (Ayanwale et al., 2022) has explored the connection between the relevance of AI and behavioral intention. We therefore hypothesize that:

- H6: AI relevance will significantly influence behavioral intention to teach AI.

Although empirical findings on the association between relevance and readiness in AI education exist from students' (Dai et al., 2020) and teachers' (Ayanwale et al., 2022) perspectives, more research is needed to establish the relationship between the two constructs. Hence, we hypothesize that:

- H7: AI relevance will significantly influence AI readiness.

2.5 AI readiness

Parasuraman (2000) defined technology readiness as people's propensity to embrace and use new technologies to accomplish goals in home life and at work. In learning, readiness is the sense of being prepared with the knowledge and skills required for future actions and circumstances (Smith, 2005). Readiness has been identified as critical in enhancing behavioral intention toward technology use or adoption (Shirahada et al., 2019; Tang et al., 2021). Given Blut and Wang's (2020) assertion that integrating technology is a complex process that requires readiness, it is reasonable to examine teachers' readiness specifically for promoting AI in classrooms. We therefore hypothesize that:

- H8: AI readiness will significantly influence behavioral intention to teach AI.

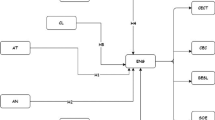

2.6 Moderation effects of AI for social good and confidence in AI

AI may be perceived as harmful because it could potentially replace human jobs, and some may believe that AI does more harm than good. How people perceive the impact of AI on society is therefore important, which implies that AI for social good could affect the relationships in the model. In addition, AI may be perceived as disruptive to society, and formal training on AI is limited. The lack of formal training and of teacher education programs targeting AI education affects most teachers, who, as a result, may lack the confidence to teach AI because they have not been equipped with AI knowledge. Consequently, confidence in teaching AI could also affect the relationships in the research model in Fig. 1.

2.7 AI for social good

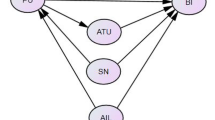

Duncan and Sankey (2019) assert that social good is the overarching aim of education, which emphasizes the significance of cultivating moral ideals. The concept has been incorporated into AI textbooks in recognition of the importance of promoting social good (Qin et al., 2019; Tang & Chen, 2018). Chai et al. (2021) reported that AI has many possible contributions to social good, such as AI robots for the aging population, among other solutions to societal problems. Empirical evidence has shown that relationships exist between AI for social good (SG) and behavioral intention (Ayanwale et al., 2022; Chai et al., 2020, 2022). However, few studies have used SG as a moderating variable. In the context of AI, one study (Chai et al., 2022) used social good to moderate paths of an SEM model; SG specifically moderated the PU → BI, ATU → BI, and SN → BI paths. Our study, in contrast, examines SG as a moderator of the AN → PU, PU → AT, PU → RA, and AT → BI paths. We expect that teachers' beliefs about using AI knowledge to solve problems and enhance people's lives will moderate the paths in H1, H2, H3, H5, and H8. We therefore hypothesize that:

- H9: AI for social good will significantly moderate the paths stated in hypotheses 1, 2, 3, 5, and 8.

2.8 Confidence in AI

Confidence is an essential factor influencing students' performance and readiness (Dai et al., 2020). Positive feelings have also been linked to increased intention to use, learn, or adopt technology (Jong, 2019; Jong & Shang, 2015). In this study, we contextualize confidence in AI as teachers' positive feelings that they can support students' learning of AI and promote it within the school. While studies have established relationships between confidence in AI and behavioral intention (Ayanwale et al., 2022; Chai et al., 2020), we have not identified any study that used confidence in AI to moderate several paths in order to understand teachers' intention to promote AI learning. We therefore hypothesize that:

- H10: Confidence in AI will significantly moderate the paths stated in hypotheses 3, 5, 6, and 8.

3 Methodology

This research utilized a quantitative variance-based structural equation modeling (VB-SEM) method to understand teachers' intention to prepare secondary school students for an AI-enabled future.

3.1 Context and participants

This research was conducted in government-owned secondary schools in south-west Nigeria. Because Nigeria cannot be exempt from the global technological revolution, especially in AI, which continues to permeate every facet of our lives, we gathered the perspectives of in-service teachers to identify factors that could ease AI implementation in schools. Currently, the Nigerian educational system has no policy directives on integrating AI into schools (Sanusi, 2021a). The country has been developing a national AI strategy since the Centre for Artificial Intelligence and Robotics was launched in 2020 to revolutionize the Nigerian digital economy (Oyelere et al., 2022). Nonetheless, nongovernmental organizations (e.g., Data Science Network, DSN, n.d.) and researchers have launched initiatives to promote AI learning (Sanusi et al., 2023) and to build AI capabilities for teaching and learning among both teachers and students.

A random sampling method was used to recruit participants across several schools in a south-western state in Nigeria. The online questionnaire was shared with willing school teachers through social media platforms and teachers' association online forums in the region. Data were gathered through the online survey within one month. This study followed the guidelines for ethical and responsible research of the Finnish National Board on Research Integrity (TENK, n.d.). Table 1 details the demographic information of our respondents. The largest age group, about 41.3% of respondents, was within 30 years. Females and males made up 51.2% and 48.8% of the sample, respectively. Nearly all respondents hold STEM-related degrees, and 62% taught such subjects at the upper secondary level. About 75% teach in public schools, and 68% are based in urban areas. We used IBM SPSS Statistics to analyze the demographic data of the study participants.

3.2 Measures

Planned behavior theory was extended in this study to include AI anxiety, perceived usefulness, attitude towards AI, AI relevance, AI readiness, AI for social good, and confidence in AI. The survey used to gather the teachers' views comprises two segments. The first segment elicits the teachers' demographic details, including gender, age, the grade level they teach, their area of specialization (Science, Commercial, or Art), school type, and location. The second segment contains the teachers' self-reported perceptions on 31 items covering the eight constructs examined in the study. Although the items were adapted from existing, validated scales, we established content validity through expert review to ensure they were appropriate for the teacher participants. Furthermore, items from previous studies were updated and revised to meet our needs and improve applicability. The subscales are briefly described below.

Except for the readiness subscale, adapted from Keramati et al. (2011), and the relevance subscale, adapted from Dai et al. (2020), all construct items were adopted from Ayanwale et al. (2022) (see also Ayanwale & Sanusi, 2023). On a six-point scale ranging from Strongly disagree (1) to Strongly agree (6), the educators stated their level of agreement with statements about their perspectives and their intention to teach AI in their future teaching practice. The five AI readiness items, modified from Keramati et al. (2011), reflect teachers' perceived comfort with AI and access to AI resources (e.g., "I have access to relevant content to promote AI in my class"). The AI relevance subscale was modified from Dai et al.'s (2020) study; we adapted four of the five items in the original study (see Appendix). One example item is "AI content will be related to things I have seen, done, or thought about in my own life." Furthermore, the instruments used in this study were tested for reliability and validity, and all measures met acceptable benchmarks. The measures included AI anxiety, attitude towards AI, perceived usefulness of AI, AI relevance, AI readiness, and behavioral intention to teach AI. Their scores ranged from 0.868 to 0.952 for reliability and from 0.592 to 0.859 for validity. These results suggest that the measures accurately assess the constructs they are intended to measure and that the items are internally consistent. Overall, the measures are considered effective and appropriate tools for measuring teachers' intention to prepare school students for AI education.
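Reliability and convergent validity figures of this kind are usually computed from standardized outer loadings. As a minimal sketch, assuming the common PLS-SEM formulas, composite reliability ρC = (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE = Σλ²/n, and using hypothetical loadings rather than the study's data, the two statistics can be computed as follows:

```python
def composite_reliability(loadings):
    """Composite reliability (rho_C) from standardized outer loadings."""
    total = sum(loadings)
    # Error variance of each standardized indicator is 1 - loading^2.
    error_var = sum(1 - lam ** 2 for lam in loadings)
    return total ** 2 / (total ** 2 + error_var)


def average_variance_extracted(loadings):
    """Average variance extracted (AVE): mean of the squared standardized loadings."""
    return sum(lam ** 2 for lam in loadings) / len(loadings)


# Hypothetical loadings for a four-item construct (illustrative, not the study's data).
loadings = [0.82, 0.85, 0.78, 0.88]
cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)
print(f"rho_C = {cr:.3f}, AVE = {ave:.3f}")  # rho_C = 0.901, AVE = 0.694
print("meets 0.70 / 0.50 benchmarks:", cr >= 0.70 and ave >= 0.50)
```

Because ρC weights items by their loadings, it is typically somewhat higher than Cronbach's alpha computed on the same indicators.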

3.3 Data analysis

The analysis in this study was conducted using SmartPLS 4.0.7 software (Ringle et al., 2015). We analyzed the quantitative data using VB-SEM because the approach readily handles complex models with many indicators and constructs, avoids identification problems, and has a causal-predictive focus (Akter et al., 2017). In addition, the method is well suited to studies involving complex structural models and testing current theories (Ayanwale et al., 2023; Ringle et al., 2020). Partial least squares structural equation modeling (PLS-SEM) involves assessing the measurement model first and then the structural model (Ringle et al., 2020; Wong, 2013). The measurement model assessment ensures that indicators load adequately and that constructs show acceptable convergent validity, composite reliability, and discriminant validity before the structural model is evaluated. Structural model assessment then requires evaluating the path coefficients and their significance.

4 Result

4.1 Measurement model assessment

To confirm the constructs' reliability and validity, the measurement model was scrutinized in the first phase of the evaluation process (Amusa & Ayanwale, 2021; Hair et al., 2017). The initial model included 25 items. Two items were removed because their factor loadings fell below the suggested benchmark of 0.60 (Hair et al., 2016): AN1 (0.143), "I feel my heart sinking when I hear about AI advancement," and PU3 (-0.009), "Using AI technology improves my performance." The final measurement model therefore consisted of 23 items (see Table 2). For each construct, the average variance extracted (AVE) equals or exceeds 0.50, and composite reliability (ρC), Cronbach's alpha, and rho (ρA) equal or exceed 0.70 (Hair et al., 2017; Sarstedt et al., 2022). Thus, convergent validity and reliability are established. In addition, Table 3 shows that all heterotrait-monotrait (HTMT) ratios fall below the 0.85 cutoff for discriminant validity (Franke & Sarstedt, 2019; Hair et al., 2022; Henseler et al., 2015). As a result, each construct is empirically distinct and captures a phenomenon not captured by the other constructs in the PLS model (Franke & Sarstedt, 2019; Radomir & Moisescu, 2020; Sarstedt et al., 2022; Voorhees et al., 2016).
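The pruning rule and the HTMT criterion described above can be sketched in a few lines. This is a minimal illustration, assuming positively correlated indicators and the HTMT definition of Henseler et al. (2015): the mean between-construct item correlation divided by the geometric mean of the average within-construct item correlations. The item names q1 to q4, the helper names, and all correlations are hypothetical; only the loadings 0.143 and -0.009 echo the two removed items.

```python
from itertools import combinations
from math import sqrt
from statistics import fmean


def retain_items(loadings, threshold=0.60):
    """Keep indicators whose standardized loading reaches the threshold."""
    return {item: value for item, value in loadings.items() if value >= threshold}


def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr    -- item correlation matrix as a list of lists
    items_a -- row indices of construct A's indicators (two or more)
    items_b -- row indices of construct B's indicators (two or more)
    """
    hetero = fmean(corr[i][j] for i in items_a for j in items_b)
    mono_a = fmean(corr[i][j] for i, j in combinations(items_a, 2))
    mono_b = fmean(corr[i][j] for i, j in combinations(items_b, 2))
    return hetero / sqrt(mono_a * mono_b)


# Hypothetical loadings: q1 and q3 fall below 0.60 and are dropped.
loadings = {"q1": 0.143, "q2": 0.78, "q3": -0.009, "q4": 0.84}
retained = retain_items(loadings)
print(sorted(retained))  # ['q2', 'q4']

# Hypothetical correlations: items 0-1 measure construct A, items 2-3 construct B.
corr = [
    [1.00, 0.70, 0.30, 0.35],
    [0.70, 1.00, 0.25, 0.30],
    [0.30, 0.25, 1.00, 0.60],
    [0.35, 0.30, 0.60, 1.00],
]
value = htmt(corr, [0, 1], [2, 3])
print(f"HTMT = {value:.3f}, below 0.85: {value < 0.85}")  # HTMT = 0.463, below 0.85: True
```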

4.2 Structural model assessment

The coefficients of determination (R²) for AT, BI, PU, RA, and RE are 0.535, 0.758, 0.763, 0.644, and 0.723, respectively, indicating that the predictor constructs explain a substantial share of the variance in each endogenous construct. These R² values exceed the minimum threshold of 0.10 proposed by Falk and Miller (1992), supporting the model's in-sample predictive power (Sarstedt et al., 2014; Hair et al., 2019, 2021). In addition, we computed the effect size f², which indicates how strongly an exogenous construct affects an endogenous construct, based on the change in R² when that construct is omitted. In the model, AT, PU, RA, and RE predict BI; PU predicts AT and RA; AN predicts PU; and RA predicts RE. All of these predictors had large effects on their endogenous constructs (f² > 0.35; Cohen, 2013), except AN → PU and RE → BI.
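The effect size described above follows Cohen's formula f² = (R²_included − R²_excluded) / (1 − R²_included), where R²_excluded is the R² of the endogenous construct after removing one predictor. A minimal sketch, assuming Cohen's conventional cut-offs (0.02 small, 0.15 medium, 0.35 large); the excluded R² below is hypothetical, and only 0.758 is the reported R² for BI:

```python
def f_squared(r2_included, r2_excluded):
    """Cohen's f^2 for one predictor, from R^2 with and without that predictor."""
    return (r2_included - r2_excluded) / (1 - r2_included)


def effect_label(f2):
    """Cohen's conventional cut-offs: 0.02 small, 0.15 medium, 0.35 large."""
    if f2 >= 0.35:
        return "large"
    if f2 >= 0.15:
        return "medium"
    if f2 >= 0.02:
        return "small"
    return "negligible"


# R^2 for BI with all predictors (0.758, as reported) versus a hypothetical
# R^2 of 0.600 after dropping one predictor; the second value is illustrative only.
f2 = f_squared(0.758, 0.600)
print(f"f2 = {f2:.3f} ({effect_label(f2)})")  # f2 = 0.653 (large)
```

Because the denominator is 1 − R²_included, the same drop in R² yields a larger f² when the full model already explains most of the variance.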

Following the evaluation of the measurement model, the structural model was examined. Researchers should first ensure that collinearity among predictor constructs does not bias the structural model estimates. Variance inflation factors (VIFs) ranged from 1.562 to 2.885, suggesting no multicollinearity in the model (Akinwande et al., 2015; Hair et al., 2019). Afterward, the relationships between the constructs were tested. The significance of the direct paths and their standard errors were evaluated using bootstrap resampling with 10,000 resamples (Ringle et al., 2015). The results for the direct associations are summarized in Table 4. Finally, we found that AI for social good (SG) moderated the AN → PU, PU → AT, PU → RA, and AT → BI paths, and that confidence in teaching AI (CON) moderated the PU → AT, PU → RA, and RA → BI paths. The results of the moderation analysis are shown in Table 5.
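The collinearity check and the bootstrap can be illustrated with a simplified sketch that treats construct scores as observed variables. Assumptions: with only two predictors, the VIF reduces to 1/(1 − r²), where r is their correlation; and with a single standardized predictor, the path coefficient equals the Pearson correlation, so the bootstrap resamples cases with replacement, recomputes r, and divides the original estimate by the bootstrap standard deviation to obtain t. SmartPLS performs these steps on the full model; the function names and synthetic data here are hypothetical.

```python
import random
from math import sqrt
from statistics import fmean, stdev


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def vif_two_predictors(x1, x2):
    """VIF when an endogenous construct has exactly two predictors: 1 / (1 - r^2)."""
    r = pearson(x1, x2)
    return 1 / (1 - r ** 2)


def bootstrap_path(x, y, resamples=10_000, seed=1):
    """Estimate, bootstrap SE, and t-value for a single standardized path.

    With one standardized predictor, the OLS path coefficient equals r.
    """
    rng = random.Random(seed)
    n = len(x)
    estimate = pearson(x, y)
    boot = []
    for _ in range(resamples):
        idx = [rng.randrange(n) for _ in range(n)]  # draw cases with replacement
        boot.append(pearson([x[i] for i in idx], [y[i] for i in idx]))
    se = stdev(boot)
    return estimate, se, estimate / se


# Synthetic construct scores (not the study's data).
rng = random.Random(42)
x1 = [rng.gauss(0, 1) for _ in range(200)]
x2 = [0.6 * a + rng.gauss(0, 0.8) for a in x1]
y = [0.5 * a + rng.gauss(0, 0.8) for a in x1]

vif = vif_two_predictors(x1, x2)
print(f"VIF = {vif:.2f}")
# Fewer resamples than the study's 10,000 to keep the demonstration fast.
estimate, se, t = bootstrap_path(x1, y, resamples=2_000)
print(f"beta = {estimate:.3f}, SE = {se:.3f}, t = {t:.2f}")
```

Resampling whole cases (rather than residuals) keeps each respondent's scores together, which is the usual choice for bootstrapping path coefficients in PLS-SEM.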

Table 4 shows substantial evidence that AT, PU, and RA influence BI (β = 0.389, t = 5.905, p < 0.05; β = 0.134, t = 3.594, p < 0.05; and β = 0.435, t = 6.765, p < 0.05, respectively). As a result, H2, H5, and H6 were supported. A similar pattern emerges for the effects of AN on PU (β = -0.272, t = 3.605, p < 0.05) and RA on RE (β = 0.522, t = 11.031, p < 0.05), supporting H1 and H7. PU also had a substantial direct and positive influence on AT (β = 0.731, t = 9.901, p < 0.05) and RA (β = 0.668, t = 10.180, p < 0.05), supporting H3 and H4. However, RE did not significantly influence BI (β = -0.028, t = 0.754, p > 0.05); therefore, H8 was not supported.
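The 1.96 threshold corresponds to a two-tailed test at α = 0.05 under a normal approximation, which is reasonable with a large number of bootstrap resamples. A minimal sketch converting the reported t-values to p-values, assuming that approximation (software may instead use a t distribution, which gives nearly identical values here); the helper name is hypothetical:

```python
from math import erfc, sqrt


def two_tailed_p(t):
    """Two-tailed p-value for t under a standard normal approximation: 2 * (1 - Phi(|t|))."""
    return erfc(abs(t) / sqrt(2))


# t-values reported above for PU -> BI (3.594) and RE -> BI (0.754), plus the 1.96 cut-off.
for t in (3.594, 0.754, 1.96):
    p = two_tailed_p(t)
    print(f"t = {t:.3f}: p = {p:.4f}, significant at 0.05: {p < 0.05}")
```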

4.3 Moderation effects of AI for social good and confidence in teaching AI

Table 5 shows that AI for social good (SG) and confidence in teaching AI (CON) moderated several associations in the hypothesized model, with t-values exceeding the 1.96 threshold. SG moderated the AT → BI, PU → RA, PU → AT, and AN → PU paths, while CON moderated the RA → BI, PU → RA, and PU → AT paths. However, neither SG nor CON significantly moderated the RE → BI path.
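Moderation of this kind is commonly tested by adding an interaction term (predictor × moderator) and checking whether its coefficient is significant. The sketch below is a simplified stand-in rather than the authors' SmartPLS procedure: it treats construct scores as observed, standardizes the predictor and moderator, and fits y = b0 + b1·x + b2·m + b3·x·m by ordinary least squares, so b3 captures the moderating effect. The function names and data are hypothetical, with a true interaction of 0.3 built into the synthetic scores; significance would then be assessed by bootstrapping b3, as described for the direct paths in Section 4.2.

```python
import random
from statistics import fmean, pstdev


def standardize(values):
    """Z-scores using the population standard deviation."""
    mean, sd = fmean(values), pstdev(values)
    return [(v - mean) / sd for v in values]


def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [rv - factor * cv for rv, cv in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


def ols(rows, y):
    """OLS coefficients from the normal equations (X'X) b = X'y."""
    k = len(rows[0])
    xtx = [[sum(row[i] * row[j] for row in rows) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)


def moderation(x, m, y):
    """Fit y = b0 + b1*zx + b2*zm + b3*(zx*zm); b3 is the interaction (moderating) effect."""
    zx, zm = standardize(x), standardize(m)
    rows = [[1.0, a, b, a * b] for a, b in zip(zx, zm)]
    return ols(rows, y)


# Synthetic scores with a true interaction of 0.3 (illustrative, not the study's data).
rng = random.Random(7)
n = 500
x = [rng.gauss(0, 1) for _ in range(n)]
m = [rng.gauss(0, 1) for _ in range(n)]
y = [0.4 * a + 0.2 * b + 0.3 * a * b + rng.gauss(0, 0.5) for a, b in zip(x, m)]

b0, b1, b2, b3 = moderation(x, m, y)
print(f"b1 = {b1:.3f}, b2 = {b2:.3f}, interaction b3 = {b3:.3f}")
```

Standardizing before forming the product keeps the interaction term less collinear with its components and makes b1 and b2 interpretable as effects at the average level of the other variable.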

5 Discussion and implication

The present study examined how teachers' perceptions of AI for social good and their confidence in teaching AI moderate relationships in our proposed model predicting teachers' BI to teach AI in schools. To our knowledge, this is the first study to examine these moderators from the teachers' perspective. Variance-based structural equation modeling was applied to test eight hypotheses representing the inter-relationships among anxiety, attitude, perceived usefulness, relevance, readiness, and behavioral intention. Seven of these hypotheses, grounded in TAM and planned behavior theory, were supported, and BI's predictors (AT, PU, RA, and RE) explained 75.8% of its variance.

The proposed model is partially supported: H1 to H7 are supported. As demonstrated in previous studies (Antonietti et al., 2022; Bin et al., 2020; Xianhan et al., 2022), perceived usefulness directly influences teachers' acceptance of new technology, and individuals' attitudes directly affect their BI to use or learn technology (Kong & Lin, 2022; Szymkowiak & Jeganathan, 2022). Many studies using TAM report that perceived usefulness and attitude toward use are significant predictors of users' behavioral intentions (Buabeng-Andoh, 2021; Chai et al., 2022; Kumar & Mantri, 2021; Park, 2009; Weng et al., 2018). A strong link has also been confirmed between perceived usefulness and attitude toward technology, including technology anxiety, in predicting behavioral intention (Antonietti et al., 2022; Hwang et al., 2020). These findings suggest that professional development programs should foster teachers' understanding of AI's usefulness and a positive attitude towards using it in the classroom to encourage them to teach AI. Readiness has also been identified as critical in enhancing BI towards technology use or adoption (Shirahada et al., 2019; Tang et al., 2021). According to Wang and Wang (2022), the primary purpose of developing an AI anxiety measure is to predict behavior, which is consistent with this study. While existing studies explored the connection between anxiety and intention (Ayanwale et al., 2022; Chai et al., 2020), this study examined how self-perceived fear and discomfort about AI technologies and products affect perceived usefulness. That is, we asked whether the misunderstandings and misconceptions about AI technologies that contribute to concerns, fears, and anxieties (Wang & Wang, 2022) reduce the perceived usefulness of AI, such as its capacity to help teachers perform their teaching practices effectively; the negative AN → PU path (β = -0.272) suggests that they do.
Relevance, which denotes the connection between learned subject matter and the student's needs, future goals, or environments, was an antecedent of readiness and intention (Ayanwale et al., 2022; Dai et al., 2020). These supported relationships generally agree with earlier related studies (e.g., Chai et al., 2022). The results further showed that AI for social good moderated the AT → BI, PU → RA, PU → AT, and AN → PU relationships, with a positive influence on teachers' BI to teach AI.

Technologies are created to be worthwhile, regardless of whether users are likely to adopt the attitude needed to make them useful. Of the eight hypotheses, only the relationship between RE and BI was not supported. This result disagrees with past research (Shirahada et al., 2019; Tang et al., 2021) contending that readiness is key to increasing the likelihood of adopting technology. In AI education, this finding is also inconsistent with earlier studies (e.g., Ayanwale et al., 2022). While Blut and Wang (2020) argue that technology integration is a complicated process that requires readiness, this finding suggests that readiness alone may not account for the intention to promote AI knowledge, and that factors other than readiness should be considered when implementing AI lessons. Although teachers' readiness appears valuable for integrating AI into educational systems, facilitating conditions and state policies are also important factors that influence teachers' actions and classroom practices. Neither AI for social good nor confidence in teaching AI moderated the RE → BI path, suggesting that these beliefs did not influence this relationship. Many AI researchers have expressed strong support for designing AI for social good, given the potential of AI (Floridi et al., 2020; Tucker, 2019). AI for social good means developing, implementing, and managing artificial intelligence systems to prevent, mitigate, or resolve adverse effects on human health and/or the environment (Cowls et al., 2021). This idea resonates with our finding that social good positively moderated relationships influencing teachers' BI to teach AI (Chai et al., 2022; Qin et al., 2019). It also aligns with the general educational goal of cultivating caring citizens and with school curricula that treat computing as a means for the common good (Goldweber et al., 2011).
Individuals are psychologically motivated to engage in such activities when learning enhances their ability to serve society (Yeager & Bundick, 2009).

According to Moore (2019, p. 2), "Social good shifts between social responsibility, societal impacts, society, common good, the good, development, and ethics." Responsible use of AI and ethics are essential considerations when examining AI's social impact. Existing literature suggests that integrating ethical dilemmas and social challenges into the AI curriculum can increase interest in the course (Saltz et al., 2019; Sanusi & Olaleye, 2022). On this basis, social good can be considered a pedagogical belief, because teaching AI requires teachers to have the pedagogical content knowledge needed to lead such discussions in the classroom. This study highlights the value of social good and confidence in the pedagogical design of AI as a school subject. Since teacher education programs that train future teachers to deliver AI content are non-existent (Sanusi et al., 2022a, b), and few professional learning opportunities exist for teachers to learn the AI content they will teach their students, confidence to teach AI becomes a challenge. The importance of formal teacher training becomes apparent as AI education continues to develop as a global initiative.

The results of this study are expected to contribute to initiatives that encourage teachers to teach AI in schools and support its effective implementation, especially since AI is regarded as an important concept that all teachers should teach, regardless of grade level. The results can also inform professional development programs for educators, which are an essential aspect of AI education. Because the paths in this study are moderated by confidence in teaching AI and by perceptions of AI for social good, researchers and stakeholders could focus on fostering the positive value of AI and instilling confidence in teachers, for example, by helping teachers understand the benefits and risks of AI so that they can teach with sound AI content knowledge. When teachers understand how AI benefits society, such as by helping humans make the right healthcare decisions, they are more likely to engage actively in planning and teaching AI. Many of the risks of AI arise from human bias; therefore, addressing these risks and biases in teacher professional programs may help AI do more good than harm to society. Moreover, introducing successful cases or good practices in these programs can boost teachers' confidence and help them visualize AI teaching. In conclusion, teachers play a significant role in preparing the next generation of students to learn AI. Teachers must promote AI in the classroom to ensure that AI is implemented in schools and that students are prepared for a future in which humans and AI work together.

5.1 Limitations and future work

We identified several limitations of the study, related specifically to generalizability and self-reported perspectives. First, we randomly selected our participants from one south-western state in Nigeria, so the sample may not represent the opinions of teachers across the region. Future studies should use a more strategic sampling design. Second, the respondents may not yet have clearly understood AI and its applications in education; they may therefore have provided premature responses to the measurement items based on their beliefs rather than on knowledge of AI and AI education. We sought to mitigate this limitation by providing sufficient instructions throughout the survey. Future studies could be tied to a specific curriculum to establish whether teachers' self-reported data accurately represent their understanding of AI. Third, we did not consider participants' prior AI knowledge and experience, which could influence teachers' intentions or attitudes toward AI; future studies could explore these crucial variables. In addition, professional learning opportunities should be designed for in-service teachers to learn the content they will teach. These may include co-designing learning resources with researchers and incorporating AI across the curriculum, that is, infusing AI concepts into non-computing subjects (Sanusi et al., 2022a, b). Finally, examining whether demographic variables (e.g., gender) mediate or moderate the relationships among the constructs could generate valuable insights.

6 Conclusion

While previous studies have provided some insight into the conceptual basis of this rapidly growing domain, they have not considered teachers' perspectives. The current paper presents empirical evidence on teachers' perceptions of psychological factors that affect their intention to teach AI. The factors considered include AI anxiety, perceived usefulness, attitude towards AI, AI relevance, AI readiness, and behavioral intention. We further provided a nuanced understanding of teachers' perspectives by examining the moderating effects of teachers' perceptions of AI for social good and their confidence in teaching AI. Through a closed-ended questionnaire, we surveyed teachers' perspectives on the eight constructs considered in the study. Of the nine hypotheses tested, only two were not supported: AI anxiety does not influence perceived usefulness, and AI readiness does not affect behavioral intention to teach AI. The relevance of AI emerged as the most significant predictor of behavioral intention to teach AI. For the indirect effects, relevance was the strongest predictor of readiness, followed by perceived usefulness and attitude toward AI. AI for social good and confidence did not moderate the path between readiness and behavioral intention, whereas the other moderated paths in the research model were supported. Identifying the factors that influence teachers to teach AI in schools contributes to existing work on AI education development as the initiative continues to generate global interest.

Data availability

All data generated or analyzed during this study are included in this manuscript.

Code availability

Not applicable.

References

Abdullah, F., & Ward, R. (2016). Developing a general extended technology acceptance model for E-learning (GETAMEL) by analyzing commonly used external factors. Computers in Human Behavior, 56, 238–256. https://doi.org/10.1016/j.chb.2015.11.036

Ajzen, I. (1991). The theory of planned behavior. Organizational Behavior and Human Decision Processes, 50, 179–211. https://doi.org/10.1016/0749-5978(91)90020-T

Ajzen, I. (2002). Perceived behavioral control, self-efficacy, locus of control, and the theory of planned behavior. Journal of Applied Social Psychology, 32(4), 665–683. https://doi.org/10.1111/j.1559-1816.2002.tb00236.x

Ajzen, I. (2012). The theory of planned behavior. In P. A. M. Van Lange, A. W. Kruglanski, & E. T. Higgins (Eds.), Handbook of Theories of Social Psychology (pp. 438–459). Sage.

Akinwande, M. O., Dikko, H. G., & Agboola, S. (2015). Variance inflation factor: As a condition for the inclusion of suppressor variable(s) in regression analysis. Open Journal of Statistics, 5(7), 754–767.

Akter, S., Fosso Wamba, S., & Dewan, S. (2017). Why PLS-SEM is suitable for complex modelling? An empirical illustration in big data analytics quality. Production Planning & Control, 28(11–12), 1011–1021.

Amusa, J. O., & Ayanwale, M. A. (2021). Partial Least Square Modeling of Personality Traits and Academic Achievement in Physics. Asian Journal of Assessment in Teaching and Learning, 11(2), 77–92. https://doi.org/10.37134/ajatel.vol11.2.8.2021

Antonietti, C., Cattaneo, A., & Amenduni, F. (2022). Can teachers’ digital competence influence technology acceptance in vocational education? Computers in Human Behavior, 132, 107266.

Aung, Z. H., Sanium, S., Songsaksuppachok, C., Kusakunniran, W., Precharattana, M., Chuechote, S., & Ritthipravat, P. (2022). Designing a novel teaching platform for AI: A case study in a Thai school context. Journal of Computer Assisted Learning, 38(6), 1714–1729.

Ayanwale, M. A., Molefi, R. R., & Matsie, N. (2023). Modelling secondary school students’ attitudes toward TVET subjects using social cognitive and planned behavior theories. Social Sciences & Humanities Open, 8(1), 100478.

Ayanwale, M. A., Sanusi, I. T., Adelana, O. P., Aruleba, K., & Oyelere, S. S. (2022). Teachers’ Readiness and Intention to Teach Artificial Intelligence in Schools. Computers and Education: Artificial Intelligence, 3, 1–11. https://doi.org/10.1016/j.caeai.2022.100099

Ayanwale, M. A., & Sanusi, I. T. (2023). Perceptions of STEM vs. Non-STEM Teachers toward Teaching Artificial Intelligence. 2023 IEEE AFRICON Conference Proceedings (Accepted). IEEE.

Bin, E., Islam, A. A., Gu, X., Spector, J. M., & Wang, F. (2020). A study of Chinese technical and vocational college teachers’ adoption and gratification in new technologies. British Journal of Educational Technology, 51(6), 2359–2375.

Blut, M., & Wang, C. (2020). Technology readiness: A meta-analysis of conceptualizations of the construct and its impact on technology usage. Journal of the Academy of Marketing Science, 48, 649–669.

Buabeng-Andoh, C. (2021). Exploring University students’ intention to use mobile learning: A research model approach. Education and Information Technologies, 26(1), 241–256.

Chai, C. S., Lin, P. Y., Jong, M. S. Y., Dai, Y., Chiu, T. K., & Qin, J. (2021). Perceptions of and behavioral intentions towards learning artificial intelligence in primary school students. Educational Technology & Society, 24(3), 89–101.

Chai, C. S., Wang, X., & Xu, C. (2020). An extended theory of planned behavior for the modeling of Chinese secondary school students’ intention to learn Artificial Intelligence. Mathematics, 8(11), 2089.

Chai, C. S., Teo, T., Huang, F., & Chiu, T. K. (2022). Secondary school students’ intentions to learn AI: Testing moderation effects of readiness, social good and optimism. Educational Technology Research and Development, 70(3), 765–782.

Chen, H., Park, H. W., & Breazeal, C. (2020). Teaching and learning with children: Impact of reciprocal peer learning with a social robot on children’s learning and emotive engagement. Computers & Education, 150, 103836.

Chen, X., Zou, D., Xie, H., Cheng, G., & Liu, C. (2022). Two Decades of Artificial Intelligence in Education: Contributors, Collaborations, Research Topics, Challenges, and Future Directions. Educational Technology & Society, 25(1), 28–47.

Chiu, T. K. (2021). A holistic approach to the design of artificial intelligence (AI) education for K-12 schools. TechTrends, 65(5), 796–807.

Cohen, J. (2013). Statistical power analysis for the behavioral sciences. Routledge.

Cowls, J., Tsamados, A., Taddeo, M., & Floridi, L. (2021). A definition, benchmark, and database of AI for social good initiatives. Nature Machine Intelligence, 3(2), 111–115.

Dai, Y., Chai, C. S., Lin, P. Y., Jong, M. S. Y., Guo, Y., & Qin, J. (2020). Promoting students’ well-being by developing their readiness for the artificial intelligence age. Sustainability (Switzerland), 12(16), 1–15. https://doi.org/10.3390/su12166597

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319–340.

DSN. (n.d.). Data scientists network, AI for kids and teens. Retrieved January 31, 2023, from https://www.datasciencenigeria.org/ai-for-kids-and-teens/

Duncan, C., & Sankey, D. (2019). Two conflicting visions of education and their consilience. Educational Philosophy and Theory, 51, 1454–1464.

Elnagar, A., Alnazzawi, N., Afyouni, I., Shahin, I., Nassif, A. B., & Salloum, S. A. (2022). Prediction of the intention to use a smartwatch: A comparative approach using machine learning and partial least squares structural equation modeling. Informatics in Medicine Unlocked, 29, 100913.

Falk, R. F., & Miller, N. B. (1992). A primer for soft modeling. University of Akron Press.

Fishbein, M., & Ajzen, I. (2010). Predicting and changing behavior: The reasoned action approach. Psychology Press.

Floridi, L., Cowls, J., King, T. C., & Taddeo, M. (2020). How to Design AI for Social Good: Seven Essential Factors. Science and Engineering Ethics, 26, 1771–1796. https://doi.org/10.1007/s11948-020-00213-5

Franke, G. R., & Sarstedt, M. (2019). Heuristics versus statistics in discriminant validity testing: A comparison of four procedures. Internet Research, 29(3), 430–447.

Goldweber, M., Davoli, R., Little, J. C., Riedesel, C., Walker, H., Cross, G., & Von Konsky, B. R. (2011). Enhancing the social issues components in our computing curriculum: Computing for the social good. ACM Inroads, 2(1), 64–82. https://doi.org/10.1145/1929887.1929907

Hair, J. F., Hult, G. T. M., Ringle, C. M., & Sarstedt, M. (2017). A Primer on Partial Least Squares Structural Equation Modeling (PLS‐SEM) (2nd ed.). Sage.

Hair, J. F., Hult, G. T. M., Ringle, C. M., & Sarstedt, M. (2022). A Primer on Partial Least Squares Structural Equation Modeling (PLS‐SEM) (3rd ed.). Sage.

Hair, J. F., Hult, G. T. M., Ringle, C. M., Sarstedt, M., Danks, N. P., & Ray, S. (2021). Partial Least Squares Structural Equation Modeling (PLS-SEM) Using R. Springer.

Hair, J. F., Hult, G. T. M., Ringle, C., & Sarstedt, M. (2016). A primer on partial least squares structural equation modeling (PLS-SEM). Sage publications.

Hair, J. F., Risher, J. J., Sarstedt, M., & Ringle, C. M. (2019). When to use and how to report the results of PLS-SEM. European Business Review, 31(1), 2–24.

Henseler, J., Ringle, C. M., & Sarstedt, M. (2015). A new criterion for assessing discriminant validity in variance-based structural equation modeling. Journal of the Academy of Marketing Science, 43(1), 115–135.

Huang, W., Huang, W., Diefes-Dux, H., & Imbrie, P. K. (2006). A preliminary validation of Attention, Relevance, Confidence and Satisfaction model-based Instructional Material Motivational Survey in a computer-based tutorial setting. British Journal of Educational Technology, 37(2), 243–259.

Hwang, G. J., Xie, H., Wah, B. W., & Gašević, D. (2020). Vision, challenges, roles and research issues of Artificial Intelligence in Education. Computers and Education: Artificial Intelligence, 1. https://doi.org/10.1016/j.caeai.2020.100001

Ifinedo, P. (2006). Acceptance and continuance intention of Web-based Learning Technologies (WLT) use among university students in a Baltic country. The Electronic Journal of Information Systems in Developing Countries, 23(6), 1–20.

Johnson, D. G., & Verdicchio, M. (2017). AI anxiety. Journal of the Association for Information Science and Technology, 68(9), 2267–2270.

Jong, M. S. Y. (2019). Sustaining the adoption of gamified outdoor social inquiry learning in high schools through addressing teachers’ emerging concerns: A three-year study. British Journal of Educational Technology, 50(3), 1275–1293.

Jong, M. S. Y., & Shang, J. J. (2015). Impeding phenomena emerging from students’ constructivist online game-based learning process: Implications for the importance of teacher facilitation. Educational Technology & Society, 18(2), 262–283.

Keramati, A., Afshari-Mofrad, M., & Kamrani, A. (2011). The role of readiness factors in E-learning outcomes: An empirical study. Computers & Education, 57(3), 1919–1929.

Kong, S. C., & Lin, T. (2022). High achievers’ attitudes, flow experience, programming intentions and perceived teacher support in primary school: A moderated mediation analysis. Computers & Education, 190, 104598.

Kumar, A., & Mantri, A. (2021). Evaluating the attitude towards the intention to use ARITE system for improving laboratory skills by engineering educators. Education and Information Technologies. https://doi.org/10.1007/s10639-020-10420-z

Lee, I., & Perret, B. (2022). Preparing High School Teachers to Integrate AI Methods into STEM Classrooms. Association for the Advancement of Artificial Intelligence.

Lin, H. C., Tu, Y. F., Hwang, G. J., & Huang, H. (2021). From precision education to precision medicine: Factors affecting medical staff’s intention to learn to use AI applications in hospitals. Educational Technology & Society, 24(1), 123–137.

Lin, P., & Van Brummelen, J. (2021). Engaging teachers to Co-design integrated AI curriculum for K-12 classrooms. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems (pp. 1–12).

Liu, X. (2010). Empirical testing of a theoretical extension of the technology acceptance model: an exploratory study of educational wikis. Communication Education, 59(1), 52–69. https://doi.org/10.1080/03634520903431745

Mahipal, V., Ghosh, S., Sanusi, I. T., Ma, R., Gonzales, J. E., & Martin, F. G. (2023). DoodleIt: A novel tool and approach for teaching how CNNs perform image recognition. In Proceedings of the 25th Australasian Computing Education Conference (pp 31–38).

Moore, J. (2019). AI for Not Bad. Frontiers in Big Data, 2, 32. https://doi.org/10.3389/fdata.2019.00032

Oyelere, S. S., Sanusi, I. T., Agbo, F. J., Oyelere, A. S., Omidiora, J. O., Adewumi, A. E., & Ogbebor, C. (2022). Artificial Intelligence in African Schools: Towards a Contextualized Approach. In 2022 IEEE global engineering education conference (EDUCON) (pp. 1577–1582). IEEE.

Parasuraman, A. (2000). Technology Readiness Index (TRI) a multiple-item scale to measure readiness to embrace new technologies. Journal of Service Research, 2(4), 307–320.

Park, N., Roman, R., Lee, S., & Chung, J. E. (2009). User acceptance of a digital library system in developing countries: An application of the Technology Acceptance Model. International Journal of Information Management, 29(3), 196–209.

Park, S. (2015). The Effects of Social Cue Principles on Cognitive Load, Situational Interest, Motivation, and Achievement in Pedagogical Agent Multimedia Learning. Educational Technology & Society, 18(4), 211–229.

Park, S. Y. (2009). An analysis of the Technology Acceptance Model in understanding university students’ behavioral intention to use e-Learning. Educational Technology & Society, 12(3), 150–162.

Park, Y., Son, H., & Kim, C. (2012). Investigating the determinants of construction professionals' acceptance of web-based training: an extension of the technology acceptance model. Automation in Construction, 22, 377–386.

Purnomo, S. H., & Lee, Y. (2013). E-learning adoption in the banking workplace in Indonesia: an empirical study. Information Development, 29(2), 138–153. https://doi.org/10.1177/0266666912448258

Qin, J. J., Ma, F. G., & Guo, Y. M. (2019). Foundations of artificial intelligence for primary school. Beijing, China: Popular Science Press.

Radomir, L., & Moisescu, O. I. (2020). Discriminant validity of the customer-based corporate reputation scale: Some causes for concern. Journal of Product & Brand Management, 29(4), 457–469.

Ringle, C. M., Wende, S., & Becker, J.‐M. (2015). SmartPLS 3. Bönningstedt: SmartPLS. Retrieved from https://www.smartpls.com/. Accessed 12 Nov 2022

Ringle, C. M., Sarstedt, M., Mitchell, R., & Gudergan, S. P. (2020). Partial least squares structural equation modeling in HRM research. The International Journal of Human Resource Management, 31(12), 1617–1643. https://ssrn.com/abstract=2233795. Accessed 12 Nov 2022

Saltz, J., Skirpan, M., Fiesler, C., Gorelick, M., Yeh, T., Heckman, R., ... & Beard, N. (2019). Integrating ethics within machine learning courses. ACM Transactions on Computing Education (TOCE), 19(4), 1–26.

Sanusi, I. T., & Oyelere, S. S. (2020). Pedagogies of machine learning in K-12 context. In 2020 IEEE Frontiers in Education Conference (FIE) (pp. 1–8). IEEE.

Sanusi, I. T. (2021a). Intercontinental evidence on learners’ differentials in sense-making of machine learning in schools. In Proceedings of the 21st Koli Calling International Conference on Computing Education Research (pp 1–2).

Sanusi, I. T. (2021b). Teaching machine learning in K-12 education. In Proceedings of the 17th ACM conference on international computing education research (pp. 395–397).

Sanusi, I. T., & Olaleye, S. A. (2022). An insight into cultural competence and ethics in K-12 artificial intelligence education. In 2022 IEEE Global Engineering Education Conference (EDUCON) (pp. 790–794). IEEE.

Sanusi, I. T., Oyelere, S. S., & Omidiora, J. O. (2022a). Exploring teachers’ preconceptions of teaching machine learning in high school: A preliminary insight from Africa. Computers and Education Open, 3, 100072.

Sanusi, I. T., Oyelere, S. S., Vartiainen, H., Suhonen, J., & Tukiainen, M. (2022b). A systematic review of teaching and learning machine learning in K-12 education. Education and Information Technologies, 1–31.

Sanusi, I. T., Oyelere, S. S., Vartiainen, H., Suhonen, J., & Tukiainen, M. (2023). Developing middle school students’ understanding of machine learning in an African school. Computers and Education: Artificial Intelligence, 100155.

Sarstedt, M., Hair, J. F., Pick, M., Liengaard, B. D., Radomir, L., & Ringle, C. M. (2022). Progress in partial least squares structural equation modeling use in marketing research in the last decade. Psychology & Marketing, 39, 1035–1064. https://doi.org/10.1002/mar.21640

Sarstedt, M., Ringle, C. M., Henseler, J., & Hair, J. F. (2014). On the emancipation of PLS-SEM: A Commentary on Rigdon (2012). Long Range Planning, 47(3), 154–160.

Scherer, R., & Teo, T. (2019). Unpacking teachers’ intentions to integrate technology: A meta-analysis. Educational Research Review, 27, 90–109.

Shirahada, K., Ho, B. Q., & Wilson, A. (2019). Online public services usage and the elderly: Assessing determinants of technology readiness in Japan and the UK. Technology in Society, 58, 101115.

Smith, P. (2005). Learning Preferences and Readiness for Online Learning. Educational Psychology, 25, 3–12.

Szymkowiak, A., & Jeganathan, K. (2022). Predicting user acceptance of peer‐to‐peer e‐learning: An extension of the technology acceptance model. British Journal of Educational Technology, 53(6), 1993–2011.

Tang, X., & Chen, Y. (2018). Fundamentals of Artificial Intelligence. East China Normal University. ISBN 9787567575615.

Tang, Y. M., Chen, P. C., Law, K. M., Wu, C. H., Lau, Y. Y., Guan, J., ... & Ho, G. T. (2021). Comparative analysis of Student's live online learning readiness during the coronavirus (COVID-19) pandemic in the higher education sector. Computers & Education, 168, 104211.

TENK. (n.d.). Finnish Advisory Board on Research Integrity – Responsible conduct in research and procedures for handling allegations of misconduct in Finland. https://tenk.fi/sites/default/files/2023-05/RI_Guidelines_2023.pdf (Accessed 23 July 2023).

Tucker, C. (2019). Privacy, Algorithms, and Artificial Intelligence. In A. Agrawal, J. Gans, & A. Goldfarb (Eds.), The Economics of Artificial Intelligence (pp. 423–438). University of Chicago Press. https://doi.org/10.7208/9780226613475-019

Voorhees, C. M., Brady, M. K., Calantone, R., & Ramirez, E. (2016). Discriminant validity testing in marketing: An analysis, causes for concern, and proposed remedies. Journal of the Academy of Marketing Science, 44(1), 119–134.

Wang, Y. Y., & Wang, Y. S. (2022). Development and validation of an artificial intelligence anxiety scale: An initial application in predicting motivated learning behavior. Interactive Learning Environments, 30(4), 619–634.

Weng, F., Yang, R. J., Ho, H. J., & Su, H. M. (2018). A TAM-based study of the attitude towards use intention of multimedia among School Teachers. Applied System Innovation, 1(3), 36. https://doi.org/10.3390/asi1030036

Williams, R., Park, H. W., Oh, L., & Breazeal, C. (2019). Popbots: Designing an artificial intelligence curriculum for early childhood education. In Proceedings of the AAAI Conference on Artificial Intelligence 33(1), pp. 9729–9736.

Wong, K. K.-K. (2013). Partial least squares structural equation modeling (PLS-SEM) techniques using SmartPLS. Marketing Bulletin, 24(1), 1–32. http://marketing-bulletin.massey.ac.nz

Xia, Q., Chiu, T. K., Lee, M., Sanusi, I. T., Dai, Y., & Chai, C. S. (2022). A self-determination theory (SDT) design approach for inclusive and diverse artificial intelligence (AI) education. Computers & Education, 189, 104582.

Xianhan, H., Chun, L., Mingyao, S., & Caixia, S. (2022). Associations of different types of informal teacher learning with teachers’ technology integration intention. Computers & Education, 190, 104604.

Yeager, D. S., & Bundick, M. J. (2009). The role of purposeful work goals in promoting meaning in life and in schoolwork during adolescence. Journal of Adolescent Research, 24(4), 423–452.

Acknowledgements

The authors sincerely appreciate everyone who contributed to the success of this research.

Funding

Open access funding provided by University of Eastern Finland (including Kuopio University Hospital). This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Ethics declarations

Competing interests

The authors declare that they have no competing interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Please rate your agreement on the following questions regarding your perception of the items below, where 1 = strongly disagree through to 6 = strongly agree.

ANXIETY

- AN2: When I think about AI, I cannot answer many questions about my future.
- AN3: When I consider the capabilities of AI, I think about how difficult my future will be.
- AN4: I have an uneasy, upset feeling when I think about AI.

PERCEIVED USEFULNESS

- PU1: Using AI technology enables me to accomplish tasks more quickly
- PU2: Using AI technology enhances my effectiveness
- PU4: Using AI technology increases my productivity

AI FOR SOCIAL GOOD

- SG1: AI can be used to help disadvantaged people.
- SG2: AI can promote human well-being.
- SG3: I wish to use AI knowledge to serve others.
- SG4: The use of AI should aim to achieve common good.

ATTITUDE TOWARDS USING AI

- AT1: Using AI technology is pleasant.
- AT2: I find using AI technology to be enjoyable
- AT3: I have fun using AI technology.

CONFIDENCE IN TEACHING AI

- CON1: I am confident I can introduce the most complex material about AI in class.
- CON2: I believe that I can succeed in demystifying AI for student if I try hard enough.
- CON3: I feel confident that I will support students learning of AI in my class.
- CON4: I am confident I can teach the basic concepts about AI in class.

BEHAVIOURAL INTENTION

- BI1: I will continue to learn about AI knowledge
- BI2: I will keep myself updated with the latest AI applications.
- BI3: I plan to spend time in learning AI technology in the future.
- BI4: I will pay more attention to emerging AI applications.
- BI5: I intend to use AI to assist my teaching.

RELEVANCE OF AI

- RA1: Learning AI in class will be useful
- RA2: AI content will be related to things I have seen, done or thought about in my own life.
- RA3: It is clear to me how the content of AI is related to my lifestyle.
- RA4: The content of AI will be useful to me in terms of learning the concept effectively.

READINESS

- RE1: I have the relevant knowledge to teach AI in my class
- RE2: I have access to appropriate hardware to teach AI in my class
- RE3: I have access to appropriate software to teach AI in my class
- RE4: I have access to relevant content to teach AI in my class
- RE5: My school administration will support the teaching of AI in my class

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Sanusi, I.T., Ayanwale, M.A. & Chiu, T.K.F. Investigating the moderating effects of social good and confidence on teachers' intention to prepare school students for artificial intelligence education. Educ Inf Technol 29, 273–295 (2024). https://doi.org/10.1007/s10639-023-12250-1

DOI: https://doi.org/10.1007/s10639-023-12250-1