Abstract

A Bayesian treatment of deep learning allows for the computation of uncertainties associated with the predictions of deep neural networks. We show how the concept of Errors-in-Variables can be used in Bayesian deep regression to also account for the uncertainty associated with the input of the employed neural network. The presented approach thereby exploits a relevant, but generally overlooked, source of uncertainty and yields a decomposition of the predictive uncertainty into an aleatoric and epistemic part that is more complete and, in many cases, more consistent from a statistical perspective. We discuss the approach along various simulated and real examples and observe that using an Errors-in-Variables model leads to an increase in the uncertainty while preserving the prediction performance of models without Errors-in-Variables. For examples with known regression function we observe that this ground truth is substantially better covered by the Errors-in-Variables model, indicating that the presented approach leads to a more reliable uncertainty estimation.

1 Introduction

In recent years deep neural networks have proven to be a useful and powerful tool in various tasks, ranging from medical applications [19, 20], over language processing [15, 31] to computer vision [16, 42], robotics [24, 27, 34] and autonomous driving [9, 13]. In many applications, especially those in which reliability and safety are crucial [25, 30, 39], it is valuable, if not indispensable, to know the uncertainty behind a prediction of a neural network. This work focuses on the uncertainty evaluation for neural networks that are trained for regression tasks [6, 16, 21, 26, 28, 36]. Regression problems arise in a variety of areas [12, 20, 23, 29] and are typically given by a model \(f_\theta \), parameterized by \(\theta \), that links input data x to outputs y :

\[y = f_\theta (x) + \varepsilon _y, \tag{1}\]

where \(\varepsilon _y\sim {\mathcal {N}}(0, \sigma _y^2)\) is some normally distributed noise that disturbs the true label (i.e., value of the regression function) \(f_\theta (x)\) corresponding to x.

In deep regression \(f_\theta \) is a neural network with parameters \(\theta \). Training this neural network means to infer a value for \(\theta \) from pairs (x, y) contained in a training set \({\mathcal {D}}\). Uncertainties for predictions of a trained neural network are usually categorized using two different terms. Epistemic uncertainty arises from the uncertainty about the trained model, that is about \(\theta \) in the notation of (1). In a Bayesian approach, as in this work, this uncertainty is described by the posterior \(\pi (\theta |{\mathcal {D}})\), which is the distribution of \(\theta \) conditional on the data \({\mathcal {D}}\) [2, 6, 17]. This type of uncertainty vanishes as the number of observations tends to infinity, as follows for instance from the Bernstein-von Mises theorem. Aleatoric uncertainty, on the other hand, describes an uncertainty that is inherent to the data and cannot be reduced even with an infinite training set. In the context of regression this corresponds to a noise such as \(\varepsilon _y\) in (1) and might be measured, for instance, using \(\sigma _y\). In deep learning, the aleatoric uncertainty expressed by \(\sigma _y\) is often considered as important and is regarded as measuring inherent, irreducible label ambiguity [3, 10, 14, 16]. However, such a point of view no longer applies in those regression problems where the goal is to predict the regression function \(f_\theta (x^*)\) at some (observed) \(x^*\). In this case only the epistemic uncertainty about the network’s parameter \(\theta \) remains which, in principle, could become arbitrarily small as the training set grows. However, in most cases \(x^*=\zeta ^*+\varepsilon _x\) contains some noise \(\varepsilon _x\) as well, and one is actually interested in the value of \(f_\theta (\zeta ^*)\). 
The uncertainty caused by the fact that \(x^*\), and not \(\zeta ^*\), is observed then constitutes an aleatoric part of the uncertainty for the prediction of \(f_\theta (\zeta ^*)\) that is not covered by \(\sigma _y\) nor by the uncertainty of \(\theta \).

We here attempt to provide a more consistent view on these issues and, we argue, a more accurate depiction. In many cases, it is too crude an assumption to presume that, while y is perturbed by noise, x is not. Standard estimation procedures such as maximum likelihood or nonlinear least-squares become biased for a regression model of the form (1) when x is observed with noise [5]. Furthermore, a separate quantification of the aleatoric part of the uncertainty due to the noise of the input is not possible in a model like (1), since the variance \(\sigma _y^2\) accounts only for noise in the output. Following the idea of Errors-in-Variables (EiV), a quite classical concept in statistics [5], we resolve these issues by changing (1) to

\[x = \zeta + \varepsilon _x, \qquad y = f_\theta (\zeta ) + \varepsilon _y, \tag{2}\]

where \(\zeta \) denotes the true, but unknown, input value. Besides allowing for noisy input data, model (2) allows the aleatoric uncertainty to be treated in a manner that is more coherent from a statistical perspective. Throughout this work we will refer to (2) as the EiV model and to (1) as the non-EiV model. An illustration of both approaches is given by Fig. 1.

The uncertainty that arises in the EiV model for \(\zeta \) given x is an aleatoric uncertainty that must be taken into account whenever predicting \(f_\theta (\zeta )\). It explains why there can be an uncertainty of the prediction even if there is no remaining epistemic uncertainty, without forcing this role on the output noise.
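The bias of standard estimators under noisy inputs, which motivates (2), can be illustrated with a small simulation. The following is a minimal sketch and not taken from the article: it assumes a linear ground truth \(g(\zeta )=\zeta \), uniformly distributed \(\zeta \), hypothetical noise levels `sigma_x` and `sigma_y`, and ordinary least squares as a stand-in for a trained network. Under these assumptions the slope fitted on the noisy x is shrunk roughly by the classical attenuation factor \(\textrm{Var}(\zeta )/(\textrm{Var}(\zeta )+\sigma _x^2)\).

```python
import random


def ols_slope(inputs, outputs):
    """Ordinary least-squares slope of outputs regressed on inputs."""
    n = len(inputs)
    mean_in = sum(inputs) / n
    mean_out = sum(outputs) / n
    sxy = sum((a - mean_in) * (b - mean_out) for a, b in zip(inputs, outputs))
    sxx = sum((a - mean_in) ** 2 for a in inputs)
    return sxy / sxx


def simulate(n=20000, sigma_x=0.5, sigma_y=0.1, seed=0):
    """Draw (zeta, x, y) following model (2) with g(zeta) = zeta (assumed)."""
    rng = random.Random(seed)
    zetas = [rng.uniform(-1.0, 1.0) for _ in range(n)]   # true inputs
    xs = [z + rng.gauss(0.0, sigma_x) for z in zetas]    # observed, noisy inputs
    ys = [z + rng.gauss(0.0, sigma_y) for z in zetas]    # noisy labels
    return zetas, xs, ys


zetas, xs, ys = simulate()
slope_true_input = ols_slope(zetas, ys)    # close to the true slope 1
slope_noisy_input = ols_slope(xs, ys)      # attenuated towards 0

var_zeta = 1.0 / 3.0                       # variance of Uniform(-1, 1)
expected_attenuation = var_zeta / (var_zeta + 0.5 ** 2)
print(slope_true_input, slope_noisy_input, expected_attenuation)
```

The example only shows the bias of a non-EiV fit; it does not implement the Bayesian EiV treatment developed in Sect. 2.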

Fig. 1 Illustration of the Errors-in-Variables (EiV) approach presented in this work and the method without EiV (non-EiV). The EiV model introduces an additional uncertainty to the input of the network that is aleatoric and, in contrast to the one linked with y, in many cases more coherent with the classical statistical view on uncertainty, cf. Sect. 1. The network illustration was created using [22].

We will approach (2) from a Bayesian point of view, which is a consistent way of describing uncertainty but one that involves the challenge of sampling from a high-dimensional posterior on \(\theta \). To overcome this obstacle, we build on the idea of using variational inference, as e.g. in [2, 6, 7, 17]. The main contributions of this work can be summarized as follows:

- We show how variational inference for Bayesian neural networks can be combined with the concept of an uncertain input to construct a scalable Errors-in-Variables model for Bayesian deep learning.
- We show that for cases where the input is indeed uncertain, the treatment via an EiV model leads to a substantially improved coverage of the ground truth.

While the presented approach is in principle agnostic regarding the specific form of the variational distribution, the results in Sect. 3 below were produced with the variational distribution induced by Monte Carlo dropout [6]. Studying how well other methods perform under EiV could be an interesting subject of future work.

1.1 Existing Work and Structure of the Article

Errors-in-Variables is a statistical concept that has existed for decades [8]. In [1, 37, 38, 40, 41] the authors consider a non-Bayesian Errors-in-Variables framework for the training of neural networks, without the quantification of uncertainties. In [33, 43, 44] the authors use a Laplace approximation to derive uncertainties for a neural network with uncertain inputs. Their approach, however, requires computing the inverse of the Hessian of the model w.r.t. the network parameters, which is computationally prohibitive for most modern neural networks. The same is true for methods based on Markov Chain Monte Carlo sampling [46, 48]. In [45] input uncertainty is treated via a Gaussian process approximation to a neural network, which, however, requires, in theory, an infinite width of the network.

Many of the methods in the deep learning literature for uncertainty quantification that scale well with the number of parameters rely on variational inference [2, 4, 6, 7, 17, 47]. In this work, we build on the idea of using variational inference to obtain a scalable method for uncertainty quantification in deep learning, but extend this idea considerably by allowing for an uncertain input. In contrast to the existing literature, this allows us to treat Errors-in-Variables for deep learning models in a scalable manner that is in line with many state-of-the-art methods based on a non-EiV computation of uncertainties.

The article is structured as follows: in Sect. 2, we discuss the generic approach presented in this work, give a proposal for the underlying priors and discuss some details useful for implementation. In Sect. 3, we will discuss the results of some numerical experiments. The focus will be on simulated models with a known ground truth that allow the inferential behavior of the EiV model to be quantitatively assessed. Finally, we provide a discussion and some conclusions.

2 An EiV Model for Deep Learning

Suppose we have N data points \({\mathcal {D}}=\{(x_1,y_1), \ldots ,(x_N,y_N)\}\subseteq {\mathbb {R}}^{n_x}\times {\mathbb {R}}^{n_y}\) that we model via

\[x_i = \zeta _i + \varepsilon _{x,i}, \qquad y_i = f_\theta (\zeta _i) + \varepsilon _{y,i}, \qquad i=1,\ldots ,N, \tag{3}\]

with normally distributed \(\varepsilon _{x,i} \sim {\mathcal {N}}(0,\sigma _x^2 I_{n_x \times n_x}), \,\varepsilon _{y,i} \sim {\mathcal {N}}(0,\sigma _y^2 I_{n_y \times n_y})\) and where the parameters \(\theta \in {\mathbb {R}}^p\) and \(\zeta _1,\ldots ,\zeta _N \in {\mathbb {R}}^{n_x}\) are unknown. In this work, we will, similar to [40, 41], fix \(\sigma _x\) prior to training. For the output standard deviation \(\sigma _y\) we will use an initial estimate that is updated during training. The function \(f_\theta \) is a neural network. The \(\zeta _i\) ought to be considered as the true, but unknown, inputs we would like to feed to \(f_\theta \). Given \(\varvec{\zeta }=(\zeta _1,\ldots ,\zeta _N),\,\theta \) and \(\varvec{\sigma }^2 =(\sigma _x^2, \sigma _y^2)\), the likelihood for the data \({\mathcal {D}}\) under (3) is

\[p({\mathcal {D}}|\varvec{\zeta }, \theta , \varvec{\sigma }^2) = \prod _{i=1}^N p(x_i|\zeta _i, \sigma _x^2)\, p(y_i|\theta ,\zeta _i, \sigma _y^2) \tag{4}\]

with \(p(x_i|\zeta _i, \sigma _x^2)={\mathcal {N}}(x_i|\zeta _i,\sigma _x^2 I_{n_x \times n_x})\) and \(p(y_i|\theta ,\zeta _i, \sigma _y^2)={\mathcal {N}}(y_i|f_\theta (\zeta _i), \sigma _y^2 I_{n_y \times n_y})\). While \(\theta \) will be considered as the parameters of interest, the components of \(\varvec{\zeta }\) will be considered as nuisance parameters. Fixing a prior

\[\pi (\theta , \varvec{\zeta }) = \pi (\theta ) \prod _{i=1}^N \pi (\zeta _i) \tag{5}\]

(cf. Sect. 2.1 below) we have, via Bayes’ theorem,

\[\pi (\theta | {\mathcal {D}}, \varvec{\sigma }^2) = \frac{\pi (\theta )\, \pi ({\mathcal {D}}| \theta ,\varvec{\sigma }^2)}{\pi ({\mathcal {D}}| \varvec{\sigma }^2)}, \tag{6}\]

where \(\pi ({\mathcal {D}}| \varvec{\sigma }^2) = \int \textrm{d}\theta \,\pi (\theta ) \pi ({\mathcal {D}}| \theta ,\varvec{\sigma }^2) \) and, due to (4) and Bayes’ theorem,

\[\pi ({\mathcal {D}}| \theta ,\varvec{\sigma }^2) = \prod _{i=1}^N \pi (x_i| \sigma _x^2) \int \textrm{d}\zeta _i \, \pi (\zeta _i| x_i, \sigma _x^2)\, p(y_i|\theta ,\zeta _i, \sigma _y^2) \tag{7}\]

with \(\pi (x_i| \sigma _x^2)=\int \textrm{d}\zeta _i \pi (\zeta _i) p(x_i|\zeta _i, \sigma _x^2)\). As we do not expect \(\pi (\theta | {\mathcal {D}}, \varvec{\sigma }^2)\) to be tractable, we approximate it via a variational distribution

\[q_\phi (\theta ) \approx \pi (\theta | {\mathcal {D}}, \varvec{\sigma }^2) \tag{8}\]

with a variational parameter \(\phi \). In variational inference, the distance in (8) is measured via the Kullback-Leibler divergence \(D_{\textrm{KL}}(q_\phi (\theta ) \Vert \pi (\theta |{\mathcal {D}}, \varvec{\sigma }^2))\). As we argue in “Appendix A”, to find a \(\phi \) that minimizes this divergence, we can use backpropagation on the following loss function

\[{\mathcal {L}}^{\mathrm {M.C.}}(\phi ) = -\frac{1}{M}\sum _{m=1}^M \log \left( \frac{1}{L} \sum _{l=1}^L p(y_{i_m}|\theta _m, \zeta _{i_m,l}, \sigma _y^2)\right) + \frac{1}{N} D_{\textrm{KL}}(q_\phi (\theta )\Vert \pi (\theta )), \tag{9}\]

where the minibatches \(\{(x_{i_1}, y_{i_1}), \ldots , (x_{i_M}, y_{i_M}) \}\), the M samples \(\theta _m \sim q_\phi (\theta _m)\) and \(M\cdot L\) samples \(\zeta _{i_m, l} \sim \pi (\zeta _{i_m, l}|x_{i_m}, \sigma _x^2 I_{n_x \times n_x})\) are re-drawn in each optimization step. Throughout this work we used \(M=1\) for training, \(M=100\) for evaluation and \(L=5\) for both training and evaluation.

Note that the way \(\theta _m\) is sampled in (9) differs slightly from the way this is done in approaches such as [6, 7, 17], as \(\theta _m\) is identical for all inputs \(\zeta _{i_m,l}\) with the same m. The loss function in (13) and (9) is the backbone of the EiV algorithm. To make the first term in (9) numerically stable and suitable for backpropagation, the common "logsumexp" function can be used. Before we can use (9) for training, however, we must fix the prior distributions and the variational distribution \(q_\phi \).
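The role of "logsumexp" in the first term of (9) can be sketched for a single data point and a univariate output. This is a minimal illustration under stated assumptions, not the authors' implementation: the callable `f` stands for the network \(f_{\theta _m}\) with one fixed draw \(\theta _m\), the helper names are hypothetical, and the improper-prior posterior \({\mathcal {N}}(\zeta |x,\sigma _x^2)\) is used for sampling \(\zeta \). In practice one would use the logsumexp routine of an automatic differentiation framework so that gradients can be backpropagated.

```python
import math
import random


def log_normal_pdf(y, mean, sigma):
    """Log density of N(y | mean, sigma^2) for scalar y."""
    return -0.5 * math.log(2.0 * math.pi * sigma ** 2) - 0.5 * ((y - mean) / sigma) ** 2


def logsumexp(values):
    """Numerically stable log(sum(exp(v))) via subtraction of the maximum."""
    vmax = max(values)
    return vmax + math.log(sum(math.exp(v - vmax) for v in values))


def eiv_nll_term(y, x, f, sigma_x, sigma_y, L=5, rng=random):
    """-log( (1/L) * sum_l N(y | f(zeta_l), sigma_y^2) ), zeta_l ~ N(x, sigma_x^2)."""
    zetas = [rng.gauss(x, sigma_x) for _ in range(L)]
    log_liks = [log_normal_pdf(y, f(z), sigma_y) for z in zetas]
    # Averaging the likelihoods in log space avoids underflow of exp(log_lik).
    return -(logsumexp(log_liks) - math.log(L))


# Hypothetical toy "network" with fixed parameters.
term = eiv_nll_term(y=0.3, x=0.2, f=lambda z: 2.0 * z, sigma_x=0.05, sigma_y=0.1,
                    rng=random.Random(0))
```

For `sigma_x = 0` all sampled \(\zeta \) coincide with x and the term reduces to the usual Gaussian negative log-likelihood of a non-EiV model.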

2.1 Choosing \(\pi (\theta ), \pi (\zeta )\) and \(q_\phi \)

Choosing \(\pi (\theta )\) is rather standard in the literature on Bayesian neural networks and corresponds to choosing a regularization for \(\theta \), cf. [2]. We will here use the common choice of a centered normal distribution \(\pi (\theta ) = {\mathcal {N}}(\theta | 0, \lambda _\theta ^2 I_{p \times p})\). For Bernoulli dropout [6] with rate p the term \(\frac{1}{N}D_{\textrm{KL}}(q_{\phi }(\theta )\Vert \pi (\theta ))\) in (9) then equals, up to a constant, \(\frac{(1-p)}{2N\lambda _\theta ^2}|\theta |^2\).
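The regularization term stated above is a plain weight-decay penalty and can be written down directly. The following sketch simply evaluates \(\frac{(1-p)}{2N\lambda _\theta ^2}|\theta |^2\) for a flat list of parameters; the function and argument names are hypothetical, and the additive constant mentioned in the text is dropped.

```python
def dropout_regularizer(theta, drop_rate, n_train, prior_std):
    """(1 - p) / (2 * N * lambda_theta^2) * |theta|^2 for a flat parameter list."""
    squared_norm = sum(t * t for t in theta)
    return (1.0 - drop_rate) / (2.0 * n_train * prior_std ** 2) * squared_norm


# Toy values: two parameters, dropout rate 0.5, N = 100 training points.
penalty = dropout_regularizer([3.0, 4.0], drop_rate=0.5, n_train=100, prior_std=1.0)
```

A smaller prior standard deviation \(\lambda _\theta \) or a smaller training set size N gives the penalty more weight relative to the data term in (9).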

The prior \(\pi (\zeta )\), used for each individual \(\zeta _i\) in (5), is more specific to the EiV approach and influences (9) through the posterior \(\pi (\zeta |x,\sigma _x^2 I_{n_x \times n_x})\) from which we draw Monte Carlo samples for the first term. In this work we use an improper prior for \(\zeta \) which leads to the posterior \(\pi (\zeta |x,\sigma _x^2) = {\mathcal {N}}(\zeta |x,\sigma _x^2 I_{n_x \times n_x})\), cf. Lemma 1 in “Appendix A.3”, which formalizes the variational inference applied in this work. For a proper, normally distributed \(\pi (\zeta )\) the corresponding posterior \(\pi (\zeta |x,\sigma _x^2 I_{n_x \times n_x})\) is given in “Appendix A.2”.
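For the univariate case the two choices of \(\pi (\zeta )\) can be compared in a few lines. The sketch below returns the mean and standard deviation of the posterior of \(\zeta \) given x. The improper-prior branch follows the text; the proper-prior branch uses the textbook conjugate normal update, which is an assumption here and may differ in notation from the expression in the appendix. The function and argument names are hypothetical.

```python
def zeta_posterior(x, sigma_x, prior_mean=None, prior_std=None):
    """Mean and standard deviation of pi(zeta | x, sigma_x^2), scalar case.

    Without a prior (improper, flat prior) the posterior is N(x, sigma_x^2).
    With a normal prior N(prior_mean, prior_std^2) the standard conjugate
    update is used (precision-weighted mean, summed precisions).
    """
    if prior_std is None:
        return x, sigma_x
    precision = 1.0 / sigma_x ** 2 + 1.0 / prior_std ** 2
    mean = (x / sigma_x ** 2 + prior_mean / prior_std ** 2) / precision
    return mean, precision ** -0.5


flat = zeta_posterior(0.7, 0.05)
shrunk = zeta_posterior(0.7, 0.05, prior_mean=0.0, prior_std=1.0)
```

With a wide proper prior the result is close to the improper-prior posterior, while a narrow prior pulls the posterior mean from x towards the prior mean.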

The choice of \(q_\phi \) is only limited by three requirements. First, we need to be able to sample from \(q_\phi \). Second, we need an expression for the regularization \(D_{\textrm{KL}}(q_{\phi }(\theta )\Vert \pi (\theta ))\) - either explicitly or via Monte Carlo sampling [18]. Finally, we have to be able to optimize the arising loss function \({\mathcal {L}}^{\mathrm {M.C.}}(\phi )\) w.r.t. \(\phi \) for example via the reparametrization trick [18]. Merely for convenience we will restrict ourselves in this work to the popular choice of Monte Carlo dropout [6] where \(q_\phi \) arises from randomly dropping nodes of the network with some probability and where \(\phi \) simply coincides with the network parameters. However, let us emphasize that the algorithm described in this work is by no means restricted to this particular choice but is usable for any \(q_\phi \) for which the conditions above apply.

The full algorithm used for training the neural networks in this work is summarized in Algorithm 1. Note that we regularly update \(\sigma _y\) during training to match the RMSE on the training data.

2.2 A New View on Aleatoric Uncertainty

Once the neural network has been trained, the learned \(\phi \) can be used to obtain uncertainties and predictions for a new \(x^*\). Namely, we draw \(\zeta ^*\) from \(\pi (\zeta ^*|x^*, \sigma _x^2)=\pi (\zeta ^*|x^*, {\mathcal {D}}, \sigma _x^2)\) and \(\theta \) from \(q_\phi (\theta )\) and use the distribution of the regression curve \(f_\theta (\zeta ^*)\). To express predictions and uncertainties, we can then either use quantiles or moments. We will use moments in this work and, for simplicity, use univariate notation in the following - the generalization to the multivariate case is straightforward by using the formulas below for each component. We set

\[m(x^*) = {\mathbb {E}}_{\zeta ^*\sim \pi (\zeta ^*|x^*, \sigma _x^2),\, \theta \sim q_\phi (\theta )}\left[ f_\theta (\zeta ^*)\right] , \qquad u(x^*)^2 = \textrm{Var}_{\zeta ^*\sim \pi (\zeta ^*|x^*, \sigma _x^2),\, \theta \sim q_\phi (\theta )}\left( f_\theta (\zeta ^*)\right) . \tag{10}\]

By distinguishing between \(\zeta ^*\) and \(x^*\) the EiV model introduces a new concept of prediction, namely m in (10), that differs from the non-EiV model. The uncertainty \(u(x^*)\) in (10) can be split into (law of total variance)

\[u(x^*)^2 = \underbrace{\textrm{Var}_{\theta \sim q_\phi (\theta )}\left( {\mathbb {E}}_{\zeta ^*\sim \pi (\zeta ^*|x^*, \sigma _x^2)}\left[ f_\theta (\zeta ^*)\right] \right) }_{\text {epistemic}} + \underbrace{{\mathbb {E}}_{\theta \sim q_\phi (\theta )}\left[ \textrm{Var}_{\zeta ^*\sim \pi (\zeta ^*|x^*, \sigma _x^2)}\left( f_\theta (\zeta ^*)\right) \right] }_{\text {aleatoric}}. \tag{11}\]

The detailed algorithm on how to compute \(m(x^*)\) and \(u(x^*)\) is shown in Algorithm 2.

The “classical” treatment of epistemic and aleatoric uncertainty, which is based on the posterior predictive distribution and discussed in Sect. 1, can be easily combined with the above. For the posterior predictive distribution \(\pi (y^*|x^*, {\mathcal {D}}, \varvec{\sigma }^2)\), we draw, for each sample \(f_\theta (\zeta ^*)\), labels \(y^*\) from \({\mathcal {N}}(f_\theta (\zeta ^*), \sigma _y^2 I_{n_y \times n_y})\). The variance of this distribution, also known as the total uncertainty, can then be split into

\[\textrm{Var}(y^*|x^*, {\mathcal {D}}, \varvec{\sigma }^2) = u(x^*)^2 + \sigma _y^2 \tag{12}\]

and is therefore simply augmented by an extra term \(\sigma _y^2\). For \(\sigma _x \searrow 0\) the aleatoric part of \(u(x^*)\) in (11) vanishes, \(u(x^*)\) coincides with the epistemic uncertainty and (12) morphs into the conventional split of aleatoric and epistemic uncertainty.
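The moments in (10) and the split in (11) and (12) can be estimated by nested Monte Carlo sampling. The following is a minimal sketch under stated assumptions and not a reproduction of Algorithm 2: `sample_theta` is a hypothetical stand-in for drawing from \(q_\phi \) (e.g. one dropout mask), `f` is a toy regression function, and the improper-prior posterior \({\mathcal {N}}(\zeta ^*|x^*,\sigma _x^2)\) is used for \(\zeta ^*\).

```python
import random
import statistics


def predict_with_uncertainty(x_star, sample_theta, f, sigma_x,
                             n_theta=100, n_zeta=5, rng=random):
    """Monte Carlo estimates of m(x*), u(x*)^2 and its epistemic/aleatoric split."""
    rows = []
    for _ in range(n_theta):
        theta = sample_theta(rng)                                     # theta ~ q_phi
        zetas = [rng.gauss(x_star, sigma_x) for _ in range(n_zeta)]  # zeta* ~ N(x*, sigma_x^2)
        rows.append([f(theta, z) for z in zetas])

    all_values = [v for row in rows for v in row]
    m = statistics.fmean(all_values)
    u_squared = statistics.pvariance(all_values)

    # Empirical law of total variance; the split is exact for equally long rows.
    epistemic = statistics.pvariance([statistics.fmean(row) for row in rows])
    aleatoric = statistics.fmean([statistics.pvariance(row) for row in rows])
    return m, u_squared, epistemic, aleatoric


m, u2, epi, ale = predict_with_uncertainty(
    x_star=0.5,
    sample_theta=lambda rng: rng.gauss(1.0, 0.1),   # toy q_phi (assumption)
    f=lambda theta, zeta: theta * zeta,             # toy f_theta (assumption)
    sigma_x=0.05,
    rng=random.Random(0),
)
sigma_y = 0.1                        # hypothetical output noise level
total_variance = u2 + sigma_y ** 2   # variance of the posterior predictive, cf. (12)
```

With only a few \(\zeta ^*\) samples per \(\theta \), the empirical epistemic part still contains some input noise, so the split should be read as an estimate. Setting `sigma_x = 0` makes the aleatoric part vanish, in line with the limit \(\sigma _x \searrow 0\) discussed above.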

The usage of the aleatoric part \(\sigma _y^2\) that appears in (12) as an uncertainty is only justified if one is really interested in the uncertainty of a noise-perturbed label \(y^*\) given \(x^*\). If one is actually interested in the value of the regression function \(f_\theta (\zeta ^*)\) (EiV) or \(f_\theta (x^*)\) (non-EiV), it is inappropriate to take the second term in (12) into account. By introducing an additional aleatoric uncertainty in (11), the EiV model introduces an aleatoric uncertainty that is still present in such cases. In summary, the usage of the second term of (11) as an additional aleatoric uncertainty has several advantages:

- This aleatoric uncertainty is still available if one is interested in the prediction \(f_\theta (\zeta )\) and will, in particular, not vanish for large training data sets.
- It exploits a source of uncertainty that is not present in the non-EiV model and thus gives a more complete description.
- As we will observe in Sect. 3 below, this enhanced uncertainty is necessary to achieve a sufficient coverage of the ground truth when the input to the neural network is uncertain.

3 Experiments

In this section, we compare the performance of EiV models with corresponding non-EiV models on various, real and simulated, data sets. To this end we use

- 4 simulated data sets with known ground truth \(g:\zeta \mapsto g(\zeta )\). For illustration purposes all of the chosen problems are one-dimensional in in- and output. We study three polynomial problems: a linear, a quadratic and a cubic model [11, 21]. We will refer to these data sets as linear, quadratic and cubic in the following. In addition, we study a sinusoidal problem, as in [2], to which we will refer as sine. In contrast to [2, 11, 21] we used noisy modifications of the input variables for training as in (2). Details on the problems can be found in “Appendix B”.
- 9 real data sets, with an unknown ground truth, that were used before, e.g. in [6, 11, 21]. All real data sets were normalized to have mean 0 and standard deviation 1 in each feature and label dimension.

For all data sets we trained fully connected neural networks with 4 hidden layers and dropout layers after each hidden layer. The detailed architecture is sketched in “Appendix C”. We used two loss functions: the non-EiV loss function from [6] and the EiV modification we proposed in Sect. 2. For each loss function and each data set, we trained 10 different neural networks using 10 different random seeds. For both approaches, EiV and non-EiV, we used the same training hyperparameters such as epoch number and learning rate. These parameters differ between data sets and are listed in Table 2 in the “Appendix”. In particular, we used different values of \(\sigma _x\), once more listed in Table 2. For the simulated data sets we used the same value as the one that was used for generating the data. For all real data sets we used \(\sigma _x=0.05\), except for the “naval propulsion” data set where we found that this choice leads to an intolerably high root mean squared error (RMSE), so that we chose \(\sigma _x = 0.025\) instead. For further details on the training we refer to Sect. 3 in the “Appendix”.

Fig. 2 Prediction of the EiV model (red solid line) and non-EiV model (blue solid line) for the linear data set (cf. Sect. 3) together with their uncertainties (shaded areas) times 1.96. The ground truth \(g: \zeta \mapsto \zeta \) underlying the data set is depicted by the black solid line. The right-hand side shows a cut-out of the left-hand side, together with an example of a \(\zeta \) (black dotted vertical line) and the corresponding \(x \sim p(x|\zeta ,\sigma _x^2)\) (gray dashed vertical line) used for the evaluation of the two models. The corresponding values \(g(\zeta )\) and g(x) are marked by the two horizontal lines. (Color figure online)

Figure 2a shows the prediction of the EiV model (red) and non-EiV model (blue) trained on the linear data set, mentioned above, that uses the ground truth \(g:\, \zeta \mapsto \zeta \) (black). For 50 equidistant \(\zeta \) in the range \([-1,1]\) of the training data we drew \(x\sim p(x|\zeta , \sigma _x^2)\) and then computed, as predictions, \({\mathbb {E}}_{\theta \sim q_\phi (\theta )}[f_\theta (x)]\) for the non-EiV model and m(x) as in (10) for the EiV model. To even out random effects due to the training we averaged all predictions over the 10 different training runs and plotted the results as red (EiV) and blue (non-EiV) lines in Fig. 2a against \(\zeta \). The corresponding values of \(g(\zeta )\) are shown by the black line. The shaded areas mark the epistemic uncertainty \(\left( \textrm{Var}_{\theta \sim q_\phi (\theta )}(f_\theta (x))\right) ^{1/2}\) for the non-EiV model (blue) and the combined uncertainty u(x) from (10) for the EiV model (red), both again averaged over the 10 training runs.

Figure 2a shows a pattern which we observe for all data sets studied in this work: the predictions of both models are similar, but the uncertainties differ markedly. Moreover, the ground truth (black line) is substantially better covered by the uncertainties of the EiV model. To gain better insight into these observations, Fig. 2b shows a cut-out of Fig. 2a together with a single choice of \(\zeta \) (vertical dotted black line) and the corresponding draw \(x\sim p(x|\zeta , \sigma _x^2)\) (vertical dashed gray line) used for the prediction. The two horizontal lines show the values of \(g(\zeta )\) (black dotted) and g(x) (gray dashed). Apparently, the prediction of both the EiV and the non-EiV model is considerably closer to g(x) than to \(g(\zeta )\). The epistemic, i.e. parameter, uncertainty of the non-EiV model is not large enough to account for the deviation \(g(\zeta )-g(x)\) and therefore does not cover the ground truth \(g(\zeta )\). Through \(\pi (\zeta | x) = {\mathcal {N}}(\zeta |x,\sigma _x^2 I_{n_x \times n_x})\) (cf. Sect. 2.1) the EiV model accounts for the uncertainty about \(\zeta \) and thereby yields an uncertainty u(x) that covers the ground truth \(g(\zeta )\).

Fig. 3 The deviation of the prediction of the EiV model (marker \(+\)) and the non-EiV model (marker \(\times \)) from \(g(\zeta )\) (abscissa) and g(x) (ordinate), where \(x\sim p(x|\zeta ,\sigma _x^2)\), for all simulated data sets in this work: linear (gray), quadratic (orange), cubic (green) and sine (purple). The dotted black line marks the diagonal. (Color figure online)

Figure 3 shows that this sort of behavior is systematic. For each simulated data set, linear (gray), quadratic (orange), cubic (green) and sine (purple), and their corresponding ground truth function g we drew 200 test \(\zeta \) and corresponding \(x\sim p(x|\zeta , \sigma _x^2)\) and computed the deviation of the prediction of the EiV (marker \(+\)) and the non-EiV model (marker \(\times \)) from \(g(\zeta )\) (x-axis) and g(x) (y-axis). For all considered data sets, most of the points are located below the diagonal (dashed black line). In other words, for the majority of pairs \((x,\zeta )\) the prediction of both models, EiV and non-EiV, is closer to g(x) than to \(g(\zeta )\). As g(x) usually differs from the ground truth \(g(\zeta )\) this leads to an error.

We already saw in Fig. 2 that for the linear data set this error is not sufficiently covered by the non-EiV model but is well covered by the EiV approach. Figure 4 shows that this is also true for the other simulated data sets considered in this work. We observe, once more, that the predictions of both models are quite similar whereas the uncertainty is substantially increased for the EiV model. The ground truth \(g:\,\zeta \mapsto g(\zeta )\) for all three data sets (black solid line) is substantially better covered by the EiV model. We will give full range coverages for all four simulated data sets in Fig. 6 below and observe that they match the theoretical expectation well.

Figure 5 shows the performance of the EiV and non-EiV model for all data sets considered in this work, including the 9 real data sets mentioned above. Figure 5a shows the root-mean-squared error (RMSE) of the EiV models (red) and non-EiV models (blue). Again, all results were averaged over 10 training runs. The corresponding standard errors (of the mean) are marked by the small bars surrounding the markers. We observe, with the only exception of the naval data set, an almost identical RMSE for both models. This is consistent with the observation from Figs. 2 and 4, where the predictions of both models, and thus the RMSE, are very similar. Figure 5b shows the coverage of the labels y within the test set by the total uncertainty. More precisely, for each input and each label dimension the total uncertainty was computed and multiplied by a factor \(c_{n_y}\), where we recall that \(n_y\) denotes the label dimension. Adding and subtracting these values to the predictions in each label dimension we obtain for each input the \(2^{n_y}\) corners of an \(n_y\)-dimensional cuboid. Building on a diagonal, normal approximation we chose \(c_{n_y}\) such that \([-c_{n_y}, c_{n_y}]^{n_y}\) would have a coverage of 0.95 for a multivariate standard normal. In particular, we have for univariate cases, such as the simulated examples, \(c_1=1.96\). Figure 5b then shows for each data set the coverage of the labels y by the prediction-centered cuboids explained above. Results were again averaged over 10 training runs and the standard error is depicted as well. The vertical dashed line shows the theoretically expected value of 0.95. Both models, EiV and non-EiV, show similar coverages which are close to this optimal value. When looking instead at the coverage by u as in (10) (for EiV) and the epistemic uncertainty (for non-EiV) we see, however, a substantial difference between both models, cf. Fig. 5c. As in Figs. 2 and 4, we observe a substantially increased coverage through introducing Errors-in-Variables. As can be observed in Fig. 8 in the “Appendix” this is due to an increased uncertainty u of the EiV model. While the increased coverage in Fig. 5c backs our proposed new view on the impact of aleatoric input uncertainty - explaining some of the error via an aleatoric input uncertainty and less by the aleatoric output uncertainty - it does not allow for a judgment as to which of the two models is the better description of “reality”, as we have no access to an optimal coverage value as in Fig. 5b.
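The factor \(c_{n_y}\) follows from the stated requirement in closed form: for a standard normal with independent components the cube \([-c, c]^{n_y}\) has coverage \((2\Phi (c)-1)^{n_y}\), so setting this equal to 0.95 and solving for c gives the factor. The sketch below does this with the Python standard library; the function name is hypothetical.

```python
from statistics import NormalDist


def coverage_factor(n_y, level=0.95):
    """Half-width c such that [-c, c]^n_y has the given coverage
    under an n_y-dimensional standard normal with independent components."""
    per_dimension = level ** (1.0 / n_y)          # required coverage per coordinate
    return NormalDist().inv_cdf((1.0 + per_dimension) / 2.0)


c1 = coverage_factor(1)   # approximately 1.96, as stated in the text
c3 = coverage_factor(3)   # larger, since each coordinate must be covered more tightly
```

The factor grows with the label dimension because every coordinate has to fall into its interval simultaneously.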

Fig. 4 Prediction of the EiV model (red) and the non-EiV model (blue) for three simulated data sets, together with their uncertainties (times 1.96) depicted by the red (EiV) and blue (non-EiV) area. The used data sets are the quadratic (left), cubic (middle) and sine (right) data set, described in Sect. 3. The corresponding ground truth is given by the black solid line. (Color figure online)

Fig. 5 The RMSE (left) and the coverage of the labels y by the total uncertainty (middle) and the epistemic uncertainty (non-EiV) or u (EiV) (right) for all data sets used in this work, cf. Sect. 3 for details. Results from the non-EiV model are shown in blue, results from the EiV model are shown in red. The dashed line in the middle plot depicts 0.95. The results were averaged over 10 different training runs. The bars surrounding the markers depict the standard errors from these 10 runs.

To allow for such a judgment we have to restrict ourselves, once more, to those cases where we have access to the ground truth \(g:\,\zeta \mapsto g(\zeta )\), in other words to the simulated data sets. Figure 6a shows the coverage of the ground truth, that is the values \(g(\zeta )\), for the test points of the 4 simulated data sets, by (an interval around the estimate of width) 1.96 times the epistemic uncertainty (for non-EiV, in blue) or u (for EiV, in red). The optimal value of 0.95 is indicated by the dashed, black line. In addition, we plotted the coverage of a Bayesian regression model (black) with a non-informative prior and a regression function based on the g used for generating the data [36], cf. “Appendix B.1” for details. Results were, once more, averaged over 10 training runs with standard errors plotted by the bars next to the markers. We observe that for all 4 data sets, the EiV approach yields a coverage of the ground truth that is far closer to the optimal value of 0.95 than the coverage achieved by the non-EiV model and the Bayesian regression model based on g. The insufficient coverage of the latter reveals that the low coverage of the non-EiV model is not based on a model misfit but really on an underestimated uncertainty: for both, the non-EiV model and the g-based model, the estimated uncertainty is not sufficient to cover the error that arises from the fact that we can only use x and not \(\zeta \) as an input.

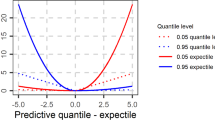

Fig. 6 Left: The coverage of the ground truth by \(1.96\cdot u\) for an EiV model (red) or 1.96 times the epistemic uncertainty for a non-EiV model (blue) and a linear Bayesian regression model (black) for the simulated data sets used in this work. Right: The coverage of the ground truth for various intervals, determined by q as described in Sect. 3. EiV coverages are depicted by solid lines, whereas the non-EiV coverages are depicted by dashed lines. In theory, the coverages should coincide with q, shown by the black, dotted diagonal. (Color figure online)

One might argue that the better coverage of the EiV model might not stem from a better description of “reality” by the EiV model but solely from the fact that the additional aleatoric input uncertainty simply raises the coverage closer to the maximal coverage of 1.0 and that 0.95 is close to the latter. Figure 6b shows that this is not the case. For various values of q between 0 and 1 we computed the corresponding multiple of the epistemic uncertainty (for non-EiV) and u (for EiV) that should, in theory, lead to a coverage of q. On the ordinate we plotted the actual coverage of the two methods for the 4 simulated data sets. The results for the EiV method (solid lines) and the non-EiV methods (dashed) were averaged over the 10 training runs. The shaded areas depict the corresponding standard errors. For all values of q we observe that the EiV model is substantially closer to the theoretical value of q, depicted by the diagonal (dotted, black). This indicates that the uncertainty of the EiV model describes the error of its prediction far more reliably.
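The check behind such a calibration curve can be sketched as follows for the univariate case. This is an illustration under a normal approximation, not the authors' evaluation code: for a nominal coverage q the interval half-width is taken as \(\Phi ^{-1}((1+q)/2)\) times the uncertainty, and the function names and the synthetic data are hypothetical.

```python
import random
from statistics import NormalDist


def interval_multiplier(q):
    """Multiple of the uncertainty giving nominal coverage q (0 <= q < 1)."""
    return NormalDist().inv_cdf((1.0 + q) / 2.0)


def empirical_coverage(truths, predictions, uncertainties, q):
    """Fraction of ground-truth values inside prediction +/- multiplier * uncertainty."""
    k = interval_multiplier(q)
    hits = sum(abs(t - p) <= k * u
               for t, p, u in zip(truths, predictions, uncertainties))
    return hits / len(truths)


# Synthetic check: errors drawn with standard deviation 1 around the prediction.
rng = random.Random(0)
n = 20000
predictions = [0.0] * n
truths = [rng.gauss(0.0, 1.0) for _ in range(n)]
calibrated = empirical_coverage(truths, predictions, [1.0] * n, q=0.9)
overconfident = empirical_coverage(truths, predictions, [0.5] * n, q=0.9)
```

A well-calibrated uncertainty yields an empirical coverage close to q for every q, whereas an underestimated uncertainty falls below the diagonal, which is the qualitative pattern described for the non-EiV model.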

Let us summarize our observations:

- EiV and non-EiV yield comparable predictions and thus show a comparable RMSE for both simulated and real data sets. The coverage of noisy labels through the total uncertainty is also comparable for both models and close to the optimal value.
- Using EiV leads to an increased coverage of both the ground truth and noisy labels when not including the aleatoric output uncertainty.
- This indicates that EiV relies less on the aleatoric output uncertainty to explain its error. This is achieved by introducing an aleatoric input uncertainty.
- In cases where we have access to the ground truth, the coverage of the ground truth by the EiV model matches the theoretical expectation substantially better, and the EiV model is thus a more reliable description of the problem.

4 Discussion and Outlook

This article studies the effect of using an EiV model in Bayesian deep learning. As a posterior distribution for the true (but usually unknown) input \(\zeta \) we derive in Sect. 2 the posterior distribution \(\pi (\zeta |x) = {\mathcal {N}}(\zeta |x,\sigma _x^2 I_{n_x \times n_x})\) which, loosely speaking, takes the observed x as an estimate for \(\zeta \) but equips it with an uncertainty. In [40, 41] the authors used a non-Bayesian Errors-in-Variables approach and constructed a guess on \(\zeta \) via updating it through backpropagation. They observe that this reduces the bias of the estimated network parameters, at least if repeated measurements of x for each \(\zeta \) are available (which is rarely the case for most deep learning applications). Translating such an approach to a Bayesian approach, as in this work, would complicate the presented framework, raise the computational burden and, as argued in [40], raise the need for additional precautions, such as early stopping, to prevent the EiV approach from overfitting. However, such a study could be an exciting outlook for future work on Bayesian EiV in deep learning.

For the experiments in Sect. 3 we fixed \(\sigma _x\) prior to training, similarly to [40, 41]. In those cases where one has access to several x for a fixed \(\zeta \), the value of \(\sigma _x\) can be estimated from the data. In all other cases \(\sigma _x\) has to be estimated from prior knowledge or by a reasonable guess. We observed that learning \(\sigma _x\) during training via (9) leads to overfitting, that is, the learned \(\sigma _x\) is pushed towards 0 during training. A compromise that takes prior knowledge into account but still allows for an adaptation of \(\sigma _x\) during training could be a modification of the presented approach that is based on Bayesian hierarchical modeling. We will leave such considerations to future work.
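For the case of repeated measurements mentioned above, one possible estimator is the pooled sample standard deviation. The sketch below is an assumption on our side, since the text does not prescribe a specific estimator. It presumes scalar inputs and a common noise level \(\sigma _x\) for all groups, and the name `pooled_sigma_x` is hypothetical.

```python
import math

def pooled_sigma_x(groups):
    """Pooled standard deviation from groups of repeated scalar measurements.

    Each group holds several noisy observations x of the same (unknown) zeta.
    """
    sum_of_squares, dof = 0.0, 0
    for xs in groups:
        if len(xs) < 2:
            continue  # a single observation carries no information on the spread
        mean = sum(xs) / len(xs)
        sum_of_squares += sum((x - mean) ** 2 for x in xs)
        dof += len(xs) - 1
    if dof == 0:
        raise ValueError("need at least one group with two or more observations")
    return math.sqrt(sum_of_squares / dof)

# Two hypothetical groups of repeated measurements.
groups = [[1.0, 3.0], [10.0, 12.0, 14.0]]
sigma_x_hat = pooled_sigma_x(groups)
```

The resulting value would then be fixed prior to training, in line with the procedure used for the experiments.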

To keep the setup simple, we restricted ourselves to variational inference based on Bernoulli dropout as in [6, 16] and to regression tasks. Analyzing how different approaches perform under Errors-in-Variables would be a natural follow-up study to this article. The same is true for an enhancement of the distributions involved in (2), which could, for instance, involve an anisotropic or heteroscedastic adaptation or the usage of a learnable push-forward mapping.

Finally, while we studied both real and simulated data in this work, only the simulated cases allow us to evaluate the quality of the uncertainty u in a conclusive manner. The difficulty of assessing uncertainties without access to a ground truth is a well-known problem [14, 36], whose solution is well beyond the scope of this article. Future work that would deepen the understanding of the performance for data without a ground truth could involve a study, again on simulated data, of the sensitivity of the EiV uncertainty quantification to deviations between the model assumptions in (3) and the generation of the data, e.g. with respect to \(\sigma _x\) or normally distributed noise.

5 Conclusion

In this work, we have shown how Errors-in-Variables (EiV), a classical concept from statistics, can be combined with existing Bayesian methods for uncertainty quantification in deep regression. This not only allows us to treat the input of the network as equipped with an uncertainty but also provides a notion of aleatoric uncertainty that is, in many cases, more coherent with statistics. We found this method to give similar predictions to those of the non-EiV method but with an increased uncertainty. For examples with known ground truth and noisy inputs, this increased uncertainty was observed to be necessary in order to achieve a sufficient coverage of the regression function, which indicates that using an EiV model leads to a more robust and reliable uncertainty quantification in applications where uncertain inputs are considered.

Notes

The index y should not be misunderstood as \(\varepsilon _y\) or \(\sigma _y\) being y dependent, but is supposed to indicate that the noise corresponds to the output. The same is true for \(\varepsilon _x\) and \(\sigma _x\), which we introduce in (2) below.

For the approach from [17] this also means that Gaussian dropout of “type A” has to be used.

Note that we understand \(\zeta ^*\) as independent of the \(\zeta _i\) that generated the \(x_i\) and \(y_i\) in \({\mathcal {D}}\), which is why we can drop the conditioning on \({\mathcal {D}}\).

For each run, we used a different split of the full data into training and test set.

As above, all predictions of the networks obtained from different training runs were averaged before computing the deviations.

The RMSE was computed w.r.t. the labels y, even for the simulated models with known ground truth g.

Mathematically speaking we here mean pointwise convergence of densities, which implies, by Scheffé’s Lemma, convergence in distribution.

References

Bassu D, Lo JT, Nave J (1999) Training recurrent neural networks with noisy input measurements. In: IJCNN’99. International joint conference on neural networks. Proceedings (Cat. No. 99CH36339), vol 1, pp 359–363. IEEE

Blundell C, Cornebise J, Kavukcuoglu K, Wierstra D (2015) Weight uncertainty in neural network. In: International conference on machine learning, pp 1613–1622. PMLR

Depeweg S, Hernandez-Lobato J-M, Doshi-Velez F, Udluft S (2018) Decomposition of uncertainty in bayesian deep learning for efficient and risk-sensitive learning. In: International conference on machine learning, pp 1184–1193. PMLR

Duvenaud D, Maclaurin D, Adams R (2016) Early stopping as nonparametric variational inference. In: Artificial intelligence and statistics, pp 1070–1077. PMLR

Fuller WA (2009) Measurement error models, vol 305. John Wiley and Sons, New Jersey

Gal Y, Ghahramani Z (2016) Dropout as a bayesian approximation: representing model uncertainty in deep learning. In: International conference on machine learning, pp 1050–1059. PMLR

Gal Y, Hron J, Kendall A (2017) Concrete dropout. arXiv preprint arXiv:1705.07832

Gillard J (2006) An historical overview of linear regression with errors in both variables. Math. School, Cardiff Univ., Wales, UK, Tech. Rep

Grigorescu S, Trasnea B, Cocias T, Macesanu G (2020) A survey of deep learning techniques for autonomous driving. J Field Robot 37(3):362–386

Gustafsson FK, Danelljan M, Schon TB (2020) Evaluating scalable bayesian deep learning methods for robust computer vision. In: Proceedings of the IEEE/CVF conference on computer vision and pattern recognition workshops, pp 318–319

Hernández-Lobato JM, Adams R (2015) Probabilistic backpropagation for scalable learning of bayesian neural networks. In: International conference on machine learning, pp 1861–1869. PMLR

Hoffmann L, Fortmeier I, Elster C (2021) Uncertainty quantification by ensemble learning for computational optical form measurements. Mach Learn Sci Technol 2(3):035030

Huang Y, Chen Y (2020) Survey of state-of-art autonomous driving technologies with deep learning. In: 2020 IEEE 20th international conference on software quality, reliability and security companion (QRS-C), pp 221–228. IEEE

Hüllermeier E, Waegeman W (2021) Aleatoric and epistemic uncertainty in machine learning: an introduction to concepts and methods. Mach Learn 110(3):457–506

Kamath U, Liu J, Whitaker J (2019) Deep learning for NLP and speech recognition, vol 84. Springer, Berlin

Kendall A, Gal Y (2017) What uncertainties do we need in bayesian deep learning for computer vision? arXiv preprint arXiv:1703.04977

Kingma DP, Salimans T, Welling M (2015) Variational dropout and the local reparameterization trick. arXiv preprint arXiv:1506.02557

Kingma DP, Welling M (2013) Auto-encoding variational bayes. arXiv preprint arXiv:1312.6114

Kooi T, Litjens G, Van Ginneken B, Gubern-Mérida A, Sánchez CI, Mann R, den Heeten A, Karssemeijer N (2017) Large scale deep learning for computer aided detection of mammographic lesions. Med Image Anal 35:303–312

Kretz T, Anton M, Schaeffter T, Elster C (2019) Determination of contrast-detail curves in mammography image quality assessment by a parametric model observer. Phys Med 62:120–128

Lakshminarayanan B, Pritzel A, Blundell C (2016) Simple and scalable predictive uncertainty estimation using deep ensembles. arXiv preprint arXiv:1612.01474

LeNail A (2019) Nn-svg: publication-ready neural network architecture schematics. J Open Source Softw 4(33):747

Li Z, Li S, Bamasag OO, Alhothali A, Luo X (2022) Diversified regularization enhanced training for effective manipulator calibration. IEEE Trans Neural Netw Learn Syst 1–13. https://doi.org/10.1109/TNNLS.2022.3153039

Li Z, Li S, Luo X (2021) An overview of calibration technology of industrial robots. IEEE/CAA J Autom Sin 8(1):23–36

Litjens G, Kooi T, Bejnordi BE, Setio AAA, Ciompi F, Ghafoorian M, Van Der Laak JA, Van Ginneken B, Sánchez CI (2017) A survey on deep learning in medical image analysis. Med Image Anal 42:60–88

Loquercio A, Segu M, Scaramuzza D (2020) A general framework for uncertainty estimation in deep learning. IEEE Robot Autom Lett 5(2):3153–3160

Lu H, Jin L, Luo X, Liao B, Guo D, Xiao L (2019) Rnn for solving perturbed time-varying underdetermined linear system with double bound limits on residual errors and state variables. IEEE Trans Industr Inf 15(11):5931–5942

Maddox WJ, Izmailov P, Garipov T, Vetrov DP, Wilson AG (2019) A simple baseline for bayesian uncertainty in deep learning. In: Wallach H, Larochelle H, Beygelzimer A, d’Alché-Buc F, Fox E, Garnett R (eds) Advances in neural information processing systems, vol 32. Curran Associates, Inc. https://proceedings.neurips.cc/paper/2019/file/118921efba23fc329e6560b27861f0c2-Paper.pdf

Martin J, Bartl G, Elster C (2019) Application of bayesian model averaging to the determination of thermal expansion of single-crystal silicon. Meas Sci Technol 30(4):045012

McAllister R, Gal Y, Kendall A, Van Der Wilk M, Shah A, Cipolla R, Weller A (2017) Concrete problems for autonomous vehicle safety: advantages of bayesian deep learning. In: International joint conferences on artificial intelligence, Inc

Otter DW, Medina JR, Kalita JK (2020) A survey of the usages of deep learning for natural language processing. IEEE Trans Neural Netw Learn Syst 32(2):604–624

Pace RK, Barry R (1997) Sparse spatial autoregressions. Stat Probab Lett 33(3):291–297

Pavone A, Svensson J, Langenberg A, Pablant N, Hoefel U, Kwak S, Wolf R, W7-X Team (2018) Bayesian uncertainty calculation in neural network inference of ion and electron temperature profiles at W7-X. Rev Sci Instrum 89(10):10K102

Pierson HA, Gashler MS (2017) Deep learning in robotics: a review of recent research. Adv Robot 31(16):821–835

Robert CP et al (2007) The Bayesian choice: from decision-theoretic foundations to computational implementation, vol 2. Springer, Berlin

Schmähling F, Martin J, Elster C (2021) A framework for benchmarking uncertainty in deep regression. arXiv preprint arXiv:2109.09048

Seghouane A-K, Fleury G (2001) A cost function for learning feedforward neural networks subject to noisy inputs. In: Proceedings of the sixth international symposium on signal processing and its applications (Cat. No. 01EX467), vol 2, pp 386–389. IEEE

Sragner L, Horvath G (2003) Improved model order estimation for nonlinear dynamic systems. In: Second IEEE international workshop on intelligent data acquisition and advanced computing systems: technology and applications, 2003. Proceedings, pp 266–271. IEEE

Sünderhauf N, Brock O, Scheirer W, Hadsell R, Fox D, Leitner J, Upcroft B, Abbeel P, Burgard W, Milford M et al (2018) The limits and potentials of deep learning for robotics. Int J Robot Res 37(4–5):405–420

Van Gorp J, Schoukens J, Pintelon R (1998) The errors-in-variables cost function for learning neural networks with noisy inputs. Intell Eng Artif Neural Netw 8:141–146

Van Gorp J, Schoukens J, Pintelon R (2000) Learning neural networks with noisy inputs using the errors-in-variables approach. IEEE Trans Neural Netw 11(2):402–414

Voulodimos A, Doulamis N, Doulamis A, Protopapadakis E (2018) Deep learning for computer vision: a brief review. Comput Intell Neurosci 2018:7068349. https://doi.org/10.1155/2018/7068349

Wright W (1999) Bayesian approach to neural-network modeling with input uncertainty. IEEE Trans Neural Netw 10(6):1261–1270

Wright W, Ramage G, Cornford D, Nabney IT (2000) Neural network modelling with input uncertainty: theory and application. J VLSI Signal Process Syst Signal Image Video Technol 26(1):169–188

Xie G, Chen X, Weng Y (2020) Input modeling and uncertainty quantification for improving volatile residential load forecasting. Energy 211:119007

Yuan J, Zhu J, Nian V (2020) Neural network modeling based on the bayesian method for evaluating shipping mitigation measures. Sustainability 12(24):10486

Zhang G, Sun S, Duvenaud D, Grosse R (2018) Noisy natural gradient as variational inference. In: International conference on machine learning, pp 5852–5861. PMLR

Zhang X, Liang F, Yu B, Zong Z (2011) Explicitly integrating parameter, input, and structure uncertainties into bayesian neural networks for probabilistic hydrologic forecasting. J Hydrol 409(3–4):696–709

Acknowledgements

This work was created in connection with the M4AIM project.

Funding

Open Access funding enabled and organized by Projekt DEAL.

Ethics declarations

Conflict of interest

The authors of this work are not aware of any conflict of interest.

Appendices

Appendix A: Theoretical Aspects

A.1: Variational Inference for Informative \(\pi (\zeta )\)

Given the setup from Sect. 2 the Kullback-Leibler divergence between the variational distribution \(q_\phi (\theta )\) and the posterior \(\pi (\theta |{\mathcal {D}}, \varvec{\sigma }^2)\) from (6) can be written as

where we used (6). Dropping the last two terms, which are independent of \(\phi \), we arrive at the following loss function

Finding the \(\phi \) that minimizes the Kullback-Leibler divergence to the posterior \(\pi (\theta |{\mathcal {D}}, \varvec{\sigma }^2)\) is therefore equivalent to the following minimization problem

In practice, we can use the Monte Carlo approximation (9) instead.

A.2: Informative Distribution for \(\pi (\zeta )\)

For the choice \(\pi (\zeta ) = {\mathcal {N}}(\zeta | 0, \lambda _\zeta ^2 I_{n_x \times n_x})\) we obtain, for the setup of Sect. 2, the following distributions via standard Bayesian calculus [35]

If we consider, for instance, images as the inputs to our neural network a natural choice for \(\lambda _\zeta \) would be such that the bulk of \(\pi (\zeta )\) covers the pixel range. For other cases there might be no natural choice or a priori knowledge available for \(\lambda _\zeta \) and the non-informative limit \(\lambda _\zeta \rightarrow \infty \), cf. Sect. 3, can be more suitable.
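For orientation, the standard conjugate Gaussian result for this choice of prior can be written out as below. This is a sketch that assumes the input model \(x|\zeta \sim {\mathcal {N}}(\zeta , \sigma _x^2 I_{n_x \times n_x})\) implied by the input noise \(\varepsilon _x\). The notation follows the text, but the expressions may differ in presentation from those referenced as (15).

```latex
\begin{aligned}
\pi(\zeta \,|\, x, \sigma_x^2)
  &= \mathcal{N}\!\left(\zeta \,\Big|\,
     \frac{\lambda_\zeta^2}{\lambda_\zeta^2 + \sigma_x^2}\, x,\;
     \frac{\lambda_\zeta^2\, \sigma_x^2}{\lambda_\zeta^2 + \sigma_x^2}\,
     I_{n_x \times n_x}\right), \\
\pi(x \,|\, \sigma_x^2)
  &= \mathcal{N}\!\left(x \,\big|\, 0,\;
     (\lambda_\zeta^2 + \sigma_x^2)\, I_{n_x \times n_x}\right).
\end{aligned}
```

For \(\lambda _\zeta \rightarrow \infty \) the first expression tends to \({\mathcal {N}}(\zeta |x,\sigma _x^2 I_{n_x \times n_x})\), whereas the variance of the second one diverges.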

A.3: Variational Inference for \(\lambda _\zeta \rightarrow \infty \)

In this section we describe how to use the non-informative prior that arises from the setup in Sect. 2 for \(\lambda _\zeta \rightarrow \infty \). In this limit the marginal \(\pi (x_i | \sigma _x^2)\) as in (15) is obviously improper. The following lemma shows that this is not really a problem and summarizes the actual algorithm used in Sect. 3 of this work.

Lemma 1

Consider the setup from Sects. 2 and A.2. In the limit \(\lambda _\zeta \rightarrow \infty \) the distributions \(\pi (\zeta |x, \sigma _x^2)\) and the posterior \(\pi (\theta |{\mathcal {D}}, \varvec{\sigma }^2)\) both converge (in the sense of pointwise convergence of densities, cf. Notes) to a meaningful limit, namely

where \(\rho (\theta ; {\mathcal {D}} ) = \prod _{i=1}^N\int \textrm{d}\zeta _i\, \pi (\zeta _i | x_i, \sigma _x^2) p(y_i|\zeta _i,\theta , \sigma _y^2)\). For a variational distribution \(q_\phi (\theta )\) the Kullback-Leibler divergence \(D_{\textrm{KL}}(q_\phi (\theta )|\pi (\theta |{\mathcal {D}},\sigma ^2))\) coincides, up to terms independent of \(\phi \), with the expression (13).

Proof

For \(\pi (\zeta |x,\sigma _x^2)\) this directly follows from (15). Concerning the posterior \(\pi (\theta |{\mathcal {D}}, \varvec{\sigma }^2) \) note that with (7) we have

where we wrote \(c=\prod _{i=1}^N \pi (x_i|\sigma _x^2)\). Plugging this into (6) we obtain indeed

which is well-defined in the limit \(\lambda _\zeta \rightarrow \infty \). Using this expression one now easily reshapes \(D_{\textrm{KL}}(q_\phi (\theta )|\pi (\theta |{\mathcal {D}},\sigma ^2))\), similarly to Sect. A.1, to \({\mathcal {L}}(\phi ) + \log \int \textrm{d}\theta \,\pi (\theta )\rho (\theta ;{\mathcal {D}})\). \(\square \)
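The final reshaping step of the proof can be spelled out as follows. This is a sketch derived only from the relation \(\pi (\theta |{\mathcal {D}}, \varvec{\sigma }^2) \propto \pi (\theta )\rho (\theta ;{\mathcal {D}})\) with normalization \(\int \textrm{d}\theta \,\pi (\theta )\rho (\theta ;{\mathcal {D}})\). The explicit form of \({\mathcal {L}}(\phi )\) given here is inferred from that relation and is not copied from (13).

```latex
\begin{aligned}
D_{\mathrm{KL}}\big(q_\phi(\theta)\,\big|\,\pi(\theta|\mathcal{D},\boldsymbol{\sigma}^2)\big)
  &= \mathbb{E}_{q_\phi}\!\left[\log \frac{q_\phi(\theta)}{\pi(\theta)}\right]
   - \mathbb{E}_{q_\phi}\!\big[\log \rho(\theta;\mathcal{D})\big]
   + \log \int \mathrm{d}\theta\, \pi(\theta)\,\rho(\theta;\mathcal{D}) \\
  &= \mathcal{L}(\phi)
   + \log \int \mathrm{d}\theta\, \pi(\theta)\,\rho(\theta;\mathcal{D}),
\end{aligned}
\qquad
\mathcal{L}(\phi)
  = D_{\mathrm{KL}}\big(q_\phi(\theta)\,\big|\,\pi(\theta)\big)
  - \sum_{i=1}^{N} \mathbb{E}_{q_\phi}\!\left[
      \log \int \mathrm{d}\zeta_i\, \pi(\zeta_i|x_i,\sigma_x^2)\,
      p(y_i|\zeta_i,\theta,\sigma_y^2)\right].
```

The last term on the right-hand side of the first equation does not depend on \(\phi \), so minimizing the divergence and minimizing \({\mathcal {L}}(\phi )\) select the same \(\phi \).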

Appendix B: Details on Training Data

B.1: Simulated Data

The following table lists the simulated data sets used for the experiments in Sect. 3. They were constructed, in analogy to (2), via a ground truth function g and

where \(\varepsilon _x\sim {\mathcal {N}}(0,\sigma _x^2 I_{n_x \times n_x}), \,\varepsilon _y \sim {\mathcal {N}}(0,\sigma _y^2 I_{n_y \times n_y})\) and where the \(\zeta \) are uniformly drawn from a certain range, cf. Table 1 below.

As explained in Sect. 3 we performed 10 different training runs for each method (EiV and non-EiV) and each data set, for which the data above was 10 times randomly split into a training set (\(80\%\) of the data points) and a test set (\(20\%\) of the data points).
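The data generation and the repeated random splits can be sketched as follows. This is a minimal illustration that assumes the additive form \(x = \zeta + \varepsilon _x\), \(y = g(\zeta ) + \varepsilon _y\) suggested by the noise terms above, restricted to scalar inputs and outputs. The ground truth g, the range of \(\zeta \), the noise levels and the sample size below are placeholders and not the values from Table 1.

```python
import random

def simulate_eiv_data(g, n, zeta_range, sigma_x, sigma_y, rng):
    """Draw n pairs (x, y) with x = zeta + eps_x and y = g(zeta) + eps_y."""
    low, high = zeta_range
    data = []
    for _ in range(n):
        zeta = rng.uniform(low, high)            # true, unobserved input
        x = zeta + rng.gauss(0.0, sigma_x)       # noisy observed input
        y = g(zeta) + rng.gauss(0.0, sigma_y)    # noisy observed output
        data.append((x, y))
    return data

def train_test_split(data, train_fraction, rng):
    """Randomly split the data into a training set and a test set."""
    shuffled = list(data)
    rng.shuffle(shuffled)
    n_train = round(train_fraction * len(shuffled))
    return shuffled[:n_train], shuffled[n_train:]

rng = random.Random(0)

def g(zeta):
    """Placeholder ground truth (hypothetical, not from Table 1)."""
    return zeta ** 3

data = simulate_eiv_data(g, n=500, zeta_range=(-1.0, 1.0),
                         sigma_x=0.07, sigma_y=0.3, rng=rng)

# One 80/20 split per training run, ten runs in total.
splits = [train_test_split(data, 0.8, rng) for _ in range(10)]
```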

In Fig. 6a we also presented results of a Bayesian regression based on functions similar to the g listed in Table 1. In detail, we used \({\tilde{g}}(x)=\sum _{i=0}^K a_i x^i\) with \(K=1\) (for linear), \(K=2\) (for quadratic), \(K=3\) (for cubic) and \({\tilde{g}}(x)= a_0 x+a_1 \sin (2\pi x)+a_2 \sin (4\pi x)\) (for sine). From \({\tilde{g}}\) we constructed the statistical model \(p(y|x,a_1,a_2,\ldots )={\mathcal {N}}({\tilde{g}}(x), \sigma _y^2)\), with the corresponding \(\sigma _y\) from Table 1, and used it together with the non-informative prior \(\pi (a_1,a_2,\ldots )\propto 1\) to obtain the posterior predictive distribution for y given an input x and the training data. Estimate and uncertainty are then taken to be the mean and standard deviation of the posterior predictive distribution.
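For the polynomial cases, this Bayesian regression has a closed form, which can be sketched as follows. The code assumes a known \(\sigma _y\) and a flat prior on the coefficients, in which case the posterior predictive distribution is Gaussian with the least-squares fit as its mean. It relies on NumPy, and the function names and toy data are hypothetical. The sine case would only require a different feature map.

```python
import numpy as np

def polynomial_features(x, K):
    """Design matrix with columns x^0, ..., x^K for a 1-D array of inputs."""
    return np.vander(np.asarray(x, dtype=float), K + 1, increasing=True)

def posterior_predictive(x_train, y_train, x_new, K, sigma_y):
    """Mean and standard deviation of y at x_new under a flat coefficient prior."""
    Phi = polynomial_features(x_train, K)
    Phi_new = polynomial_features(x_new, K)
    gram_inv = np.linalg.inv(Phi.T @ Phi)
    # With a flat prior the posterior mean of the coefficients is the
    # ordinary least-squares solution.
    a_hat = gram_inv @ Phi.T @ np.asarray(y_train, dtype=float)
    mean = Phi_new @ a_hat
    # Predictive variance: output noise plus the coefficient uncertainty.
    leverage = np.einsum("ij,jk,ik->i", Phi_new, gram_inv, Phi_new)
    std = sigma_y * np.sqrt(1.0 + leverage)
    return mean, std

# Toy example (hypothetical): noise-free linear labels, K = 1.
x_train = np.linspace(-1.0, 1.0, 50)
y_train = 2.0 * x_train + 1.0
mean, std = posterior_predictive(x_train, y_train, [0.0, 0.5], K=1, sigma_y=0.1)
```

Note that the input x enters only through the feature map, so this baseline treats the observed inputs as exact.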

B.2: Real Data

The real data sets used in this work are all freely available and rather standard in the literature about uncertainty quantification in deep learning. We refer to [6, 11, 21]. Similarly to the simulated data from Sect. B.1, we split for each of the 10 training runs the data randomly into a training set (\(80\%\) of the data points) and a test set (\(20\%\) of the data points). In contrast to [6, 11, 21] we used the California housing data set [32] instead of the Boston housing data set.

Sketch of the architecture of the networks used in this work. The precise architecture depends on two hyperparameters: the number of hidden units h and the dropout rate p. Both are specified in Table 2

Appendix C: Network and Training Details

Throughout this work we used fully connected neural networks of the architecture sketched in Fig. 7. The precise architecture depends on two hyperparameters: the number of neurons h in a hidden layer and the dropout rate p. Both are specified, together with details on the training, in Table 2 below. For all data sets and both approaches, EiV and non-EiV, we used an Adam optimizer and an \(L^2\) regularization of \(\frac{1}{2\lambda _\theta ^2}=10\), with the notation of Sect. 2.1.

For both the EiV and the non-EiV model we used \(M=1\) parameter samples in each training step and \(M=100\) samples during evaluation. For the EiV model we used \(L=5\) samples of \(\zeta \) for both evaluation and training (cf. Sect. 2).
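How the two sample sizes interact at evaluation time can be sketched as follows. This is an illustration under several assumptions: the forward pass `f` is a stand-in for a dropout network, the \(\zeta \) samples are drawn from \({\mathcal {N}}(x,\sigma _x^2 I)\) as in Sect. 4, and L samples of \(\zeta \) are drawn for each of the M parameter samples. The returned spread is only a stand-in for the uncertainty u, whose precise definition is given in the main text. All names are hypothetical and the code relies on NumPy.

```python
import numpy as np

def eiv_predict(f, x, sigma_x, M, L, rng):
    """Monte Carlo prediction for a single input x.

    f(zeta, rng) is assumed to perform one stochastic forward pass, e.g. a
    network evaluated with a freshly drawn dropout mask.
    """
    x = np.asarray(x, dtype=float)
    outputs = []
    for _ in range(M):          # parameter (dropout) samples
        for _ in range(L):      # samples of the true input zeta
            zeta = x + sigma_x * rng.standard_normal(x.shape)
            outputs.append(f(zeta, rng))
    outputs = np.stack(outputs)
    return outputs.mean(axis=0), outputs.std(axis=0)

def toy_network(zeta, rng, p=0.2):
    """Placeholder for a dropout network: a linear map with a Bernoulli mask."""
    weights = np.array([1.0, -2.0])
    keep = rng.random(weights.shape) > p   # keep each weight with probability 1 - p
    return np.array([np.sum(keep * weights * zeta) / (1.0 - p)])

rng = np.random.default_rng(0)
mean, spread = eiv_predict(toy_network, x=[0.5, 0.1], sigma_x=0.05,
                           M=100, L=5, rng=rng)
```

Setting `sigma_x=0` and `L=1` in this sketch recovers a plain dropout prediction without input uncertainty.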

Appendix D: Comparison of Uncertainties for Real and Simulated Data

Predicted uncertainties u, averaged over the output dimension, for the EiV model (red) and the non-EiV model (blue) for the real and simulated data sets from Fig. 5a along the “diagonal” \(\zeta _\lambda = (1-\lambda ) \,\zeta _{\textrm{min}} + \lambda \,\zeta _{\textrm{max}}, \,\lambda \in [0,1]\), where we chose \(\zeta _{\textrm{min}}, \zeta _{\textrm{max}}\) according to the range in Table 1 for the simulated data sets and as \(\zeta _{\textrm{min}}=(-1,-1,\ldots ,-1),\,\zeta _{\textrm{max}}=(1,1,\ldots ,1)\) for the real data sets. (Color figure online)

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Martin, J., Elster, C. Aleatoric Uncertainty for Errors-in-Variables Models in Deep Regression. Neural Process Lett 55, 4799–4818 (2023). https://doi.org/10.1007/s11063-022-11066-3
