Abstract

Two commonly used ideas in the development of citation-based research performance indicators are the idea of normalizing citation counts based on a field classification scheme and the idea of recursive citation weighing (like in PageRank-inspired indicators). We combine these two ideas in a single indicator, referred to as the recursive mean normalized citation score indicator, and we study the validity of this indicator. Our empirical analysis shows that the proposed indicator is highly sensitive to the field classification scheme that is used. The indicator also has a strong tendency to reinforce biases caused by the classification scheme. Based on these observations, we advise against the use of indicators in which the idea of normalization based on a field classification scheme and the idea of recursive citation weighing are combined.


Introduction

In bibliometric and scientometric research, there is a trend towards developing more and more sophisticated citation-based research performance indicators. In this paper, we are concerned with two streams of research. One stream of research focuses on the development of indicators that aim to correct for the fact that the density of citations (i.e., the average number of citations per publication) differs among fields. Two basic approaches can be distinguished. One approach is to normalize citation counts for field differences based on a classification scheme that assigns publications to fields (e.g., Braun and Glänzel 1990; Moed et al. 1995; Waltman et al. 2011). The other approach is to normalize citation counts based on the number of references in citing publications or citing journals (e.g., Moed 2010; Zitt and Small 2008). The latter approach, which is sometimes referred to as source normalization (Moed 2010), does not need a field classification scheme.

A second stream of research focuses on the development of recursive indicators, typically inspired by the well-known PageRank algorithm (Brin and Page 1998). In the case of recursive indicators, citations are weighed differently depending on the status of the citing publication (e.g., Chen et al. 2007; Ma et al. 2008; Walker et al. 2007), the citing journal (e.g., Bollen et al. 2006; Pinski and Narin 1976), or the citing author (e.g., Radicchi et al. 2009; Życzkowski 2010). The underlying idea is that a citation from an influential publication, a prestigious journal, or a renowned author should be regarded as more valuable than a citation from an insignificant publication, an obscure journal, or an unknown author. It is sometimes argued that non-recursive indicators measure popularity while recursive indicators measure prestige (e.g., Bollen et al. 2006; Yan and Ding 2010).

Based on the above discussion, we have two binary dimensions along which we can distinguish citation-based research performance indicators, namely the dimension of normalization based on a field classification scheme versus source normalization and the dimension of non-recursive mechanisms versus recursive mechanisms. These two dimensions yield four types of indicators. This is shown in Table 1, in which we list some examples of the different types of indicators. It is important to note that all currently existing indicators that use a classification scheme for normalizing citation counts are of a non-recursive nature. Hence, there currently are no recursive indicators that make use of a classification scheme (see Note 1). Instead, the currently existing recursive indicators can best be regarded as belonging to the family of source-normalized indicators. This is because these indicators, like non-recursive source-normalized indicators, are based in one way or another on the idea that each unit (i.e., each publication, journal, or author) has a certain weight which it distributes over the units it cites. We refer to Waltman and Van Eck (2010a) for a detailed analysis of the close relationship between a source-normalized indicator (i.e., the audience factor) and two recursive indicators (i.e., the Eigenfactor indicator and the influence weight indicator).

In this paper, we focus on the empty cell in the lower left of Table 1. Hence, we focus on recursive indicators that use a field classification scheme for normalizing citation counts. We first propose a recursive variant of the mean normalized citation score (MNCS) indicator (Waltman et al. 2011). We then present an empirical analysis of this recursive MNCS indicator. In the analysis, the recursive MNCS indicator is used to study the citation impact of journals and research institutes in the field of library and information science. Our aim is to get insight into the validity of recursive indicators that use a classification scheme for normalizing citation counts. We pay special attention to the sensitivity of such indicators to the classification scheme that is used.

Recursive mean normalized citation score

The ordinary non-recursive MNCS indicator for a set of publications equals the average number of citations per publication, where for each publication the number of citations is normalized for differences among fields (Waltman et al. 2011). The normalization is performed by dividing the number of citations of a publication by the publication’s expected number of citations. The expected number of citations of a publication is defined as the average number of citations per publication in the field in which the publication was published. An example of the calculation of the non-recursive MNCS indicator is provided in Table 2.
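The calculation described above can be illustrated with a minimal Python sketch. The function name, the dictionary-based data layout, and the toy citation counts are assumptions made for illustration only; they are not taken from Table 2. The sketch also assumes that every field has a positive average number of citations.

```python
from collections import defaultdict

def first_order_mncs(citations, field, publication_set):
    """Average field-normalized citation count of the publications in publication_set.

    citations: dict mapping each publication to its number of citations
    field:     dict mapping each publication to its (single) field
    """
    # Expected number of citations = average citations per publication in the field.
    totals = defaultdict(float)
    counts = defaultdict(int)
    for pub, n_cit in citations.items():
        totals[field[pub]] += n_cit
        counts[field[pub]] += 1
    expected = {f: totals[f] / counts[f] for f in totals}

    # Normalize each publication by the expected value of its field, then average.
    normalized = [citations[p] / expected[field[p]] for p in publication_set]
    return sum(normalized) / len(normalized)

# Hypothetical toy data: two fields with different citation densities.
citations = {"p1": 6, "p2": 2, "p3": 1, "p4": 3}
field = {"p1": "A", "p2": "A", "p3": "B", "p4": "B"}

# p1 scores 6/4 = 1.5 in field A, p3 scores 1/2 = 0.5 in field B.
mncs = first_order_mncs(citations, field, ["p1", "p3"])
```

In this toy case the set consisting of p1 and p3 has an MNCS of 1, i.e., exactly the level expected given the fields of the two publications.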

The non-recursive MNCS indicator can also be referred to as the first-order MNCS indicator. We define the second-order MNCS indicator in the same way as the first-order MNCS indicator except that citations are weighed differently. In the first-order MNCS indicator, all citations have the same weight. In the second-order MNCS indicator, on the other hand, the weight of a citation is given by the value of the first-order MNCS indicator for the citing journal. Hence, citations from journals with a high value for the first-order MNCS indicator are regarded as more valuable than citations from journals with a low value for the first-order MNCS indicator. We have now defined the second-order MNCS indicator in terms of the first-order MNCS indicator. In the same way, we define the third-order MNCS indicator in terms of the second-order MNCS indicator, the fourth-order MNCS indicator in terms of the third-order MNCS indicator, and so on. This yields the recursive MNCS indicator that we study in this paper.
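The recursion described above can be sketched in Python for the case of journals. This is an illustrative sketch rather than the implementation used in our analysis: the function name, the data layout, and the toy data are assumptions, and the sketch assumes that every field receives at least one citation so that no division by zero occurs.

```python
def recursive_journal_mncs(journal, field, cites, order):
    """Return a dict mapping each journal to its MNCS of the given order (order >= 1).

    journal: dict mapping each publication to its journal
    field:   dict mapping each publication to its field
    cites:   list of (citing publication, cited publication) pairs
    """
    pubs = list(journal)
    journals = set(journal.values())
    fields = set(field.values())
    # First order: every citation has weight 1, whatever the citing journal.
    weight = {j: 1.0 for j in journals}
    mncs = {}
    for _ in range(order):
        # Weighted citation score: each citation counts for the weight of the citing journal.
        cs = {p: 0.0 for p in pubs}
        for citing, cited in cites:
            cs[cited] += weight[journal[citing]]
        # Mean citation score per field.
        mcs = {}
        for f in fields:
            members = [p for p in pubs if field[p] == f]
            mcs[f] = sum(cs[p] for p in members) / len(members)
        # Normalized citation score per publication.
        ncs = {p: cs[p] / mcs[field[p]] for p in pubs}
        # MNCS per journal: average normalized score of the journal's publications.
        mncs = {}
        for j in journals:
            members = [p for p in pubs if journal[p] == j]
            mncs[j] = sum(ncs[p] for p in members) / len(members)
        # The MNCS values of this order become the citation weights of the next order.
        weight = mncs
    return mncs

# Hypothetical toy data: two journals in a single field.
journal = {"p1": "J1", "p2": "J1", "p3": "J2", "p4": "J2"}
field = {p: "F" for p in journal}
cites = [("p2", "p1"), ("p3", "p1"), ("p4", "p1"), ("p1", "p3")]

first = recursive_journal_mncs(journal, field, cites, 1)
second = recursive_journal_mncs(journal, field, cites, 2)
```

In the toy data, J1 has a first-order MNCS of 1.5 and J2 of 0.5. At the second order, the single citation received by J2 comes from the highly weighted journal J1, so the values move to 1.25 and 0.75.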

To make the above definition of the recursive MNCS indicator more precise, we formalize it mathematically. We use i, j, k, and l to denote, respectively, a publication, a journal, a field, and an institute. We define

For α = 1, 2,…, the αth-order citation score of publication i is defined as

where \( w_{i'}^{(\alpha)} \) denotes the weight of a citation from publication i′. For α = 1, \( w_{i'}^{(\alpha)} = 1 \) for all i′. For α = 2, 3, …, \( w_{i'}^{(\alpha)} \) is given by

It follows from (5) and (6) that the αth-order citation score of a publication equals a weighted sum of the citations received by the publication. For α = 1, all citations have the same weight. For α = 2, 3,…, the weight of a citation is given by the (α − 1)th-order MNCS of the citing journal.

We define the αth-order mean citation score of a field as the average αth-order citation score of all publications belonging to the field, that is,

The αth-order expected citation score of a publication is defined as the αth-order mean citation score of the field to which the publication belongs (see Note 2), and the αth-order normalized citation score of a publication is defined as the ratio of the publication’s αth-order citation score and its αth-order expected citation score. This yields

If the αth-order normalized citation score of a publication is greater (less) than one, this indicates that the αth-order citation score of the publication is greater (less) than the average αth-order citation score of all publications in the field.

We define the αth-order MNCS of a set of publications as the average αth-order normalized citation score of the publications in the set. In the case of journals and institutes, we obtain, respectively

where

It follows from (11) and (12) that in the case of institutes we take a fractional counting approach. That is, a publication resulting from a collaboration of, say, three institutes is counted for each institute as 1/3 of a full publication. Alternatively, a full counting approach could have been taken. A collaborative publication would then be counted as a full publication for each of the institutes involved. A full counting approach is obtained by replacing \( \bar{a}_{il} \) by \( a_{il} \) in (11).
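The displayed equations referred to in this section are absent from the present text. A possible reconstruction, consistent with the verbal definitions given above, is sketched below. The symbols \( w_{i'}^{(\alpha)} \), \( a_{il} \), \( \bar{a}_{il} \), \( b_{ik} \), and \( \mathrm{MCS}_{k}^{(\alpha)} \) occur in the text. The symbols \( a_{ij} \), \( c_{ii'} \), CS, ECS, NCS, and MNCS, as well as the assignment of equation numbers (inferred from the in-text references to (5), (6), (11), and (12)), are assumptions and may differ from the published equations.

```latex
\begin{align}
a_{ij} &= \begin{cases} 1 & \text{if publication } i \text{ was published in journal } j \\ 0 & \text{otherwise} \end{cases} \tag{1} \\
a_{il} &= \begin{cases} 1 & \text{if publication } i \text{ originates from institute } l \\ 0 & \text{otherwise} \end{cases} \tag{2} \\
b_{ik} &= \begin{cases} 1 & \text{if publication } i \text{ belongs to field } k \\ 0 & \text{otherwise} \end{cases} \tag{3} \\
c_{ii'} &= \begin{cases} 1 & \text{if publication } i' \text{ cites publication } i \\ 0 & \text{otherwise} \end{cases} \tag{4} \\
\mathrm{CS}_{i}^{(\alpha)} &= \sum_{i'} c_{ii'} \, w_{i'}^{(\alpha)} \tag{5} \\
w_{i'}^{(\alpha)} &= \sum_{j} a_{i'j} \, \mathrm{MNCS}_{j}^{(\alpha - 1)} \tag{6} \\
\mathrm{MCS}_{k}^{(\alpha)} &= \frac{\sum_{i} b_{ik} \, \mathrm{CS}_{i}^{(\alpha)}}{\sum_{i} b_{ik}} \tag{7} \\
\mathrm{ECS}_{i}^{(\alpha)} &= \sum_{k} b_{ik} \, \mathrm{MCS}_{k}^{(\alpha)} \tag{8} \\
\mathrm{NCS}_{i}^{(\alpha)} &= \frac{\mathrm{CS}_{i}^{(\alpha)}}{\mathrm{ECS}_{i}^{(\alpha)}} \tag{9} \\
\mathrm{MNCS}_{j}^{(\alpha)} &= \frac{\sum_{i} a_{ij} \, \mathrm{NCS}_{i}^{(\alpha)}}{\sum_{i} a_{ij}} \tag{10} \\
\mathrm{MNCS}_{l}^{(\alpha)} &= \frac{\sum_{i} \bar{a}_{il} \, \mathrm{NCS}_{i}^{(\alpha)}}{\sum_{i} \bar{a}_{il}} \tag{11} \\
\bar{a}_{il} &= \frac{a_{il}}{\sum_{l'} a_{il'}} \tag{12}
\end{align}
```

Under this reconstruction, a publication co-authored by three institutes has \( \bar{a}_{il} = 1/3 \) for each of them, which matches the fractional counting approach described above.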

Until now, we have only discussed the issue of normalization for the field in which a publication was published. We have not discussed the issue of normalization for the age of a publication. The latter type of normalization can be used to correct for the fact that older publications have had more time to earn citations than younger publications. Normalization for the age of a publication can easily be incorporated into indicators that make use of a field classification scheme, such as the MNCS indicator. It is more difficult to incorporate into (recursive or non-recursive) source-normalized indicators (see however Waltman and Van Eck 2010b). In the empirical analysis presented later on in this paper, the recursive MNCS indicator performs a normalization both for the field in which a publication was published and for the age of a publication. This means that in the above mathematical description of the recursive MNCS indicator k in fact represents not just a field but a combination of a field and a publication year. As a consequence, \( b_{ik} \) indicates whether a publication was published in a certain field and year, and \( {\text{MCS}}_{k}^{(\alpha )} \) indicates the average αth-order citation score of all publications published in a certain field and year.
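Treating k as a combination of a field and a publication year amounts to grouping publications by (field, year) before averaging. The following Python sketch illustrates this. The function name and the toy scores are assumptions made for illustration, and every group is assumed to have a positive mean score.

```python
from collections import defaultdict

def normalize_by_field_and_year(scores, field, year):
    """Divide each citation score by the mean score of the publication's (field, year) group."""
    groups = defaultdict(list)
    for pub, score in scores.items():
        groups[(field[pub], year[pub])].append(score)
    mean = {g: sum(values) / len(values) for g, values in groups.items()}
    return {pub: score / mean[(field[pub], year[pub])] for pub, score in scores.items()}

# Hypothetical toy data: older publications have had more time to collect citations.
scores = {"p1": 10, "p2": 30, "p3": 1, "p4": 3}
field = {p: "LIS" for p in scores}
year = {"p1": 2000, "p2": 2000, "p3": 2008, "p4": 2008}

normalized = normalize_by_field_and_year(scores, field, year)
```

In the toy data, p2 (30 citations, published in 2000) and p4 (3 citations, published in 2008) both obtain a normalized score of 1.5, because each is compared only with publications of the same field and year.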

Data

To test our recursive MNCS indicator, we use the indicator to study the citation impact of journals and research institutes in the field of library and information science (LIS). We focus on the period from 2000 to 2009. Our analysis is based on data from the Web of Science database.

We first needed to delineate the LIS field. We used the Journal of the American Society for Information Science and Technology (JASIST) as the ‘seed’ journal for our delineation. We decided to select the 47 journals that, based on co-citation data, are most strongly related to JASIST. Only journals in the Web of Science subject category Information Science & Library Science were considered. JASIST together with the 47 selected journals constituted our delineation of the LIS field. From the journals within our delineation, we selected all 12,202 publications in the period 2000–2009 that are of the document type ‘article’ or ‘review’. It is important to emphasize that in our analysis we only take into account citations within the set of 12,202 publications. Citations given by publications outside this set are not considered (see Note 3). Self-citations are also excluded.
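The restriction to citations within the publication set can be illustrated with a short Python sketch. The function name and the toy data are hypothetical. In particular, the text does not state how self-citations are identified; the sketch assumes, purely for illustration, that a citation is a self-citation when the citing and the cited publication share at least one author.

```python
def internal_citations(cites, authors):
    """Keep only citations between publications in the set, excluding self-citations.

    cites:   list of (citing publication, cited publication) pairs
    authors: dict mapping each publication IN THE SET to its set of authors
    """
    kept = []
    for citing, cited in cites:
        if citing not in authors or cited not in authors:
            continue  # the citing or the cited publication lies outside the set
        if authors[citing] & authors[cited]:
            continue  # assumed self-citation criterion: at least one shared author
        kept.append((citing, cited))
    return kept

# Hypothetical toy data; "x9" is a publication outside the set.
authors = {"p1": {"A"}, "p2": {"A", "B"}, "p3": {"C"}}
cites = [("p2", "p1"), ("p3", "p1"), ("x9", "p1"), ("p3", "p2")]

kept = internal_citations(cites, authors)
```

Of the four toy citations, the one from p2 to p1 is dropped because of the shared author and the one from x9 is dropped because x9 is outside the set.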

In our analysis, we also study the effect of splitting up the LIS field into a number of subfields. The following procedure was used to split up the LIS field. We first collected bibliographic coupling data for the 48 journals in our analysis (see Note 4). Based on the bibliographic coupling data, we created a clustering of the journals. The VOS clustering technique (Waltman et al. 2010), available in the VOSviewer software (Van Eck and Waltman 2010), was used for this purpose. We tried out different numbers of clusters. We found that a solution with three clusters yielded the most satisfactory interpretation in terms of well-known subfields of the LIS field. We therefore decided to use this solution. The three clusters can roughly be interpreted as follows. The largest cluster (27 journals) deals with library science, the smallest cluster (7 journals) deals with scientometrics, and the third cluster (14 journals) deals with general information science topics. The assignment of the 48 journals to the three clusters is shown in Table 3. The clustering of the journals is also shown in the journal map in Fig. 1. This map, produced using the VOSviewer software, is based on bibliographic coupling relations between the journals.
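To give an impression of what bibliographic coupling data look like, the Python sketch below counts, for each pair of journals, the number of cited items that both journals reference. This is one common way of measuring coupling strength and is an assumption here; the exact counting used in our analysis is not specified in the text, and the sketch does not implement the VOS clustering technique itself. The function name and the toy reference sets are hypothetical.

```python
from itertools import combinations

def coupling_strength(references):
    """Return a dict mapping journal pairs to the number of cited items they share.

    references: dict mapping each journal to the set of items cited by its publications
    """
    strength = {}
    for j1, j2 in combinations(sorted(references), 2):
        shared = len(references[j1] & references[j2])
        if shared:
            strength[(j1, j2)] = shared  # only pairs with at least one shared reference
    return strength

# Hypothetical toy data.
references = {
    "J1": {"r1", "r2", "r3"},
    "J2": {"r2", "r3", "r4"},
    "J3": {"r5"},
}

strength = coupling_strength(references)
```

In the toy data, J1 and J2 share two references and are therefore coupled with strength 2, while J3 is not coupled to either journal.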

Results

As discussed in the previous section, we can treat LIS either as a single integrated field or as a field consisting of three separate subfields (i.e., library science, information science, and scientometrics). In the latter case, the recursive MNCS indicator normalizes for differences among the three subfields in the average number of citations per publication. Below, we first present the results obtained when LIS is treated as a single integrated field. We then present the results obtained when LIS is treated as a field consisting of three separate subfields. We also present a comparison of the results obtained using the two approaches. We note that all correlations that we report are Spearman rank correlations. Furthermore, we emphasize once more that in our analysis citations given by publications outside our set of 12,202 publications are not taken into account.
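For readers unfamiliar with the Spearman rank correlation, the following Python sketch computes it as the Pearson correlation of the ranks, with tied values receiving their average rank. The function names and the toy indicator values are assumptions made for illustration. The sketch assumes that neither input is constant.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values receive the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the block while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        avg = (pos + end) / 2 + 1  # average of the ranks pos + 1, ..., end + 1
        for k in range(pos, end + 1):
            ranks[order[k]] = avg
        pos = end + 1
    return ranks

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5

# Hypothetical indicator values for four journals under two indicators.
first_order = [1.0, 2.0, 3.0, 4.0]
higher_order = [1.0, 3.0, 2.0, 4.0]

rho = spearman(first_order, higher_order)
```

In the toy data, two of the four journals swap ranks, which gives ρ = 0.8. Because only ranks are used, the correlation is insensitive to the extreme indicator values that higher-order indicators can produce.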

Single integrated LIS field

We first consider the case of a single integrated LIS field. The recursive MNCS indicator is said to have converged for a certain α if there is virtually no difference between values of the αth-order MNCS indicator and values of the (α + 1)th-order MNCS indicator. For our data, convergence of the recursive MNCS indicator can be observed for α = 20. In our analysis, our main focus therefore is on comparing the first-order MNCS indicator (i.e., the ordinary non-recursive MNCS indicator) with the 20th-order MNCS indicator.
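The convergence criterion can be made operational as in the Python sketch below. The tolerance, the function names, and the use of the maximum absolute difference are assumptions; the text only requires that there be virtually no difference between consecutive orders. The `step` function used in the example is a hypothetical stand-in that halves the distance to a fixed point at every order. It is not the MNCS recursion.

```python
def max_abs_change(previous, current):
    """Largest absolute difference between two sets of indicator values."""
    return max(abs(current[j] - previous[j]) for j in current)

def converged_order(step, first, tol=1e-3, max_order=1000):
    """Return (alpha, values) for the smallest alpha at which the alpha-th and
    (alpha + 1)-th order values differ by less than tol.

    step:  function mapping the values of one order to the values of the next order
    first: first-order values
    """
    alpha, values = 1, first
    while alpha < max_order:
        nxt = step(values)
        if max_abs_change(values, nxt) < tol:
            return alpha, values
        alpha, values = alpha + 1, nxt
    raise RuntimeError("no convergence within max_order iterations")

# Hypothetical stand-in for the recursion: move halfway towards a fixed point.
target = {"J1": 1.0, "J2": 1.0}

def step(values):
    return {j: 0.5 * values[j] + 0.5 * target[j] for j in values}

alpha, values = converged_order(step, {"J1": 2.0, "J2": 0.0})
```

With the stand-in recursion, the change between order α and order α + 1 equals 0.5 to the power α, so the sketch reports convergence at α = 10 for a tolerance of 0.001.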

In Table 4, we list the top 10 journals according to both the first-order MNCS indicator and the 20th-order MNCS indicator. In the case of the first-order MNCS indicator, the top 10 consists of journals from all three subfields. However, journals from the information science and scientometrics subfields seem to slightly dominate journals from the library science subfield. There are three library science journals in the top 10, at ranks 4, 8, and 10. Given that more than half of the journals in our analysis belong to the library science subfield (27 of the 48 journals), the library science journals seem to be underrepresented in the top 10. Also, within the top 10, the three library science journals have relatively low ranks.

We now turn to the top 10 journals according to the 20th-order MNCS indicator. This top 10 provides a much more extreme picture. The top 10 is now almost completely dominated by information science and scientometrics journals. There is only one library science journal left, at rank 9. Moreover, when looking at the values of the MNCS indicator, large differences can be observed within the top 10. Especially striking is the extremely high value of the MNCS indicator for Journal of Informetrics, the highest ranked journal. The value of the MNCS indicator for this journal is more than three times as high as the value of the MNCS indicator for Annual Review of Information Science and Technology, which is the second-highest ranked journal.

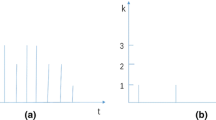

In Fig. 2, the first-order MNCS indicator is compared with the second-order MNCS indicator (left panel) and the 20th-order MNCS indicator (right panel) for the 48 LIS journals in our data set. As can be seen in the figure, the differences between the first- and the second-order MNCS indicator are relatively small, although there is one journal (Journal of Informetrics) that benefits quite a lot from going from the first-order MNCS indicator to the second-order MNCS indicator. The differences between the first- and the 20th-order MNCS indicator are much larger. As was also seen in Table 4, there are a number of journals for which the value of the 20th-order MNCS indicator is higher than the value of the first-order MNCS indicator. These are all information science and scientometrics journals. The library science journals all turn out to have a lower value for the 20th-order MNCS indicator than for the first-order MNCS indicator.

Fig. 2 Comparison of the first-order MNCS indicator with the second-order MNCS indicator (left panel; ρ = 0.90) and the 20th-order MNCS indicator (right panel; ρ = 0.75) for 48 LIS journals. LIS is treated as a single integrated field in the calculation of the indicators. Library science, information science, and scientometrics journals are indicated by, respectively, red points, green crosses, and blue circles. (Color figure online)

In addition to journals, we also consider research institutes in LIS. We restrict our analysis to the 86 institutes that have at least 25 publications in our data set. (Recall that publications are counted fractionally.) The top 10 institutes according to both the first-order MNCS indicator and the 20th-order MNCS indicator are listed in Table 5. Comparing the results of the two MNCS indicators, it is clear that institutes which are mainly active in the scientometrics subfield benefit a lot from the use of a higher-order MNCS indicator. For these institutes, the value of the 20th-order MNCS indicator tends to be much higher than the value of the first-order MNCS indicator. This is consistent with our above analysis for LIS journals, where we found that the two most important scientometrics journals (Journal of Informetrics and Scientometrics) benefit quite significantly from the use of a higher-order MNCS indicator.

Three separate LIS subfields

We now consider the case in which LIS is divided into three separate subfields (i.e., library science, information science, and scientometrics). In the calculation of the recursive MNCS indicator, a normalization is performed to correct for differences among the three subfields in the average number of citations per publication. As in the above analysis, we focus mainly on comparing the first-order MNCS indicator with the 20th-order MNCS indicator.

In Table 6, the top 10 journals according to both the first-order MNCS indicator and the 20th-order MNCS indicator are shown. This table is similar to Table 4 above, except that in the calculation of the indicators LIS is treated as a field consisting of three separate subfields rather than as a single integrated field. Comparing Table 6 with Table 4, it can be seen that library science journals now play a much more prominent role, both in the case of the first-order MNCS indicator and in the case of the 20th-order MNCS indicator. As a consequence, the top 10 now looks much more balanced for both MNCS indicators. Also, unlike in Table 4, there are no journals in Table 6 with an extremely high value for the 20th-order MNCS indicator.

As can be seen in Table 6, the journal with the highest value for the 20th-order MNCS indicator is Interlending & Document Supply. We investigated this journal in more detail and found that each issue of the journal contains a review article entitled “Interlending and document supply: A review of the recent literature”. These review articles refer to other articles published in the same issue of the journal. Clearly, the practice of publishing these review articles is an important contributing factor to the journal’s top ranking in Table 6.

In Fig. 3, a comparison is presented of the first-order MNCS indicator with the second-order MNCS indicator (left panel) and the 20th-order MNCS indicator (right panel) for the 48 LIS journals in our data set. The figure shows that most of the differences between the first-order MNCS indicator and the higher-order MNCS indicators are not very large, especially when compared with the differences shown in Fig. 2. The journal that is most sensitive to the use of a higher-order MNCS indicator is Interlending & Document Supply. As pointed out above, this journal has quite special citation characteristics, which explains its sensitivity to the use of a higher-order MNCS indicator.

Fig. 3 Comparison of the first-order MNCS indicator with the second-order MNCS indicator (left panel; ρ = 0.93) and the 20th-order MNCS indicator (right panel; ρ = 0.89) for 48 LIS journals. LIS is treated as a field consisting of three separate subfields in the calculation of the indicators. Library science, information science, and scientometrics journals are indicated by, respectively, red points, green crosses, and blue circles. (Color figure online)

Results for research institutes in LIS are reported in Table 7. In the table, the top 10 institutes according to both the first-order MNCS indicator and the 20th-order MNCS indicator are shown. As in Table 5, the top of the ranking is dominated by institutes with a strong focus on scientometrics research. This is the case both for the first-order MNCS indicator and for the 20th-order MNCS indicator. However, comparing Table 7 with Table 5, it can be seen that the MNCS values of the scientometrics institutes have decreased quite considerably, especially when looking at the 20th-order MNCS indicator. Hence, although the scientometrics institutes still occupy the top positions in the ranking, the differences with the other institutes have become smaller.

Comparison

In Fig. 4, we directly compare the results obtained when LIS is treated as a single integrated field with the results obtained when LIS is treated as a field consisting of three separate subfields. The comparison is made for the 48 LIS journals in our data set. Figure 4 clearly shows how treating LIS as a single integrated field benefits the scientometrics journals and harms the library science journals. In addition, the figure shows that this effect is strongly reinforced when instead of the first-order MNCS indicator the 20th-order MNCS indicator is used.

Fig. 4 Comparison of the MNCS indicator when LIS is treated as a single integrated field with the MNCS indicator when LIS is treated as a field consisting of three separate subfields. The comparison is made for 48 LIS journals, and either the first-order MNCS indicator (left panel; ρ = 0.96) or the 20th-order MNCS indicator (right panel; ρ = 0.77) is used. Library science, information science, and scientometrics journals are indicated by, respectively, red points, green crosses, and blue circles. (Color figure online)

Discussion and conclusion

Recursive bibliometric indicators are based on the idea that citations should be weighed differently depending on the source from which they originate. Citations from a prestigious journal, for instance, should have more weight than citations from an obscure journal. It is sometimes argued that by weighing citations differently depending on their source it is possible to measure not just the popularity of publications but also their prestige.

In this paper, we have combined the idea of recursive citation weighing with the idea of using a classification scheme to normalize citation counts for differences among fields. The combination of these two ideas has not been explored before. Although when used separately from each other the two ideas can be quite useful, our empirical analysis for the field of LIS indicates that the combination of the two ideas does not yield satisfactory results. The main observations from our analysis are twofold. First, our proposed recursive MNCS indicator is highly sensitive to the way in which fields are defined in the classification scheme that one uses. And second, if within a field there are subfields with significantly different citation characteristics, the recursive MNCS indicator will be strongly biased in favor of the subfields with the highest density of citations.

The sensitivity of bibliometric indicators to the field classification scheme that is used for normalizing citation counts has been investigated in various studies (Adams et al. 2008; Bornmann et al. 2008; Neuhaus and Daniel 2009; Van Leeuwen and Calero Medina 2009; Zitt et al. 2005). Our empirical results for the first-order MNCS indicator (i.e., the ordinary non-recursive MNCS indicator) are in line with earlier studies in which a significant sensitivity of bibliometric indicators to the classification scheme that is used has been reported. For the 20th-order MNCS indicator, this sensitivity turns out to be even much higher. Treating LIS as a single integrated field or as a field consisting of three separate subfields yields very different results for the 20th-order MNCS indicator.

If a field as defined in the classification scheme that one uses is heterogeneous in terms of citation characteristics, bibliometric indicators will have a bias that favors subfields with a higher citation density over subfields with a lower citation density. This is a general problem of bibliometric indicators that use a classification scheme to normalize citation counts. In the case of the recursive MNCS indicator, our empirical results show that the idea of recursive citation weighing strongly reinforces biases caused by the classification scheme. Within the field of LIS, the scientometrics subfield has the highest citation density, followed by the information science subfield. The library science subfield has the lowest citation density. When LIS is treated as a single integrated field, the differences in citation density among the three LIS subfields cause both the first-order MNCS indicator and the 20th-order MNCS indicator to be biased, where the bias favors the scientometrics subfield and harms the library science subfield. However, the bias is much stronger for the 20th-order MNCS indicator than for the first-order MNCS indicator. For instance, in the case of the 20th-order MNCS indicator, library science journals are completely dominated by journals in scientometrics and information science.
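The reinforcement mechanism can be reproduced in a deliberately simplified model, sketched below in Python. The model and all its numbers are hypothetical and are not derived from our data: there are two journals of equal size, a high-density journal S and a low-density journal L, all publications within a journal are identical, and journals only cite themselves. Under these assumptions the MNCS of a journal equals its weighted citation score per publication divided by the mean over the journals in the same field.

```python
def model_mncs(inflow, field_of, order):
    """MNCS per journal of the given order (order >= 1) in the simplified model.

    inflow[j][j2]: citations that each publication in journal j receives from journal j2
    field_of:      dict mapping each journal to its field in the classification scheme
    Assumes equally sized journals and identical publications within a journal.
    """
    journals = list(inflow)
    weight = {j: 1.0 for j in journals}  # first order: all citations weigh 1
    for _ in range(order):
        # Weighted citation score per publication of each journal.
        cs = {j: sum(n * weight[j2] for j2, n in inflow[j].items()) for j in journals}
        mncs = {}
        for j in journals:
            peers = [cs[p] for p in journals if field_of[p] == field_of[j]]
            mncs[j] = cs[j] / (sum(peers) / len(peers))
        weight = mncs  # weights for the next order
    return weight

# Hypothetical citation densities: 4 citations per publication in S, 1 in L.
inflow = {"S": {"S": 4}, "L": {"L": 1}}
single_field = {"S": "LIS", "L": "LIS"}
separate_fields = {"S": "scientometrics", "L": "library science"}

single_1 = model_mncs(inflow, single_field, 1)
single_3 = model_mncs(inflow, single_field, 3)
separate_3 = model_mncs(inflow, separate_fields, 3)
```

When the two journals are placed in a single field, the first-order values are 1.6 for S and 0.4 for L, and by the third order they have drifted to roughly 1.97 and 0.03. When each journal forms its own field, both journals have a value of 1 at every order. The model thus shows the same qualitative pattern as our empirical results, although it says nothing about the size of the effect in real data.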

Based on the above observations, we advise against the introduction of recursiveness into bibliometric indicators that use a field classification scheme for normalizing citation counts (such as the MNCS indicator). Instead of providing more sophisticated measurements of citation impact (measurements of ‘prestige’ rather than ‘popularity’), the main effect of introducing recursiveness is to reinforce biases caused by the way in which fields are defined in the classification scheme that one uses. Although our negative results may partly relate to specific characteristics of the MNCS indicator, we expect our general conclusion to be valid for all field-normalized indicators.

Notes

1. However, a first step in the direction of such indicators was taken by Van Leeuwen et al. (2003). They proposed an indicator that weighs citations by the average field-normalized number of citations per publication of the citing journal.

2. For simplicity, we assume that fields are non-overlapping. A publication therefore always belongs to exactly one field.

3. In the case of citations given by publications outside the set of 12,202 publications, we do not know the MNCS value of the citing journal. We need to know the MNCS value of the citing journal in order to calculate the recursive MNCS indicator. In general, when calculating recursive indicators, the set of citing journals and the set of cited journals need to coincide.

4. We also tried out using co-citation data, but this gave less satisfactory results.

References

Adams, J., Gurney, K., & Jackson, L. (2008). Calibrating the zoom—A test of Zitt’s hypothesis. Scientometrics, 75(1), 81–95.

Bergstrom, C. T. (2007). Eigenfactor: Measuring the value and prestige of scholarly journals. College and Research Libraries News, 68(5), 314–316.

Bollen, J., Rodriguez, M. A., & Van de Sompel, H. (2006). Journal status. Scientometrics, 69(3), 669–687.

Bornmann, L., Mutz, R., Neuhaus, C., & Daniel, H.-D. (2008). Citation counts for research evaluation: Standards of good practice for analyzing bibliometric data and presenting and interpreting results. Ethics in Science and Environmental Politics, 8(1), 93–102.

Braun, T., & Glänzel, W. (1990). United Germany: The new scientific superpower? Scientometrics, 19(5–6), 513–521.

Brin, S., & Page, L. (1998). The anatomy of a large-scale hypertextual Web search engine. Computer Networks and ISDN Systems, 30(1–7), 107–117.

Chen, P., Xie, H., Maslov, S., & Redner, S. (2007). Finding scientific gems with Google’s PageRank algorithm. Journal of Informetrics, 1(1), 8–15.

Glänzel, W., Schubert, A., Thijs, B., & Debackere, K. (2011). A priori vs. a posteriori normalisation of citation indicators. The case of journal ranking. Scientometrics, 87(2), 415–424.

González-Pereira, B., Guerrero-Bote, V. P., & Moya-Anegón, F. (2010). A new approach to the metric of journals’ scientific prestige: The SJR indicator. Journal of Informetrics, 4(3), 379–391.

Leydesdorff, L., & Bornmann, L. (2011). How fractional counting of citations affects the impact factor: Normalization in terms of differences in citation potentials among fields of science. Journal of the American Society for Information Science and Technology, 62(2), 217–229.

Lundberg, J. (2007). Lifting the crown—Citation z-score. Journal of Informetrics, 1(2), 145–154.

Ma, N., Guan, J., & Zhao, Y. (2008). Bringing PageRank to the citation analysis. Information Processing and Management, 44(2), 800–810.

Moed, H. F. (2010). Measuring contextual citation impact of scientific journals. Journal of Informetrics, 4(3), 265–277.

Moed, H. F., De Bruin, R. E., & Van Leeuwen, T. N. (1995). New bibliometric tools for the assessment of national research performance: Database description, overview of indicators and first applications. Scientometrics, 33(3), 381–422.

Neuhaus, C., & Daniel, H.-D. (2009). A new reference standard for citation analysis in chemistry and related fields based on the sections of Chemical Abstracts. Scientometrics, 78(2), 219–229.

Pinski, G., & Narin, F. (1976). Citation influence for journal aggregates of scientific publications: Theory, with application to the literature of physics. Information Processing and Management, 12(5), 297–312.

Radicchi, F., Fortunato, S., Markines, B., & Vespignani, A. (2009). Diffusion of scientific credits and the ranking of scientists. Physical Review E, 80(5), 056103.

Van Eck, N. J., & Waltman, L. (2010). Software survey: VOSviewer, a computer program for bibliometric mapping. Scientometrics, 84(2), 523–538.

Van Leeuwen, T. N., & Calero Medina, C. (2009). Redefining the field of economics: Improving field normalization for the application of bibliometric techniques in the field of economics. In B. Larsen & J. Leta (Eds.), Proceedings of the 12th international conference on scientometrics and informetrics (pp. 410–420).

Van Leeuwen, T. N., Visser, M. S., Moed, H. F., Nederhof, T. J., & Van Raan, A. F. J. (2003). The Holy Grail of science policy: Exploring and combining bibliometric tools in search of scientific excellence. Scientometrics, 57(2), 257–280.

Walker, D., Xie, H., Yan, K.-K., & Maslov, S. (2007). Ranking scientific publications using a model of network traffic. Journal of Statistical Mechanics: Theory and Experiment, P06010.

Waltman, L., & Van Eck, N. J. (2010a). The relation between Eigenfactor, audience factor, and influence weight. Journal of the American Society for Information Science and Technology, 61(7), 1476–1486.

Waltman, L., & Van Eck, N. J. (2010b). A general source normalized approach to bibliometric research performance assessment. In Book of Abstracts of the 11th International Conference on Science and Technology Indicators (pp. 298–299).

Waltman, L., Van Eck, N. J., & Noyons, E. C. M. (2010). A unified approach to mapping and clustering of bibliometric networks. Journal of Informetrics, 4(4), 629–635.

Waltman, L., Van Eck, N. J., Van Leeuwen, T. N., Visser, M. S., & Van Raan, A. F. J. (2011). Towards a new crown indicator: Some theoretical considerations. Journal of Informetrics, 5(1), 37–47.

West, J. D., Bergstrom, T. C., & Bergstrom, C. T. (2010). The Eigenfactor metrics: A network approach to assessing scholarly journals. College and Research Libraries, 71(3), 236–244.

Yan, E., & Ding, Y. (2010). Weighted citation: An indicator of an article’s prestige. Journal of the American Society for Information Science and Technology, 61(8), 1635–1643.

Zitt, M. (2010). Citing-side normalization of journal impact: A robust variant of the Audience Factor. Journal of Informetrics, 4(3), 392–406.

Zitt, M., Ramanana-Rahary, S., & Bassecoulard, E. (2005). Relativity of citation performance and excellence measures: From cross-field to cross-scale effects of field-normalisation. Scientometrics, 63(2), 373–401.

Zitt, M., & Small, H. (2008). Modifying the journal impact factor by fractional citation weighting: The audience factor. Journal of the American Society for Information Science and Technology, 59(11), 1856–1860.

Życzkowski, K. (2010). Citation graph, weighted impact factors and performance indices. Scientometrics, 85(1), 301–315.

Open Access

This article is distributed under the terms of the Creative Commons Attribution Noncommercial License which permits any noncommercial use, distribution, and reproduction in any medium, provided the original author(s) and source are credited.


About this article

Cite this article

Waltman, L., Yan, E. & van Eck, N.J. A recursive field-normalized bibliometric performance indicator: an application to the field of library and information science. Scientometrics 89, 301–314 (2011). https://doi.org/10.1007/s11192-011-0449-z


DOI: https://doi.org/10.1007/s11192-011-0449-z