Abstract

Purpose

ChatGPT is a large language model trained on a large dataset covering a broad range of topics, including the medical literature. We aim to examine its accuracy and reproducibility in answering patient questions regarding bariatric surgery.

Materials and methods

Questions were gathered from nationally regarded professional societies and health institutions as well as Facebook support groups. Board-certified bariatric surgeons graded the accuracy and reproducibility of responses. The grading scale included the following: (1) comprehensive, (2) correct but inadequate, (3) some correct and some incorrect, and (4) completely incorrect. Reproducibility was determined by asking the model each question twice and examining difference in grading category between the two responses.

Results

In total, 151 questions related to bariatric surgery were included. The model provided “comprehensive” responses to 131/151 (86.8%) of questions. When examined by category, the model provided “comprehensive” responses to 93.8% of questions related to “efficacy, eligibility and procedure options”; 93.3% related to “preoperative preparation”; 85.3% related to “recovery, risks, and complications”; 88.2% related to “lifestyle changes”; and 66.7% related to “other”. The model provided reproducible answers to 137 (90.7%) of questions.

Conclusion

The large language model ChatGPT often provided accurate and reproducible responses to common questions related to bariatric surgery. ChatGPT may serve as a helpful adjunct information resource for patients regarding bariatric surgery in addition to standard of care provided by licensed healthcare professionals. We encourage future studies to examine how to leverage this disruptive technology to improve patient outcomes and quality of life.



Introduction

The utilization of artificial intelligence (AI) in medicine has grown significantly in recent years with the advent of a broad range of applications and technologies used to improve patient care and evidence-based medicine. These technologies are often used by healthcare providers or the healthcare system for patient care and research. On November 30, 2022, the chatbot ChatGPT was launched by the company OpenAI for public use through a free online platform available to all users with a free account. ChatGPT is an AI large language model trained on a large dataset covering a broad range of topics, including the medical literature. When prompted with inquiries, ChatGPT can provide well-formulated, conversational, easy-to-understand, and seemingly knowledgeable responses. Discussion regarding the potential of ChatGPT to disrupt all fields of academia is ongoing, and its applicability is actively under investigation [1, 2]. One recent study showed that ChatGPT achieved a passing score when prompted with USMLE-style questions, while others have shown its appropriateness in responding to cardiovascular disease prevention-related inquiries and its accuracy in responding to commonly asked cirrhosis and hepatocellular carcinoma-related questions [3–5]. There are currently no studies examining the clinical application of ChatGPT in the field of bariatric surgery.

Obesity rates are on the rise in the USA, with the prevalence of obesity and severe obesity increasing over the past two decades [6]. Bariatric surgery has been shown to be an effective and safe therapeutic modality for long-term weight loss as well as resolution of obesity-related comorbidities such as diabetes mellitus, hypertension, and obstructive sleep apnea [7]. Despite its proven efficacy and safety, less than 1% of eligible patients undergo bariatric surgery in the USA [8]. The reason for this underutilization is likely multifactorial, and multiple studies have highlighted the various barriers to care such as provider perceptions, insurance coverage, access to resources, and patient perceptions [9,10]. Some of these barriers are rooted in the lack of familiarity with the safety and efficacy of bariatric surgery, making it difficult for patients and their providers to make informed decisions regarding referring for bariatric surgery.

Even after obtaining a referral, patients typically undergo a preoperative evaluation process and optimization of preexisting comorbidities, as well as receive education and lifestyle counseling. The literature, while limited, reveals that patients often seek sources of information outside of their healthcare provider’s office, with various social media venues being a popular source of information [11]. In light of this, and given ChatGPT’s rapidly growing number of users, we anticipate patients may utilize ChatGPT as a source of information as part of their weight loss journey. Therefore, it is crucial to determine the reliability of this new tool. In this study, we aimed to examine the accuracy and reproducibility of ChatGPT’s responses to commonly asked patient questions regarding bariatric surgery.

Methods

Question Curation/Data Source

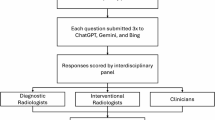

Questions were obtained from the Frequently Asked Questions pages from the American Society for Metabolic and Bariatric Surgery (ASMBS) and MedlinePlus Medical Encyclopedia, along with two social media support groups for patients who were considering, eligible for, or who had recently undergone a bariatric procedure: Bariatric Surgery & Gastric Sleeve Support Group (Facebook) and Gastric Sleeve and Bariatric Surgery Group, Supporting the New You (Facebook). Questions were curated, screened, and approved by three authors (J. S., N. R., K. S.) to evaluate their inclusion in the study. Only questions regarding bariatric surgery or weight loss surgery were included. Duplicate and similar questions from multiple sources were removed (Fig. 1). Questions requiring subjective or personalized responses (e.g., How will bariatric surgery change my lifespan?) and questions that were vague (e.g., How much weight is normally lost after this surgery?) were rephrased to a generic language format to allow for inclusion in the study (Fig. 1). Other questions were grammatically modified to eliminate ambiguity. A total of 151 questions were included and were used to generate responses from ChatGPT. To better characterize ChatGPT’s performance in various topics within bariatric surgery, questions were categorized into multiple groups for statistical analysis purposes: (1) eligibility, efficacy, and procedure options; (2) preoperative preparation; (3) recovery, risks, and complications; (4) lifestyle changes; and (5) other.

ChatGPT

ChatGPT is a large language model trained on a large database of information from a wide range of sources including online websites, books, and articles leading up to 2021 [12]. When prompted with inquiries, ChatGPT can provide well-formulated, conversational, and easy-to-understand responses. The creators utilized reinforcement learning from human feedback (RLHF) to fine-tune the model to follow a broad class of commands and written instructions, using human preferences as a reward signal [13]. The model was also trained to align with user intentions and minimize biased, toxic, or otherwise harmful responses. The specific sources of information used to train ChatGPT are unknown.

Response Generation

To generate responses, each question was prompted to ChatGPT (December 15th version). Each individual question was inputted twice on separate occasions using the “new chat” function in order to generate two responses per question. This was done to determine the reproducibility of responses to the same question.

Question Grading

Responses to questions were first independently graded for accuracy and reproducibility by two board-certified, fellowship-trained, active practice academic bariatric surgeon reviewers (L. H. and S. A.). Reviewers were instructed to grade the accuracy of responses based on known information leading up to 2021. Reproducibility was graded based on the similarity in accuracy of the two responses per question generated by ChatGPT. If the responses were similar, only the first response from ChatGPT was graded. If the responses were not similar, both responses were individually graded.

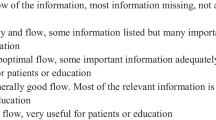

Accuracy of each response was graded with the following scale:

1. Comprehensive: Defined as accurate and comprehensive, nothing more a board-certified bariatric surgeon can add if asked this question by a patient.
2. Correct but inadequate: All information is correct but incomplete; a board-certified bariatric surgeon would have more important information to add if asked this question by a patient.
3. Some correct and some incorrect
4. Completely incorrect

Disagreement in reproducibility or grading of each response was resolved by a third board-certified, fellowship-trained, active practice academic bariatric surgeon (K. S.). The final grades were then compiled and used to analyze the overall performance of ChatGPT in answering questions related to bariatric surgery.
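The adjudication step described above can be sketched in a few lines of Python. This is only an illustration: the function name `final_grade` and its parameters are hypothetical, and the sketch assumes that the third reviewer's grade is simply adopted when the first two reviewers disagree, which the text does not spell out.

```python
from typing import Optional

# Labels for the four-point accuracy scale described in the text.
GRADE_LABELS = {
    1: "comprehensive",
    2: "correct but inadequate",
    3: "some correct and some incorrect",
    4: "completely incorrect",
}


def final_grade(reviewer_1: int, reviewer_2: int,
                adjudicator: Optional[int] = None) -> int:
    """Return the final grade for one response.

    Assumption (not stated in the source): when the two primary
    reviewers disagree, the third reviewer's grade becomes final.
    """
    for grade in (reviewer_1, reviewer_2):
        if grade not in GRADE_LABELS:
            raise ValueError(f"grade must be 1-4, got {grade}")
    if reviewer_1 == reviewer_2:
        return reviewer_1
    if adjudicator is None:
        raise ValueError("reviewers disagree; a third grade is required")
    if adjudicator not in GRADE_LABELS:
        raise ValueError(f"grade must be 1-4, got {adjudicator}")
    return adjudicator
```

For example, `final_grade(1, 2, adjudicator=2)` would return `2` under this assumption, while `final_grade(1, 1)` needs no third reviewer.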

Statistical Analysis

The proportions of responses earning each grade were calculated. To determine reproducibility, responses were categorized into two groups: a grade of 1 and 2 comprised the first group, and a grade of 3 and 4 comprised the second group. The two responses to each question were considered significantly different from one another, or not reproducible, if the assigned grades for each response fell under different groups. Interrater reliability between reviewer 1 and reviewer 2 was tested using the kappa statistic and showed moderate agreement with a kappa value of 0.762 (95% confidence interval, 0.684–0.825). All analyses were conducted using Microsoft Excel (version 16.69.1).
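The reproducibility rule and the interrater statistic can be illustrated with a short Python sketch. The analyses in this study were run in Microsoft Excel, so the code below is not the authors' implementation; the function names are hypothetical, and the kappa shown is the unweighted Cohen's kappa, which is an assumption because the text does not state which variant was used.

```python
from collections import Counter
from typing import Sequence


def grade_group(grade: int) -> str:
    """Collapse the 1-4 accuracy grade into the two reproducibility groups."""
    if grade in (1, 2):
        return "group 1"  # comprehensive, or correct but inadequate
    if grade in (3, 4):
        return "group 2"  # partly or completely incorrect
    raise ValueError(f"grade must be 1-4, got {grade}")


def is_reproducible(first: int, second: int) -> bool:
    """Two responses are reproducible if their grades fall in the same group."""
    return grade_group(first) == grade_group(second)


def cohen_kappa(rater_a: Sequence[int], rater_b: Sequence[int]) -> float:
    """Unweighted Cohen's kappa for two raters grading the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("raters must grade the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given identical grades.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal grade frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[g] * counts_b[g] for g in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical grade throughout
    return (observed - expected) / (1.0 - expected)
```

Under this rule, grades of 1 and 2 for the two responses count as reproducible, whereas grades of 2 and 3 do not, because they fall on opposite sides of the correct/incorrect boundary.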

Results

In total, 151 questions related to bariatric surgery were inputted into ChatGPT (Supplementary Table 1). The model provided “comprehensive” responses to 131/151 (86.8%) of questions. When examined by category, the model provided “comprehensive” responses to 93.8% of questions related to “efficacy, eligibility and procedure options”; 93.3% to “preoperative preparation”; 85.3% to “recovery, risks, and complications”; and 88.2% to “lifestyle changes” (Table 1). The percentage of comprehensive responses was lower for the “other” category, with only two-thirds of responses graded as “comprehensive,” while 25% of responses were graded as “some correct and some incorrect.” In total, 4 questions across all categories were given a rating of “completely incorrect.” For example, when prompted regarding the expected scars from surgery, the model described the open approach rather than the commonly used laparoscopic approach. When prompted regarding the use of straws after surgery, the model explained “Using a straw can help to bypass the smaller stomach and deliver fluids directly to the intestines, where they can be absorbed more easily.”

The model provided reproducible answers to 137 (90.7%) of questions. Reproducibility of responses in the “lifestyle changes” and “other” categories was 100% but lower in the “recovery, risks and complications” (86.7%); “eligibility, efficacy and procedure options” (90.6%); and “preoperative preparation” (93.3%) categories (Table 2).
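The headline proportions can be checked with simple arithmetic. The sketch below uses only the counts reported in the text (131 comprehensive and 137 reproducible out of 151 questions); the helper name `percent` is hypothetical.

```python
def percent(count: int, total: int) -> float:
    """Return count/total as a percentage rounded to one decimal place."""
    if total <= 0 or not 0 <= count <= total:
        raise ValueError("need 0 <= count <= total and total > 0")
    return round(100 * count / total, 1)


TOTAL_QUESTIONS = 151

comprehensive = percent(131, TOTAL_QUESTIONS)  # 86.8
reproducible = percent(137, TOTAL_QUESTIONS)   # 90.7
not_reproducible = TOTAL_QUESTIONS - 137       # 14 questions

print(comprehensive, reproducible, not_reproducible)
```

Both values agree with the percentages reported above, and the remaining 14 questions are those whose two responses fell into different grading groups.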

Discussion

We examined the accuracy and reproducibility of the new AI model ChatGPT in responding to commonly asked patient questions related to bariatric surgery. The responses of ChatGPT to patient questions obtained from nationally regarded professional societies and health institutions as well as Facebook patient support groups were independently assessed for accuracy and reproducibility by a panel of board-certified and fellowship-trained bariatric surgeons. Overall, ChatGPT’s responses were accurate with most responses graded as “comprehensive” on our grading scale along with high reproducibility. The model performed well in the majority of topics such as “efficacy, eligibility and procedure options”; “preoperative preparation”; “recovery, risks, and complications”; and “lifestyle changes.” Our study shows the potential significant impact of this technology in improving patients’ experience and outcomes in the future. Furthermore, we highlight important limitations and considerations.

Previous literature has shown patients seek information related to bariatric surgery outside of their healthcare providers, with social media serving as a popular resource [11, 14, 15]. Multiple studies have shown limitations in the quality and reliability of online sources of information regarding bariatric surgery, including social media [16–18]. Furthermore, research has demonstrated that there is a lack of easy-to-understand online resources about bariatric surgery with over 93% of websites surveyed receiving a rating of unacceptable readability [19]. In addition, navigating current search engines can be difficult when searching for specific answers. Search results may be overwhelming or not relevant to the question asked, and the reliability and accuracy of their sources may be difficult to ascertain. Given these limitations, we hypothesize that patients will utilize ChatGPT as a source of information for their bariatric surgery-related questions. Our reviewers found ChatGPT easy to access and understand. Universal access AI models may also serve to bridge access and knowledge disparities in communities with limited resources. Our study findings are encouraging, and we anticipate significant future interest in this technology as it relates not only to bariatric surgery but also other fields of medicine.

ChatGPT has the potential to mitigate disparities in health literacy among bariatric surgery candidates and patients. Levels of health literacy have been shown to impact bariatric surgery outcomes with lower literacy being associated with lower short-term as well as long-term postoperative weight loss [20–22]. Other studies have shown the association of health literacy with adherence to medical appointments 1 year after bariatric surgery [23]. One study demonstrated that low health literacy among bariatric surgery candidates had a negative association with eventually undergoing bariatric surgery [24]. While there are no studies that have explored the impact of health literacy on bariatric surgery complications, health literacy has been shown to be negatively associated with postoperative complications after colorectal surgery [25, 26]. Our study demonstrates that the currently available version of ChatGPT provided comprehensive responses to questions regarding the safety, efficacy, and complications of bariatric surgery for most questions reviewed. Future iterations of this technology will likely offer improvements in accuracy and readability. While there are no current studies examining the impact of the use of ChatGPT on health literacy, we hypothesize that utilization of this model will provide patients with an easy-to-understand, accurate, and reproducible source of information.

ChatGPT may also serve as a tool for improving the perception of the safety and efficacy of bariatric surgery both among prospective candidates and the general public, thereby increasing its adoption as a treatment modality for severe obesity. Despite its known efficacy, less than 1% of eligible patients undergo bariatric surgery. Previous studies have shown both a lack of familiarity with bariatric surgery and discordance between patient perceptions and its demonstrated clinical safety and efficacy profile [10]. These trends were also found among the general public. Furthermore, portrayal of bariatric surgery in the media may also contribute to its underutilization, with one study in the UK finding 33% of articles published in newspapers were negatively slanted against bariatric surgery [27]. Having easy access to information regarding bariatric surgery may help raise awareness among the general public and eligible candidates, thereby decreasing the stigma surrounding surgery and potentially increasing its utilization.

Strengths and Limitations

To our knowledge, this is the first study to examine the utility of the model ChatGPT in the field of bariatric surgery. Patient questions were obtained from well-regarded sources and societies as well as from Facebook support groups to provide a comprehensive and realistic sample of patient questions. Responses were independently graded by board-certified bariatric surgeons with discrepancies resolved by a blinded third senior reviewer to comprehensively evaluate the accuracy and reproducibility of ChatGPT’s responses.

There are several limitations of ChatGPT that should be highlighted and considered when evaluating it as a potential source of information for patients. First, while the model performed well with accurate and often reproducible responses, several responses contained incorrect information, which may be dangerous to patients without the direction of a healthcare provider. This limitation supports the potential use of ChatGPT as an adjunct source of information but not a replacement of the standard of care provided by a team of licensed healthcare professionals. We hope this limitation is minimized with the expected continuous improvement of the model over time, which should in turn yield more accurate and reproducible responses. Second, recommendations and standards of practice may vary by region, medical society, or country of residence, making it challenging to assess the accuracy of responses for each user around the country or world regarding certain topics. Moreover, the source of information that ChatGPT uses to produce responses is unknown, which may impact the reliability of its responses for certain topics. Third, ChatGPT may write linguistically convincing responses that are incorrect or nonsensical. The resulting false sense of confidence in responses may lead to the adoption of incorrect and potentially dangerous recommendations by unsuspecting users. OpenAI reports that the current version of the model is expected to produce false positives and false negatives but hopes to minimize these limitations with user feedback and ongoing improvement in the model.

Future Directions

ChatGPT and future similar technologies are expected to revolutionize all fields of academia and likely the field of medicine, although their utility and implementation process remain unclear. If validated by future studies, ChatGPT can be a powerful tool to empower patients. Its performance in answering patient questions regarding cirrhosis and hepatocellular carcinoma has been studied with promising results, similar to the current study [5]. We encourage future studies to expand on our findings in order to study the utility of this revolutionary technology in improving patient experience and outcomes in bariatric surgery.

Conclusion

The large language model ChatGPT provided accurate and reproducible responses to common questions related to bariatric surgery. ChatGPT may serve as a helpful adjunct source of information regarding bariatric surgery for patients and surgical candidates in addition to standard of care provided by licensed healthcare professionals. We encourage future studies to examine how to leverage this disruptive technology to improve patient outcomes and quality of life.

References

O'Connor S. ChatGPT. Open artificial intelligence platforms in nursing education: tools for academic progress or abuse? Nurse Educ Pract. 2023;66:103537. https://doi.org/10.1016/j.nepr.2022.103537.

Graham F. Daily briefing: will ChatGPT kill the essay assignment? Nature. 2022; https://doi.org/10.1038/d41586-022-04437-2.

Gilson A, Safranek CW, Huang T, Socrates V, Chi L, Taylor RA, Chartash D. How does ChatGPT perform on the United States Medical Licensing Examination? The implications of large language models for medical education and knowledge assessment. JMIR Med Educ. 2023;8(9):e45312. https://doi.org/10.2196/45312.

Sarraju A, Bruemmer D, Van Iterson E, Cho L, Rodriguez F, Laffin L. Appropriateness of cardiovascular disease prevention recommendations obtained from a popular online chat-based artificial intelligence model. JAMA. 2023;329(10):842. https://doi.org/10.1001/jama.2023.1044.

Yeo YH, Samaan JS, Ng WH, Ting PS, Trivedi H, Vipani A, Ayoub W, Yang JD, Liran O, Spiegel B, Kuo A. Assessing the performance of ChatGPT in answering questions regarding cirrhosis and hepatocellular carcinoma. Clin Mol Hepatol. 2023; https://doi.org/10.3350/cmh.2023.0089.

Obesity is a common, serious, and costly disease. Centers for Disease Control and Prevention. Accessed January 21, 2023. https://www.cdc.gov/obesity/data/adult.html

Arterburn DE, Telem DA, Kushner RF, Courcoulas AP. Benefits and risks of bariatric surgery in adults: a review. JAMA. 2020;324(9):879–87. https://doi.org/10.1001/jama.2020.12567.

New study finds most bariatric surgeries performed in northeast, and fewest in south where obesity rates are highest, and economies are weakest. American Society for Metabolic and Bariatric Surgery. Published online November 15, 2018. Accessed January 23, 2023. https://asmbs.org/articles/new-study-finds-most-bariatric-surgeries-performed-in-northeast-and-fewest-in-south-where-obesity-rates-are-highest-and-economies-are-weakest

Premkumar A, Samaan JS, Samakar K. Factors associated with bariatric surgery referral patterns: a systematic review. J Surg Res. 2022;276:54–75. https://doi.org/10.1016/j.jss.2022.01.023.

Rajeev ND, Samaan JS, Premkumar A, Srinivasan N, Yu E, Samakar K. Patient and the public’s perceptions of bariatric surgery: a systematic review. J Surg Res. 2023;283:385–406. https://doi.org/10.1016/j.jss.2022.10.061.

Scarano Pereira JP, Martinino A, Manicone F, Scarano Pereira ML, Iglesias Puzas Á, Pouwels S, Martínez JM. Bariatric surgery on social media: a cross-sectional study. Obes Res Clin Pract. 2022;16(2):158–62. https://doi.org/10.1016/j.orcp.2022.02.005.

OpenAI. ChatGPT: optimizing language models for dialogue. 2023. https://openai.com/blog/chatgpt/. Accessed January 1, 2023.

Ouyang L, Wu J, Jiang X, et al. Training language models to follow instructions with human feedback. Adv Neur Inform Proc Syst. 2022;35:27730. https://doi.org/10.48550/ARXIV.2203.02155.

Athanasiadis DI, Roper A, Hilgendorf W, Voss A, Zike T, Embry M, Banerjee A, Selzer D, Stefanidis D. Facebook groups provide effective social support to patients after bariatric surgery. Surg Endosc. 2021;35(8):4595–601. https://doi.org/10.1007/s00464-020-07884-y.

Koball AM, Jester DJ, Domoff SE, Kallies KJ, Grothe KB, Kothari SN. Examination of bariatric surgery Facebook support groups: a content analysis. Surg Obes Relat Dis. 2017;13(8):1369–75. https://doi.org/10.1016/j.soard.2017.04.025.

Batar N, Kermen S, Sevdin S, Yıldız N, Güçlü D. Assessment of the quality and reliability of information on nutrition after bariatric surgery on YouTube. Obes Surg. 2020;30:4905–10. https://doi.org/10.1007/s11695-020-05015-z.

Corcelles R, Daigle CR, Talamas HR, Brethauer SA, Schauer PR. Assessment of the quality of Internet information on sleeve gastrectomy. Surg Obes Relat Dis. 2015;11(3):539–44. https://doi.org/10.1016/j.soard.2014.08.014.

Koball AM, Jester DJ, Pruitt MA, Cripe RV, Henschied JJ, Domoff S. Content and accuracy of nutrition-related posts in bariatric surgery Facebook support groups. Surg Obes Relat Dis. 2018;14:1897–902. https://doi.org/10.1016/j.soard.2018.08.017.

Meleo-Erwin Z, Basch C, Fera J, Ethan D, Garcia P. Readability of online patient-based information on bariatric surgery. Health Promot Perspect. 2019;9(2):156–60. https://doi.org/10.15171/hpp.2019.22.

Mahoney ST, Strassle PD, Farrell TM, Duke MC. Does lower level of education and health literacy affect successful outcomes in bariatric surgery? J Laparoendosc Adv Surg Tech A. 2019;29(8):1011–5. https://doi.org/10.1089/lap.2018.0806.

Erdogdu UE, Cayci HM, Tardu A, Demirci H, Kisakol G, Guclu M. Health literacy and weight loss after bariatric surgery. Obes Surg. 2019;29(12):3948–53. https://doi.org/10.1007/s11695-019-04060-7.

Miller-Matero LR, Hecht L, Patel S, Martens KM, Hamann A, Carlin AM. The influence of health literacy and health numeracy on weight loss outcomes following bariatric surgery. Surg Obes Relat Dis. 2021;17(2):384–9. https://doi.org/10.1016/j.soard.2020.09.021.

Hecht LM, Martens KM, Pester BD, Hamann A, Carlin AM, Miller-Matero LR. Adherence to medical appointments among patients undergoing bariatric surgery: do health literacy, health numeracy, and cognitive functioning play a role? Obes Surg. 2022;32(4):1391–3. https://doi.org/10.1007/s11695-022-05905-4.

Hecht L, Cain S, Clark-Sienkiewicz SM, Martens K, Hamann A, Carlin AM, Miller-Matero LR. Health literacy, health numeracy, and cognitive functioning among bariatric surgery candidates. Obes Surg. 2019;29(12):4138–41. https://doi.org/10.1007/s11695-019-04149-z.

Theiss LM, Wood T, McLeod MC, Shao C, Santos Marques ID, Bajpai S, Lopez E, Duong AM, Hollis R, Morris MS, Chu DI. The association of health literacy and postoperative complications after colorectal surgery: a cohort study. Am J Surg. 2022;223(6):1047–52. https://doi.org/10.1016/j.amjsurg.2021.10.024.

Baker S, Malone E, Graham L, Dasinger E, Wahl T, Titan A, Richman J, Copeland L, Burns E, Whittle J, Hawn M, Morris M. Patient-reported health literacy scores are associated with readmissions following surgery. Am J Surg. 2020;220(5):1138–44. https://doi.org/10.1016/j.amjsurg.2020.06.071.

Williamson JM, Rink JA, Hewin DH. The portrayal of bariatric surgery in the UK print media. Obes Surg. 2012;22(11):1690–4. https://doi.org/10.1007/s11695-012-0701-5.

Funding

Open access funding provided by SCELC, Statewide California Electronic Library Consortium


Ethics declarations

Ethical Approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Informed Consent

Informed consent does not apply.

Conflict of Interest

The authors declare no competing interests.


Key Points

• ChatGPT provided accurate responses to bariatric surgery-related questions.

• ChatGPT provided reproducible responses to bariatric surgery-related questions.

• ChatGPT may serve as an adjunct source of information for patients and candidates.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Samaan, J.S., Yeo, Y.H., Rajeev, N. et al. Assessing the Accuracy of Responses by the Language Model ChatGPT to Questions Regarding Bariatric Surgery. OBES SURG 33, 1790–1796 (2023). https://doi.org/10.1007/s11695-023-06603-5


DOI: https://doi.org/10.1007/s11695-023-06603-5